AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST- Posted in

- GPUs

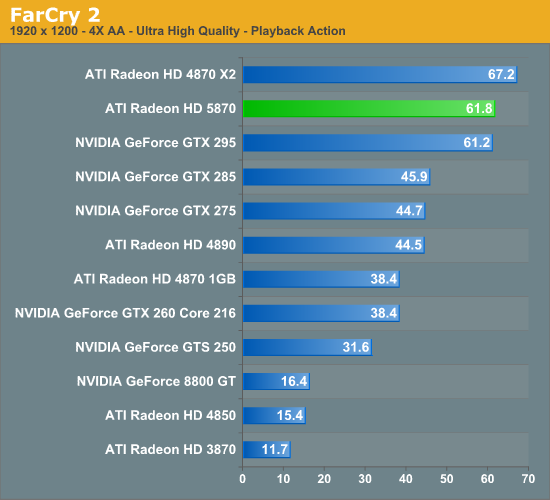

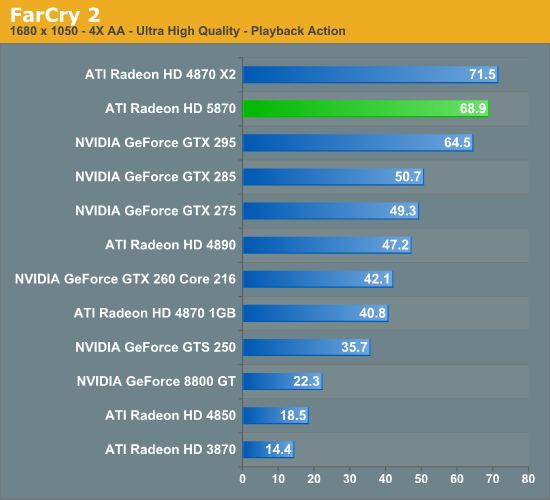

Far Cry 2

Far Cry 2 is another foliage-heavy game. Thankfully it’s not nearly as punishing as Crysis, and it’s possible to achieve a good framerate even with all the settings at their highest.

Unlike Crysis, we have a few interesting things going on here for the 5870. First, it manages to just beat out the GTX 295. The second is that it manages to lose to its other multi-GPU competitor, the 4870X2. Among its other single-GPU competitors however it’s no contest, with the 5870 beating the GTX 285 by over 40%.

Meanwhile the 5870 CF once again comes out on top of it all. This is going to be a recurring pattern, folks.

327 Comments

View All Comments

mapesdhs - Saturday, September 26, 2009 - link

MODel3 writes:

> 1.Geometry/vertex performance issues ...

> 2.Geometry/vertex shading performance issues ...

Would perhaps some of the subtests in 3DMark06 be able to test this?

(not sure about Vantage, never used that yet) Though given what Jarred

said about the bandwidth and other differences, I suppose it's possible

to observe large differences in synthetic tests which are not the real

cause of a performance disparity.

The trouble with heavy GE tests is, they often end up loading the fill

rates anyway. I've run into this problem with the SGI tests I've done

over the years:

http://www.sgidepot.co.uk/sgi.html">http://www.sgidepot.co.uk/sgi.html

The larger landscape models used in the Inventor tests are a good

example. The points models worked better in this regard for testing

GE speed (stars3/star4), but I don't know to what extent modern PC

gfx is designed to handle points modelling - probably works better

on pro cards. Actually, Inventor wasn't a good choice anyway as it's

badly CPU-bound and API-heavy (I should have used Performer, gives

results 5 to 10X faster).

Anyway, point is, synthetic tests might allow one to infer that one

aspect of the gfx pipeline is a bottleneck when infact it isn't.

Ages ago I emailed NVIDIA (Ujesh, who I used to know many moons ago,

but alas he didn't reply) asking when, if ever, they would add

performance counters and other feedback monitors to their gfx

products so that applications could tell what was going on in the

gfx pipeline. SGI did this ages years ago, which allowed systems like

IR to support impressive functions such as Dynamic Video Resizing by

being able to monitor frame by frame what was going on within the gfx

engine at each stage. Try loading any 3D model into perfly, press F1

and click on 'Gfx' in the panel (Linux systems can run Performer), eg.:

http://www.sgidepot.co.uk/misc/perfly.gif">http://www.sgidepot.co.uk/misc/perfly.gif

Given how complex modern PC gfx has become, it's always been a

mystery to me why such functions haven't been included long ago.

Indeed, for all that Crysis looks amazing, I was never that keen on

it being used as a benchmark since there was no way of knowing

whether the performance hammering it created was due to a genuinely

complex environment or just an inefficient gfx engine. There's still

no way to be sure.

If we knew what was happening inside the gfx system, we could easily

work out why performance differences for different apps/games crop

up the way they do. And I would have thought that feedback monitors

within the gfx pipe would be even more useful to those using

professional applications, just as it was for coders working on SGI

hardware in years past.

Come to think of it, how do NVIDIA/ATI even design these things

without being able to monitor what's going on? Jarred, have you ever

asked either company about this?

Ian.

JarredWalton - Saturday, September 26, 2009 - link

I haven't personally, since I'm not really the GPU reviewer here. I'd assume most of their design comes from modeling what's happening, and with knowledge of their architecture they probably have utilities that help them debug stuff and figure out where stalls and bottlenecks are occurring. Or maybe they don't? I figure we don't really have this sort of detail for CPUs either, because we have tools that know the pipeline and architecture and they can model how the software performs without any hardware feedback.MODEL3 - Thursday, October 1, 2009 - link

I checked the web for synthetic geometry tests.Sadly i only found 3dMark Vantage tests.

You can't tell much from them, but they are indicative.

Check:

http://www.pcper.com/article.php?aid=783&type=...">http://www.pcper.com/article.php?aid=783&type=...

GPU Cloth: 5870 is only 1,2X faster than 4890. (vertex/geometry shading test)

GPU Particles: 5870 is only 1,2X faster than 4890. (vertex/geometry shading test)

Perlin Noise: 5870 is 2,5X faster than 4890. (Math-heavy Pixel Shader test)

Parallax Occlusion Mapping: 5870 is 2,1X faster than 4890. (Complex Pixel Shader test)

All the above 4 tests are not bandwidth limited at all.

Just for example, if you check:

http://www.pcper.com/article.php?aid=674&type=...">http://www.pcper.com/article.php?aid=674&type=...

You will see that a 750MHz 4870 512MB is 20-23% faster than a 625MHz 4850 in all the above 4 tests, so the extra bandwidth (115,2GB/s vs 64GB/s) it doesn't help at all.

But 4850 is extremely bandwidth limited in the color fillrate test (4870 is 60% faster than 4850)

Also it shouldn't be a problem of the dual rasterizer/dual SIMDs engine efficiency since synthetic Pixel Shader tests is fine (more than 2X) while the synthetic geometry shading tests is only 1,2X.

My guess is ATI didn't improve the classic geometry set-up engine and the GS because they want to promote vertex/geometry techniques based on the DX11 tesselator from now on.

Zool - Friday, September 25, 2009 - link

In Dx11 the fixed tesselation units will do much finer geometry details for much less memmory space and on chip so i think there isnt a single problem with that. Also the compute shader need minimal memory bandwith and can utilize plenty of idle shaders. The card is designed with dx11 in mind and it isnt using the wholle pipeline after all. I wouldnt make too early conclusions.(I think the perfomance will be much better after few drivers)MODEL3 - Saturday, September 26, 2009 - link

The DX11 tesselator in order to be utilized must the game engine to take advantage of it.I am not talking about the tesselator.

I am talking about the classic Geometry unit (DX9/DX10 engines) and the Geometry Shader [GS] (DX10 engines only).

I'll check to see if i can find a tech site that has synthetic bench for Geometry related perf. and i will post again tomorrow, if i can find anything.

JarredWalton - Friday, September 25, 2009 - link

It's worth noting that when you factor in clock speeds, compared to the 5870 the 4870X2 offers 88% of the core performance and 50% more bandwidth. Some algorithms/games require more bandwidth and others need more core performance, but it's usually a combination of the two. The X2 also has CrossFire inefficiencies to deal with.More interesting perhaps is that the GTX 295 offers (by my estimates, which admittedly are off in some areas) roughly 10% more GPU shader performance, about 18.5% more fill rate, and 46% more bandwidth than the HD 5870. The fact that the HD 4870 is still competitive is a good sign that ATI is getting good use of their 5 SPs per Stream Processor design, and that they are not memory bandwidth limited -- at least not entirely.

SiliconDoc - Wednesday, September 30, 2009 - link

The 4870x2 has somewhere around "double the data paths" in and out of it's 2 cpu's. So what you have with the 5870 putting as some have characterized " 2x 770 cores melded into one " is DOUBLE THE BOTTLENECK in and out of the core.They tried to compensate with ddr5 1200/4800 - but the fact remains, they only get so much with that "NOT ENOUGH DATA PATHS/PINS in and out of that gpu core."

cactusdog - Friday, September 25, 2009 - link

Omg these cards look great. Lol Silicon Doc is so gutted and furious he is making hmself look like a dam fool again only this time he should be on suicide watch...Nvidia cards are now obsolete..LOL.mapesdhs - Friday, September 25, 2009 - link

Hehe, indeed. Have you ever seen a scifi series called, "They Came

From Somewhere Else?" S.D.'s getting so worked up, reminds me of

the scene where the guy's head explodes. :D

Hmm, that's an alternative approach I suppose in place of post

moderation. Just get someone so worked up about something they'll

have an aneurism and pop their clogs... in which case, I'll hand

it back to Jarred. *grin*

Ian.

SiliconDoc - Friday, September 25, 2009 - link

That is quite all right, you fellas make sure to read it all, I am more than happy that the truth is sinking into your gourds, you won't be able to shake it.I am very happy about it.