AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

DirectCompute, OpenCL, and the Future of CAL

As a journalist, I find GPGPU one of the more frustrating things to cover. The concept is great, but the execution makes it difficult to cover accurately, a problem exacerbated by the fact that until now AMD and NVIDIA each had separate APIs. OpenCL and DirectCompute will unify things, but software will be slow to arrive.

As it stands, neither AMD nor NVIDIA has a complete OpenCL implementation that's shipping to end-users for Windows or Linux. NVIDIA has OpenCL working on the 8-series and later on Mac OS X Snow Leopard, and AMD has it working under the same OS for the 4800 series, but for obvious reasons we can’t test a 5870 in a Mac. As such it won’t be until later this year that we see either side get OpenCL up and running under Windows. Both NVIDIA and AMD have development versions that they're letting developers play with, and both have submitted implementations to Khronos, so hopefully we’ll have something soon.

It’s also worth noting that OpenCL is based around DirectX 10 hardware, so even after someone finally ships an implementation we’re likely to see a new version in short order. AMD is already talking about OpenCL 1.1, which would add support for the hardware features that they have from DirectX 11, such as append/consume buffers and atomic operations.

DirectCompute is in comparatively better shape. NVIDIA already supports it on their DX10 hardware, and the beta drivers we’re using for the 5870 support it on the 5000 series. The missing link at this point is AMD’s DX10 hardware; even the beta drivers we’re using don’t support it on the 2000, 3000, or 4000 series. From what we hear the final Catalyst 9.10 drivers will deliver this feature.

Going forward, one specific issue for DirectCompute development will be that there are three levels of DirectCompute, derived from DX10 (4.0), DX10.1 (4.1), and DX11 (5.0) hardware. The higher the version the more advanced the features, with DirectCompute 5.0 in particular being a big jump as it’s the first hardware generation designed with DirectCompute in mind. Among other notable differences, it’s the first version to offer double precision floating point support and atomic operations.

AMD is convinced that developers should and will target DirectCompute 5.0 due to its feature set, but we’re not sold on the idea. To say that there’s a “lot” of DX10 hardware out there is a gross understatement, and all of that hardware is capable of supporting at a minimum DirectCompute 4.0. Certainly DirectCompute 5.0 is the better API to use, but the first developers testing the waters may end up starting with DirectCompute 4.0. Releasing something written in DirectCompute 5.0 won’t do developers much good at the moment due to the low quantity of hardware out there that can support it.
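To make the targeting trade-off concrete, here is a minimal sketch in Python rather than against the Direct3D API. The `PROFILES` table and the `best_common_profile` helper are hypothetical names invented for illustration, and the feature flags record only what this article states about each level (double precision and atomic operations arriving with 5.0).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeProfile:
    version: str            # DirectCompute level, e.g. "4.0"
    hardware: str           # Direct3D hardware generation it derives from
    double_precision: bool  # per the article: first offered at 5.0
    atomics: bool           # per the article: first offered at 5.0


# Hypothetical lookup table; not part of any real API.
PROFILES = {
    "DX10": ComputeProfile("4.0", "DX10", False, False),
    "DX10.1": ComputeProfile("4.1", "DX10.1", False, False),
    "DX11": ComputeProfile("5.0", "DX11", True, True),
}


def best_common_profile(hardware_generations):
    """Return the highest DirectCompute level that every listed GPU can run."""
    if not hardware_generations:
        raise ValueError("need at least one hardware generation")
    profiles = [PROFILES[gen] for gen in hardware_generations]
    # The lowest level in the mix is the most that all of the GPUs share.
    return min(profiles, key=lambda p: float(p.version))
```

Calling `best_common_profile(["DX11", "DX10"])` returns the 4.0 profile: as soon as a developer wants to reach any DX10 card, the 5.0-only features are off the table.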

With that in mind, there’s not much of a software situation to speak about when it comes to DirectCompute right now. Cyberlink demoed a version of PowerDirector using DirectCompute for rendering effects, but it’s the same story as most DX11 games: later this year. For AMD there isn’t as much of an incentive to push non-game software as fast or as hard as DX11 games, so we’re expecting any non-game software utilizing DirectCompute to be slow to materialize.

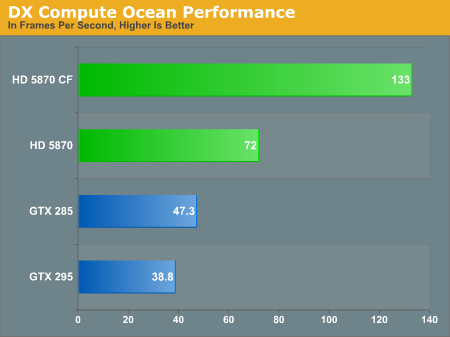

Given that DirectCompute is the only common GPGPU API that is currently working on both vendors’ cards, we wanted to try to use it as the basis of a proper GPGPU comparison. We did get something that would accomplish the task; unfortunately, it was an NVIDIA tech demo. We have decided to run it anyhow as it’s quite literally the only thing we have right now that uses DirectCompute, but please take an appropriately sized quantity of salt – it’s not really a fair test.

NVIDIA’s ocean demo is a fairly simple proof of concept program that uses DirectCompute to run fast Fourier transforms (FFTs) directly on the GPU for better performance. The FFTs in turn are used to generate the wave data, forming the wave action seen on screen as part of the ocean. This is a DirectCompute 4.0 program, as it’s intended to run on NVIDIA’s DX10 hardware.
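For readers unfamiliar with the idea, the general technique of turning a frequency spectrum into wave heights with an inverse FFT can be sketched on the CPU in a few lines of Python. This is not NVIDIA's code: it is a one-dimensional, single-frame illustration, and the `1/k` amplitude falloff, the random phases, and the function names are all assumptions chosen for brevity.

```python
import cmath
import math
import random


def fft(values, inverse=False):
    """Recursive radix-2 Cooley-Tukey FFT (unnormalized).

    len(values) must be a power of two.
    """
    n = len(values)
    if n == 1:
        return list(values)
    if n % 2:
        raise ValueError("length must be a power of two")
    even = fft(values[0::2], inverse)
    odd = fft(values[1::2], inverse)
    sign = 1 if inverse else -1
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(sign * 2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def wave_heights(n=64, seed=0):
    """Build a random wave spectrum and inverse-FFT it into real heights."""
    rng = random.Random(seed)
    spectrum = [0j] * n  # bin 0 stays zero, so the surface has zero mean
    for k in range(1, n // 2):
        amplitude = 1.0 / k  # assumed falloff: longer waves are taller
        phase = rng.uniform(0.0, 2.0 * math.pi)
        spectrum[k] = amplitude * cmath.exp(1j * phase)
        # Mirror with the complex conjugate so the output is purely real.
        spectrum[n - k] = spectrum[k].conjugate()
    heights = fft(spectrum, inverse=True)
    return [h.real / n for h in heights]
```

`wave_heights(64)` returns 64 height samples for one static frame. A GPU version would run many such transforms in parallel over a 2D grid and update the spectrum every frame, which is where the compute workload comes from.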

The 5870 has no problem running the program, and in spite of whatever home field advantage may exist for NVIDIA, it easily outperforms the GTX 285. Things get a little crazier once we start using SLI/CrossFire; the 5870 picks up speed, but the GTX 295 ends up being slower than the GTX 285. As it’s only a tech demo this shouldn’t be dwelt on too much beyond the fact that it’s proof that DirectCompute is indeed working on the 5800 series.

Wrapping things up, one of the last GPGPU projects AMD presented at their press event was a GPU implementation of Bullet Physics, an open source physics simulation library. Although they’ll never admit it, AMD is probably getting tired of being beaten over the head by NVIDIA and PhysX; Bullet Physics is AMD’s proof that they can do physics too. However, we don’t expect it to go anywhere given its very low penetration in existing games and the amount of trouble NVIDIA has had in getting developers to use anything besides Havok. Our expectations for GPGPU physics remain the same: the unification will come from a middleware vendor selling a commercial physics package. If it’s not Havok, then it will be someone else.

Finally, while AMD is hitting the ground running for OpenCL and DirectCompute, their older APIs are being left behind as AMD has chosen to focus all future efforts on OpenCL and DirectCompute. Brook+, AMD’s high level language, has been put out to pasture as a SourceForge project. Compute Abstraction Layer (CAL) lives on since it’s what AMD’s OpenCL support is built upon; however, it’s not going to see any further public development, with the interface frozen at the current 1.4 standard. AMD is discouraging any CAL development in favor of OpenCL, although it’s likely the High Performance Computing (HPC) crowd will continue to use it in conjunction with AMD’s FireStream cards to squeeze every bit of performance out of AMD’s hardware.

327 Comments

erple2 - Wednesday, September 23, 2009 - link

I think that you're missing the point. AMD appeared to want the part to be small enough to maximize the number of gpu's generated per wafer. They had their own internal idea of how to get a good yield from the 40nm wafers. It appears to be similar to their line of thinking with the 4870 launch (see http://www.anandtech.com/video/showdoc.aspx?i=3469 for more information) - they didn't feel like they needed to get the biggest, fastest, most power hungry part to compete well. It turns out that with the 5870, they have that, at least until we see what Nvidia comes out with the G300.

It turns out that performance really isn't all people care about - otherwise nobody would run anything other than dual GTX285's in SLI. People care about performance __at a particular price point__. ATI is trying to grab that particular sweet spot - be able to take the performance crown for a particular price range. They would probably be able to make a gargantuan low-yield, high power monster that would decimate everything currently available (crossfire/SLI or single), but that chip would be massively expensive to produce, and surprisingly, be a poor Return on Investment.

So the comment that Cypress is "too big" I think really is apropos. I think that AMD would have been able to launch the 5870 at the $299 price point of the 4870 only if the die had been significantly smaller (around the same size as the 4870). THAT would have been an amazing bang-for-buck card, I believe.

Doormat - Wednesday, September 23, 2009 - link

[Big Chart] and such

faxon - Wednesday, September 23, 2009 - link

page 15 is missing its charts guys! look at it, how did that happen lmao

Gary Key - Wednesday, September 23, 2009 - link

Ryan is updating the page now. He should be finished up shortly. We had a lot of images that needed to be displayed in a different manner at the last minute.

Totally - Wednesday, September 23, 2009 - link

the images are missing

dguy6789 - Wednesday, September 23, 2009 - link

You very clearly fail to mention that the cheapest GTX295 one can buy is nearly $100 more expensive than the HD 5870.

Ryan Smith - Wednesday, September 23, 2009 - link

In my own defense, when I wrote that paragraph Newegg's cheapest brand-new GTX 295 was only $409. They've been playing price games...

SiliconDoc - Friday, September 25, 2009 - link

That "price game" is because the 5870 is rather DISAPPOINTING when compared to the GTX295. I guess that means ATI "blew the competition" this time, huh, and NVidia is going to get more money for their better GTX295.

LOL

That's a *scowl* "new egg price game" for red fans.

Thanks ATI for making NVidia more money !

strikeback03 - Wednesday, September 23, 2009 - link

lol, did they drop the price while they had 5870s in stock, then raise it again once they were gone?

SiliconDoc - Wednesday, September 23, 2009 - link

Oh, so sorry, 1:46pm, NO 5870's available at the egg...I guess they sold 1 powercolor and one asus...

http://www.newegg.com/Product/ProductList.aspx?Sub...

---

Come on anandtech workers, you can say it "PAPER LAUNCH !"