AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs

The First DirectX 11 Games

With any launch of a new DirectX generation of hardware, software availability becomes a concern. As the hardware needs to come before the software so that developers can tailor their games’ performance, it’s just not possible to immediately launch with games ready to go. For the launch of DirectX 11 and the 5800 series, AMD gave us a list of what games to expect and when.

First out of the gate is Battleforge, EA’s card-based online-only RTS. We had initially been told that it would miss the 5870 launch, but in fact EA and AMD managed to get it in under the wire and deliver it a day early. This gives AMD the legitimate claim of having a DX11 title out there that only their new hardware can fully exploit, and from a press perspective it’s nice to have something out there we can test besides tech demos. Unfortunately we wrapped up our testing 2 days early in order to attend IDF, which means we have not yet had a chance to benchmark this title’s DX11 mode or look at it in-depth.

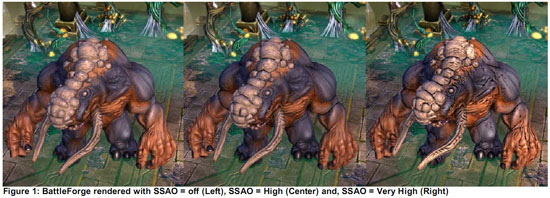

We did have a chance to see the title in action quickly at AMD’s press event 2 weeks ago, where AMD was using it to show off High Definition Ambient Occlusion. As far as we can tell, HDAO is the only DX11 wonder-feature that it currently implements, which makes sense given that it should be the easiest to patch in.

The next big title in AMD’s stack of DX11 games is STALKER: Call of Pripyat. This game went gold in Russia earlier this week, with the English version some time behind it. Unfortunately we don’t know what DX11 features it will be using, but as STALKER games have historically been hard on computers, it should prove to be an interesting test case for DX11 performance.

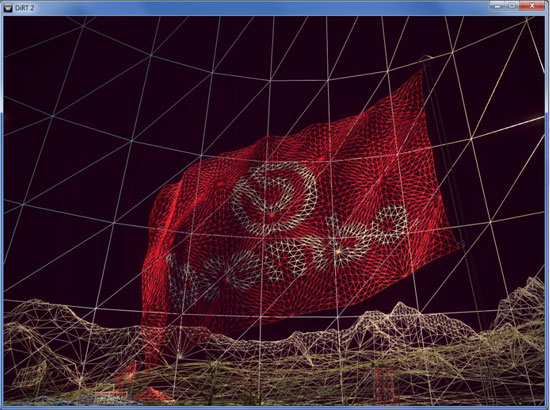

DIRT 2 is a title that got a great deal of promotion at AMD’s press event. AMD has been using it to show off their 6-way Eyefinity configuration, and we had a chance to play it quickly in their testing labs when looking at Eyefinity. This should be a fuller-featured DX11 game, utilizing tessellation, better shadow filtering, and other DX11 features. Certainly it’s the closest thing AMD’s going to have for a showcase title this year for the DX11 features of their hardware, and the console version has been scoring well in reviews. The PC version is due December 11th.

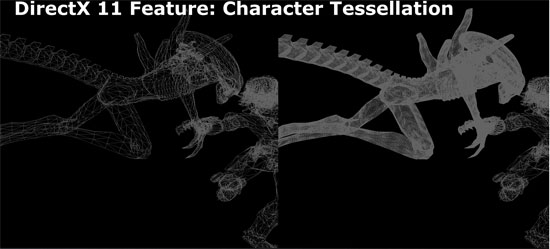

Finally, AMD had Rebellion Games in house to show off an early version of Aliens vs. Predator. This was certainly the most impressive title shown, with Rebellion showing off tessellation and HDAO in real time. Unfortunately screenshots don’t really do the game justice here; the difference from using DX11 is far more noticeable in motion. At any rate, this game is the farthest out – it won’t ship until Q1 of next year at the earliest.

327 Comments


erple2 - Tuesday, September 29, 2009 - link

What the heck are you talking about? Are you saying that electricity consumed by a device divided by the "volume" of the device is the only way to measure the heat output of the device? Every single Engineering class I took tells me that's wrong, and I'm right. I think you need to take some basic courses in Electrical Engineering and/or Thermodynamics.

(simplified)

power consumed = work + waste

You're looking for the waste heat generated by the device. If something can completely convert every watt of electricity that passes through it to do some type of work (light a light bulb, turn a motor, make some calculation on a GPU etc), then it's not going to heat up. As a result, you HAVE to take into consideration how inefficient the particular device is before you can make any claim about how much the device heats up.

I'll bet that if you put a Liquid Nitrogen cooler on every ATI card, and used the standard air coolers on every NVidia card, that the ATI cards are going to run crazy cooler than the NVidia cards.

Ultimately the temperature of the GPUs depends a significant amount on the efficiency of the cooler, and how much heat the GPU is generating as waste. My point is that we don't have enough data to determine whether the ATI die runs hot because the coolers are less than ideal, Nvidia ones are closer to ideal, the die is smaller, or whatever you have. You have to look at a combination of the efficiency of the die (how well it converts input power to "work done"), the efficiency of the cooler (how well it removes heat from its heat source), and the combination of the two.

I'd posit that the ATI card is more efficient than the NVidia card (at least in WoW, the only thing we have actual numbers of the "work done" and "input power consumed").

Now, if you look at the measured temperature of the core as a means of comparing the worthiness of one GPU over another, I think you're making just as meaningful a comparison as comparing the worthiness of the GPU based on the size of the retail box that it comes in.

SiliconDoc - Friday, September 25, 2009 - link

You simply repeated my claim about watts, and replaced core size, with fps, and created a framerate per watt chart, that has near nothing to do with actual heat inside the die, since the SIZE of the die, vs the power traversing through it is the determining factor, affected by fan quality (ram size as well).

Your argument is "framerate power efficiency", as in watts per framerate, and has nothing to do with core temperature (modified by fan cooling of course to some degree), that the article indeed posts except for the two failed ati cards.

The problem with your flawed "science" that turns it into hokum, is that no matter what outputs on the screen, the HEAT generated by the power consumption of the card itself, remains in the card, and is not "pumped through the videoport to the screen".

If you'd like to claim "wattage vs framerate" efficiency for 5870, fine I've got no problem, but claiming that proves core temps are not dependent on power consumption vs die size ( modified by the rest of the card *mem size/power usage/ and the fan heatsink* ) is RIDICULOUS.

---

The cards are generally equivalent manufacturing and component additions, so you take the wattage consumed (by the core) and divide by core size, for heat density.

Hence, ATI cards, smaller cores and similar power consumption, wind up hotter.

That's what the charts show, that's what should be stated, that is the rule, and that's the way it plays in the real world, too.

---

The only modification to that is heatsink fan efficiency, and I don't find you fellas claiming stock NVIDIA fans and heatsinks are way better than the ATI versions, hence 66C for NVIDIA, 75C, 85C, etc, and only higher for ATI, in all their cards listed.

Would you like to try that one on for size ? Should I just make it known that NVIDIA fans and heatsinks are superior to ATI ?

What is true is a larger surface area (die side squared) dissipates the same amount of heat easier, and that of course is what is going on.

ATI dies are smaller ( by a marked surface area as has so often been pointed out), and have similar power consumption, and a higher DENSITY of heat generation, and therefore run hotter.
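For readers following the exchange above, the two quantities under dispute can be sketched in a few lines of Python. This is only an illustrative model, not something taken from the review or from either commenter: the function names, the lumped thermal-resistance model, and every number below are hypothetical assumptions chosen to show the arithmetic, not measurements of any real card.

```python
def power_density_w_per_mm2(power_w: float, die_area_mm2: float) -> float:
    """Heat generated per unit of die area (the 'density' argument)."""
    if die_area_mm2 <= 0:
        raise ValueError("die area must be positive")
    return power_w / die_area_mm2


def steady_state_temp_c(ambient_c: float, heat_w: float,
                        thermal_resistance_c_per_w: float) -> float:
    """Lumped model: die temperature = ambient + heat * cooler resistance.

    The thermal resistance (degrees C per watt) stands in for everything
    about the cooler; a better cooler has a lower value. Assumed model.
    """
    return ambient_c + heat_w * thermal_resistance_c_per_w


# Hypothetical chips: same power draw, different die sizes.
small_die = power_density_w_per_mm2(power_w=150.0, die_area_mm2=300.0)
large_die = power_density_w_per_mm2(power_w=150.0, die_area_mm2=500.0)
print(f"small die: {small_die:.2f} W/mm^2, large die: {large_die:.2f} W/mm^2")

# Same hypothetical heat load, two hypothetical coolers.
weak_cooler = steady_state_temp_c(25.0, 150.0, 0.4)
strong_cooler = steady_state_temp_c(25.0, 150.0, 0.3)
print(f"weak cooler: {weak_cooler:.1f} C, strong cooler: {strong_cooler:.1f} C")
```

In this simplified picture both sides have a point: a smaller die at the same power has a higher power density, while the reported core temperature also moves with the cooler term, so a load temperature alone does not separate the two effects.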

erple2 - Friday, September 25, 2009 - link

Oops, "milliwatt" should be "kilowatt". I got the decimal place mixed up - I used kilowatt since I thought it was easier to see than 0.247, 0.140, 0.137, 0.181...

SiliconDoc - Wednesday, September 23, 2009 - link

Let's take that LOAD TEMP chart and the article's comments. Right above it, it is stated a good cooler includes the 4850 that IDLE TEMPs in at around 40C (it's actually 42C the highest of those mentioned).

"The floor for a good cooler looks to be about 40C, with the GTS 250(39C), 3870(41C), and 4850 all turning in temperatures around here"

OK, so the 4850 has a good cooler, as well as the 3870... then right below is the LOAD TEMP.. and the 4850 is @ 90C -OBVIOUSLY that good cooler isn't up to keeping that tiny hammered core cool...

3870 is at 89C, 4870 is at 88C, 5870 is at 89C ALL ati....

but then, nvidia...

250, 216, 285, 275 all come in much lower at 66C to 85C.... but "temps are all over the place".

NOT only that crap, BUT the 4890 and 4870x2 are LISTED but with no temps - and take the "coolest position" on the chart!

Well we KNOW they are in the 90C range or higher...

So, you NEVER MENTION why 4870x2 and 4890 are "no load temp shown in the chart" - you give them the WINNING SPOTS anyway, you fail to mention the 260's 65C lowest LOAD WIN and instead mention GTX275 at 75C...LOL

The bias is SO THICK it's difficult to imagine how anyone came up with that CRAP, frankly.

So the superhot 4890 and 4870x2 are given #1 and #2 spots respectively, a free ride, the other Nvidia cards KICK BUTT in lower load temps EXCEPT the 295, but it makes sure to mention the 8800GT while leaving the 4890 and 4870x2 LOAD TEMP spots blank ?

roflmao

---

What were you saying about "why" ? If why the 8800GT was included is TRUE, then comment on the gigantic LOAD TEMP bias... tell me WHY.

SiliconDoc - Wednesday, September 23, 2009 - link

AND, you don't take temps from WOW to use for those two, which no doubt even though it is NOT gpu stressing much, will yield the 90C for those two cards 4870x2 and 4890, anyway.

So they FAIL the OCCT, but you have NOTHING on them, which would if listed put EVERY SINGLE ATI CARD @ near 90C LOAD, PERIOD...

---

And we just CANNOT have that stark FACT revealed, can we ? I mean I've seen this for well over a year here now.

LET's FINALLY SAY IT.

---

LOAD TEMPS ON THE ATI CARDS ARE ALL, EVERY SINGLE ONE NEAR 90c, much higher than almost ALL of the Nvidia cards.

pksta - Thursday, September 24, 2009 - link

I just want to know...With this much zeal about videocards and more specifically the bias that you see, doesn't it make you sound biased too? Can you say that you have owned the cards you are bashing and seen the differences firsthand? I can say I did. I had an 8800 GT and it was running in the upper 80s under load. I switched to my 4850 with the worst cooler I think I've ever seen mind you, and it stays in the mid to upper 60s under load. The cooler on the 8800 gt was the dual-slot design that was the original reference design. The 4850 had the most pathetic fan I've ever seen. It was similar to the fan and heatsink Intel used on the first Core2 stuff. It was the really cheap aluminum with a tiny copper circle that made contact with the die itself. Now, don't get me wrong I love ATI...But I also love nVidia...Anything that keeps games getting better and prices getting better. I honestly don't think, though, that the article is too biased. I think maybe a little for ATI but nothing to rage on and on about. Besides...Calm down. You know nVidia will have a response for this.

SiliconDoc - Sunday, September 27, 2009 - link

1. Who cares what you think about how you perceive me ? Unless you have a fact to refute, who cares ? What is biased ? There has been quite a DISSSS on PhysX for quite some time here, but the haters have no equal alternative - NOTHING that even comes close. Just ASK THEM. DEAD SILENCE. So, strangely, the very best there is, is BAD.

Now ask yourself again who is biased, won't you? Ask yourself who is spewing out the endless strings... Do yourself a favor and figure it out. Most of them have NEVER tried PhysX ! They slip up and let it be known, when they are slamming away. Then out comes their PC hate the greedy green rage, and more, because they have to, to fit in the web PC code, instead of thinking for themselves.

2. Yes, I own more cards currently than you will in your entire life. I started retail computer well over a decade ago.

3. And now, the standard red rooster tale. It sounds like you were running in 2d clocks 100% of the time, probably on a brand board like a DELL. Happens a lot with red cards. Users have no idea.

4850 with The worst fan in the World ! ( quick call Keith Olbermann) and it was ice cold, a degree colder than anything else in the review. ROFLMAO

Once again, the red shorts Pinocchio tale. Forgive me while I laugh, again !

ROFLMAO

Did you ever put your finger on the HS under load ? You should have. Did you check your 3D mhz..

http://forums.anandtech.com/messageview.aspx?catid...

Not like 90C is offbase, not like I made up that forum thread.

4. I could care less if nvidia has a response or not. Point is, STOP LYING. Or don't. I certainly have noticed many of the lies I've complained about over a year or so have gone dead silent, they won't pull it anymore, and in at least one case, used in reverse for more red bias, unfortunately, before it became the accepted idea.

So, I do a service, at the very least people are going to think, and be helped, even if they hate me.

SiliconDoc - Wednesday, September 23, 2009 - link

Well of course that's the excuse, but I'll keep my conclusion considering how the last 15 reviews on the top videocards were done, along with the TEXT that is pathetically biased for ati, that I pointed out. (Even though Derek was often the author).

---

You want to tell me how it is that ONLY the GTX295 is near or at 90C, but ALL the ati cards ARE, and we're told "temperatures are all over the place" ?

Can you really explain that, sir ?

529th - Wednesday, September 23, 2009 - link

holy shit, a full review is up already!

bill3 - Wednesday, September 23, 2009 - link

Does the article keep referring to Cypress as "too big"? If Cypress is too big, what the hell is GT200 at 480mm^2 or whatever it was? Are you guys serious with that crap?

I've heard that the "sweet spot" talk from AMD was a bit of a misdirection from the start anyway. IMO if AMD is going to compete for the performance crown or come reasonably close (and frankly, performance is all video card buyers really care about, as we see with all the forum posts only mentioning that GT300 will supposedly be faster than 58XX and not anything else about it) then they're going to need slightly bigger dies. So Cypress being bigger is a great thing. If anything it's too small. Imagine the performance a 480mm^2 Cypress would have! Yes, Cypress is far too small, period.

Personally it's wonderful to see AMD engineer two chips this time, a bigger performance one and smaller lower end one. This works out far better all around.

The price is also great. People expecting 299 are on crack.