The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

The Instruction That Changes (almost) Everything: TRIM

TRIM is an interesting command. It lets the SSD prioritize blocks for cleaning. In the example I used before, a block is cleaned only when the drive runs out of places to write things and has to dip into its spare area. With TRIM, if you delete a file, the OS sends a TRIM command to the drive along with the associated LBAs that are no longer needed. The TRIM command tells the drive that it can schedule those blocks for cleaning and add them to the pool of replacement blocks.
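A toy model can make the effect on the pool of replacement blocks concrete. This is only a sketch under heavy assumptions: one LBA maps to exactly one block, and the names `ToySSD`, `trim`, `replacement_pool` and `delete_file` are hypothetical, not any real controller's or operating system's interface.

```python
class ToySSD:
    """Toy drive: one LBA maps to one flash block (a big simplification)."""

    def __init__(self, user_blocks, spare_blocks):
        self.physical_blocks = user_blocks + spare_blocks
        # LBAs the drive still believes hold valid data.
        self.valid_lbas = set()

    def write(self, lba):
        self.valid_lbas.add(lba)

    def trim(self, lbas):
        # The OS says these LBAs are no longer needed.
        self.valid_lbas.difference_update(lbas)

    def replacement_pool(self):
        # Blocks the drive knows it can clean and reuse.
        return self.physical_blocks - len(self.valid_lbas)


def delete_file(drive, file_lbas, os_sends_trim):
    """Model an OS-level delete; the drive only hears about it via TRIM."""
    if os_sends_trim:
        drive.trim(file_lbas)


drive = ToySSD(user_blocks=100, spare_blocks=7)
for lba in range(100):
    drive.write(lba)

pool_full = drive.replacement_pool()      # only the spare area: 7

delete_file(drive, range(40), os_sends_trim=False)
pool_no_trim = drive.replacement_pool()   # still 7, the drive was never told

delete_file(drive, range(40), os_sends_trim=True)
pool_trim = drive.replacement_pool()      # 47, the deleted blocks join the pool
```

Without TRIM the drive keeps treating the deleted file's blocks as valid data, so only the spare area is available for cleaning; with TRIM those blocks become candidates right away.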

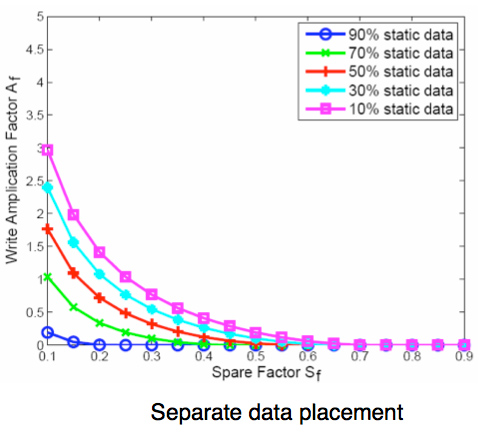

A used SSD will only have its spare area to use as a scratch pad for moving data around; on most consumer drives that’s around 7%. Take a look at this graph from a study IBM did on SSD performance:

Write Amplification vs. Spare Area, courtesy of IBM Zurich Research Laboratory

Note how dramatically write amplification goes down when you increase the percentage of spare area the drive has. In order to get down to a write amplification factor of 1 our spare area needs to be somewhere in the 10 - 30% range, depending on how much of the data on our drive is static.
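For reference, write amplification is the ratio of data physically written to flash to data the host asked to write. The snippet below is a minimal illustration of that ratio; the function name and the 4KB page / 512KB block sizes are assumptions chosen for the example, not figures taken from the IBM study.

```python
def write_amplification(flash_bytes_written, host_bytes_written):
    """Ratio of bytes written to flash to bytes the host requested."""
    if host_bytes_written <= 0:
        raise ValueError("host_bytes_written must be positive")
    return flash_bytes_written / host_bytes_written


KB = 1024

# Assumed worst case: a 4KB host write forces a whole 512KB block rewrite.
worst_case = write_amplification(512 * KB, 4 * KB)   # 128.0

# Ideal case: the drive writes exactly what the host asked for.
ideal_case = write_amplification(4 * KB, 4 * KB)     # 1.0
```

A factor of 1 is the ideal the graph trends toward as spare area grows: the drive writes no more to flash than the host requested.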

Remember our pool of replacement blocks? This graph actually assumes that we have multiple pools of replacement blocks: one for frequently changing data (e.g. file tables, pagefile, other random writes) and one for static data (e.g. installed applications, data). If the SSD controller only implements a single pool of replacement blocks, the spare area requirements are much higher:

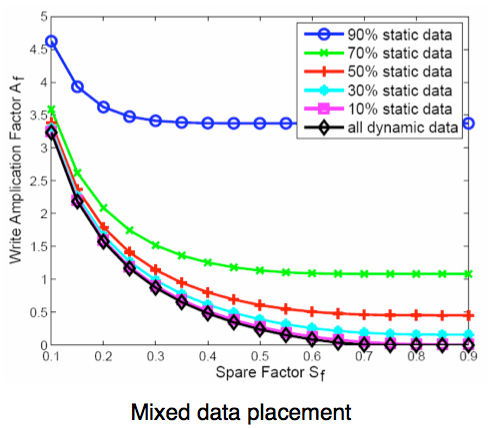

Write Amplification vs. Spare Area, courtesy of IBM Zurich Research Laboratory

We’re looking at a minimum of 30% spare area for this simpler algorithm. Some models don’t even drop down to 1.0x write amplification.

But remember, today’s consumer drives only ship with roughly 6 - 7% spare area on them. That’s under the 10% minimum even from our more sophisticated controller example. By comparison, the enterprise SSDs like Intel’s X25-E ship with more spare area - in this case 20%.

What TRIM does is help give well-architected controllers, like the one in the X25-M, more spare area. Space you’re not using on the drive, space that has been TRIMed, can now be used in the pool of replacement blocks. And as IBM’s study shows, that can go a long way to improving performance depending on your workload.
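As a back-of-the-envelope illustration, the arithmetic might look like the following. The function `effective_spare_fraction` and the capacities are hypothetical, and the sketch assumes every TRIMed block becomes usable as replacement space, which a real controller may only approximate.

```python
import math


def effective_spare_fraction(physical_capacity, live_data):
    """Share of the physical flash that holds no live data."""
    if not 0 <= live_data <= physical_capacity:
        raise ValueError("live_data must be between 0 and physical_capacity")
    return (physical_capacity - live_data) / physical_capacity


# Hypothetical drive: 100 units of flash, 93 exposed to the user (7% spare).
PHYSICAL = 100.0
USER = 93.0

# Without TRIM, a used drive treats every user LBA as live data.
no_trim = effective_spare_fraction(PHYSICAL, USER)

# With TRIM and the drive only half full, the unused half joins the pool.
with_trim = effective_spare_fraction(PHYSICAL, USER / 2)

print(f"without TRIM: {no_trim:.1%}, with TRIM: {with_trim:.1%}")
```

Under these assumptions the half-full drive behaves as if it had roughly 53% spare area instead of 7%, which puts it well past the thresholds in the graphs above.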

295 Comments

GourdFreeMan - Tuesday, September 1, 2009 - link

Yes, rewriting a cell will refill the floating gate with trapped electrons to the proper voltage level unless the gate has begun to wear out, so backing up your data, secure erasing your drive and copying the data back will preserve the life (within reason) of even drives that use minimalistic wear leveling to safeguard data. Charge retention is only a problem for users if they intend to use the drive for archival storage, or operate the drive at highly elevated temperatures.

It is a bigger problem for flash engineers, however, and one of the reasons why MLC cannot be moved easily to more bits per cell without design changes. To store n-bits in a single cell you need 2^n separate energy levels to represent them, and thus each bit is only has approximately 1/(2^(n-1)) the amount of energy difference between states when compared to SLC using similar designs and materials.

Zheos - Tuesday, September 1, 2009 - link

Man you seem to know a lot about what you're talking about :)

Yeah now i understand why SSD for database and file storage server would be quite a bad idea.

But for personal windows & everyday application storage, seems like a pure win to me if you can afford one :)

I was only worried about its life-span, but thanks to you and your quick replies (and for the maths and technical stuff about how it really works ;) I'm sold on the fact that I will buy one soon.

The G2 from Intel seems like the best choice for now, but I'll just wait and see how it goes once TRIM is enabled on almost every SSD, and I'll make my decision then in a couple of months =)

GourdFreeMan - Wednesday, September 2, 2009 - link

It isn't so much that SSDs make a bad storage server, but rather that you can't neglect to make periodic backups, as with any type of storage, if your data has great monetary or sentimental value. In addition to backups, RAID (1-6) is also an option if cost is no object and you want to use SSDs for long term storage in a running server. Database servers are a little more complicated, but SSDs can be an intelligent choice there as well if your usage patterns aren't continuous heavy small (i.e. <= 4K) writes.

I plan on getting a G2 myself for my laptop after Intel updates the firmware to support TRIM and Anand reviews the effects in Windows 7, and I have already been using an Indilinx-based SLC drive in my home server.

If you do anything that stresses your hard drive(s), or just like snappy boot times and application load times you will probably be impressed by the speeds of a new SSD. The cost per GB and lack of long term reliability studies are really the only things holding them back from taking the storage market by storm now.

ninevoltz - Thursday, September 17, 2009 - link

GourdFreeMan could you please continue your explanation? I would like to learn more. You have really dived deeply into the physical properties of these drives.

GourdFreeMan - Tuesday, September 1, 2009 - link

Minor correction to the second paragraph in my post above -- "each bit is only has" should read "each representation only has" in the last sentence.

philosofool - Monday, August 31, 2009 - link

Nice job. This has been a great series.

I'm getting a SSD once I can get one at $1/GB. I want a system/program files drive of at least 80GB and then a conventional HDD (a tenth of the cost/GB) for user data.

Would keeping user data on a conventional HDD affect these results? It would seem like it wouldn't, but I would like to see the evidence.

I would really like to see more benchmarks for these drives that aren't synthetic. Have you tried things like Crysis or The Witcher load times? (Both seemed to me to have pretty slow loads for maps.) I don't know if these would be affected, but as real world applications, I think it makes sense to try them out.

Anand Lal Shimpi - Monday, August 31, 2009 - link

Personally I keep docs on my SSD but I keep pictures/music on a hard drive. Neither gets touched all that often in the grand scheme of things, but one is a lot smaller :)

In The SSD Anthology I looked at Crysis load times. Performance didn't really improve when going to an SSD.

Take care,

Anand

Eeqmcsq - Monday, August 31, 2009 - link

I would have thought that the read speed of an SSD would have helped cut down some of the compile time. Is there any tool that lets you analyze disk usage vs cpu usage during the compile time, to see what percentage of the compile was spent reading/writing to disk vs CPU processing?

Is there any way you can add a temperature test between an HDD and an SSD? I read a couple of Newegg reviews that say their SSDs got HOT after use, though I think that may have just been 1 particular brand that I don't remember. Also, there was at least one article online that tested an SSD vs an HDD and the SSD ran a little warmer than the HDD.

Also, garbage collection does have one advantage: It's OS independent. I'm still using Ubuntu 8.04 at work, and I'm stuck on 8.04 because my development environment WORKS, and I won't risk upgrading and destabilizing it. A garbage collecting SSD would certainly be helpful for my system... though your compiling tests are now swaying me against an SSD upgrade. Doh!

And just for fun, have you thought about running some of your benchmarks on a RAM drive? I'd like to see how far SSDs and SATA have to go before matching the speed of RAM.

Finally, any word from JMicron and their supposed update to the much "loved" JMF602 controller? I'd like to see some non-stuttering cheapo SSDs enter the market and really bring the $$$/GB down, like the Kingston V-series. Also, I'd like to see a refresh in the PATA SSD market.

"Am I relieved to be done with this article? You betcha." And I give you a great THANK YOU!!! for spending the time working on it. As usual, it was a great read.

Per Hansson - Monday, August 31, 2009 - link

Photofast have released Indilinx based PATA drives: http://www.photofastuk.com/engine/shop/category/G-...

aggressor - Monday, August 31, 2009 - link

What ever happened to the price drops that OCZ announced when the Intel G2 drives came out? I want 128GB for $280!