ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST

Posted in: GPUs

New Drivers From NVIDIA Change The Landscape

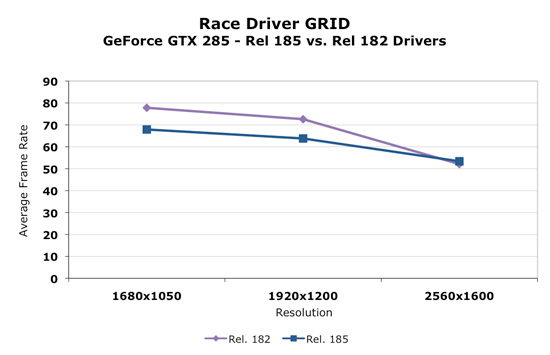

Today, NVIDIA will release its new 185 series driver. This driver not only enables support for the GTX 275, but also affects performance across NVIDIA's lineup in a good number of games. We retested our NVIDIA cards with the 185 driver and saw some very interesting results. For example, take a look at before and after performance with Race Driver: GRID.

As we can clearly see, in the cards we tested, performance decreased at lower resolutions and increased at 2560x1600. This seemed to be the biggest example, but we saw flattened resolution scaling in most of the games we tested. This definitely could affect the competitiveness of the part depending on whether we are looking at low or high resolutions.

Some trade-off was made to improve performance at ultra high resolutions at the expense of performance at lower resolutions. It could be anything from something simple, like added driver overhead (and thus more CPU limitation), to something much more complex. We haven't been told exactly what creates this situation, though. With higher end hardware, this decision makes sense, as resolutions lower than 2560x1600 tend to perform fine. 2560x1600 is more GPU limited and could benefit from a boost in most games.
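To make the CPU-limited versus GPU-limited argument concrete, here is a toy model of flattened resolution scaling. It assumes frame time is simply the larger of a fixed per-frame CPU cost and a GPU cost proportional to pixel count. Every constant below is hypothetical and chosen only for illustration; none of it is measured data, and NVIDIA has not said this is what the 185 driver actually does.

```python
# Toy model: frame time is set by whichever of the CPU or GPU finishes last.
# All constants are hypothetical and chosen only for illustration.

RESOLUTIONS = [(1680, 1050), (1920, 1200), (2560, 1600)]


def fps(width, height, cpu_ms, gpu_ns_per_pixel):
    """Frames per second, assuming CPU and GPU work overlap perfectly."""
    gpu_ms = width * height * gpu_ns_per_pixel / 1e6
    return 1000.0 / max(cpu_ms, gpu_ms)


# Hypothetical "old" driver: low CPU overhead, higher per-pixel GPU cost.
OLD = dict(cpu_ms=8.0, gpu_ns_per_pixel=6.0)
# Hypothetical "new" driver: more CPU overhead, lower per-pixel GPU cost.
NEW = dict(cpu_ms=14.0, gpu_ns_per_pixel=5.0)


def scaling_table():
    """Return (resolution, old_fps, new_fps) for each test resolution."""
    rows = []
    for w, h in RESOLUTIONS:
        rows.append((f"{w}x{h}", fps(w, h, **OLD), fps(w, h, **NEW)))
    return rows


if __name__ == "__main__":
    for name, old, new in scaling_table():
        print(f"{name}: old {old:.1f} fps, new {new:.1f} fps")
```

With these made-up numbers, the "new" configuration is CPU bound at the two lower resolutions, so it loses there, and only pulls ahead at 2560x1600 where the GPU cost dominates.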

Significantly different resolution scaling characteristics can be appealing to different users. An AMD card might look better at one resolution, while the NVIDIA card could come out on top with another. In general, we think these changes make sense, but it might be nicer if the driver automatically figured out what approach was best based on the hardware and resolution running (and thus didn't degrade performance at lower resolutions).

In addition to the performance changes, we see the addition of a new feature. In the past we've seen the addition of filtering techniques, optimizations, and even dynamic manipulation of geometry to the driver. Some features have stuck and some just faded away. One of the most popular additions to the driver was the ability to force Full Screen Antialiasing (FSAA), enabling smoother edges in games. This feature was more important at a time when most games didn't have an in-game way to enable AA. The driver took over and implemented AA even on games that didn't offer an option to adjust it. Today the opposite is true and most games allow us to enable and adjust AA.

Now we have the ability to enable a feature, which isn't available natively in many games, that could either be loved or hated. You tell us which.

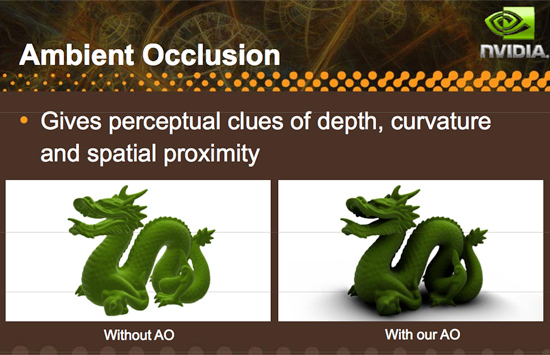

Introducing driver enabled Ambient Occlusion.

What is Ambient Occlusion, you ask? Well, look into a corner or around trim or anywhere that looks concave in general. These areas will be a bit darker than the surrounding areas (depending on the depth and other factors), and NVIDIA has included a way to simulate this effect in its 185 series driver. Here is an example of what AO can do:

Here's an example of what AO generally looks like in games:

This, as with other driver enabled features, significantly impacts performance and might not be able to run on all games or at all resolutions. Ambient Occlusion may be something some gamers like and some do not depending on the visual impact it has on a specific game or if performance remains acceptable. There are already games that make use of ambient occlusion, and some games that NVIDIA hasn't been able to implement AO on.

There are different methods to enable the rendering of an ambient occlusion effect, and NVIDIA implements a technique called Horizon Based Ambient Occlusion (HBAO for short). The advantage is that this method is likely very highly optimized to run well on NVIDIA hardware, but on the down side, developers limit the ultimate quality and technique used for AO if they leave it to NVIDIA to handle. On top of that, if a developer wants to guarantee that the feature works for everyone, they would need to implement it themselves, as AMD doesn't offer a parallel solution in their drivers (in spite of the fact that their hardware is easily capable of running AO shaders).
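For readers who want a feel for what a screen-space occlusion pass does, here is a deliberately crude sketch. It is not NVIDIA's HBAO and not what the driver runs; it is a simplified stand-in that darkens a pixel according to how many nearby pixels in a depth buffer sit closer to the camera. The function name, the `radius` and `bias` parameters, and the tiny depth map are all hypothetical.

```python
# Crude screen-space occlusion estimate on a depth buffer (smaller = closer).
# This is NOT HBAO; it is a simplified illustration of the general idea.


def ambient_occlusion(depth, radius=1, bias=0.05):
    """Return per-pixel visibility in [0, 1]; lower values mean darker."""
    rows, cols = len(depth), len(depth[0])
    visibility = [[1.0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            occluded = 0
            samples = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    if dx == 0 and dy == 0:
                        continue  # skip the pixel itself
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < rows and 0 <= nx < cols:
                        samples += 1
                        # A neighbour closer to the camera than this pixel
                        # is treated as blocking some ambient light.
                        if depth[ny][nx] < depth[y][x] - bias:
                            occluded += 1
            if samples:
                visibility[y][x] = 1.0 - occluded / samples
    return visibility


if __name__ == "__main__":
    # A flat surface at depth 1.0 with a one-pixel-wide groove at depth 1.5.
    depth_map = [[1.0, 1.0, 1.5, 1.0, 1.0] for _ in range(3)]
    for row in ambient_occlusion(depth_map):
        print(" ".join(f"{v:.2f}" for v in row))
```

In this sketch the pixels inside the groove come out darker than the flat surface around them, which is the concave-areas-look-darker effect described above. A production technique samples in view space, weights by distance and angle, and blurs the result.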

We haven't done extensive testing with this feature yet, either looking for quality or performance. Only time will tell if this addition ends up being gimmicky or really hits home with gamers. And if more developers create games that natively support the feature we wouldn't even need the option. But it is always nice to have something new and unique to play around with, and we are happy to see NVIDIA pushing effects in games forward by all means possible even to the point of including effects like this in their driver.

In our opinion, lighting effects like this belong in engine and game code rather than the driver, but until that happens it's always great to have an alternative. We wouldn't think it a bad idea if AMD picked up on this and did it too, but whether it is more worthwhile to do this or to spend that energy encouraging developers to adopt this and comparable techniques for more complex lighting is totally up to AMD. And we wouldn't fault them either way.

294 Comments

josh6079 - Thursday, April 2, 2009

I'm one with the opinion that PhysX is good and will only become better in time. Yet, I more than acknowledge the fact that CUDA is going to hinder its adoption so long as nVidia remains unwilling to decouple the two.

There was a big thread concerning this on the Video forum, and some people just can't get through the fact that CUDA is proprietary and OpenCL is not. As long as you have that factor, hardware vendors are going to refrain from supporting their competitors' proprietary parallel programming and because of that developers will continue to aim for the biggest market segment.

PhysX set the stage for non-CPU physics calculations, but that is no longer going to be an advantageous trait for them. They'll need to improve PhysX itself, and even then they will have to provide it to all consumers -- be it if they have an ATi or nVidia GPU in their system. They'll have to do this because Havok will be doing this with OpenCL to serve as the parallel programming instead of CUDA, thereby allowing Havok GPU-accelerated physics for all OpenCL-compliant GPUs.

tamalero - Sunday, April 5, 2009

the problem is, by the time the PhysX becomes norm, you will be on your NVidia 480GTX :P

it happened to AMD and their X64 technology, took quite a bit to blast off.

haukionkannel - Thursday, April 2, 2009

Well, if these cards reduce the price of earlier cards it's just a good thing :-)

From ATI's part changes are not big, but they make the product better. It's a better overclocker than the predecessor, it has better power lines. It's just an ok upgrade like Phenom 2 was compared to original Phenom (though 4870 was and still is a better GPU than Phenom was as a CPU...)

Nvidia's 275 offers a good upgrade over the 260, so not so bad if those rumors about shady preview samples turn out to be false. If the preview parts really are beefed up versions... Well Nvidia would be in some trouble, and I really think that they would not be that stupid, would they? All in all the improvement from 260 to 275 seems to be bigger than 4870 to 4890, so the competition is getting tighter. So far so good.

In real life both producers are keen on developing their DX11 cards to be ready for DX11 launch, so this may be quite a boring year on the GPU front until the next generation comes out...

knutjb - Thursday, April 2, 2009

Both cards performed well and the performance differences are small. I can buy the 4890 today on newegg but not the 275. I know the 4890 is a new chip even if it is just a refined RV770 it's still a NEW part. It falls within an easily understood hierarchy in the 4800 range. Bottom line I know what I'm getting. The 275 I can't buy today and it appears to be another recycled part with unclear origins. Nvidia's track record with musical labeling is bothersome to me. I want to know what I'm buying without having to spend days figuring out which version is the best bang for the buck. Come on Nvidia this is a problem and you can do better than this. The CUDA and PhysX aren't enough to sway me on their own merits since most of the benefits require me to spend more money, yes they add value, but at what expense?

SiliconDoc - Monday, April 6, 2009

nutjob, you're not smart enough to own NVidia. Stick with the card for dummies, the ati.

Here's a clue "overclocked 4870 with 1 gig ram not 512, not a 4850 because it has ddr5 not ddr3 - so we call it 4870+ - no wait that would be fair, if we call it 4870 overclocked, uhh... umm.. no we need a better name to make it sound twice as good... let's see 4850, then 4870, so twice as good would be uhh. 4890 ! That's it !

There ya go... So the 4890 is that much better than the 4870, as it is above the 4850, right ? LOL

Maybe they should have called it the 4875, and been HONEST about it like NVidia was > 280 285 ...

No ATI likes to lie, and their dummy fans praise them for it.

Oh well, another red rooster FUD packet blown to pieces.

knutjb - Saturday, April 11, 2009

Dude you missed the whole point, must be the green blurring your vision. Nvidia takes an existing chip and reduces its capacity or takes one that doesn't meet spec and puts it out as a new product or they take the 8800, then 9800, then the 250, then... that is re-badging. The 4850 and 4830 same same. Grading chips is nothing new but Nvidia keeps rebadging OLD, but good, chips and releases them as if they are NEW which is where my primary complaint about Nvidia gfx cards comes from.

4890 might not be an entirely new core but they ADDED to it, rearranged the layout, in the end improving it, they didn't SUBTRACT from it. It is more than a 4870+. It is a very simple concept that apparently you are unable to grasp due to your being such a fanboy. So you don't like ATI, I don't care, I buy whoever has the best bang for the buck that meets my needs not what you think.

ATI looked at the market and decided to hit the midrange and expand down and up from there. They went where most of the money is, in the midrange, not high end gaming. They are hurting and a silly money flag ship doesn't make sense right now. If Nvidia wasn't concerned with the 4890 they wouldn't have released another cut down chip. Put down the pipe and step away from the torch.... Seek help.

SiliconDoc - Thursday, April 23, 2009

So your primary problem is that you think nvidia didn't rework their layout when they changed from G80, to G92, to G92b, and you don't like the fact that they can cover the entire midrange by doing that, because of the NAME they use when they change the bit width, the shaders, the mem speed etc - BUT

When ATI does it it's ok because they went for the mid range, you admit the 4850 and 4830 are the same core, but fail to mention the 4870 and fairly include the 4890 as well - because it's OK when ati does it.

Then you ignore all the other winning features of nvidia, and call me names - when I'M THE PERSON TELLING THE TRUTH, AND YOU ARE LYING.

Sorry bubba, doesn't work that way in the real world.

The real horror is ATI doesn't have a core better than the G80/G92/G92b - and the only thing that puts the 4870 and 4890 up to 260/280 levels is the DDR5, which I had to point out to all the little lying spewboys here already.

Now your argument that ATI went for the middle indicates you got that point, and YOU AGREE WITH IT, but just can't bring yourself to say it. Yes, that's the truth as well.

Look at the title of the continuing replies "RE: Another Nvidia knee jerk" - GET A CLUE SON.

lol

Man are you people pathetic. Wow.

Exar3342 - Thursday, April 2, 2009

These are both basically rebadges; deal with it.

knutjb - Friday, April 3, 2009

If 3,000,000 more transistors is "basically a rebadge" you are lost on how much work goes into designing a chip as opposed to changing the stamper on the chip printing machine. I would speculate ATI/AMD has made some interesting progress on their next gen chip design and applied it to the RV770, it worked so they're selling it now to fill a hole in the market.

It sounds like you are trying to deal with Nvidia's constant rebadging and have to point the finger and claim ATI/AMD is doing it too. Where did the 275 chip come from? Yes it is a good product but how many names do you want it called?

I have bought just as many Nvidia cards as I have ATI/AMD based on bang for the buck, just calling it like I see it...

SiliconDoc - Monday, April 6, 2009

Well, they worked it for overclocking - and apparently did a fine job of that - but it is a rebadging, none the less.

It seems the less than one half of one percent "new core" transistors are used as a sort of multi capacitor ring around the outside of the core, for overclocking legs. Not bad, but not a new core. I do wonder as they hinted they "did some rearranging" - if they had to waste some of those on the core works - lengthening or widening or bridging this or that - or connections to the BIOS for volt modding or what have you.

When either company moves to a smaller die, a similar effect is had for the cores, some movements and fittings and optimizations always occur, although this site always jumped on the hate and lie bandwagon to screech about "rebranding" - as well as "confusing names" since the cards were not all the same... bit width, memory type, size, shaders, etc.

So I'm sure we would hear about the IMMENSE VERSATILITY of the awesome technology of the ati core (if they did the same thing with their core).

However, they've done a rebranding a ring around the overclock. Nice, but same deal.

Can you tell us how much more expensive it's going to be to produce since Derek and Anand decided to "not mention the cost" since they didn't have the green monster to bash about it ?

Oh that's right, it's RUDE to mention the extra cost when the red rooster company is burning through a billion a year they don't have - ahh, the great sales numbers, huh ?