ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST. Posted in GPUs

The New $250 Price Point: Radeon HD 4890 vs. GeForce GTX 275

Here it is, what you've all been waiting for. And it's a tie. Pretty much. These cards stay pretty close in performance across the board.

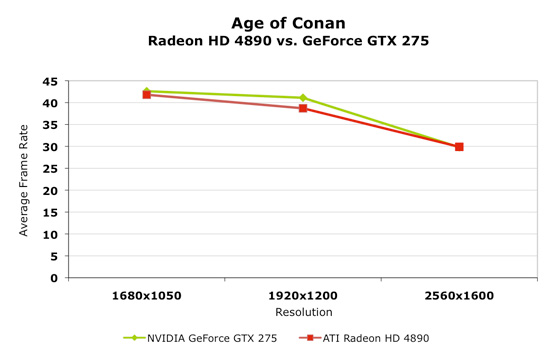

Looking at Age of Conan, we see something we didn't expect. NVIDIA is actually performing on par with AMD in this benchmark. NVIDIA's come a long way toward closing the gap in this one, and for this comparison it's paid off a bit. Despite the fact that this one is essentially a tie, NVIDIA gets props for being competitive here.

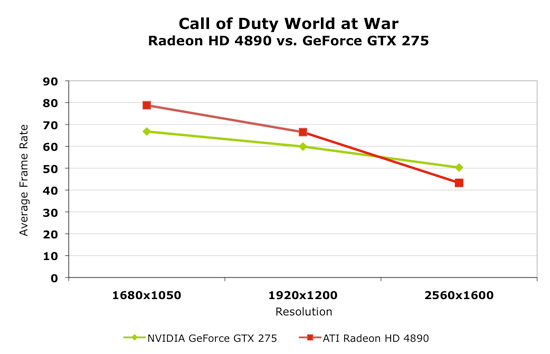

While NVIDIA usually owns Call of Duty benchmarks, the 4890 outpaces the GTX 275 at 1680x1050 and 1920x1200, while the GTX 275 leads at the 30" panel resolution. As long as it's still playable, this isn't a huge deal, but the fact that most people who might want one of these GPUs have lower-resolution monitors isn't in NVIDIA's favor.

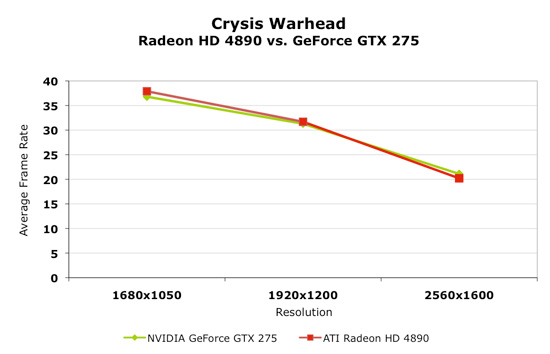

Crysis Warhead is really close in performance again.

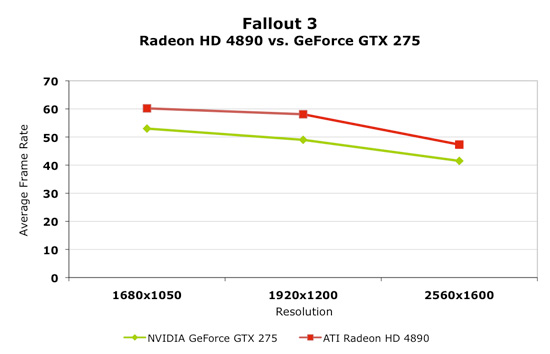

AMD leads Fallout 3, and this is the first game in which we've seen a consistent, significant difference favoring one card over the other.

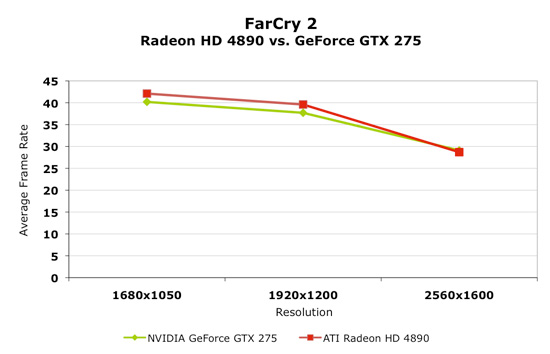

FarCry 2 takes us back to the norm with both cards performing essentially the same.

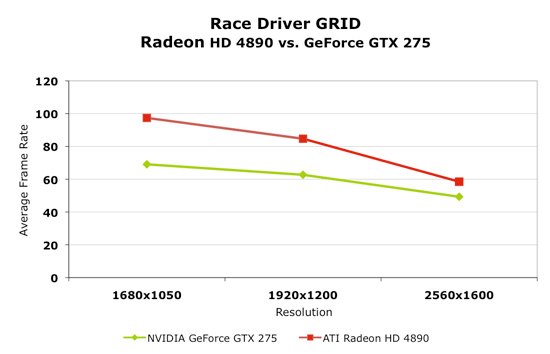

The 4890 does have a pretty hefty lead under Race Driver GRID. The gap does close at higher resolution, but it's still a gap in AMD's favor.

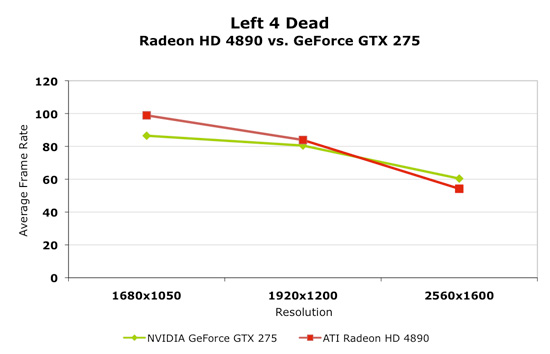

Left4Dead is also pretty much a tie, with the card you would want depending on the resolution of your monitor.

Overall, this is really a wash. These parts are very close in performance and very competitive.

294 Comments


SiliconDoc - Monday, April 6, 2009 - link

Yes, exactly why added value of CUDA, PhysX, badaboom, vReveal, the game profiles ready in nv panel, the forced SLI, the ambient occlusion games and their MODS (see back a page or two in comments) - all MATTER to a lot of gamers. Let's not forget card size for htpc'ers - heat, dissipation, H.264 etc.

Just the frames matter here just for ati - formerly at 2560x when ati had that crown, now of course, just for lower resolutions - the most important suddenly to the same reviewers, when ati is stuck down there.

Yeah, PATHETIC describes the dismissal of added values.

Flunk - Thursday, April 2, 2009 - link

I have a CUDA-supporting GPU (8800GTS) and I have rarely used it, other than to run the CUDA version of folding at home (there is also an ATI Stream version) or to look at the pretty effects in a few games. I don't really think these effects are particularly worthwhile, and unless the industry comes together and supports a standard like OpenCL I don't see GPU-based processing becoming important to most uses.

SiliconDoc - Monday, April 6, 2009 - link

Here's a clue as to why you're already WRONG. Most "gpu users" use NVidia. DUH.

So while you're whistling in the dark, it's already past that time when your line of crap has any basis in reality.

It takes a gigantic red fanboy brain fart to state otherwise.

Oh well, since when did facts matter when the red plague is rampant?

Hrel - Thursday, April 2, 2009 - link

You can get an Nvidia GPU that runs CUDA and Badaboom for $50; the 9600GT. End of page 13.

Hrel - Thursday, April 2, 2009 - link

You can get an Nvidia GPU that runs CUDA and Badaboom for $50; the 9600GT.

punjabiplaya - Thursday, April 2, 2009 - link

Just need some stable OC vs OC results!

SiliconDoc - Monday, April 6, 2009 - link

anand doesn't do the overclocked part comparison of the videocard wars - BUT DON'T worry - a red rooster exception with charts and babbling is no doubt coming down the pike. Keep begging, then they can "respond to customer demands". lol

Oh man, this is going to be fun.

I suggest they start with the gainward gtx260 overclock goes like hell, that whips every single 4870 1g XXX ever made. Sound good ?

Griswold - Thursday, April 2, 2009 - link

What I'm really curious about, because neither of the cards is what I'm interested in buying but I like to follow both companies' business strategies: Does nvidia really lose money, or is it looking at a fat zero on the bottom line with this card?

SiliconDoc - Monday, April 6, 2009 - link

Uhh ati is losing a billion a year. If you want card specifics, that's probably difficult to calculate - and loss leaders are nothing new in business - in fact that's what successful businesses use as a sales tool. Seems ATI has taken it a bit too far and made every card they sell a loss leader, hence their billions in the hole.

Now as far as the NVidia card in question, even if Obama takes over the mean greedy green machine - he and his cabal "won't release the information because it's just not fair and may cause those not really needing help at the money window to be exposed".

So no, you won't be finding out.

The problem is anyway, if a certain card is a loss leader, they calculate how much other business it brings in, and that makes it a WINNER - and that's the idea.

flashbacck - Thursday, April 2, 2009 - link

The physx/cuda section was interesting, although it sounded a bit... whiny. I would LOVE it if someone would write an article about all the PR and marketing shenanigans that go on with reviewers behind the scenes. It'll never happen because it would kill any relationship the author has with the companies, but I bet it would be an eye opening read.