The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST. Posted in Storage

Putting Theory to Practice: Understanding the SSD Performance Degradation Problem

Let’s look at the problem in the real world. You, me and our best friend have decided to start making SSDs. We buy up some NAND flash and build a controller. The table below summarizes our drive’s characteristics:

| Our Hypothetical SSD | |
| --- | --- |
| Page Size | 4KB |
| Block Size | 5 Pages (20KB) |
| Drive Size | 1 Block (20KB) |
| Read Speed | 2KB/s |
| Write Speed | 1KB/s |

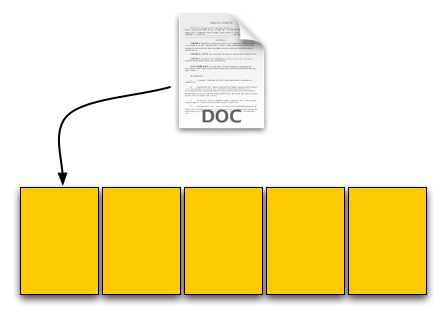

Through impressive marketing and your incredibly good looks we sell a drive. Our customer first goes to save a 4KB text file to his brand new SSD. The request comes down to our controller, which finds that all pages are empty, and allocates the first page to this text file.

Our SSD. The yellow boxes are empty pages

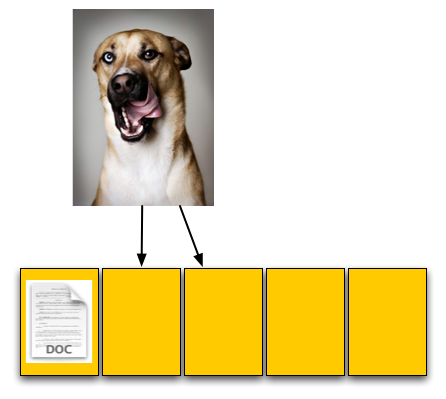

The user then goes and saves an 8KB JPEG. The request, once again, comes down to our controller, and fills the next two pages with the image.

The picture is 8KB and thus occupies two pages, which are thankfully empty

The OS reports that 60% of our drive is now full, which it is. Three of the five open pages are occupied with data and the remaining two pages are empty.

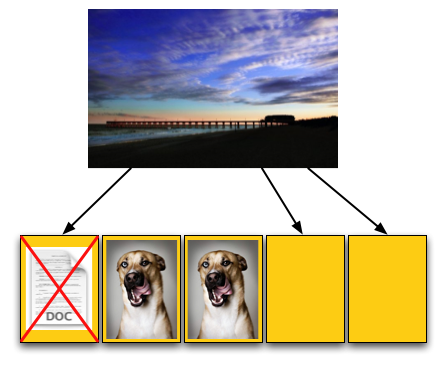

Now let’s say that the user goes back and deletes that original text file. This request never reaches our controller; as far as our controller is concerned, we’ve still got three valid and two empty pages.

For our final write, the user wants to save a 12KB JPEG, which requires three 4KB pages to store. The OS knows that the first LBA, the one allocated to the 4KB text file, can be overwritten, so it tells our controller to overwrite that LBA as well as store the last 8KB of the image in our last available LBAs.

Now we have a problem once these requests get to our SSD controller. We’ve got three pages' worth of write requests incoming, but only two pages free. Remember that the OS believes we have 12KB free, but on the drive only 8KB is actually free; the other 4KB is held by an invalid page. We need to erase that page in order to complete the write request.
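The mismatch between the two views can be sketched in a few lines of Python. This is only an illustration of the hypothetical drive from the table above; the names `pages`, `os_deleted`, `controller_free_kb` and `os_free_kb` are made up for this example and do not correspond to any real controller firmware or OS API.

```python
PAGE_KB = 4  # page size of our hypothetical SSD

# The controller's view of the single 5-page block.
# None means the page has never been written (empty).
pages = ["text", "jpeg", "jpeg", None, None]

# The OS's view: it deleted the text file, so it treats that LBA as free.
# The controller was never told about the delete.
os_deleted = {0}

def controller_free_kb(pages):
    """Free space as the controller sees it: only empty pages count."""
    return sum(PAGE_KB for page in pages if page is None)

def os_free_kb(pages, deleted):
    """Free space as the OS sees it: empty pages plus deleted LBAs."""
    return sum(
        PAGE_KB
        for index, page in enumerate(pages)
        if page is None or index in deleted
    )

print(controller_free_kb(pages))      # 8
print(os_free_kb(pages, os_deleted))  # 12
```

The 4KB gap between the two numbers is the invalid page: free as far as the file system is concerned, but still occupied in flash.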

Uh-oh, problem. We don't have enough empty pages.

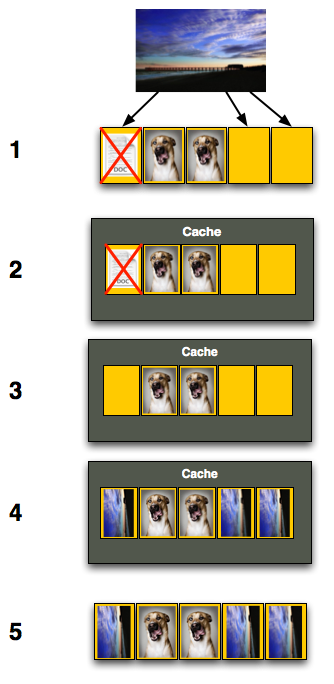

Remember back to Flash 101: even though we only need to erase one page, we can’t. You can’t erase pages, only blocks. We have to erase all of our data just to get rid of the invalid page, then write it all back again.

To do so, we first read the entire block back into memory somewhere. If we’ve got a good controller we’ll just read it into an on-die cache (steps 1 and 2 below); if not, hopefully there’s some off-die memory we can use as a scratch pad. With the block read, we can modify it, removing the invalid page and replacing it with good data (steps 3 and 4). But we’ve only done that in memory somewhere; now we need to write it to flash. Since we’ve got all of our data in memory, we can erase the entire block in flash and write the new block (step 5).
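The five steps can be sketched as a small Python function. This is a simplified model of our hypothetical one-block drive, not how any particular controller is implemented; the function name `read_modify_write` and the page labels are assumptions made for illustration.

```python
def read_modify_write(flash_block, updates):
    """Apply page updates to a block by rewriting the whole block.

    flash_block -- list of page contents in flash (None = empty page)
    updates     -- dict mapping page index -> new page contents
    Returns (new_block, pages_read, pages_written).
    """
    # Steps 1-2: read every occupied page into a scratch buffer.
    buffer = list(flash_block)
    pages_read = sum(1 for page in buffer if page is not None)

    # Steps 3-4: modify the copy in memory, replacing the invalid
    # page and filling the empty pages with the new data.
    for index, data in updates.items():
        buffer[index] = data

    # Step 5: erase the whole block (pages cannot be erased one at a
    # time), then program the buffered contents back into flash.
    erased_block = [None] * len(flash_block)
    new_block = [page for page in buffer]
    assert len(new_block) == len(erased_block)
    pages_written = sum(1 for page in new_block if page is not None)
    return new_block, pages_read, pages_written


# The drive after the text file and the 8KB JPEG were saved.
block = ["text", "jpeg1", "jpeg1", None, None]
# The 12KB JPEG overwrites the deleted text page and fills the rest.
new_block, pages_read, pages_written = read_modify_write(
    block, {0: "jpeg2", 3: "jpeg2", 4: "jpeg2"}
)
print(new_block)                  # ['jpeg2', 'jpeg1', 'jpeg1', 'jpeg2', 'jpeg2']
print(pages_read, pages_written)  # 3 5
```

Three pages read and five pages written, at 4KB per page, is 12KB read and 20KB written to service a 12KB request.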

Now let’s think about what’s just happened. As far as the OS is concerned, we needed to write 12KB of data and it got written. Our SSD controller knows what really transpired, however. In order to write that 12KB of data we had to first read 12KB (the three occupied pages), then write an entire block, or 20KB.

Our SSD is quite slow; it can only write at 1KB/s and read at 2KB/s. Writing 12KB should have taken 12 seconds, but since we had to read 12KB (6 seconds) and then write 20KB (20 seconds), the whole operation took 26 seconds.

To the end user it would look like our write speed dropped from 1KB/s to 0.46KB/s, since it took us 26 seconds to write 12KB.
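The arithmetic is easy to check with a short Python sketch. It uses the read and write speeds from the table above and, like the example in the text, ignores the time taken by the block erase itself; the function name `effective_write_speed` is made up for this illustration.

```python
READ_KB_PER_S = 2   # read speed of our hypothetical SSD
WRITE_KB_PER_S = 1  # write speed of our hypothetical SSD

def effective_write_speed(requested_kb, read_kb, written_kb):
    """Return (seconds, KB/s as seen by the user) for one write request.

    requested_kb -- what the OS asked to write
    read_kb      -- what the controller had to read back first
    written_kb   -- what the controller actually wrote to flash
    """
    seconds = read_kb / READ_KB_PER_S + written_kb / WRITE_KB_PER_S
    return seconds, requested_kb / seconds

# Fresh drive: 12KB requested, nothing to read, 12KB written.
print(effective_write_speed(12, 0, 12))   # (12.0, 1.0)

# Used drive: 12KB requested, 12KB read back, whole 20KB block written.
seconds, speed = effective_write_speed(12, 12, 20)
print(seconds, round(speed, 2))           # 26.0 0.46
```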

Are things starting to make sense now? This is why the Intel X25-M and other SSDs get slower the more you use them, and it’s also why the write speeds drop the most while the read speeds stay about the same. When writing to an empty page the SSD can write very quickly, but when writing to a page that already has data in it there’s additional overhead that must be dealt with, which reduces the write speeds.

250 Comments


Erickffd - Friday, March 20, 2009 - link

Also created an account just to post this comment. Really impressive and well-done article! Will stay tuned for further developments and reviews. Thank you so much :)

Also... very impressed by OCZ's response and commitment to end users' needs and product quality assurance (unfortunately not so common these days among other companies). I will certainly buy my next SSDs from them to reward and support their healthy policy.

Be well! ;)

Gasaraki88 - Friday, March 20, 2009 - link

This truly was a GREAT article. I enjoyed reading it and it was very informative. Thank you so much. That's why Anandtech is the best site out there.

davidlants - Friday, March 20, 2009 - link

This is one of the best tech articles I have ever read, I created an account just to post this comment. I've been a fan of Anandtech for years and articles like this (and the RV700 article from a while back) show the truly unique perspective and access that Anand has that simply no other tech site can match. GREAT WORK!!!

Zak - Friday, March 20, 2009 - link

I just got the Apex. I'd probably cough up more dough for the Vertex after reading this. However, I've run it for two days as my system disk in MacPro and haven't noticed any issues, it's really fast. But I guess I'll get Vertex for my Windows 7 build.

Z.

Nemokrad - Friday, March 20, 2009 - link

What I find intriguing about this article is that these smaller manufacturers do not do real world internal testing for these things. They should not need 3rd parties like you to figure this shit out for them. Maybe now OCZ will learn what they need to do for the future.

JonasR - Friday, March 20, 2009 - link

Thanks for an excellent article. I have one question: does anyone know which controller is being used in the new Patriot 256GB V.3 SSD?

tgwgordon - Friday, March 20, 2009 - link

Anyone know if the Vertex Anand used had 32M or 64M cache?

Dennis Travis - Friday, March 20, 2009 - link

Excellent and informative article as always Anand. Thanks so much for posting the truth!!

IsLNdbOi - Friday, March 20, 2009 - link

Can't remember what page it was, but you showed some charts on the performance of SSDs at their lowest possible performance levels.

At their lowest possible performance levels are they still faster than the 300GB Raptor?

Edgemeal - Friday, March 20, 2009 - link

It's too bad Windows and applications don't let you select where all the data that needs to be updated and saved is stored. If that was an option, an SSD could be used to only load data (EXE files and support files) and an HDD could be used to store files that are updated frequently. Web browsers, for example, are constantly caching files; from the sound of this article that would kill the performance of an SSD in no time.

Great article, I'll stick to HDDs for now.