The SSD Anthology: Understanding SSDs and New Drives from OCZ

by Anand Lal Shimpi on March 18, 2009 12:00 AM EST. Posted in Storage

Random Read/Write Performance

Arguably much more important to any PC user than sequential read/write performance is random access performance. It's not often that you're writing large files sequentially to your disk, but you do encounter tons of small file reads/writes as you use your PC.

To measure random read/write performance I created an Iometer script that peppered the drive with random requests at an IO queue depth of 3 (to add some multitasking spice to the test). The write test was performed over an 8GB range on the drive, while the read test was performed across the whole drive. I ran each test for 3 minutes.
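To make the access pattern concrete, here is a minimal Python sketch of how request offsets for a test like this could be chosen. It is not the Iometer script used for these results: the 4KB request size is assumed from the table later on this page, the function name `random_offsets` is hypothetical, and the queue depth of 3 is not modeled at all.

```python
import random

REQUEST_SIZE = 4 * 1024     # 4KB requests (assumption, taken from the 4KB table on this page)
SPAN_BYTES = 8 * 1024**3    # 8GB range used for the write test

def random_offsets(span_bytes, request_size, count, seed=None):
    """Yield `count` byte offsets, each aligned to request_size, chosen uniformly in the span."""
    rng = random.Random(seed)
    slots = span_bytes // request_size   # number of distinct aligned positions in the span
    for _ in range(count):
        yield rng.randrange(slots) * request_size

# A handful of offsets a random write pass might touch (seeded for repeatability).
offsets = list(random_offsets(SPAN_BYTES, REQUEST_SIZE, count=5, seed=1))
```

Because every offset is independent of the last one, a hard drive has to seek for nearly every request, which is why this kind of workload separates SSDs from mechanical disks so sharply.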

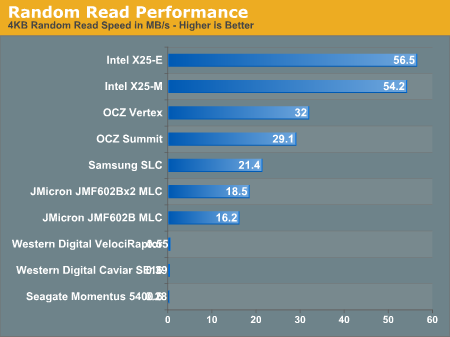

The three hard drives all posted scores below 1MB/s and thus aren't visible on our graph above. This is where SSDs shine and no hard drive, regardless of how many you RAID together, can come close.

The two Intel drives top the charts and maintain a huge lead. The OCZ Vertex actually beats out the more expensive (and unreleased) Summit drive with a respectable 32MB/s transfer rate here. Note that the Vertex is also faster than last year's Samsung SLC drive that everyone was selling for $1000. Even the JMicron drives do just fine here.

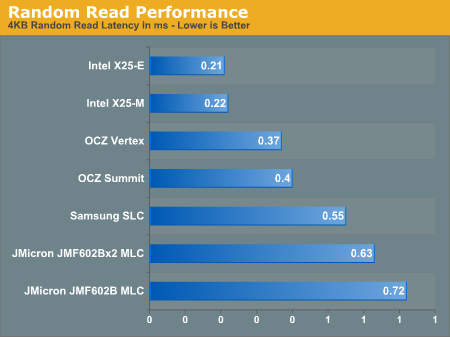

If we look at latency instead of transfer rate it helps put things in perspective:

Read latencies for hard drives have always been measured in several ms, but every single SSD here manages to complete random reads in less than 1ms under load.
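Transfer rate and latency are two views of the same measurement. As a rough sketch (this is not how Iometer itself reports latency), Little's law says the average time a request spends in the system is about the queue depth divided by the request rate. The 4KB request size for the read test, the 1MB = 1024KB convention and the function names below are assumptions made for illustration.

```python
def iops_from_throughput(mb_per_s, request_kb=4):
    """Convert a transfer rate to requests per second (assumes 1MB = 1024KB)."""
    return mb_per_s * 1024 / request_kb

def mean_latency_ms(mb_per_s, queue_depth=3, request_kb=4):
    """Little's law estimate: average time in system = queue depth / request rate."""
    return queue_depth / iops_from_throughput(mb_per_s, request_kb) * 1000.0

vertex_ms = mean_latency_ms(32.0)   # the Vertex's 32MB/s random read result
hdd_ms = mean_latency_ms(1.0)       # upper bound for a hard drive posting under 1MB/s
```

Under these assumptions a drive sustaining 32MB/s comes out well below 1ms per request, while anything under 1MB/s lands above 10ms, which matches the sub-1ms versus several-ms split described above.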

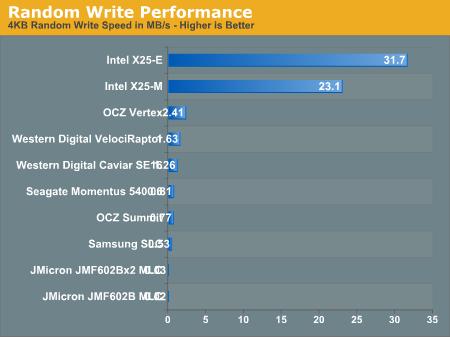

Random write speed is where we can thin the SSD flock:

Only the Intel drives and, to an extent, the OCZ Vertex post numbers visible on this scale. Let's go to a table to see everything in greater detail:

| Drive | 4KB Random Write Speed |
| --- | --- |
| Intel X25-E | 31.7 MB/s |
| Intel X25-M | 23.1 MB/s |
| JMicron JMF602B MLC | 0.02 MB/s |
| JMicron JMF602Bx2 MLC | 0.03 MB/s |
| OCZ Summit | 0.77 MB/s |
| OCZ Vertex | 2.41 MB/s |
| Samsung SLC | 0.53 MB/s |
| Seagate Momentus 5400.6 | 0.81 MB/s |
| Western Digital Caviar SE16 | 1.26 MB/s |
| Western Digital VelociRaptor | 1.63 MB/s |

Every single SSD other than the Intel X25-E, X25-M and OCZ's Vertex is slower than the 2.5" Seagate Momentus 5400.6 hard drive in this test. The Vertex, thanks to OCZ's tweaks, is now 48% faster than the VelociRaptor.
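The comparisons here are simple ratios of the 4KB random write numbers in the table. A short Python sketch reproduces them; the dictionary just restates the table, and the helper names `percent_faster` and `slower_than` are made up for this example.

```python
# 4KB random write speeds in MB/s, copied from the table above.
speeds = {
    "Intel X25-E": 31.7,
    "Intel X25-M": 23.1,
    "JMicron JMF602B MLC": 0.02,
    "JMicron JMF602Bx2 MLC": 0.03,
    "OCZ Summit": 0.77,
    "OCZ Vertex": 2.41,
    "Samsung SLC": 0.53,
    "Seagate Momentus 5400.6": 0.81,
    "Western Digital Caviar SE16": 1.26,
    "Western Digital VelociRaptor": 1.63,
}

def percent_faster(drive, baseline):
    """How much faster `drive` is than `baseline`, as a percentage."""
    return (speeds[drive] / speeds[baseline] - 1) * 100

def slower_than(baseline):
    """Names of all drives that post a lower number than `baseline`."""
    return sorted(name for name, s in speeds.items() if s < speeds[baseline])

vertex_vs_raptor = round(percent_faster("OCZ Vertex", "Western Digital VelociRaptor"))  # 48
behind_momentus = slower_than("Seagate Momentus 5400.6")
```

`behind_momentus` contains the two JMicron drives, the OCZ Summit and the Samsung SLC drive: all four are SSDs, and all four lose to a 5400RPM notebook hard drive in this test.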

The Intel drives are of course architected for the type of performance needed on a desktop/notebook and thus they deliver very high random write performance.

Random write performance is merely one corner of the performance world. A drive needs good sequential read, sequential write, random read and random write performance. The fatal mistake is that most vendors ignore random write performance and simply try to post the best sequential read/write speeds; doing so simply produces a drive that's undesirable.

While the Vertex is slower than Intel's X25-M, it's also about half the price per GB. And note that the Vertex is still 48% faster than the VelociRaptor here, and multiple times faster in the other tests.

250 Comments


punjabiplaya - Wednesday, March 18, 2009

Great info. I'm looking to get an SSD but was put off by all these setbacks. Why should I put away my HDDs and get something a million times more expensive that stutters?

This article is why I visit AT first.

Hellfire26 - Wednesday, March 18, 2009

Anand, when you filled up the drives to simulate a full drive, did you also write to the extended area that is reserved? If you didn't, wouldn't the Intel SLC drive (as an example) not show as much of a performance drop, versus the MLC drive? As you stated, Intel has reserved more flash memory on the SLC drive, above the stated SSD capacity.

I also agree with GourdFreeMan, that the physical block size needs to be reduced. Due to the constant erasing of blocks, the Trim command is going to reduce the life of the drive. Of course, drive makers could increase the size of the cache and delay using the Trim command until the number of blocks to be erased equals the cache available. This would more efficiently rearrange the valid data still present in the blocks that are being erased (less writes). Microsoft would have to design the Trim command so it would know how much cache was available on the drive, and drive makers would have to specifically reserve a portion of their cache for use by the Trim command.

I also like Basilisk's comment about increasing the cluster size, although if you increase it too much, you are likely to be wasting space and increasing overhead. Surely, even if MS only doubles the cluster size for NTFS partitions to 8KB, write cycles to SSDs would be reduced. Also, there is the difference between 32bit and 64bit operating systems to consider. However, I don't have the knowledge to say whether Microsoft can make these changes without running into serious problems with other aspects of the operating system.

Anand Lal Shimpi - Wednesday, March 18, 2009

I only wrote to the LBAs reported to the OS. So on the 80GB Intel drive that's from 0 - 74.5GB.

I didn't test the X25-E as extensively as the rest of the drives so I didn't look at performance degradation as closely just because I was running out of time and the X25-E is sooo much more expensive. I may do a standalone look at it in the near future.

Take care,

Anand

gss4w - Wednesday, March 18, 2009

Has anyone at Anandtech talked to Microsoft about when the "Trim" command will be supported in Windows 7? Also it would be great if you could include some numbers from Windows 7 beta when you do a follow-up.

One reason I ask is that I searched for "Windows 7 ssd trim" and I saw a presentation from WinHEC that made it sound like support for the trim command would be a requirement for SSD drives to meet the Windows 7 logo requirements. I would think if this were the case then Windows 7 would have support for trim. However, this article made it sound like support for Trim might not be included when Windows 7 is initially released, but would be added later.

ryedizzel - Thursday, March 19, 2009

I think it is obvious that Windows 7 will support TRIM. The bigger question this article points out is whether or not the current SSDs will be upgradeable via firmware, which is more important for consumers wanting to buy one now.

Martimus - Wednesday, March 18, 2009

It took me an hour to read the whole thing, but I really enjoyed it. It reminded me of the time I spent testing circuitry and doing root cause analysis.

alpha754293 - Wednesday, March 18, 2009

I think that it would be interesting if you were to be able to test the drives for the "desktop/laptop/consumer" front by writing an 8 GiB file using 4 kiB block sizes, etc. for the desktop pattern and also to test the drive then with larger sizes and a larger block size for the server/workstation pattern as well.

You present some very very good arguments and points, and I found your article to be thoroughly researched and well put.

So I do have to commend you on that. You did an excellent job. It is thoroughly enjoyable to read.

I'm currently looking at a 4x 256 GB Samsung MLC on Solaris 10/ZFS for apps/OS (for PXE boot), and this does a lot of the testing; but I would be interested to see how it would handle more server-type workloads.

korbendallas - Wednesday, March 18, 2009

If the implementation of the Trim command is as you described here, it would actually kind of suck.

"The third step was deleting the original 4KB text file. Since our drive now supports TRIM, when this deletion request comes down the drive will actually read the entire block, remove the first LBA and write the new block back to the flash:"

First of all, it would create a new phenomenon called Erase Amplification. This would negatively impact the lifetime of a drive.

Secondly, you now have worse delete performance.

Basically, an SSD 4kB block can be in 3 different states: erased, data, garbage. A block enters the garbage state when a block is "overwritten" or the Trim command marks the contents as invalid.

The way I would imagine it working, marking block content as invalid is all the Trim command does.

Instead the drive will spend idle time finding the 512kB pages with the most garbage blocks. Once such a page is found, all the data blocks from that page would be copied to another page, and the page would be erased. Doing it in this way maximizes the number of garbage blocks being converted to erased.
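The scheme korbendallas describes can be sketched in a few lines of Python. This is only an illustration of the comment, using its own naming (small "blocks" grouped into larger "pages"); it is not how any shipping controller is known to work. The pages are shrunk to 4 blocks each for readability, the function names are hypothetical, and live data is relocated into any erased blocks on other pages rather than strictly a single destination page.

```python
ERASED, DATA, GARBAGE = "erased", "data", "garbage"

def trim(pages, page_idx, block_idx):
    """Trim only marks a data block as garbage; nothing is erased yet."""
    if pages[page_idx][block_idx] == DATA:
        pages[page_idx][block_idx] = GARBAGE

def collect_once(pages):
    """Idle-time step: reclaim the page holding the most garbage blocks.

    Returns the index of the erased page, or None if nothing could be reclaimed.
    """
    victim = max(range(len(pages)), key=lambda i: pages[i].count(GARBAGE))
    if pages[victim].count(GARBAGE) == 0:
        return None                      # no garbage anywhere, nothing to do
    live = pages[victim].count(DATA)     # blocks that must be copied out first
    free_slots = [(p, b) for p in range(len(pages)) if p != victim
                  for b, state in enumerate(pages[p]) if state == ERASED]
    if len(free_slots) < live:
        return None                      # not enough erased space to relocate live data
    for p, b in free_slots[:live]:
        pages[p][b] = DATA               # copy a live block to its new home
    pages[victim] = [ERASED] * len(pages[victim])   # erase the whole page at once
    return victim

pages = [
    [DATA, GARBAGE, GARBAGE, DATA],
    [DATA, DATA, GARBAGE, DATA],
    [ERASED, ERASED, ERASED, ERASED],
]
trim(pages, 0, 0)                # a delete arrives: block 0 of page 0 becomes garbage
reclaimed = collect_once(pages)  # page 0 now has the most garbage, so it is chosen
```

Picking the page with the most garbage means the fewest live blocks have to be copied per erase, which is the point of the comment: the cost of a delete is deferred to idle time and the extra writes are kept to a minimum.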

alpha754293 - Wednesday, March 18, 2009

BTW...you might be able to simulate the drive as well using Cygwin where you go to the drive and run the following:

$ dd if=/dev/random of=testfile bs=1024k count=76288

I'm sure that you can come up with fancier shell scripts and stuff that uses the random number generator for the offsets (and if you really want it to work well, partition it so that when it does it, it takes up the entire initial 74.5 GB partition, and when you're done "dirtying" the data using dd and offset in a random pattern, grow the partition to take up the entire disk again.)

Just as a suggestion for future reference.

I use parts of that to some (varying) degree for when I do my file/disk I/O subsystem tests.

nubie - Wednesday, March 18, 2009

I should think that most "performance" laptops will come with a Vertex drive in the near future.

Finally a performance SSD that comes near mainstream pricing.

Things are looking up, if more manufacturers get their heads out of the sand we should see prices drop as competition finally starts breeding excellence.