The Dark Knight: Intel's Core i7

by Anand Lal Shimpi & Gary Key on November 3, 2008 12:00 AM EST - Posted in CPUs

Nehalem.

Nuh - hay - lem

At least that's how Intel PR pronounces it.

I've been racking my brain for the past month over how best to review this thing and what angle to take. It's tough. You see, with Conroe the approach was simple: the Pentium 4 was terrible, AMD proudly wore its crown, and Intel came in and turned everyone's world upside down. With Nehalem, the world is fine; it doesn't need fixing. AMD's pricing is quite competitive, Intel's performance is solid, power consumption isn't getting out of control... things are nice.

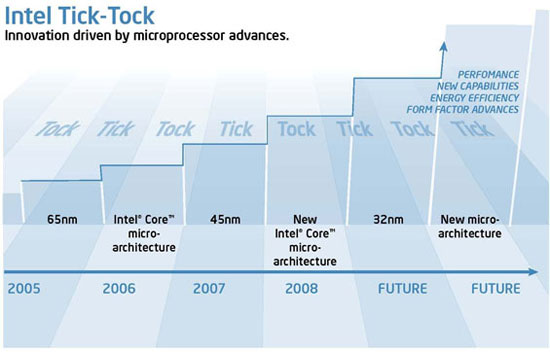

But we've got that pesky tick-tock cadence and things have to change for the sake of change (or more accurately, technological advancement, I swear I'm not getting cynical in my old age):

2008, that's us, that's Nehalem.

Could Nehalem ever be good enough? It's the first tock after Conroe, which is like going on stage after the late Richard Pryor: not an enviable position to be in. Inevitably Nehalem won't have the same impact that Conroe did, but what could Intel possibly bring to the table that it hasn't already?

Let's go ahead and get started; this is going to be interesting...

Nehalem's Architecture - A Recap

I spent 15 pages and thousands of words explaining Intel's Nehalem architecture in detail already, but what I'm going to try to do now is summarize that in a page. If you want greater detail please consult the original article, but here are the CliffsNotes.

Nehalem

Nehalem, as I've mentioned countless times before, is a "tock" processor in Intel's tick-tock cadence. That means it's a new microarchitecture but based on an existing manufacturing process, in this case 45nm.

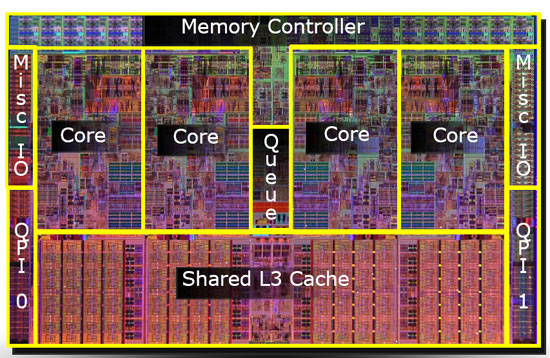

A quad-core Nehalem is made up of 731M transistors, down from 820M in Yorkfield, the current quad-core Core 2s based on the Penryn microarchitecture. The die size has gone up, however, from 214 mm^2 to 263 mm^2. That's fewer transistors, but less densely packed ones. Part of this is due to a reduction in cache size and part of it is due to a fundamental rearchitecting of the microprocessor.
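
To put a number on "less densely packed," here is a quick back-of-the-envelope sketch in Python using only the transistor counts and die sizes quoted above. The dictionary keys and the `density` helper are just illustrative names, and this is an average over the whole die; it says nothing about how density varies between cache and logic.

```python
# Transistor count (millions) and die area (mm^2), as quoted in the article.
chips = {
    "Yorkfield": (820, 214),
    "Nehalem": (731, 263),
}

def density(transistors_m, area_mm2):
    """Average density in millions of transistors per mm^2."""
    return transistors_m / area_mm2

densities = {name: density(*spec) for name, spec in chips.items()}
for name, d in densities.items():
    print(f"{name}: {d:.2f}M transistors per mm^2")

# How tightly packed Nehalem is relative to Yorkfield, on average.
ratio = densities["Nehalem"] / densities["Yorkfield"]
print(f"Nehalem packs about {ratio:.0%} as many transistors per mm^2")
```

By this rough measure Nehalem's average density works out to a bit under three quarters of Yorkfield's, which fits the explanation that less of the die is spent on dense cache.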

Nehalem is Intel's first "native" quad-core design, meaning that all four cores are a part of one large, monolithic die. Each core has its own L1 and L2 caches, and all four sit behind a large 8MB L3 cache. The L1 cache remains unchanged in size from Penryn (the current 45nm Core 2 architecture), although it is slower at 4 cycles vs. 3. The L2 cache gets a little faster but also gets a lot smaller at 256KB per core, whereas the lowest end Penryns split 3MB of L2 between two cores. The L3 cache is a new addition and serves as a common pool that all four cores can access, which will really help in cache-intensive multithreaded applications (such as those you'd encounter in a server). Nehalem also gets a three-channel, on-die DDR3 memory controller, if you haven't heard by now.
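
As a rough illustration of how the cache budget shifts, the sketch below tallies L2 and L3 capacity from the figures in the paragraph above. The `CacheLayout` class and its field names are made up for this example, L1 is left out because its size isn't given here, and splitting a shared L2 evenly between cores is a simplification (a shared cache can be used unevenly in practice).

```python
from dataclasses import dataclass

MB = 1024  # work in KB throughout

@dataclass
class CacheLayout:
    name: str
    cores: int
    l2_kb: int         # capacity of one L2 cache
    cores_per_l2: int  # how many cores share each L2
    l3_kb: int = 0     # shared L3 capacity, 0 if there is none

    def l2_total_kb(self):
        # One L2 per group of cores that share it.
        return self.l2_kb * (self.cores // self.cores_per_l2)

    def l2_share_per_core_kb(self):
        # Even split of a shared L2; a simplification.
        return self.l2_kb / self.cores_per_l2

    def l2_plus_l3_kb(self):
        return self.l2_total_kb() + self.l3_kb

nehalem = CacheLayout("Nehalem quad-core", cores=4, l2_kb=256,
                      cores_per_l2=1, l3_kb=8 * MB)
penryn_low = CacheLayout("Lowest end Penryn dual-core", cores=2,
                         l2_kb=3 * MB, cores_per_l2=2)

for chip in (nehalem, penryn_low):
    print(f"{chip.name}: {chip.l2_share_per_core_kb():.0f}KB L2 per core, "
          f"{chip.l2_plus_l3_kb() / MB:.0f}MB L2+L3 total")
```

The takeaway is the trade: each Nehalem core gives up most of its nearby L2 in exchange for a much larger pool that all four cores share.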

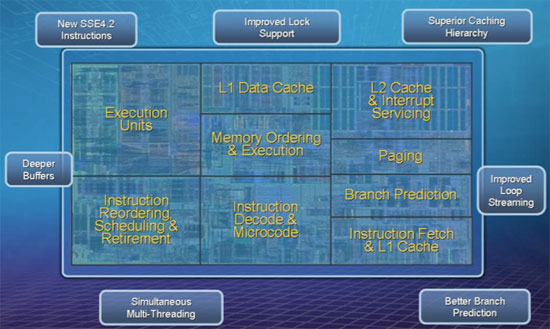

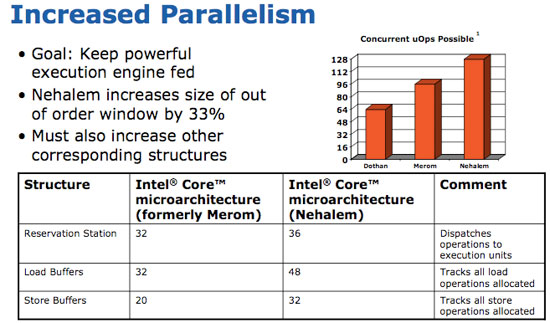

At the core level, everything gets deeper in Nehalem. The CPU is just as wide as before and the pipeline stages haven't changed, but the reservation station, load and store buffers and OoO scheduling window all got bigger. Peak execution power hasn't gone up, but Nehalem should be much more efficient at using its resources than any Core microarchitecture before it.

Once again, to address the server space, Nehalem increases the size of its TLBs and adds a new second-level unified TLB. Branch prediction is also improved, but primarily for database applications.

Hyper-Threading is back in its typical 2-way fashion, so a single quad-core Nehalem can work on 8 threads at once. Here we have yet another example of Nehalem making more efficient use of the execution resources rather than simply throwing more transistors at the problem. With Penryn, Intel hit nearly 1 billion transistors for a desktop quad-core chip. Clearly Nehalem was an attempt both to address the server market and to make more efficient use of those transistors before the next big jump across the billion transistor mark.

73 Comments


anand4happy - Sunday, February 8, 2009 - link

saw many thing but this is the thing something dfferentsd4us.blogspot.com/2009/01/intel-viivintel-975x-express-955x.html

nidhoggr - Monday, November 10, 2008 - link

I can't find that information on the test setup page.

nidhoggr - Monday, November 10, 2008 - link

test not text :)

puffpio - Wednesday, November 5, 2008 - link

would you guys consider rebenchmarking?

from the x264 changelog since the nehalem specific optimizations:

"Overall speed improvement with Nehalem vs Penryn at the same clock speed is around 40%."

anartik - Wednesday, November 5, 2008 - link

Good review and better than Tom's overall. However, Tom's stumbled on something that changed my mind about gaming with Nehalem. While Anand's testing shows minimal performance gains (and came to the not good for games conclusion), Tom's approached it with 1-4 GPUs in SLI or Crossfire. All I can say is the performance gains with Nvidia cards in SLI were stunning. Maybe the platform favors SLI or Nvidia had a driver advantage in licensing SLI to Intel. Either way Nehalem and SLI smoked ATI and the current 3.2 extreme quad across the board.

dani31 - Wednesday, November 5, 2008 - link

I know it wouldn't change any conclusion, but since we discuss bleeding edge Intel hardware it would have been nice to see the same in the AMD testbed.

Using a SB600 mobo (instead of the acclaimed SB750) and an old set of drivers makes it look like the AMD numbers were simply pasted from an old article.

Casper42 - Tuesday, November 4, 2008 - link

Something I think you guys missed in your article/conclusion is the fact that we're now able to pair a great CPU with a pretty damn good North/South Bridge AND SLI.

I found that the 680/780/790 featureset is plainly lacking and that the Intel ICH9R/10R seems to always perform better and has more features. If any doubt, look at Matrix RAID vs nVidia's RAID. Night and day difference, especially with RAID5.

The problem with the X38/X48 was you got a great board but were effectively locked into ATI for high end Gaming.

Now we have the best of both worlds. You get ICH10R, a very well performing CPU (even the 920 beats most of the Intel Quad Core lineup) AND you can run 1/2/3 nVidia GPUs on the machine. In my opinion, this is a winning combination.

The only downside I see is board designs seem to suck more and more.

With socket 1366 being so massive and 6 DIMM slots on the Enthusiast/Gamer boards, we're seeing not only 6 expansion slots (down from the standard of 7) but in most boards I have seen pics of, the top slot is an x1 so they can wedge it next to the X58 IOH, which means you're left with only 5 slots for other cards. Using 3 dual slot cards is out of the question without a massive 10 slot case (of which there are only like 3-5 on the market) and even if you can wedge 2 or 3 dual slot cards into the machine, you have almost zero expansion card slots should you ever need them.

Then we get to all the cooling crap surrounding the CPU. ALL these designs rely on a top down traditional cooler and if you decide to use a highly effective tower cooling solution, all the little heatsink fins on the Northbridge and power regulators around the CPU get very little or no airflow. Now you're in there adding puny little 40/60mm fans that produce more noise than airflow, not to mention that the DIMMs are hardly ever cooled in today's board designs.

Call me a cooling purist if you will, but I much prefer traditional front to back airflow and all this side intake top exhaust stuff just makes me cringe. I personally run a Tyan Thunder K8WE with 2 Hyper6+ coolers and the procs and RAM are all cooled front to back. Intake and exhaust are 120mm and I have a bit of an air channel in which that airflow never goes near the expansion card slots below, which by the way have a 92mm fan up front pushing air in across the drives and another 92mm fan clipped onto the expansion slots in the back pulling it back out.

I don't know how to resolve these issues, but I think someone surely needs to because IMHO it's getting out of control.

lemonadesoda - Tuesday, November 4, 2008 - link

"Looking at POV-Ray we see a 30% increase in performance for a 12% increase in total system power consumption, that more than exceeds Intel's 2:1 rule for performance improvement vs. increase in power consumption."

You can't use "total system power", but must make the best estimate of CPU power draw. Why? Because imagine if you had a system with 6 sticks of RAM, 4 HDDs, etc. you would have ever increasing power figures that would make the ratio of increased power consumption (a/b) smaller and smaller!

If you take your figures and subtract (a guesstimate of) 100W for non CPU power draw, then you DON'T get the Intel 2:1 ratio at all!

The figures need revisiting.

AnnonymousCoward - Thursday, November 6, 2008 - link

Performance vs power appears to linearly increase with HT. Using the 100W figure for non-CPU draw means a 25% power increase, which is close to the 30% performance.

Unless we're talking about servers, I think looking at power draw per application is silly. Just do idle power, load power, and maybe some kind of flops/watt benchmark just for fun.

silversound - Tuesday, November 4, 2008 - link

great article, tomshardware reviews are always pro intel and nvidia, not sure if they get paid $ to support them. anandtech is always neutral, thx!