Intel X25-M SSD: Intel Delivers One of the World's Fastest Drives

by Anand Lal Shimpi on September 8, 2008 4:00 PM EST - Posted in Storage

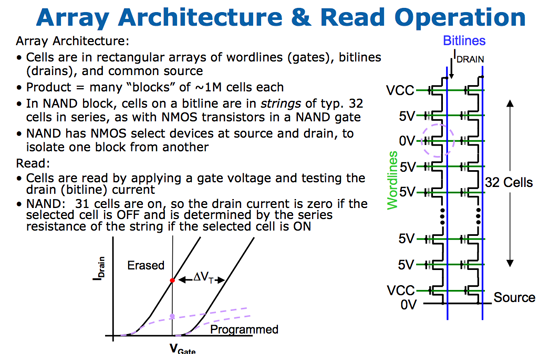

The Flash Hierarchy & Data Loss

We've already established that a flash cell can store either one or two bits depending on whether it's an SLC or MLC device. Group a bunch of cells together and you've got a page. A page is the smallest structure you can program (write to) in a NAND flash device. In the case of most MLC NAND flash, each page is 4KB. A block consists of a number of pages; in the Intel MLC SSD a block is 128 pages (128 pages x 4KB per page = 512KB per block = 0.5MB). A block is the smallest structure you can erase. So when you write to an SSD you can write 4KB at a time, but when you erase from an SSD you have to erase 512KB at a time. I'll explore that a bit further in a moment, but let's look at what happens when you erase data from an SSD.
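The page and block arithmetic above can be written out as a short Python sketch. The constants come straight from the figures quoted for the Intel MLC SSD; the helper name `pages_needed` is just an illustrative choice, not anything from Intel.

```python
PAGE_SIZE_KB = 4        # smallest unit that can be programmed (written)
PAGES_PER_BLOCK = 128   # figure quoted for the Intel MLC SSD

# A block is the smallest unit that can be erased.
BLOCK_SIZE_KB = PAGE_SIZE_KB * PAGES_PER_BLOCK


def pages_needed(file_size_kb):
    """Number of whole pages a file of the given size occupies."""
    return -(-file_size_kb // PAGE_SIZE_KB)  # ceiling division


print(BLOCK_SIZE_KB)     # 512 (KB), i.e. 0.5MB
print(pages_needed(8))   # 2 pages for an 8KB file
```

The gap between the 4KB write granularity and the 512KB erase granularity is what the rest of this page is about.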

Whenever you write data to flash we go through the same iterative programming process again. Create an electric field, electrons tunnel through the oxide and the charge is stored. Erasing the data causes the same thing to happen but in the reverse direction. The problem is that the more times you tunnel through that oxide, the weaker it becomes, eventually reaching a point where it will no longer prevent the electrons from doing whatever they want to do.

On MLC flash that point is reached after about 10,000 erase/program cycles. With SLC it's 100,000 thanks to the simplicity of the SLC design. With a finite lifespan, SSDs have to be very careful in how and when they choose to erase/program each cell. Note that you can read from a cell as many times as you want to, that doesn't reduce the cell's ability to store data. It's only the erase/program cycle that reduces life. I refer to it as a cycle because an SSD has no concept of just erasing a block, the only time it erases a block is to write new data. If you delete a file in Windows but don't create a new one, the SSD doesn't actually remove the data from flash until you're ready to write new data.

Now going back to the disparity between how you program and how you erase data on an SSD: you program in pages and you erase in blocks. Say you save an 8KB file and later decide that you want to delete it; it could just be a simple note you wrote for yourself that you no longer need. When you saved the file, it'd be saved as two pages in the flash memory. When you go to delete it, however, the SSD marks the pages as invalid but it won't actually erase the block. The SSD will wait until a certain percentage of pages within a block are marked as invalid before copying any valid data to new pages and erasing the block. The SSD does this to limit the number of times an individual block is erased, and thus prolong the life of your drive.

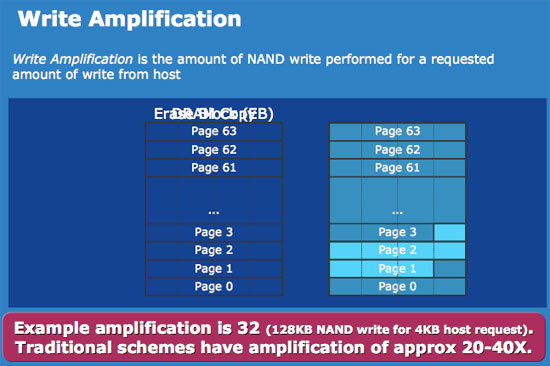
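Here's a minimal Python sketch of that delete-then-reclaim behavior. It is a toy model, not how any real controller is implemented: the `Block` class, the `reclaim` helper and especially the 75% `GC_THRESHOLD` are assumptions made up for illustration, since the text only says "a certain percentage".

```python
PAGES_PER_BLOCK = 128
GC_THRESHOLD = 0.75  # hypothetical: reclaim once 75% of a block's pages are invalid


class Block:
    def __init__(self):
        # Each page is "free", "valid" or "invalid".
        self.pages = ["free"] * PAGES_PER_BLOCK
        self.erase_count = 0

    def write(self, n_pages):
        """Program the next n free pages and return their indices."""
        free = [i for i, state in enumerate(self.pages) if state == "free"]
        if len(free) < n_pages:
            raise ValueError("not enough free pages in this block")
        chosen = free[:n_pages]
        for i in chosen:
            self.pages[i] = "valid"
        return chosen

    def invalidate(self, indices):
        """Deleting data only marks pages invalid; nothing is erased."""
        for i in indices:
            self.pages[i] = "invalid"

    def needs_gc(self):
        return self.pages.count("invalid") / PAGES_PER_BLOCK >= GC_THRESHOLD


def reclaim(old, spare):
    """Copy still-valid pages to a spare block, then erase the old block."""
    moved = old.pages.count("valid")
    spare.write(moved)
    old.pages = ["free"] * PAGES_PER_BLOCK
    old.erase_count += 1
    return moved


block = Block()
note = block.write(2)      # the 8KB note occupies two 4KB pages
block.invalidate(note)     # delete: pages are marked invalid
print(block.erase_count)   # 0, the block has not been erased
print(block.needs_gc())    # False, far below the threshold
```

Only once enough of the block is invalid would `reclaim` be worth calling, which is what keeps the erase count low.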

Not all SSDs handle deletion requests the same way; how and when the controller decides to erase a block with invalid pages determines the write amplification of your device. In the case of a poorly made SSD, if you simply wanted to change a 16KB file the controller could conceivably read the entire block into main memory, change the four pages, erase the block from the SSD and then write the new block with the four changed pages. Using the page/block sizes from the Intel SSD, this would mean that a 16KB write would actually result in 512KB of writes to the SSD - a write amplification factor of 32x.

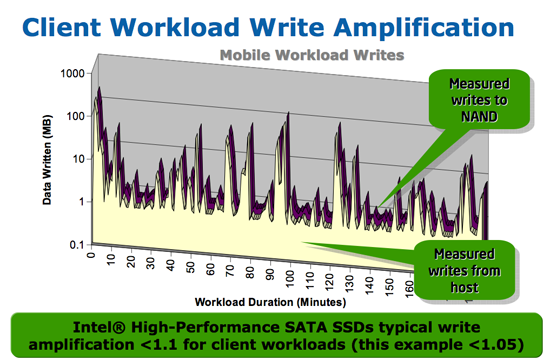
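The 32x figure for the poorly made SSD is just the ratio of data physically written to flash to data the host asked to write. A quick Python check, using the Intel page/block sizes from the text (the function name is an illustrative choice):

```python
PAGE_SIZE_KB = 4
PAGES_PER_BLOCK = 128
BLOCK_SIZE_KB = PAGE_SIZE_KB * PAGES_PER_BLOCK  # 512KB


def write_amplification(host_kb, flash_kb):
    """Data written to flash divided by data the host requested."""
    return flash_kb / host_kb


host_kb = 16               # the file change the user actually made
flash_kb = BLOCK_SIZE_KB   # naive controller rewrites the whole block

print(write_amplification(host_kb, flash_kb))  # 32.0
```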

At this point we don't have any data from any of the other SSD controller makers on how they handle situations like this, but Intel states that traditional SSD controllers suffer from write amplification in the 20 - 40x range, which reduces the longevity of their drives. Intel states that on typical client workloads its write amplification factor is less than 1.1x; in other words, you're writing less than 10% more data than you need to. The write amplification factor doesn't mean much on its own; what matters is the longevity of the drive, and there's one more factor that contributes there.
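To get a feel for why write amplification matters for longevity, here's a back-of-the-envelope Python sketch. It is not Intel's endurance model: it assumes every block absorbs an equal share of writes, and the 80GB capacity is a hypothetical value picked purely for illustration. Only the 10,000-cycle MLC figure and the 1.1x and 20 - 40x factors come from the text.

```python
def host_writes_before_wearout_tb(capacity_gb, pe_cycles, write_amp):
    """Rough upper bound on host data (in TB) writable before cells wear out.

    Assumes writes are spread perfectly evenly across all blocks.
    """
    total_flash_writes_gb = capacity_gb * pe_cycles
    return total_flash_writes_gb / write_amp / 1000  # GB -> TB (decimal)


CAPACITY_GB = 80      # hypothetical drive size, for illustration only
MLC_CYCLES = 10_000   # MLC erase/program cycles quoted in the text

for wa in (1.1, 20, 40):
    tb = host_writes_before_wearout_tb(CAPACITY_GB, MLC_CYCLES, wa)
    print(f"write amplification {wa}x: roughly {tb:.0f} TB of host writes")
```

Under these assumptions, going from 20x to 1.1x stretches the same flash across roughly 18 times as much host data.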

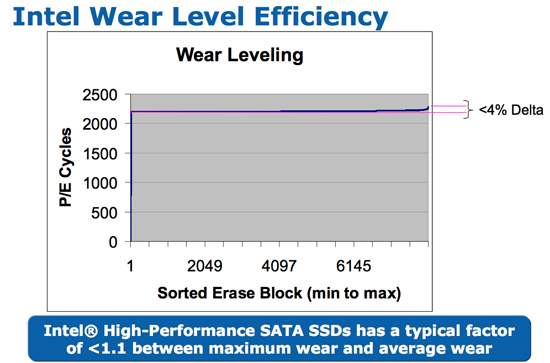

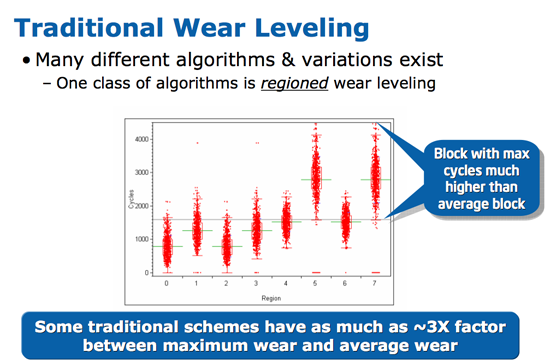

We've already established that with flash there are a finite number of times you can write to a block before it loses its ability to store data. SSDs are pretty intelligent and will use wear leveling algorithms to spread out block usage across the entirety of the drive. Remember that unlike mechanical disks, it doesn't matter where on an SSD you write to, the performance will always be the same. SSDs will thus attempt to write data to all blocks of the drive equally. For example, let's say you download a 2MB file to your brand new, never-been-used SSD, which gets saved to blocks 10, 11, 12 and 13. You realize you downloaded the wrong file and delete it, then go off to download the right file. Rather than write the new file to blocks 10, 11, 12 and 13, the flash controller will write to blocks 14, 15, 16 and 17. In fact, those four blocks won't get used again until every other block on the drive has been written to once. So while your MLC SSD may only have a lifespan of 10,000 cycles, it's going to last quite a while thanks to intelligent wear leveling algorithms.
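The 2MB download example can be modeled with a simple round-robin allocator. This is a sketch of the idea only, not Intel's algorithm: the class name, the 32-block drive and the `first_block=10` starting point are all assumptions chosen so the block numbers match the example.

```python
from collections import deque


class RoundRobinWearLeveler:
    """Toy allocator: freed blocks go to the back of the queue."""

    def __init__(self, n_blocks, first_block=0):
        order = list(range(first_block, n_blocks)) + list(range(first_block))
        self.free = deque(order)
        self.write_counts = [0] * n_blocks

    def allocate(self, n):
        """Hand out the n blocks that have waited longest since last use."""
        if n > len(self.free):
            raise RuntimeError("not enough free blocks")
        blocks = [self.free.popleft() for _ in range(n)]
        for b in blocks:
            self.write_counts[b] += 1
        return blocks

    def release(self, blocks):
        """Deleted data: blocks rejoin the queue at the very end."""
        self.free.extend(blocks)


drive = RoundRobinWearLeveler(n_blocks=32, first_block=10)
wrong_file = drive.allocate(4)   # 2MB file = four 512KB blocks
drive.release(wrong_file)        # user deletes the wrong download
right_file = drive.allocate(4)

print(wrong_file)   # [10, 11, 12, 13]
print(right_file)   # [14, 15, 16, 17]
```

Because released blocks rejoin at the end of the queue, blocks 10 through 13 are not handed out again until every other block has been written once.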

Intel's wear leveling efficiency, all blocks get used nearly the same amount

Bad wear leveling, presumably on existing SSDs, some blocks get used more than others

Intel's SSDs carry about a 4% wear leveling inefficiency, meaning that 4% of the blocks on an Intel SSD will be worn at a rate higher than the rest.

96 Comments


bharatwaja - Tuesday, September 9, 2008 - link

This is by far the best article I have ever read about SSDs... Anand you ROCK!!! and EVERYONE at ANANDTECH ROCKS!....Great article... Thank you........ you just saved me from spending $99 on a pretty much useless SSD.

[Takes a printout of Anand to worship]

hyperdoggy - Tuesday, September 9, 2008 - link

I noticed that ocz have released core v2 series of their ssd. It seems to come in a different size configurations than its 1st gen product, can you confirm if this is based on the same controller as its 1st gen product Anand? Also, i would love to see some ssd raid action since ssd does not lose its data when the array fails. I'm more interested to see if there is other limiting factor when you raid with such fast ssds, such as the motherboard chipset itself. If raiding two of those cheap $99 core 32gig ssd helps to reduce the write bug, it might be worthwhile buy, which was something i was thinking of doing till i read the review here.

And might i say, great review indeed Anand.

bytekeeper - Tuesday, September 9, 2008 - link

Hi there. There is a discussion in the OCZ support forum about the test results published in this article and the OCZ support staff is thinking that you've got a faulty drive. Check here:

http://www.ocztechnologyforum.com/forum/showthread...

Is there any chance you can re run the test with another Core drive or Supertalent/Patriot JMicron JMF602 victims?

Thanks.

Gary Key - Wednesday, September 10, 2008 - link

I posted results with a newly arrived Core drive straight off the shelf from Newegg in that thread. No changes to our initial results, although I will try a different controller later this week.

Anand Lal Shimpi - Tuesday, September 9, 2008 - link

In total I have four drives based on the JMF602 controller: one from OCZ, SuperTalent, Patriot and Silicon Power. Of the drives, the OCZ Core is the oldest. If you look at page 9 in the article I've got a table that has the SuperTalent, Silicon Power and OCZ Core drives in it. The Silicon Power and SuperTalent drive both perform worse than the OCZ.

The situation gets more interesting if you look at the Iometer results for the drives, which I didn't publish in great depth but I did run internally. Average write latency goes down tremendously on the SuperTalent drive, but max latency more than doubled. I suspect that the SuperTalent drive may use a newer version of the JMF602, but the result is a reduction in real world performance - at least in the test cases I ran.

The inability to complete an OS install also applies to other drives. As I mentioned in the article I had the same problem with the SuperTalent MLC drive in OS X.

While there are undoubtedly ways of attempting to lessen the impact of the JMF602's poor design, I believe only a new controller will actually completely address these problems.

Take care,

Anand

yottabit - Tuesday, September 9, 2008 - link

This is why I love your website. Lots of your articles are so in-depth they make me think I'm reading some grad school journal that I should be paying money for. I actually first saw the Intel SSD article on TomsHardware, but didn't even bother to read it because I imagined it was going to say something like "OMG INTEL SSD ROX LOLZ SO MUCH FASTER THAN OTHER HDS"

I come here expecting to get a detailed analysis, and that's always what you provide! Thank you so much.

My only gripe is you seem to have skipped a very basic test- dragging and dropping large files! You combine extracting files with other tests but you don't have any basic drag + drop tests I saw.

This rocks for the computer industry though. I personally couldn't believe so many people were buying the existing SSDs. This new Intel stuff looks like something that would actually make an improvement without compromises. I could certainly live with the capacity, I'm still running a 36GB raptor for my boot drive. Hah.

Dariusbird - Tuesday, September 9, 2008 - link

I'm proud to be working for the company producing these IC's. Nice work on the article.

therealnickdanger - Tuesday, September 9, 2008 - link

It would be very nice to see where on all these charts the best 5400RPM 2.5" SATA drive would sit. Most laptop users have 5400RPM drives, so this would be MOST EXCELLENT to see the benefits of all these drives in comparison - especially the now "crappy" JM-based MLC drives.

johncl - Tuesday, September 9, 2008 - link

I must congratulate Intel for addressing the issues with MLC and creating a brilliant product. Also thanks to the author of this article to point to the problem of MLC that seems to go by unnoticed by so many benchmark review sites. The initial price from intel is suggested retail price so I guess in a short while this should come down a bit, as its a bit over the top. As the author says, at around $400 this is a no-brainer for an enthusiast considering the improvement you will experience on your system. This is for me the most important advancement in computer technology in the last years and we are just starting to see the advantages of it. Besides the person sitting behind the keyboard, the slowest thing in your computer is the harddisk. You can swap CPUs and GPUs to your hearts desire, but eventually, perceived performance of a computer often boils down to how fast you get data in and out of system memory. SSDs will fix this technological void we have had for all these years with mechanical disks.

In general its about time we get rid of all mechanical parts in a computer, including fans. We need good cooling solutions built into our systems that rely on other means than rotating motors. If it means to stop the CPU speed race, then by all means do that, lets try to get todays performance but passively cooled. The SSD will walk hand in hand with this new trend in computing so we can get truly silent workstations. Amen to SSDs!

Gastrian - Tuesday, September 9, 2008 - link

I'm looking into creating a small multimedia PC where by I'll be archiving all my DVDs to DivX and running them through the PC instead of a DVD Player. Would I see any benefits in performance and acoustics (I don't want any noise from the PC interfering with my viewing) by having the OS on the Intel MLC SSD while storing the movies on a mechanical HDD?

Essentially I want to be able to sit down and browse my movies then pick one and watch it without having to worry about loading/transfer times and noise levels.