Lucid Hydra 100 - Enabling SLI/CrossFire on Any Platform

by Derek Wilson on August 20, 2008 12:00 AM EST - Posted in Trade Shows

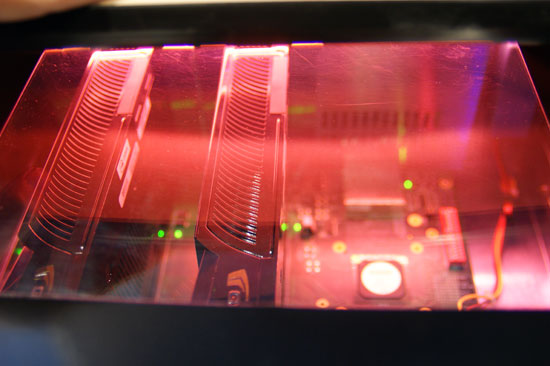

Well, the title is a little misleading. The Hydra 100 isn't enabling SLI or CrossFire: it is making them irrelevant and useless by replacing them with a better, GPU-independent multi-GPU solution. Lucid is a chip designer with lots of funding from Intel, and it is gearing up to sell hardware that enables truly scalable multi-GPU rendering.

While graphics is completely scalable and there are native solutions to extract near-linear performance gains in every case, NVIDIA and AMD have opted not to go down this path yet. It is quite difficult, as it involves the sharing of resources and more fine-grained distribution of the workload. SLI and CrossFire are band-aid solutions that don't scale well past 3 GPUs. Their very coarse-grained load balancing and the tricks that must be done to handle certain rendering techniques really hobble the inherent parallelism of graphics.
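For reference, the two coarse-grained modes that SLI and CrossFire typically rely on, alternating whole frames between GPUs and splitting each frame into horizontal bands, can be sketched in a few lines. This is a rough illustration only: the function names are hypothetical, and the even band split is a simplifying assumption rather than how either vendor's driver actually sizes its bands.

```python
def alternate_frame_gpu(frame_index, n_gpus):
    """Alternate-frame rendering: whole frames go to GPUs in round-robin order."""
    return frame_index % n_gpus


def split_frame_rows(height, n_gpus):
    """Split-frame rendering: cut the screen into horizontal bands, one per GPU.

    Returns a list of (first_row, end_row) pairs, end_row exclusive.
    The even split is an assumption made for illustration.
    """
    base, extra = divmod(height, n_gpus)
    bands = []
    start = 0
    for gpu in range(n_gpus):
        # Spread any leftover rows over the first few GPUs.
        rows = base + (1 if gpu < extra else 0)
        bands.append((start, start + rows))
        start += rows
    return bands


if __name__ == "__main__":
    print([alternate_frame_gpu(i, 2) for i in range(4)])
    print(split_frame_rows(1080, 2))
```

In both cases the unit of work is an entire frame or a large slice of one, which is what makes the balancing coarse.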

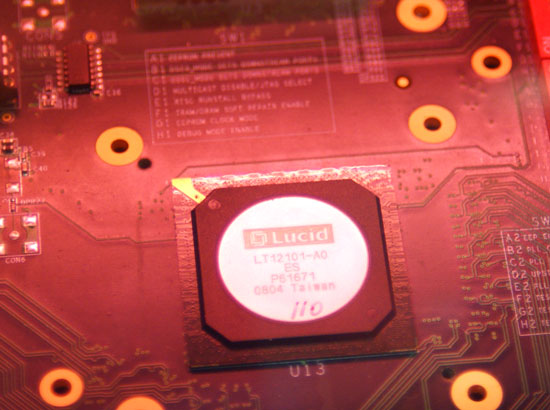

Lucid, with their Hydra Engine and the Hydra 100 chip, are going in a different direction. With a background in large data set analysis, these guys are capable of intercepting the DirectX or OpenGL command stream before it hits the GPU, analyzing the data, and dividing up the scene at an object level. Rather than rendering alternating frames, or splitting the screen horizontally, this part is capable of load balancing things like groups of triangles that are associated with a single group of textures and sending these tasks to whichever GPU it makes the most sense to render them on. The scene is composited after all GPUs finish rendering their parts and send the data back to the Lucid chip.
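Lucid hasn't disclosed how it actually does this, so the following is only a minimal sketch of the two steps described above, under stated assumptions: tasks are handed out greedily to whichever GPU would finish them soonest, and the partial frames are merged by keeping the nearest fragment at each pixel. The `Task`, `Gpu`, `distribute`, and `composite` names, the cost and speed figures, and both algorithms are hypothetical stand-ins, not the Hydra Engine's method.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """Hypothetical object-level task, e.g. triangles sharing one texture group."""
    name: str
    cost: float  # estimated rendering cost, arbitrary units


@dataclass
class Gpu:
    name: str
    speed: float  # relative throughput (assumed known); 2.0 is twice as fast as 1.0
    tasks: list = field(default_factory=list)
    busy: float = 0.0  # estimated time already committed to this GPU


def distribute(tasks, gpus):
    """Greedy balancing: largest tasks first, each to the GPU that finishes it soonest."""
    for task in sorted(tasks, key=lambda t: t.cost, reverse=True):
        best = min(gpus, key=lambda g: g.busy + task.cost / g.speed)
        best.tasks.append(task)
        best.busy += task.cost / best.speed
    return gpus


def composite(layers):
    """Merge per-GPU partial frames.

    Each layer is a list of pixels; a pixel is (depth, color) or None if that
    GPU drew nothing there. The fragment with the smallest depth wins.
    """
    frame = []
    for x in range(len(layers[0])):
        fragments = [layer[x] for layer in layers if layer[x] is not None]
        nearest = min(fragments, key=lambda p: p[0]) if fragments else None
        frame.append(nearest[1] if nearest else None)
    return frame


if __name__ == "__main__":
    gpus = [Gpu("fast", 2.0), Gpu("slow", 1.0)]
    work = [Task(f"batch{i}", c) for i, c in enumerate([8, 4, 4, 2, 2])]
    for gpu in distribute(work, gpus):
        print(gpu.name, [t.name for t in gpu.tasks], gpu.busy)
```

Because assignment is weighted by each GPU's speed, a mismatched pair ends up with similar finishing times rather than an equal number of tasks.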

The tasks that they load balance are even dynamically defined, though they haven't gone into a lot of detail with us at this point. We have another meeting scheduled with them today, so we'll see what happens there.

These guys say they always get near-linear scaling regardless of application, and that the scaling is not limited to any particular number of GPUs, meaning that 4 GPUs would actually see nearly 4x scaling and 10 GPUs would see nearly 10x scaling. The implications, if this actually works as advertised, are insane.
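To put the scaling claim in perspective, here is a small worked sketch using Amdahl's law, which relates speedup to the share of per-frame work that can actually be spread across GPUs. Lucid has not published figures, so the parallel fractions used here are assumptions chosen purely for illustration.

```python
def speedup(n_gpus, parallel_fraction):
    """Amdahl's law: speedup when only part of the work is spread over n GPUs."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_gpus)


def efficiency(n_gpus, parallel_fraction):
    """Share of ideal linear scaling achieved (1.0 means perfectly linear)."""
    return speedup(n_gpus, parallel_fraction) / n_gpus


if __name__ == "__main__":
    # Assumed fractions: fully parallel, and 95% parallel with 5% overhead.
    for fraction in (1.0, 0.95):
        for n in (2, 4, 10):
            print(f"{n} GPUs, {fraction:.0%} parallel: "
                  f"{speedup(n, fraction):.2f}x, "
                  f"{efficiency(n, fraction):.0%} efficient")
```

Under these assumptions, even a 5% non-distributable share (interception, analysis, compositing) is enough to pull 10 GPUs well below 10x, which is why the claimed numbers would be remarkable if they hold up.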

So why is Intel interested in this? Well, they could offer a platform solution through motherboards with this chip on them that delivers better multi-GPU scaling than either NVIDIA or AMD is capable of offering natively on its own platform. With the issues in getting NVIDIA SLI on Intel systems, this would really be a slap in the face for NVIDIA.

We will definitely bring you more when we know it. At this point it seems like a great idea, but the theory doesn't always line up with the execution. If they pull off what they say they can, it will revolutionize multi-GPU. We'll see what happens.

33 Comments

AggressorPrime - Wednesday, August 20, 2008 - link

We all know that SLI (and maybe CrossFire) takes a scene and loads it into the memory of each card. Say you have a 1GB scene and 2 512MB cards. The scene is not split into 2 512MB portions; instead, one half is sent to both cards to process, then the other half is sent to both cards to process. So you are limited in GPU RAM by the card with the lowest RAM in the GPU system. Is this chip going to use its own RAM (system RAM) to hold the entire scene and send parts of the scene to each GPU (so that if you have 2 1GB GPUs, each GPU can process a different 1GB of data from a 2GB scene)? Or does this chip not need to use RAM to split the scene into different parts so that GPUs can process without knowing the other parts? Or are we again limited for GPU RAM to the GPU that has the lowest RAM?

jnanster - Wednesday, August 20, 2008 - link

This is a system-on-a-chip. It must have complete control of the cards and monitor their individual output and limitations. Much more complicated processing than SLI/CF.

AggressorPrime - Wednesday, August 20, 2008 - link

So does it just use GPU RAM to hold the entire scene and add them all up, so that if you have 4 1GB cards, it will be like having a 4GB card instead of a 1GB card like we have with SLI?

jnanster - Wednesday, August 20, 2008 - link

Not only 4GB, but 4 GPUs "being told" exactly what to render. This is how they are claiming improved scaling.

AggressorPrime - Wednesday, August 20, 2008 - link

So basically a 3GB command is given to the Hydra 100. The Hydra 100 splits the command and distributes the segments, giving 2 512MB segments with low processing power needs to 2 512MB mid-end GPUs and then 2 1GB segments to 2 GeForce GTX 280's, being tightly bound to the GPUs' RAM to know how it all fits together.

Penti - Wednesday, August 20, 2008 - link

No, see my comment below. The Lucid Hydra 100 is just a PCI-e switch and an accelerator chip for the software that sits BEFORE the graphics driver. It doesn't mess around with the low-level stuff of talking directly to the hardware. The CPU in the Hydra 100 is just a 225MHz 32-bit processor with 16kB of cache. The load balancing happens before the graphics card drivers, and the cards each just render some of the DX/OGL calls. It also detects the power of the cards, so it won't send as much work to a card if it senses that card is being overloaded. The Hydra software won't load or store the textures; as said, it doesn't get involved in communication between the cards and just directs the work from the app to the (drivers) GPUs. The textures and stuff don't pass through the Hydra.

allajunaki - Wednesday, August 20, 2008 - link

So, if the Hydra intercepts the calls before the driver, and then decides the split based on objects, then my guess is that the improvement may not be linear... because it will mess up certain of the card's bandwidth-saving tricks like Z-occlusion culling, won't it? At any given point the graphics card is not seeing the entire scene, only a few objects within the scene. It will not know if the object it is rendering is partially or fully obstructed by another object.

Also, I wonder how it will handle pixel shader routines, because some shading techniques tend to "blend" the objects.

Penti - Thursday, August 21, 2008 - link

Well, I'm still wondering about all this myself; I guess the software has to know all this. It's not clear exactly how it works from their own site, and the pcper article hints that the software isn't really ready; they just have DX9 support right now. However, they also hint that the software will know how to distribute the scenes and combine them. So I guess they need drivers that know how to handle pretty much every game. It could work great, but we'll have to wait until next year to see how it does on the commercial market.

jnanster - Wednesday, August 20, 2008 - link

Seems like you need a separate box for your video cards???

MrHanson - Thursday, August 21, 2008 - link

Having a separate box with its own power supply(s) is ideal. That way, if you want to add 2 or 3 more GPUs to your Hydra system, you don't have to rip apart your computer and put in a different motherboard and power supply. I imagine this system will probably come with its own mainboard and power supply with several separate PCIe x16 slots for scalability. Time to put that external PCI Express specification to good use!