AMD's Radeon HD 4870 X2 - Testing the Multi-GPU Waters

by Anand Lal Shimpi & Derek Wilson on August 12, 2008 12:00 AM EST - Posted in GPUs

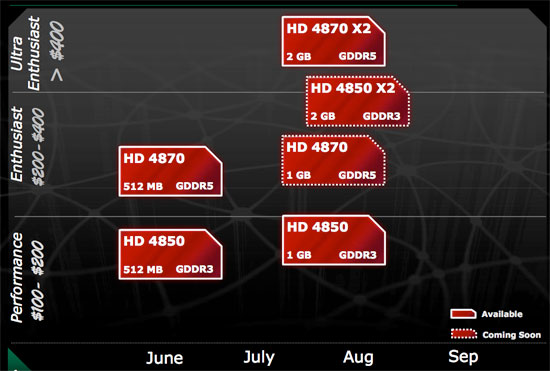

Today is all about the Radeon HD 4870 X2, the same card we previewed last month, but AMD is quietly announcing a few other products alongside it. The 4870 X2, internally referred to as R700, is a pair of RV770 GPUs on a single card - effectively a single-card Radeon HD 4870 CrossFire setup (hence the X2 moniker). Like previous X2 cards, the 4870 X2 appears to the user and the driver as a single card, and all of the CrossFire magic happens behind the scenes.

| | ATI Radeon HD 4870 X2 | ATI Radeon HD 4870 | ATI Radeon HD 4850 |
| --- | --- | --- | --- |
| Stream Processors | 800 x 2 | 800 | 800 |
| Texture Units | 40 x 2 | 40 | 40 |
| ROPs | 16 x 2 | 16 | 16 |
| Core Clock | 750MHz | 750MHz | 625MHz |
| Memory Clock | 900MHz (3600MHz data rate) GDDR5 | 900MHz (3600MHz data rate) GDDR5 | 993MHz (1986MHz data rate) GDDR3 |
| Memory Bus Width | 256-bit x 2 | 256-bit | 256-bit |
| Frame Buffer | 1GB x 2 | 512MB | 512MB |
| Transistor Count | 956M x 2 | 956M | 956M |
| Manufacturing Process | TSMC 55nm | TSMC 55nm | TSMC 55nm |
| Price Point | $549 | $299 | $199 |
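
As a rough cross-check of the memory figures in the table, peak memory bandwidth can be estimated as the data rate times the bus width, divided by eight to convert bits to bytes. The Python sketch below is ours, not AMD's: the helper name `peak_bandwidth_gbs` and the `CARDS` dictionary are made up for illustration, and it assumes 1 GB = 10^9 bytes and ignores any real-world overhead.

```python
# Rough peak memory bandwidth from the spec table above.
# Assumption: bandwidth = data rate (MHz, effective) * bus width (bits) / 8,
# with 1 GB = 10**9 bytes. These are theoretical peaks, not measurements.

def peak_bandwidth_gbs(data_rate_mhz: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth of one memory bus in GB/s."""
    # MHz * bits / 8 gives MB/s; dividing by 1000 gives GB/s.
    return data_rate_mhz * bus_width_bits / 8 / 1000

# (effective data rate in MHz, bus width in bits, number of GPUs), from the table.
CARDS = {
    "Radeon HD 4870 X2": (3600, 256, 2),
    "Radeon HD 4870": (3600, 256, 1),
    "Radeon HD 4850": (1986, 256, 1),
}

def summarize(cards=CARDS):
    """Return {name: (per-GPU GB/s, GB/s summed across the card's GPUs)}."""
    result = {}
    for name, (rate, width, gpus) in cards.items():
        per_gpu = peak_bandwidth_gbs(rate, width)
        result[name] = (per_gpu, per_gpu * gpus)
    return result

if __name__ == "__main__":
    for name, (per_gpu, total) in summarize().items():
        print(f"{name}: {per_gpu:.1f} GB/s per GPU, {total:.1f} GB/s summed")
```

By this estimate, GDDR5 gives each RV770 on the 4870 and the X2 about 115 GB/s, against roughly 64 GB/s for the 4850's GDDR3. Keep in mind that the X2's summed figure comes from two separate 256-bit buses, one per GPU, rather than a single shared pool.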

The benefit of single-card CrossFire is of course that you can use this single card on any platform, not just ones that explicitly support CF. Since CrossFire is supported on both Intel chipsets and AMD chipsets, it's a bit more flexible than SLI and the need for single-card CF isn't nearly as great as the need for single-card SLI.

Unlike most single-card multi-GPU solutions, the 4870 X2 is literally two Radeon HD 4870s on a single card. The clock speeds, both core and memory, are identical, and this thing should perform like a pair of 4870s (which is pretty quick, if you have forgotten). The only difference here is that while the standard Radeon HD 4870 ships with 512MB of GDDR5 memory, each RV770 on an X2 gets a full 1GB of GDDR5 for a total of 2GB per card.

...which leads us nicely into some of AMD's other products that will be coming out in the next month or so. There will be 1GB versions of both the Radeon HD 4870 and Radeon HD 4850.

Then at $399 we'll see a Radeon HD 4850 X2, which as you can probably guess is a pair of Radeon HD 4850 GPUs on a single card, but with 2GB of GDDR3 and not GDDR5 like the 4870 X2. As interesting as all of these cards are, we only have the 4870 X2 for you today; the rest will have to wait for another time. But it is worth noting that if you are interested in buying a Radeon HD 4870/4850 and keeping it for a while, you may want to wait for the 1GB versions as they should give you a bit more longevity.

Enough with being distracted by AMD's product lineup, let's talk about the competition.

93 Comments


Spoelie - Tuesday, August 12, 2008

How come 3dfx was able to have a transparent multi-GPU solution back in the 90's - granted, memory still was not shared - when it seems impossible for everyone else these days?

Shader functionality problems? Too much integration (a single card voodoo2 was a 3 chip solution to begin with)?

Calin - Tuesday, August 12, 2008

The SLI from 3dfx used scan line interleaving (or Scan Line Interleaving to be exact). The new SLI still has Scan Line Interleaving, amongst other modes.

The reason 3dfx was able to use this is that the graphic library used was their own, and it was built specifically to the task. Now, Microsoft's DirectX is not built for this SLI thing, and it shows (see the CrossFire profiles, selected for the best performance for a game, depending on that game).

Also, 3dfx's SLI had a dongle feeding video signal from the second card (slave) into the first card (master), and the video from the two cards was interleaved. Now, this uses lots of bandwidth, and I don't think DirectX is able to generate scenes in "only even/odd lines", and much of the geometry work must be done by both cards (so if your game engine is geometry bound, SLI doesn't help you).

mlambert890 - Friday, August 15, 2008

Great post... Odd that people seem to remember 3DFX and don't remember GLIDE or how it worked. I'm guessing they're too young to have actually owned the original 3D cards (I still have my dedicated 12MB Voodoo cards in a closet), and they just hear something on the web about how "great" 3DFX was.

It was a different era and there was no real unified 3D API. Back then we used to argue about OpenGL vs GLIDE and the same types of malcontents would rant and rave about how "evil" MSFT was for daring to think to create DirectX.

Today a new generation of ill-informed malcontents continue to rant and rave about Direct3D and slam NVidia for "screwing up" 3DFX when the reality is that time moves on and NVidia used the IP from 3DFX that made sense to use (OBVIOUSLY - sometimes the people spending hundreds of millions and billions have SOME clue what they're buying/doing and actually have CS PhDs rather than just "forum posting cred").

Zoomer - Wednesday, August 13, 2008

Ah, I remember wanting to get a Voodoo5 5000, but ultimately decided on the Radeon 32MB DDR instead.

Yes, 32MB DDR framebuffer!

JarredWalton - Tuesday, August 12, 2008

Actually, current SLI stands for "Scalable Link Interface" and has nothing to do with the original SLI other than the name. Note also that 3dfx didn't support anti-aliasing with SLI, and they had issues going beyond the Voodoo2... which is why they're gone.

CyberHawk - Tuesday, August 12, 2008

nVidia bought them .... and is now incapable of taking advantage of the technology :D

StevoLincolnite - Tuesday, August 12, 2008

They could have at least included support for 3DFX Glide so all those GLIDE-only games would continue to function.

Also, ATI had a "Dual GPU" card (Rage Fury MAXX) many years before nVidia released one.

TonyB - Tuesday, August 12, 2008

can it play Crysis though?

two of my friends' computers died while playing it.

Spoelie - Tuesday, August 12, 2008

no it can't, the crysis benchmarks are just made up

stop with the bearded comments already

MamiyaOtaru - Wednesday, August 13, 2008

Dude was joking. And it was funny.

It's apparently pretty dangerous to joke around here. Two of my friends died from it.