ATI Radeon HD 4870 X2 - R700 Preview: AMD's Fastest Single Card

by Derek Wilson on July 14, 2008 12:00 AM EST - Posted in GPUs

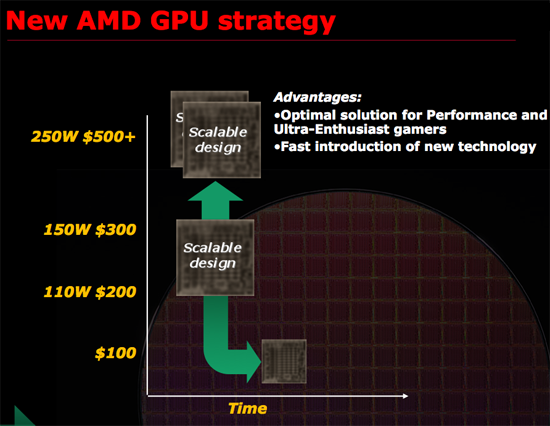

Remember this slide?

The "Scalable design" block we already know about: that's RV770, which we reviewed last month. The 150W TDP, $300 part is the Radeon HD 4870, and the 110W, $200 part is the Radeon HD 4850, the two cards that have already caused NVIDIA quite a bit of pain. The smaller $100 part has a name and a release date, neither of which we can talk about at this point, but it's coming.

Today, however, is about the 250W, $500 multi-GPU solution, internally known as R700. Hot on the heels of the Radeon HD 4800 series launch, AMD shipped out ten R700 cards worldwide, attempting to capitalize on the success of the 4800 and showcase the strength of AMD's small-GPU strategy.

We're assuming that AMD will call the R700-based cards the Radeon HD 4870 X2, and based on the chart above we're expecting them to retail above $500 (possibly $549?). Today's article is merely a preview, as R700 won't be officially launched for at least another month, but AMD wanted to unveil a bit of what it's cooking.

| | ATI R700 | ATI Radeon HD 4870 | ATI Radeon HD 4850 | ATI Radeon HD 3870 |
|---|---|---|---|---|
| Stream Processors | 800 x 2 | 800 | 800 | 320 |
| Texture Units | 40 x 2 | 40 | 40 | 16 |
| ROPs | 16 x 2 | 16 | 16 | 16 |
| Core Clock | 750MHz | 750MHz | 625MHz | 775MHz+ |
| Memory Clock | 900MHz (3600MHz data rate) GDDR5 | 900MHz (3600MHz data rate) GDDR5 | 993MHz (1986MHz data rate) GDDR3 | 1125MHz (2250MHz data rate) GDDR3 |
| Memory Bus Width | 256-bit x 2 | 256-bit | 256-bit | 256-bit |
| Frame Buffer | 1GB x 2 | 512MB | 512MB | 512MB |
| Transistor Count | 956M x 2 | 956M | 956M | 666M |
| Manufacturing Process | TSMC 55nm | TSMC 55nm | TSMC 55nm | TSMC 55nm |
| Price Point | > $500 | $299 | $199 | $199 |
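As a rough sanity check on the memory figures in the table, peak theoretical bandwidth per GPU is simply the data rate multiplied by the bus width. The sketch below is our own back-of-the-envelope arithmetic, not a figure supplied by AMD; the function name and the card labels are purely illustrative.

```python
def peak_bandwidth_gbs(data_rate_mhz: float, bus_width_bits: int) -> float:
    """Peak theoretical memory bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8          # 256-bit bus -> 32 bytes
    return data_rate_mhz * 1e6 * bytes_per_transfer / 1e9


# (data rate in MHz, bus width in bits), taken from the table above
cards = {
    "R700 (per GPU) / Radeon HD 4870": (3600, 256),
    "Radeon HD 4850": (1986, 256),
    "Radeon HD 3870": (2250, 256),
}

for name, (rate, width) in cards.items():
    print(f"{name}: {peak_bandwidth_gbs(rate, width):.1f} GB/s")
```

These are per-GPU numbers. Because each GPU on R700 talks only to its own memory, the two buses do not add up to one wider pool for any single GPU.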

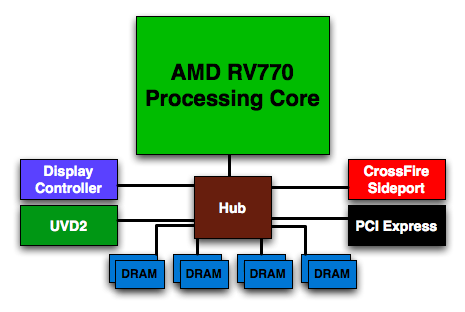

The R700 board is literally made up of two RV770s with a PCI Express switch connecting the two. The clock speeds are identical to the Radeon HD 4870, and memory size per GPU has been doubled to 1GB, which could help at high resolutions with AA enabled. In other words, R700 should perform very much like a pair of 4870s in CrossFire. Or should it?

Building a Better CrossFire

When AMD began talking a few weeks back about no longer building high-end hardware around single monolithic GPUs, we let them know that improving CrossFire support would be incredibly important going forward. AMD told us that they are putting a lot of effort into that, but also that they have some exciting technology up their sleeves with R700 to help out. Unfortunately, we haven't been given much detail on how it works, but the new technology is GPU-to-GPU communication.

Until now, CrossFire has done zero GPU to GPU or framebuffer to framebuffer communication. As with the first iteration, each card fully renders the parts of the screen for which it is responsible (be it a whole frame in AFR, the top or bottom half of a screen, or alternating tiles). These results are sent to a combiner where the digital signals are merged and output to the screen. This is the only communication that takes place in CrossFire at the moment. R700 will change that by allowing GPUs to communicate.
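To make the three work-splitting schemes mentioned above concrete, here is a minimal sketch of how a frame or a pixel might be assigned to a GPU under each one. This is our own illustration, not AMD's driver logic: the function names are hypothetical, the 32-pixel tile size is an assumption, and a real driver would likely balance the split dynamically rather than cut the screen into fixed equal halves.

```python
def gpu_for_frame_afr(frame_index: int, num_gpus: int = 2) -> int:
    """Alternate frame rendering: whole frames are handed out round-robin."""
    return frame_index % num_gpus


def gpu_for_pixel_split(y: int, height: int, num_gpus: int = 2) -> int:
    """Split-frame: the screen is cut into equal horizontal bands (top/bottom for 2 GPUs)."""
    return min(y * num_gpus // height, num_gpus - 1)


def gpu_for_pixel_tiled(x: int, y: int, tile_size: int = 32, num_gpus: int = 2) -> int:
    """Alternating tiles: a checkerboard of tiles (tile_size is an assumed value)."""
    return ((x // tile_size) + (y // tile_size)) % num_gpus


# Example on a 2560x1600 screen with two GPUs
print(gpu_for_frame_afr(7))               # odd frames go to GPU 1
print(gpu_for_pixel_split(1200, 1600))    # bottom half goes to GPU 1
print(gpu_for_pixel_tiled(64, 32))        # tile (2, 1) goes to GPU 1
```

In every case each GPU renders its share in isolation, which is why each one needs its own full copy of the scene data.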

RV770 has a CrossFire X Sideport... we assume that the two RV770s on a single R700 board somehow connect their Sideports to form a fast GPU-to-GPU link. AMD hasn't told us how yet.

It is not clear how extensive this communication will be, what information will be shared, or how much bandwidth requirements will increase because of this feature. And while it is a step in the right direction, the holy grail of single-card multi-GPU solutions will be a shared framebuffer. Currently both GPUs need a copy of all textures, geometry, etc., and this is a huge waste of resources. While the R700 has 2GB of RAM on board, it will still be limited in many of the same ways a 1GB RV770 would be, as each GPU only has access to half the RAM on the card. Of course, since we don't have a 1GB RV770 yet, this card could show some advantages over the single 4870 regardless of CrossFire.
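The mirrored-memory point can be put in numbers. The sketch below is a deliberately simplified model of our own (the function name and the `shared` flag are hypothetical, and it ignores any synchronization overhead a real shared framebuffer would carry): with mirroring, the memory usable for unique data is one GPU's worth, no matter how much RAM is soldered to the board.

```python
def usable_memory_mb(per_gpu_mb: int, num_gpus: int, shared: bool) -> int:
    """Memory available for unique data across the whole card.

    shared=False models today's CrossFire, where every GPU holds its own
    copy of all textures and geometry. shared=True models a hypothetical
    shared framebuffer.
    """
    return per_gpu_mb * num_gpus if shared else per_gpu_mb


# R700 as tested: 2 x 1GB, each GPU sees only its own copy
print(usable_memory_mb(1024, 2, shared=False))   # 1024
# The same board with a hypothetical shared framebuffer
print(usable_memory_mb(1024, 2, shared=True))    # 2048
```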

Regardless of where we want (and need) to see multi-GPU technology go, R700 is the first part to follow AMD's official change in strategy. As such, it will be very important in establishing AMD's place in the market, and it will need to prove to gamers that AMD is taking the high end seriously. It's great that single-card multi-GPU solutions are capable of providing high-end performance, but when spending the amount of money required to put a high-end part in their systems, people expect compatibility, reliability, and consistent performance. We can't really talk about how well AMD pulls that off with prerelease hardware and prerelease drivers, but we can't emphasize the importance of this enough. We will certainly be putting the screws to it when the hardware does eventually make it out into the wild.

UPDATE: Our initial publication of this article indicated a 2x 512MB framebuffer for a total of 1GB on board. We have since learned that the R700 we tested has 2GB of RAM total for 2x 1GB framebuffers. This has affected some of our analysis and conclusion. We do apologize for any confusion this may have caused.

55 Comments


ZootyGray - Monday, July 14, 2008

I look forward to Anandtech testing. Other sites - some good, some biased, some illiterate, etc.

I describe Anandtech testing as thorough, fearless, accurate, brutal, relentless, and uncompromising. And few typos/language issues. And this results in far fewer fanboy/junk comments as well.

If I want the real truth, I come here, and I read it slowly - it's like a feast.

Thanks Anand and company.

jamesbond007 - Monday, July 14, 2008

On page 7:

It is likely that the extra 512MB of RAM available to each GPU has significantly impacted perforamnce since we are testing with all the options cranked up and 4xAA.

Anyways, I can't wait to see tests with 2x 4780X2 going! I also would have liked to see the 4780 (single) in all of the tests, but I figured the card would get roughly half of the performance of the new X2. No big deal.

The power consumption diagrams are making me realize why the 1000W+ PSUs exist. :) Good gravy, boy! A guy would have to pick up an extra shift or job just to pay the electric bill for the new cards coming out these days. Then again, most guys who buy this card likely live in their parent's basement. :p

Just joking, of course!

Game on, ATi! Nicely done.

~Travis

hooflung - Monday, July 14, 2008

This can't be a 1G version Derek. This has to be the 2G version everyone else has gotten. Can you confirm that?

Nvidia... meet face. Age of Conan on this card is simply amazing. I think I'll trade in my 3850 512 for the X2 once it comes out. Something I said I wouldn't do for a while.

yacoub - Monday, July 14, 2008

Kind of annoying you didn't include the ATI Radeon HD 4870 card in every test, as that's the most expensive one most people will care about. The $500+ stuff is ridiculous. The $299 card is a bit more worth reading about.

The other thing is how you have to test at 2560x now just to show the biggest differences. Kinda shows that something like an 8800GT is still fine for 1680x and even 1920x for most games.

7Enigma - Monday, July 14, 2008

Let's see this is a preview about AMD's new high-end card that will directly compete with the 280. Of course most of us will never buy it, it doesn't mean it isn't important. There is already a review about the $300 card, it came out already with an indepth review.

Every comparison has a nice graph and chart at the bottom showing performance at several different resolutions. If you are too lazy to THINK instead of just looking at the pretty color bars and seeing which one is longer, that's on you.

Really these complaints are just getting rediculous. If they failed to review the cheaper cards or only showed a single resolution/setting (cough...cough..(H)) then I could see your point. Fact of the matter is people shelling out >$500 for a graphics card probably DO have the screens with the resolutions in the bar graphs.

Alexstarfire - Tuesday, July 15, 2008

No offense to you, but it would seem to me that they were just a bit lazy on making the extra lines in the graph. I know that at Guru3D that they include different resolutions in the graphs.

To me, it's not that I'm lazy, but it's a lot more difficult to compare different numbers across a range of cards and settings than it is to see which one is above and/or below others on a graph.

FXi - Monday, July 14, 2008

Put 2x 4870x2's in CF on an Intel X48 or X58 and you can kiss BOTH Nvidia's chipsets AND GPU's goodbye.

Advantages:

Crossfire is fully supported on Intel chipsets with NO bridge chip required.

Crossfire won't be denied to function on Intel chipsets - the current bridge chip on Skulltrail has had 280 SLI denied by Nvidia, because they don't "feel" like enabling it.

Dual screens on Crossfire? No problem. Crossfire doesn't artificially limit dual screen use to just their workstation cards like Quadro.

4870x2 is already managing to beat SLI 280's in some places. Driver improvements will only make it a stronger beating in the future.

Don't you want a company that is committed to working WITH Intel, where it makes sense, rather than fighting them?

Support for DX 10.1 "just in case" it should end up enabled in some games.

Can you possibly imagine what the R800 is going to do? People should be considering the 4870x2 over the 280 and Crossfire of 280 SLI without a doubt. High res gaming has never looked so good.

DigitalFreak - Monday, July 14, 2008

Fanboy much?

piroroadkill - Monday, July 14, 2008

Uh, multiple monitors are really important to a lot of people, I know it is to me, rendering SLI pointless

Griswold - Monday, July 14, 2008

So, AMD needs to bring their drivers to the point where all games of the past benefit from multi-GPU? Are you sure we need to play 5-10 years old games (assuming they even run on todays systems) at 5000fps just to prove a point? :P