NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST. Posted in GPUs.

One-Point-Four-Billion. That's transistors, folks.

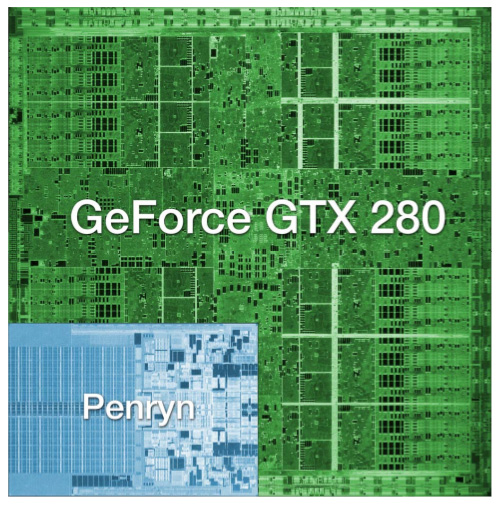

The chip is codenamed GT200 and it's the successor to NVIDIA's G80 and G92 families. Why the change in naming? The GT stands for "Graphics Tesla" and this is the second generation Graphics Tesla architecture, the first being the G80. The GT200 is launching today in two flavors; the die of the larger one is pictured below:

Um...yeah...we're reviewing that today

(this die comparison is to scale and that's a dual-core Penryn)

Let's put aside all the important considerations for a moment and bask in the glow of sheer geekdom. Intel's Montecito processor (their dual core Itanium 2) weighs in at over 1.7 billion transistors, but the vast majority of this is L3 cache (over 1.5 billion transistors for 24MB of on die memory). In contrast, the vast majority of the transistors on NVIDIA's GT200 chip are used for compute power. Whether or not NVIDIA has used these transistors well is certainly the most important consideration for consumers, but there's no reason we can't take a second to be in awe of the sheer magnitude of the hardware. This chip is packed full of logic and it is huge.

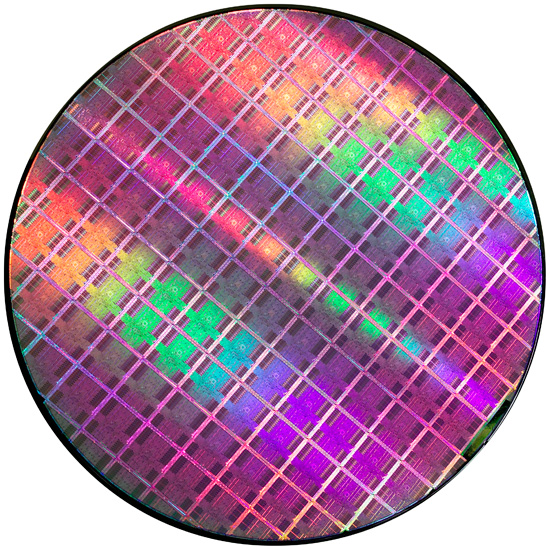

At most, 94 NVIDIA GT200 dies can be produced on a single 300mm 65nm wafer from TSMC. On the other end of the spectrum, Intel can fit around 2500 45nm Atom processors on a 300mm wafer.
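For a rough feel of where a figure like 94 comes from, a common first-order estimate of gross dies per wafer takes the simple area ratio and subtracts an edge-loss term. The sketch below assumes a die area of about 576 mm² for GT200 and about 25 mm² for Atom. Neither area is stated in the text above, and the formula ignores scribe lines, edge exclusion and yield, so treat the results as ballpark values only.

```python
import math

def gross_dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """First-order estimate: wafer area / die area, minus an edge-loss term."""
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(wafer_area / die_area_mm2 - edge_loss)

# Assumed die areas in mm^2 (not given in the article text).
GT200_AREA_MM2 = 576.0
ATOM_AREA_MM2 = 25.0

if __name__ == "__main__":
    print("GT200 dies per 300mm wafer:", gross_dies_per_wafer(300, GT200_AREA_MM2))
    print("Atom dies per 300mm wafer: ", gross_dies_per_wafer(300, ATOM_AREA_MM2))
```

With those assumed areas the GT200 estimate lands on 94, in line with the figure quoted above. The Atom estimate comes out somewhat higher than 2500, which is unsurprising given everything the formula leaves out.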

If the number of transistors weren't enough to turn this thing into a dinner plate sized bit of hardware, the fact that it's fabbed on a 65nm process definitely puts it over the top. Current CPUs are at 45nm and NVIDIA's major competitor in the GPU market, AMD, has been building 55nm graphics chips for over 7 months now. With so many transistors, choosing not to shrink their manufacturing process doesn't seem to make much sense to us. Smaller fab processes offer not only the potential for faster, cooler chips, but also significantly reduce the cost of the GPU itself. Because manufacturing costs are (after ramping production) on a per wafer basis, the more dies that can be packed onto a single wafer, the less each die costs. It is likely that NVIDIA didn't want to risk any possible delays arising from manufacturing process changes on this cycle, but that seems like a risk that would have been worth taking in this case.
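To make the per-wafer cost argument concrete, here is a small sketch. The wafer price is a made-up placeholder, the same price is assumed for both process nodes, and the shrink is idealized as pure area scaling with the square of the feature size, which real designs rarely achieve. Only the 94 dies per wafer figure comes from the article.

```python
def cost_per_die(wafer_cost: float, dies_per_wafer: int) -> float:
    """Per-die cost when the fab charges per wafer (yield is ignored)."""
    return wafer_cost / dies_per_wafer

def ideal_shrink_area_factor(old_nm: float, new_nm: float) -> float:
    """Idealized area scaling for a process shrink: (new / old) squared."""
    return (new_nm / old_nm) ** 2

HYPOTHETICAL_WAFER_COST = 5000.0  # placeholder value, not a real TSMC price
DIES_AT_65NM = 94                 # figure quoted in the article

area_factor = ideal_shrink_area_factor(65, 55)
# First-order assumption: die count scales inversely with die area.
dies_at_55nm = int(DIES_AT_65NM / area_factor)

if __name__ == "__main__":
    print(f"Ideal area factor, 65nm -> 55nm: {area_factor:.2f}")
    print(f"65nm: {DIES_AT_65NM} dies, "
          f"${cost_per_die(HYPOTHETICAL_WAFER_COST, DIES_AT_65NM):.2f} per die")
    print(f"55nm: {dies_at_55nm} dies, "
          f"${cost_per_die(HYPOTHETICAL_WAFER_COST, dies_at_55nm):.2f} per die")
```

Whatever the real wafer price is, the ratio is what matters: under these idealized assumptions a 55nm version of the same design would yield roughly 40% more candidate dies per wafer, before yield is taken into account.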

Instead, GT200 is the largest die TSMC has ever fabbed for production. Quite a dubious honor, and I wouldn't expect NVIDIA to really see this as something of which to be proud. Of course, that doesn't mean we can't be impressed with the sheer massiveness of the beast.

And what do we get from all these transistors? Moving up from 690M transistors of the original G80 and 754M transistors in G92 to the 1.4B transistors of GT200 is not a small tweak. One of the major new features is the ability to process double precision floating point data in hardware (there are 30 64-bit FP units in GT200). The size of the register file for each SP array has been doubled. The promised ability of an SP to process a MAD and a MUL at the same time has been enhanced to work in more cases (G80 was supposedly able to do this, but the number of cases where it worked as advertised was extremely limited). And the number of SPs has increased from 128 on G80 to 240 with GT200. To better understand what all this means, we'll take a closer look at the differences between G80 and GT200, but first, the cards.

Clock Speeds, Pricing and HDMI Information

NVIDIA is introducing two GT200 parts today along with slightly tweaked branding. The new brand is called the GeForce GTX, and the first two cards are the GTX 280 and GTX 260.

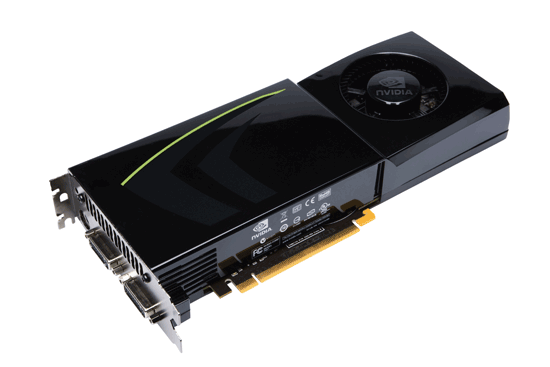

Here's the card, say hello:

The GeForce GTX 280

It's got a little sibling too:

The GeForce GTX 260

The GeForce GTX 280 features 240 streaming processors running at 1.3GHz. It's got a 512-bit memory interface to 1GB of GDDR3 running at 2.2GHz (1107MHz memory clock). The rest of the GPU runs at 602MHz.

| | GTX 280 | GTX 260 | 9800 GX2 | 9800 GTX | 8800 GTS 512 | 8800 GT |
|---|---|---|---|---|---|---|
| Stream Processors | 240 | 192 | 256 | 128 | 128 | 112 |
| Texture Address / Filtering | 80 / 80 | 64 / 64 | 128 / 128 | 64 / 64 | 56 / 56 | 56 / 56 |
| ROPs | 32 | 28 | 32 | 16 | 16 | 16 |
| Core Clock | 602MHz | 576MHz | 600MHz | 675MHz | 650MHz | 600MHz |
| Shader Clock | 1296MHz | 1242MHz | 1500MHz | 1690MHz | 1625MHz | 1500MHz |
| Memory Clock | 1107MHz | 999MHz | 1000MHz | 1100MHz | 970MHz | 900MHz |
| Memory Bus Width | 512-bit | 448-bit | 256-bit x 2 | 256-bit | 256-bit | 256-bit |
| Frame Buffer | 1GB | 896MB | 1GB | 512MB | 512MB | 512MB |
| Transistor Count | 1.4B | 1.4B | 1.5B | 754M | 754M | 754M |
| Manufacturing Process | TSMC 65nm | TSMC 65nm | TSMC 65nm | TSMC 65nm | TSMC 65nm | TSMC 65nm |
| Price Point | $650 | $400 | $500 | $300 | $280 | $170-$230 |
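The shader numbers in the table translate into theoretical peak throughput with a bit of arithmetic. The sketch below assumes each SP can issue a MAD (counted as 2 floating point operations) plus a MUL (1 more) on every shader clock, for 3 FLOPs per SP per clock. That is the best case described earlier, not a sustained figure, and the SP counts and clocks are taken straight from the table.

```python
# Assumption: MAD (2 FLOPs) + MUL (1 FLOP) dual-issued on every shader clock.
FLOPS_PER_SP_PER_CLOCK = 3

def peak_gflops(stream_processors: int, shader_clock_mhz: float) -> float:
    """Theoretical single precision peak in GFLOPS."""
    return stream_processors * shader_clock_mhz * FLOPS_PER_SP_PER_CLOCK / 1000.0

# (stream processors, shader clock in MHz) from the table above.
CARDS = {
    "GTX 280": (240, 1296),
    "GTX 260": (192, 1242),
    "9800 GTX": (128, 1690),
    "8800 GT": (112, 1500),
}

if __name__ == "__main__":
    for name, (sps, clock_mhz) in CARDS.items():
        print(f"{name}: {peak_gflops(sps, clock_mhz):.0f} GFLOPS peak")
```

Under that assumption the GTX 280 works out to about 933 GFLOPS and the GTX 260 to about 715 GFLOPS. How often real shader code gets close to the dual-issue best case is a separate question.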

You'll need a power supply that can deliver up to 236W for the card itself and you'll need both a 6-pin and an 8-pin PCIe power connector (the board won't work with two 6-pin connectors).

The GeForce GTX 280 will retail for $650 with availability planned for June 17th.

The GTX 260 brings the total core count down to 192 by disabling two Texture/Processing Clusters on the GPU (but still maintaining more cores than any single-GPU G80/G92 based card). The SPs run slightly slower on the GTX 260 (1242MHz vs. 1296MHz; NVIDIA uses a 27MHz crystal, which is why we get these funny clock frequencies), while the memory runs at approximately 2GHz (999MHz memory clock, 1998MHz data rate). The GTX 260 also loses one of the eight 64-bit memory controllers, bringing the total memory bus down to 448 bits and total memory size down to 896MB.
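The memory figures and the "funny" clocks can be checked with a few lines of arithmetic. This sketch assumes GDDR3 transfers data twice per memory clock (consistent with the 999MHz clock and 1998MHz data rate quoted above) and that each 64-bit controller carries 128MB, which is simply 1GB divided across eight controllers. The 27MHz multipliers are just what falls out of the division; how NVIDIA actually derives its clocks is not described here.

```python
CRYSTAL_MHZ = 27             # reference crystal frequency quoted in the article
CONTROLLER_WIDTH_BITS = 64   # width of each memory controller
MB_PER_CONTROLLER = 128      # derived assumption: 1024MB / 8 controllers
TRANSFERS_PER_CLOCK = 2      # assumption: double data rate GDDR3

def bus_width_bits(controllers: int) -> int:
    return controllers * CONTROLLER_WIDTH_BITS

def frame_buffer_mb(controllers: int) -> int:
    return controllers * MB_PER_CONTROLLER

def bandwidth_gb_per_s(bus_bits: int, memory_clock_mhz: int) -> float:
    """Peak memory bandwidth in GB/s, with 1 GB = 10**9 bytes."""
    bytes_per_transfer = bus_bits / 8
    transfers_per_s = memory_clock_mhz * 1e6 * TRANSFERS_PER_CLOCK
    return bytes_per_transfer * transfers_per_s / 1e9

def crystal_multiplier(clock_mhz: int):
    """Return clock / 27MHz if it divides evenly, otherwise None."""
    quotient, remainder = divmod(clock_mhz, CRYSTAL_MHZ)
    return quotient if remainder == 0 else None

if __name__ == "__main__":
    # (name, memory controllers, memory clock MHz, shader clock MHz)
    for name, controllers, mem_clock, shader_clock in [
        ("GTX 280", 8, 1107, 1296),
        ("GTX 260", 7, 999, 1242),
    ]:
        bus = bus_width_bits(controllers)
        print(f"{name}: {bus}-bit bus, {frame_buffer_mb(controllers)}MB, "
              f"{bandwidth_gb_per_s(bus, mem_clock):.1f} GB/s, "
              f"shader = {crystal_multiplier(shader_clock)} x 27MHz, "
              f"memory = {crystal_multiplier(mem_clock)} x 27MHz")
```

Both the shader and memory clocks of the two cards divide evenly by 27MHz, and dropping one controller accounts for both the 448-bit bus and the 896MB frame buffer on the GTX 260.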

The GTX 260 consumes a maximum of 183W and only needs two 6-pin power connectors. The GeForce GTX 260 will set you back $400 and should be available on June 26th.
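The connector requirements line up with the standard PCI Express power limits: 75W from the x16 slot, 75W per 6-pin connector and 150W per 8-pin connector. The sketch below just adds those up. Whether this budget is the actual reason the GTX 280 refuses to run on two 6-pin connectors is an assumption on our part, though the arithmetic is at least consistent with it.

```python
# Power limits from the PCI Express specifications, in watts.
SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150

def available_power_w(six_pin: int, eight_pin: int) -> int:
    """Total in-spec power from the slot plus auxiliary connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W

def is_sufficient(board_power_w: int, six_pin: int, eight_pin: int) -> bool:
    return board_power_w <= available_power_w(six_pin, eight_pin)

if __name__ == "__main__":
    print("GTX 280 (236W), 2x 6-pin:       ", is_sufficient(236, 2, 0))
    print("GTX 280 (236W), 6-pin + 8-pin:  ", is_sufficient(236, 1, 1))
    print("GTX 260 (183W), 2x 6-pin:       ", is_sufficient(183, 2, 0))
```

Two 6-pin connectors plus the slot top out at 225W, just short of the GTX 280's 236W maximum, while the GTX 260's 183W fits comfortably.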

The GTX 280's shroud, though it takes some cues from the 9800 GX2, is better engineered to allow for heat expulsion through a larger vent next to the IO ports. The 9800 GX2 didn't have a very wide opening to the outside world from which to remove heat. This resulted in the 9800 GX2 disproportionately increasing the heat of the system. Since we run our systems without cases we don't see problems like this as soon as our readers do, and we would like to thank you for pointing this out to us.

Both the GTX 260 and 280 have two dual-link DVI outputs, which can be converted to HDMI using an adapter if you'd like. You can pass SPDIF using a connector at the top of the card, which will then be passed to the HDMI output when using the DVI-HDMI adapter. For the HTPC enthusiasts out there, the GTX 280 and 260 can output the following audio formats over HDMI:

- 2-channel LPCM
- 6-channel DD bitstream
- 6-channel DTS bitstream

Unfortunately there's no support for 8-channel LPCM or bitstreaming of Dolby TrueHD or DTS HD-MA.

108 Comments


tkrushing - Wednesday, June 18, 2008 - link

Say what you want about this guy but this is partially true which is why AMD/ATI is in the position they have been. They are slowly climbing out of that hole they've been in though. Would have been nice to see 4870x2 hit the market first. As we know competition = less prices for everyone!

hk690 - Tuesday, June 17, 2008 - link

I would love to kick you hard in the face, breaking it. Then I'd cut your stomach open with a chainsaw, exposing your intestines. Then I'd cut your windpipe in two with a boxcutter. Then I'd tie you to the back of a pickup truck, and drag you, until your useless fucking corpse was torn to a million fucking useless, bloody, and gory pieces.

Hopefully you'll get what's coming to you. Fucking bitch

http://www.youtube.com/watch?v=XNAFUpDTy3M

I wish you a truly painful, bloody, gory and agonizing death, cunt

7Enigma - Wednesday, June 18, 2008 - link

Anand, I'm all for free speech and such, but this guy is going a bit far. I read these articles at work frequently and once the dreaded C-word is used I'm paranoid I'm being watched.

Mr Roboto - Thursday, June 19, 2008 - link

I thought those comments would be deleted already. I'm sure no one cares if they are. I don't know what that person is so mad about.

hk690 - Tuesday, June 17, 2008 - link

Die painfully okay? Prefearbly by getting crushed to death in a garbage compactor, by getting your face cut to ribbons with a pocketknife, your head cracked open with a baseball bat, your stomach sliced open and your entrails spilled out, and your eyeballs ripped out of their sockets. Fucking bitch

Mr Roboto - Wednesday, June 18, 2008 - link

Ouch.. Looks like you hit a nerve with AMD\ATI's marketing team!

bobsmith1492 - Monday, June 16, 2008 - link

The main benefit from the 280 is the reduced power at idle! If I read the graph right, at idle the 9800 takes ~150W more than the 280 while at idle. Since that's where computers spend the majority of their time, depending on how much you game, that can be a significant cost.

kilkennycat - Monday, June 16, 2008 - link

Maybe you should look at the GT200 series from the point of view of nvidia's GPGPU customers - the academic researchers, technology companies requiring fast number-cruching available on the desktop, the professionals in graphics-effects and computer animation - not necessarily real-time, but as quick as possible... The CUDA-using crew. The Tesla initative. This is an explosively-expanding and highly profitable business for nVidia - far more profitable per unit than any home desktop graphics application. An in-depth analysis by Anandtech of what the GT200 architecture brings to these markets over and above the current G8xx/G9xx architecture would be highly appreciated. I have a very strong suspicion that sales of the GT2xx series to the (ultra-rich) home user who has to have the latest and greatest graphics card is just another way of paying the development bills and not the true focus for this particular architecture or product line.

nVidia is strongly rumored to be working on the true 2nd-gen Dx10.x product family, to be introduced early next year. Considering the size of the GTX280 silicon, I would expect them to transition the 65nm GTX280 GPU to either TSMC's 45nm or 55nm process before the end of 2008 to prove out the process with this size of device, then in 2009 introduce their true 2nd-gen GPU/GPGPU family on this latter process. A variant on the Intel "tic-toc" process strategy.

strikeback03 - Tuesday, June 17, 2008 - link

But look at the primary audience of this site. Whatever nvidia's intentions are for the GT280, I'm guessing more people here are interested in gaming than in subsidizing research.

Wirmish - Tuesday, June 17, 2008 - link

"...requiring fast number-cruching available on the desktop..."

GTX 260 = 715 GFLOPS

GTX 280 = 933 GFLOPS

HD 4850 = 1000 GFLOPS

HD 4870 = 1200 GFLOPS

4870 X2 = 2400 GFLOPS

Take a look here: http://tinyurl.com/5jwym5