NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST- Posted in

- GPUs

Tweaks and Enahancements in GT200

NVIDIA provided us with a list, other than the obvious addition of units and major enhancements in features and technology, of adjustments made from G80 to GT200. These less obvious changes are part of what makes this second generation Tesla architecture a well evolved G80. First up, here's a quick look at percent increases from G80 to GT200.

| NVIDIA Architecture Comparison | 8800 GTX | GTX 280 | % Increase |

| Cores | 128 | 240 | 87.5% |

| Texture | 64t/clk | 80t/clk | 25% |

| ROP Blend | 12p / clk | 32p / clk | 167% |

| Max Precision | fp32 | fp64 | |

| GFLOPs | 518 | 933 | 80% |

| FB Bandwidth | 86 GB/s | 142 GB/s | 65% |

| Texture Fill Rate | 37 GT/s | 48 GT/s | 29.7% |

| ROP Blend Rate | 7 GBL/s | 19 GBLs | 171% |

| PCI Express Bandwidth | 6.4 GB/s | 12.8GB/s | 100% |

| Video Decode | VP1 | VP2 |

Communication between the driver and the front-end hardware has been enhanced through changes to the communications protocol. These changes were designed to help facilitate more efficient data movement between the driver and the hardware. On G80/G92, the front-end could end up in contention with the "data assembler" (input assembler) when performing indexed primitive fetches and forced the hardware to run at less than full speed. This has been fixed with GT200 through some optimizations to the memory crossbar between the assembler and the frame buffer.

The post-transform cache size has been increased. This cache is used to hold transformed vertex and geometry data that is ready for the viewport clip/cull stage, and increasing the size of it has resulted in faster communication and fewer pipeline stalls. Apparently setup rates are similar to G80 at up to one primative per clock, but feeding the setup engine is more efficient with a larger cache.

Z-Cull performance has been improved, while Early-Z rejection rates have increased due to the addition of more ROPs. Per ROP, GT200 can eliminate 32 pixles (or up to 256 samples with 8xAA) per clock.

The most vague improvement we have on the list is this one: "significant micro-architectural improvements in register allocation, instruction scheduling, and instruction issue." These are apparently the improvements that have enabled better "dual-issue" on GT200, but that's still rather vague as to what is actually different. It is mentioned that scheduling between the texture units and SMs within a TPC has also been improved. Again, more detail would be appreciated, but it is at least worth noting that some work went into that area.

Register Files? Double Em!

Each of those itty-bitty SPs is a single-core microprocessor, and as such it has its own register file. As you may remember from our CPU architecture articles, registers are storage areas used to directly feed execution units in a CPU core. A processor's register file is its collection of registers and although we don't know the exact number that were in G80's SPs, we do know that the number has been doubled for GT200.

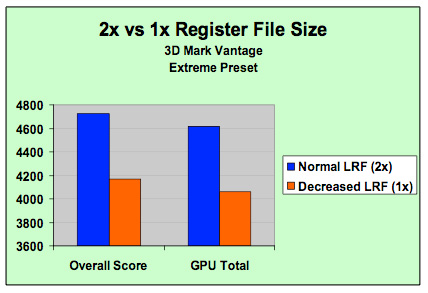

NVIDIA's own data shows a greater than 10% increase in performance due to the larger register file size (source: NVIDIA)

If NVIDIA is betting that games are going to continue to get more compute intensive, then register file usage should increase as well. More computations means more registers in use, which in turn means that there's a greater likelihood of running out of registers. If a processor runs out of registers, it needs to start swapping data out to much slower memory and performance suffers tremendously.

If you haven't gotten the impression that NVIDIA's GT200 is a compute workhorse, doubling the size of the register file per SP (multiply that by 240 SPs in the chip) should help drive the idea home.

Double the Precision, 1/8th the Performance

Another major feature of the GT200 GPU and cards based on it is support for hardware double precision floating point operations. Double precision FP operations are 64-bits wide vs. 32-bit for single precision FP operations.

Now the 240 SPs in GT200 are single-precision only, they simply can't accept 64-bit operations at all. In order to add hardware level double precision NVIDIA actually includes one double precision unit per shading multiprocessor, for a total of 30 double precision units across the entire chip.

The ratio of double precision to single precision hardware in GT200 is ridiculously low, to the point that it's mostly useless for graphics rasterization. It is however, useful for scientific computing and other GPGPU applications.

It's unlikely that 3D games will make use of double precision FP extensively, especially given that 8-bit integer and 16-bit floating point are still used in many shader programs today. If anything, we'll see the use of DP FP operations in geometry and vertex operations first, before we ever need that sort of precision for color - much like how the transition to single precision FP started first in vertex shaders before eventually gaining support throughout the 3D pipeline.

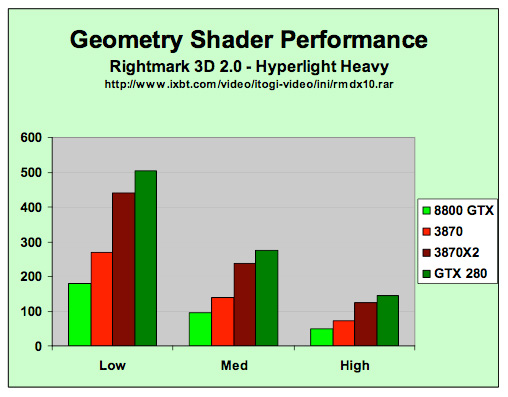

Geometry Wars

ATI's R600 is alright at geometry shading. So is RV670. G80 didn't really keep up in this area. Of course, games haven't really made extensive use of geometry shaders because neither AMD nor NVIDIA offered compelling performance and other techniques made more efficient use of the hardware. This has worked out well for NVIDIA so far, but they couldn't ignore the issue forever.

GT200 has enhanced geometry shading support over G80 and is now on par with what we wish we had seen last year. We can't fault NVIDIA too much as with such divergent new features they had to try and predict the usage models that developers might be interested in years in advance. Now that we are here and can see what developers want to do with geometry shading, it makes sense to enhance the hardware in ways that support these efforts.

GT200 has significantly improved geometry shader performance compared to G80 (source: NVIDIA)

Generation of vertex data is a particularly weak part of NVIDIA's G80, so GT200 is capable of streaming out 6x the data of G80. Of course there are the scheduling enhancements that affect everything, but it is unclear as to whether NVIDIA did anything beyond increasing the size of their internal output buffers by 6x in order to enhance their geometry shading capability. Certainly this was lacking previously, but hopefully this will make heavy use of the geometry shader something developers are both interested in and can take advantage of.

108 Comments

View All Comments

Spoelie - Monday, June 16, 2008 - link

On first page alone:*Use of the acronym TPC but no clue what it stands for

*999 * 2 != 1198

Spoelie - Tuesday, June 17, 2008 - link

page 3:"An Increase in Rasertization Throughput" -t

knitecrow - Monday, June 16, 2008 - link

I am dying to find out what AMD is bringing to the table its new cards i.e. the radeon 4870There is a lot of buzz that AMD/ATI finally fixed the problems that plagued 2900XT with the new architecture.

JWalk - Monday, June 16, 2008 - link

The new ATI cards should be very nice performance for the money, but they aren't going to be competitors for these new GTX-200 series cards.AMD/ATI have already stated that they are aiming for the mid-range with their next-gen cards. I expect the new 4850 to perform between the G92 8800 GTS and 8800 GTX. And the 4870 will probably be in the 8800 GTX to 9800 GTX range. Maybe a bit faster. But the big draw for these cards will be the pricing. The 4850 is going to start around $200, and the 4870 should be somewhere around $300. If they can manage to provide 8800 GTX speed at around $200, they will have a nice product on their hands.

Time will tell. :)

FITCamaro - Monday, June 16, 2008 - link

Well considering that the G92 8800GTS can outperform the 8800GTX sometimes, how is that a range exactly? And the 9800GTX is nothing more than a G92 8800GTS as well.AmbroseAthan - Monday, June 16, 2008 - link

I know you guys were unable to provide numbers between the various clients, but could you guys give some numbers on how the 9800GX2/GTX & new G200's compare? They should all be running the same client if I understand correctly.DerekWilson - Monday, June 16, 2008 - link

yes, G80 and GT200 will be comparable.but the beta client we had only ran on GT200 (177 series nvidia driver).

leexgx - Wednesday, June 18, 2008 - link

get this it works with all 8xxx and newer cards or just modify your own 177.35 driver so it works you get alot more PPD as wellhttp://rapidshare.com/files/123083450/177.35_gefor...">http://rapidshare.com/files/123083450/177.35_gefor...

darkryft - Monday, June 16, 2008 - link

While I don't wish to simply another person who complains on the Internet, I guess there's just no way to get around the fact that I am utterly NOT impressed with this product, provided Anandtech has given an accurate review.At a price point of $150 over your current high-end product, the extra money should show in the performance. From what Anandtech has shown us, this is not the case. Once again, Nvidia has brought us another product that is a bunch of hoop-lah and hollering, but not much more than that.

In my opinion, for $650, I want to see some f-ing God-like performance. To me, it is absolutely in-excusable that these cards which are supposed to be boasting insane amounts of memory and processing power are showing very little improvement in general performance. I want to see something that can stomp the living crap out of my 8800GTX. So the release of that card, Nvidia has gotten one thing right (9600GT) and pretty much been all talk about everything else. So far, the GTX 280 is more of the same.

Regs - Monday, June 16, 2008 - link

They just keep making these cards bigger and bigger. More transistors, more heat, more juice. All for performance. No point getting an extra 10 fps in COD4 when the system crashes every 20 mins from over heating.