Brightness and Contrast Ratio

For the brightness (luminance), contrast, and color accuracy tests, we depend on a hardware colorimeter and software to help calibrate the displays. We use a Monaco Optix XR (DTP-94) colorimeter and Monaco Optix XR Pro software, and we also test with ColorEyes Display Pro. Results in nearly every case have been better with Monaco Optix XR Pro, so we only report the ColorEyes Display Pro results on the monitor evaluation pages. We'll start with a look at the range of brightness and contrast at the default LCD settings while changing just the brightness level. (In some cases, it will be necessary to reduce the color levels if you want to achieve a more reasonable brightness setting of 100 or 120 nits.)

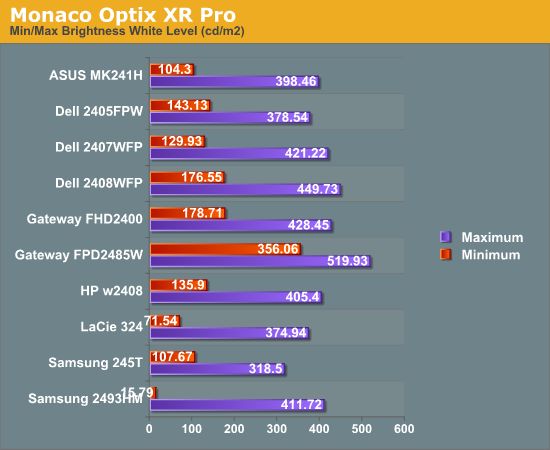

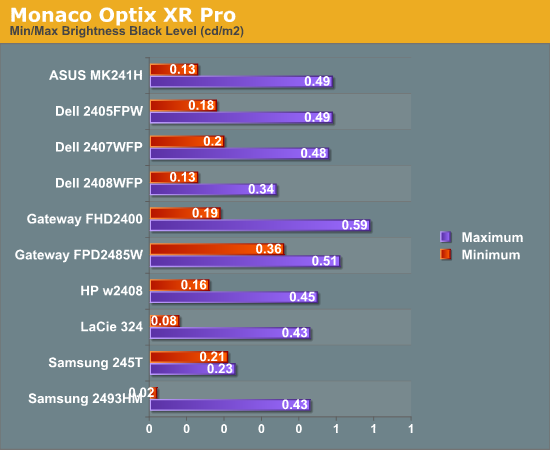

Nearly all of the LCDs have a maximum brightness level of around 400 nits, which is more than sufficient and is actually brighter than what most users prefer to use in an office environment. Minimum brightness without adjusting other settings is often above 100 nits, so it will be necessary to go in and adjust color levels as mentioned already. The Gateway FPD2485W is the prime example of this, where the default settings have a minimum brightness of 356 nits. Black levels are also reasonably consistent among the LCDs, with maximum and minimum black levels corresponding to the maximum and minimum white levels.

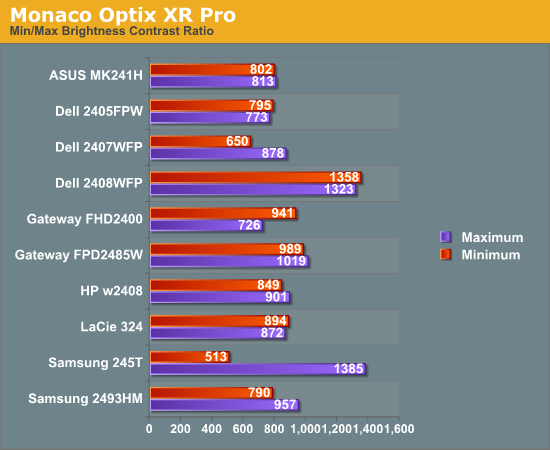

More important than the luminosity is the contrast ratio that is achievable at the various brightness settings. Here we begin to see some differences, with many of the LCDs falling in the 800:1 to 900:1 range. The Dell 2408WFP and Samsung 245T stand out as having some of the highest contrast ratios, with the Dell taking the lead as it maintains the high contrast ratio even at low brightness settings. However, we should also mention that in practice the difference between 500:1 and 750:1 really isn't very significant for most users. It's only when you fall below 500:1 that colors really start to look washed out.
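For reference, contrast ratio is simply the white luminance divided by the black luminance at the same settings. The short Python sketch below shows the calculation; the function name and the sample values are illustrative assumptions, not measurements from our charts.

```python
def contrast_ratio(white_nits, black_nits):
    """Return the contrast ratio (white luminance / black luminance)."""
    if black_nits <= 0:
        raise ValueError("black level must be greater than zero")
    return white_nits / black_nits


if __name__ == "__main__":
    # Hypothetical readings: 400 nits white with a 0.5 nit black level.
    ratio = contrast_ratio(400.0, 0.5)
    print(f"{ratio:.0f}:1")
```

Because the black level usually scales with the backlight, a display can hold roughly the same ratio across its brightness range even though both readings change.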

Color Gamut

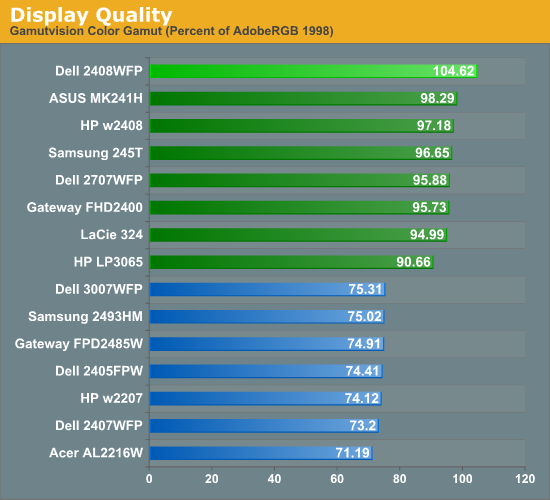

We've already discussed color gamut in the individual LCD evaluations, but it's a new addition to our LCD testing. This is something we wanted to add previously, but we lacked a good utility for generating the appropriate charts and data. We recently found out about Gamutvision, a utility developed by Imatest LLC. They were kind enough to provide us with a copy of their software, and it does exactly what we need. We compared the color profiles of all previously tested LCDs to the Adobe RGB 1998 color profile. Graphs of the individual gamut volumes are available on the evaluation pages. Below is a chart showing the percentage of the Adobe RGB 1998 gamut covered by the various displays.

We basically end up with two tiers of quality in terms of color gamut. Filling the bottom tier are mostly older displays that have 82% NTSC color gamut backlighting. These may seem drastically inferior to the newer LCDs, but keep in mind that if you are just using the standard sRGB profile these LCDs look fine. It's only when you work in applications like Adobe Photoshop with its improved color space that you begin to notice a difference between the displays. Most of the newer displays now have ~95% Adobe RGB color gamuts, and the Dell 2408WFP actually surpasses the Adobe RGB 1998 color space. The only display in this roundup that doesn't make it into the upper tier is the Samsung 2493HM.

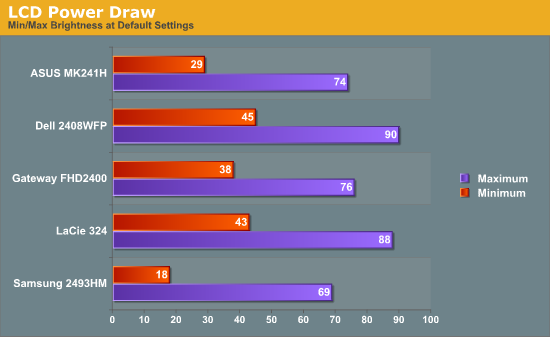

Power Requirements

Another new test we decided to add with this roundup is a quick look at power requirements. Like the above tests, power requirements are checked at default LCD settings while varying the brightness setting. Also note that minimum power requirements are going to depend largely on how dim the backlight is at the minimum setting, so looking at the above charts it shouldn't be difficult to figure out that the Samsung 2493HM will require less power than the others when it's only putting out 16 nits.

We've only begun collecting this data with this batch of LCDs, so we don't have any clear patterns established yet. However, it's interesting to note that the two S-PVA panels seem to draw slightly more power than the three TN panels. At equivalent brightness settings, the differences in power draw are very small.

89 Comments

chrisdent - Monday, May 5, 2008 - link

Should this be 16.2 million, or have they developed a new algorithm that manages to create an additional 500,000 colours from 6 bits?

JarredWalton - Monday, May 5, 2008 - link

The specs say "16.7 million", but I believe all TN panels continue to use 6-bit plus dithering. Since no one with absolute knowledge would answer the question, I put the question mark in there. I honestly can't spot the difference between true 8-bit and 6-bit plus dithering in 99% of situations; if you want best colors, though, get a PVA or IPS (or MVA) panel.

soltys - Sunday, May 4, 2008 - link

I'd like to remind about an excellent thread regarding LCDs: http://forums.anandtech.com/messageview.aspx?catid...

It's THE source of information before considering buying a new monitor. IMO.

After all the panel lotteries and problems with Dell over last few years, I'd be very careful with purchasing any of its monitors these days. You might end badly surprised...

Honeybadger - Sunday, May 4, 2008 - link

Thanks for the excellent review of the 24 inch screens. I'd been thinking about replacing my Sony CRT workhorse, and after reading your article, I went over to Dell coupons, and found a $75 off deal on the 2408 WFP for the first 150 users. Add to that an additional $18 off by using a Dell credit card and I made the purchase for $585 plus free shipping. Hugs and kisses!

homebredcorgi - Friday, May 2, 2008 - link

Okay...count me really confused as to how the lag measurements were made. How is input/output lag time being measured by comparing two monitors side by side? It seems that you are measuring a relative lag (lag as compared to some "really good" monitor) and not absolute lag where the true timing of the monitor getting a frame and then displaying it is measured. The difference between the two should be more clear as I would bet most people would see these and assume that an absolute lag was measured.

While I don't doubt the trends you have found, the numbers seem sketchy. You state that the refresh rate is 60hz, so how could you ever measure a lag below 1/60 seconds (16.67 ms) using this method? Why are all of the lag numbers multiples of 10ms? Why are the numbers so scattered? This seems like it should be something constant, unique to each monitor and very repeatable. When I see measurements being made near an absolute limit and data that doesn't appear too repeatable I question the accuracy of the measurement and how it is being made.

It seems that a true test of absolute lag would need to measure time between some input that changes the display to the monitor physically displaying the output, correct?

JarredWalton - Saturday, May 3, 2008 - link

Short of spending a lot of money on some specialized equipment (and I'm not even sure what equipment I'd need), you have to measure relative lag. Why are the results scattered rather than a constant value? Precisely because of refresh rate issues. That was the point of the relatively lengthy explanation on how we tested. If I simply chose one sample point, I could put an input lag 10ms lower or higher than the average. That was why I showed all 10 measurements - the lag does not always seem to be constant for various reasons.

Why don't I measure with something that's more accurate than 10ms? I looked (for many hours, including testing), and was unable to find a timing utility that offered better resolution. I found quite a few timers that show seconds out to .001, but they don't really have that level of accuracy. Taking pictures, I was able to determine that most timing applications were only accurate to 0.054 seconds - apparently a PC hardware and/or Windows timing issue. 3DMark03 has the added advantage of showing scenes where you can see response time artifacts.

I would imagine that if I used a CRT, I might get a higher relative input lag on the LCDs - probably 10 to 20 ms more. Since the question for new display purchases is pretty much which LCD to buy and not whether to get a CRT or an LCD, relative lag compared to a good LCD seems perfectly acceptable. I'd also say that anything under 20ms is a small enough delay that it won't make a difference.

Also worth mention is that technologies like CrossFire/SLI and triple-buffering can add more absolute lag relative to user input, especially if you're using 3-way or 4-way SLI/CF with alternate frame rendering. Input sampling rates can also introduce input lag. If your frame rates aren't really high - at least 60FPS and preferably higher - you could see an extra one or two frame delay with 4-way GPU setups. And yet, I've never heard gamers complain about that, so I have to wonder if some of the comments regarding LCD lag aren't merely psychosomatic. I've certainly never noticed it without resorting to a camera with a very fast shutter speed.

homebredcorgi - Monday, May 5, 2008 - link

I completely understand about the budget constraints. However, if you are measuring something at levels close to or below the resolution of your measurement device, the results have little meaning. For instance: one monitor may have close to 9.9ms of lag and you could measure zero seven times and ten three times for an average of three. Another monitor could be around 2ms of lag and you might measure zero nine times and ten once for an average of one. These two appear to be within ~50% of each other but are actually close to 500% of each other.

While the averaging helps to reduce your error on variables that have a normal distribution you are still stuck with the low limit on resolution. I would guess these measurements have a normal dist. with a std. dev. that is in the nanoseconds.
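The arithmetic behind the two-monitor example can be checked with a short Python sketch. The reading lists are the hypothetical samples described in that example, not measured data, and the variable names are made up for illustration.

```python
# Hypothetical readings (in ms) from a timer that only resolves 10 ms steps.
readings_a = [0] * 7 + [10] * 3  # monitor assumed to have ~9.9 ms of true lag
readings_b = [0] * 9 + [10] * 1  # monitor assumed to have ~2 ms of true lag


def mean(values):
    """Plain arithmetic mean of a list of readings."""
    return sum(values) / len(values)


avg_a = mean(readings_a)
avg_b = mean(readings_b)

# Gap suggested by the averaged readings versus the gap between the assumed true lags.
measured_gap_ms = avg_a - avg_b
true_gap_ms = 9.9 - 2.0
true_ratio = 9.9 / 2.0

if __name__ == "__main__":
    print("averages:", avg_a, avg_b)
    print("measured gap (ms):", measured_gap_ms)
    print("true gap (ms):", round(true_gap_ms, 1))
    print("true ratio:", round(true_ratio, 2))
```

With these particular samples the averages sit only a couple of milliseconds apart, while the assumed true lags differ by nearly 8ms, which is the kind of distortion a coarse timer can produce in a small sample.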

I would look into avoiding the computer's timing altogether if I were to try to measure absolute lag (the computer that is generating the image, that is).

My best guess at how to measure absolute lag would be to use a physical switch that turns the monitor from black to white (or white to grey?). Along with this switch would be an LED that lights up when the switch is turned on. A high speed video camera can be used to view both the LED and monitor (I hear there's a Casio point and shoot that can do 500 fps out there now...though in reality you would want one even faster). Then measure the delay between the LED turning on and the display changing.

The only problem with this method is that it assumes whatever software is used to detect the switch going from low to high and then change the monitor output has a negligible timing difference. I *think* this would be the case, but if you want to eliminate that variable you would want to look into generating the signal on more specialized hardware.

I would guess the way this is measured by the manufacturers involves a spectrophotometer or high speed camera and some specialized hardware/software that can switch the monitor signal while logging the output from the spectrophotometer or camera and monitor's input signal at high speed. Hell maybe even a dark room and some photo diodes could get the job done instead of the spectrophotometer or camera. That would allow for some absurdly high sample rates (10khz +)...not sure about the frequency response of spectrophotometers....

Perhaps some emails to the manufacturers regarding the details of this measurement are in order?

Forgot to add - nice review overall...I'm in the market for a 24" and this helped narrow it down.

And just to annoy you more, here are some other questions to ponder:

What was the framerate of the benchmarking program? Did it ever drop under 60?

Does using the second output on a video card in clone mode just split the signal, or is it actually generating the same image twice?

Is lag (absolute or relative) a stable measurement? Could it get worse over the life of a monitor? Do the brightness/contrast settings of the monitor impact this measurement?

Good luck! Welcome to the bag of worms that is measurement systems.

JarredWalton - Monday, May 5, 2008 - link

The big problem with input lag is that a signal is sent to the LCD at 60Hz. Technically, then, it seems to me that actual lag will be either one frame, two frames, three frames, etc. Or put another way: 16.7ms, 33.3ms, 50ms, etc. Either the lag is a frame or it is not. The averages seem to bear this out: ~18ms, ~32ms, etc. If I had a better time resolution I might be able to get a closer result to one frame multiples.

Other lag is measuring something else, i.e. pixel response time, which can be more or less than one frame. I'd be curious to know precisely how some sites measure this, because to accurately determine response times requires testing of lots of transitions with sophisticated equipment. (I'm quite sure my camera isn't going to be a good tool for determining true response times.) But I'm okay with including pictures of some high-action scenes that show image persistence - and so far all of the LCDs seem to be in the 1-2 frame persistence range.

You'll note that I'm not going to make a big deal out of a display that scores 0-3ms in my testing and one that scores 3-6ms; the bigger issue is between 0-6ms and 30ms+ that we see. Certainly I'm not going to recommend a 1ms "input lag" LCD over 5ms "input lag" purely on that factor; I firmly believe that lag of under 10ms isn't noticeable and those who think it is are deceiving themselves. I also understand that there's plenty of margin for error in these tests - as much as 10ms either way, though with the averaging it should be less than 5ms.
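To get a rough feel for how much averaging helps, here is a minimal Python simulation. It assumes a deliberately simplified model: both screens show the same timer quantized to 10ms steps, the photo is taken at a random moment, and the 60Hz refresh is ignored entirely. The function names, the 5ms true lag, and the trial counts are all assumptions chosen for illustration.

```python
import random

TIMER_STEP_MS = 10.0  # assumed resolution of the on-screen timer


def measure_once(true_lag_ms, rng):
    """Simulate one photo of two screens showing the same quantized timer."""
    t = rng.uniform(1000.0, 2000.0)  # moment the photo is taken, in ms
    reference = (t // TIMER_STEP_MS) * TIMER_STEP_MS
    delayed = ((t - true_lag_ms) // TIMER_STEP_MS) * TIMER_STEP_MS
    return reference - delayed


def averaged_lag(true_lag_ms, photos, rng):
    """Average the lag read from a number of simulated photos."""
    total = sum(measure_once(true_lag_ms, rng) for _ in range(photos))
    return total / photos


def rms_error(true_lag_ms, photos, trials=2000, seed=1):
    """Root-mean-square error of the averaged result over many repeated runs."""
    rng = random.Random(seed)
    squared = 0.0
    for _ in range(trials):
        squared += (averaged_lag(true_lag_ms, photos, rng) - true_lag_ms) ** 2
    return (squared / trials) ** 0.5


if __name__ == "__main__":
    for photos in (1, 10, 50):
        print(photos, "photos:", round(rms_error(5.0, photos), 2), "ms RMS error")
```

Under this model a single photo of a 5ms lag always reads either 0 or 10, and the error of the average shrinks roughly with the square root of the number of photos. Real panels, refresh timing, and camera shutter effects will add error that the sketch does not capture.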

For the record, I am running 3DMark03 to make sure frame rates stay above 60FPS even with a 1920x1200 resolution and 4xAA. Minimum frame rate ends up being something like 80FPS, with the average generally being over 200FPS. I tried both output ports on two LCDs -- i.e. HP LP3065 on port one with the 2408WFP on port two, and vice versa -- and the results were the same within 2ms over 10 samples. (I too was worried that internally one port might get the signal first.) Long-term, I have no idea if input lag will stay constant. Considering my HP LP3065 is over a year old and still seems to hold its own against new TN panels, I'd say that the lag appears to be in the integrated circuits and not in the LCD matrices. Thus, unless you think processors can become slower over time, input lag should remain constant.

JarredWalton - Monday, May 5, 2008 - link

Thinking about it a bit more, I suppose internally the LCD could process a signal for less than 16.7ms. The problem is that you need a way to determine that delay, and since the frames are sent every 1/60s, you could have a 5ms lag that ends up showing the previous frame. So the averaging does make sense as a way to remove that influence, but I'm still not convinced that the accuracy overall is any better than around 5ms unless you're willing to take about 50 pictures and average all those results.

Rasterman - Tuesday, May 6, 2008 - link

Yes, the lag could be anything, it is not tied to refresh rate at all. Internally the display can process and buffer the image how ever long it wants.

The OP had a very good idea about how to measure it though using a high speed camera, but his suggested setup seemed pretty involved and pricey, I think I have something that is very simple, basically the only piece of special hardware you need is a high speed camera.

Set up a computer so it splits a signal to 2 monitors, 1 will be the reference and the other will be the tested, actually you could shoot as many monitors as you could split the signal. Then simply shoot them with the high speed camera and compare.