Intel's Atom Architecture: The Journey Begins

by Anand Lal Shimpi on April 2, 2008 12:05 AM EST- Posted in

- CPUs

Lower Power than Centrino

(This page is taken from our earlier look at the Intel Atom architecture)

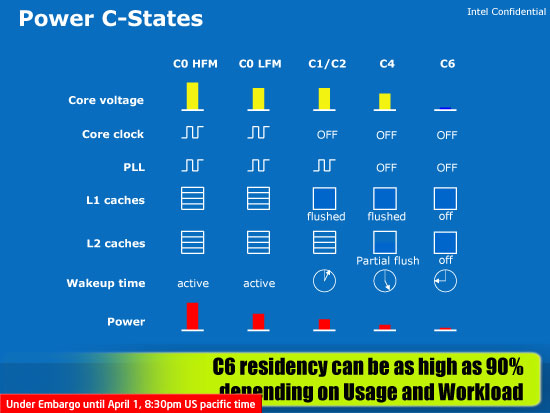

With mobile Penryn Intel introduced a new power state it calls C6. In the C6 power state the CPU is in a virtual reset state, and core voltage is very close to zero. The core clock, all of the PLLs, and caches are completely turned off. All of the state data is saved in a 10.5KB storage area, similar to mobile Penryn (but smaller since there's not as much state to save). Upon exiting C6 the processor's previous state is restored from this memory, called the C6 array. It takes around 100us to get out of C6, but the power savings are more than worth the effort - it's a similar approach of power for performance that we saw in the design of the original Pentium M processor.

Clock gating (sending the clock signal through a logic gate that can disable it on the fly, thus shutting off whatever the clock connects to) is an obvious aspect of Atom's design. All Intel processors use clock gating; Atom simply uses it more aggressively - the clock going to every "power zone" is gated, something that isn't the case in mobile Core 2. Each logic cluster (205 total) in Atom is referred to as a Functional Unit Block (FUB) and the entire chip uses what Intel calls a sea of FUB design. Each FUB is clock gated and can be disabled independently to optimize for power consumption. The cache in Atom is in its own FUB, which apparently isn't the case in mobile Core 2.

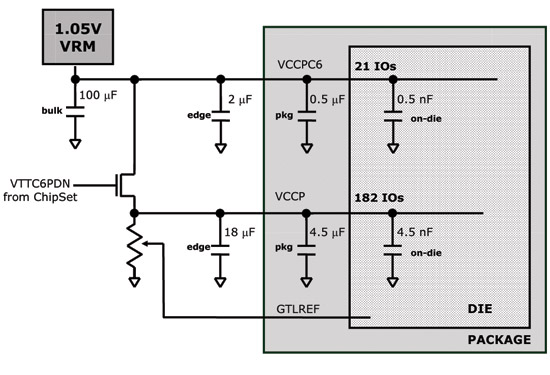

Keeping Silverthorne on life support, only 21 pins are necessary

Atom uses a split power plane; in its deepest sleep state (C6) the chip can shut off all but 21 pins which are driven by the 1.05V VRM. By having two separate power planes the chip can manage power on a more granular level. While it can't disable individual pins, it can disable large groups of them leaving only 21 active when things like the L2 cache and bus interface are powered down.

Intel mentioned that Atom will remain in its C6 sleep state 90% of the time. However, that figure is slightly misleading because it's only possible to remain in C6 when the CPU is completely idle. The 90% figure comes from taking into account a mobile device sitting in your pocket doing nothing most of the time. When in use, Atom won't be able to spend nearly that much time in C6.

Despite the implementation of a C6 power state, Atom will still lose to ARM based processors in both active and idle power. The active power disadvantage will be erased over the coming years as the microarchitecture evolves (and smaller manufacturing processes are implemented), while the idle power requires more of a platform approach. As we reported in our first Menlow/Silverthorne article:

"The idle power reduction will come through highly integrated platforms, like what we're describing with Moorestown. By getting rid of the PCI bus and replacing it with Intel's own custom low-power interface, Intel hopes to get idle power under control. The idea is that I/O ports will only be woken up when needed (similar to how the data lines on the Centrino FSB function), and what will result are platforms with multiple days of battery life when playing back music."

46 Comments

View All Comments

highlandsun - Thursday, April 3, 2008 - link

With all due respect to Fred Weber, with Atom at 47 million transistors, it's pretty obvious that the 10% figure for X86 ISA compatibility is not negligible, particularly in this performance-at-absolute-minimum-power space. Anybody using X86 in tiny embedded systems is automatically giving up a chunk of their power budget that someone using a cleaner instruction set encoding can apply directly to useful work. And as the previous poster already pointed out - source code portability is the only thing that matters to application developers, and that's a non-problem these days. Using the X86 instruction set encoding is stupid. Using it on a low-power-budget device is suicide.Jovec - Thursday, April 3, 2008 - link

I don't think the 10% reference meant 10% of all chips, but rather 10% of the current chip at the time the statement was made. In other words, x86 instruction decoding requires (roughly) a fixed amount of transistors for any chip, so the smaller the die size and larger the transistor count, less and less space is devoted to it.highlandsun - Thursday, April 3, 2008 - link

Yes, that's obvious. And it's also obvious that Atom at 47 million transistors is paying a greater proportionate cost than Core2 Duo at 410 million transistors. In 2002 when Fred made that statement, AMD's current chip was the AthlonXP Thoroughbred, with about 37 million transistors. At the same time the Pentium 4 had 55 million. Put in context, I'd guess that the Atom at 47M vs P4 at 55M has more than 10% of its resources devoted to X86 decoding.Also, Fred's statement in 2002 didn't take into account the additional complexity introduced by the AMD64 instruction extensions, where now a single instruction may be anywhere from 1 to 16 bytes long. Given that you're doing a completely clean ground-up chip design in the first place, it would have made more sense (from both a power budget and real estate perspective) to design a clean, orthogonal, uniform-length encoding at the same time.

Cross-platform ABI compatibility is stupid in the context they're aiming for; nobody is going to run their PC version of Crysis or MSWord on their cellphone. All that matters is API compatibility. With a consistent API, you can still run a separate binary translator if you really really want to move a desktop app to your mobile device but in most cases it would be a bad idea because a desktop app is unlikely to take advantage of power-saving APIs that would be important on a mobile. I.e., most of the time you're going to want purpose-built mobile apps anyway.

floxem - Tuesday, April 15, 2008 - link

I agree. But it's Intel. What do you expect?maree - Thursday, April 3, 2008 - link

I dont think MS will be ready before Windows 7 is released, which is another 3-5 years... and might coincide with Moorestown. Microsoft started work on WindowsLite only after releasing Vista. Vista is bloatware as of now. As of now MS has to rely on crippled versions of XP and Vista like starter and home, which is not very ideal.Apple and Linux are going to have a free run till then...

TA152H - Wednesday, April 2, 2008 - link

Bringing up the Pentium is a little strange, because the whole market is completely different.The Pentium wasn't supposed to be for everyone when it came out. The processor market was different back then where previous generations lasted a long, long time. The Pentium wasn't supposed to replace the 486 right away, or even quickly, and being huge and a terrible power hog was acceptable because the initial iteration was just for a very small group of people who absolutely needed it. The original Pentium had a lot of problems, and struggled badly to reach 66 MHz, so they sold most of their processors at 60 MHz. The second generation was intended more for mainstream.

Nowadays the latest generation replaces the earlier much more quickly, and has to cover more market segments more quickly. I still remember IBM releasing new machines for the 8086 in 1987. That's 9 years after the chip was made. It's just a different market.

The Pentium is nothing like the Silverthorne though, and it's a strange comparison. The Pentium executed x86 instructions, it wasn't decoupled. It also had both pipes, the U and V, lockstepped, which is limitation the Silverthorne doesn't have.

Saying the Pentium Pro was the first processor that allowed out of order processing is strange indeed. The only other processor this would have made sense with was the Pentium, since it was the only previous processor that was superscalar. So, they only made one in order processor, and then went to out of order with the next. It's difficult to see the extrapolation from this that it will be five years or more before Silverthorne goes out of order. It might be that long, but the backwards reference shouldn't be used to back that; it does more to contradict it.

Anand Lal Shimpi - Wednesday, April 2, 2008 - link

The Pentium reference was merely to show that what was once a huge, 300mm^2 design could now be built on a much, much smaller scale. And starting from scratch it's now possible to build something in-order that's significantly faster.The Pentium was an obvious comparison given that it was Intel's last two-issue in-order design, but I didn't mean to imply anything beyond that.

It won't be too long before we'll be able to have something the speed of a Core 2 in a similarly small/cool running package as well :)

Take care,

Anand

fitten - Wednesday, April 2, 2008 - link

I remember back in the days of the Mac FX we talked about 'what ifs' like making a 6502 with the (then) modern process technologies and how fast would it run. I wonder what about now :)crimson117 - Wednesday, April 2, 2008 - link

I am SO going to hold you to that! But I can only hope "won't be long" will mean within 12 months rather than within 12 years :P

Especially after my fiasco mounting a Freezer 7 Pro on an Abit IP35-E, I'd love if a heatsink weren't even necessary.

Anand Lal Shimpi - Wednesday, April 2, 2008 - link

12 months won't be a reality unfortunately :) But look at it this way, the first Pentium M came out in 2003? And 5 years later we're able to have somewhat comparable performance with the Atom processor.I'm really curious to see what happens with Atom on 32nm...