Quad SLI with 9800 GX2: Pushing a System to its Limit

by Derek Wilson on March 25, 2008 9:00 AM EST. Posted in GPUs

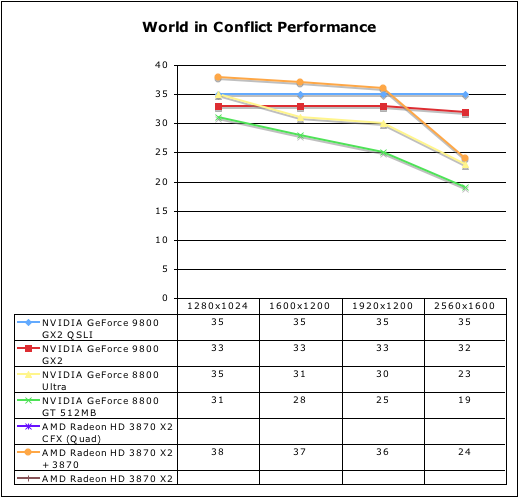

Do we see the same thing with World in Conflict?

World in Conflict is also system bound. In our overclock test, we did not see any improvement in performance using the built-in benchmark. This indicates that the limitation is coming from somewhere else. Our platform swap did give us almost 10% improvement but our performance “curve” was still just as flat, meaning we’re still limited somewhere.
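The reasoning above (a flat performance curve plus no gain from overclocking points to a limit outside the graphics cards) can be sketched in a few lines of Python. This is only an illustrative sketch: the function names, the 5% tolerance, and the frame rates in the usage note are hypothetical placeholders, not measurements from this review.

```python
def is_flat_curve(fps_by_resolution, tolerance=0.05):
    """Return True when frame rate barely changes across resolutions.

    fps_by_resolution maps a resolution label to an average frame rate.
    tolerance is a hypothetical threshold (5% by default): if the gap
    between the best and worst result is within it, the curve is "flat".
    """
    rates = list(fps_by_resolution.values())
    if not rates or max(rates) <= 0:
        raise ValueError("need at least one positive frame rate")
    spread = (max(rates) - min(rates)) / max(rates)
    return spread <= tolerance


def relative_gain(before_fps, after_fps):
    """Fractional improvement when moving from one setup to another."""
    if before_fps <= 0:
        raise ValueError("before_fps must be positive")
    return (after_fps - before_fps) / before_fps


def looks_system_bound(fps_by_resolution, gpu_overclock_gain, tolerance=0.05):
    """Heuristic: flat curve AND no benefit from a faster GPU clock."""
    return (is_flat_curve(fps_by_resolution, tolerance)
            and gpu_overclock_gain <= tolerance)
```

As a usage example with made-up numbers, `looks_system_bound({"1280x1024": 40.0, "1920x1200": 39.0, "2560x1600": 38.5}, gpu_overclock_gain=0.0)` returns `True`, while a curve that falls steadily as resolution rises would return `False` and suggest the graphics cards are the limit instead.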

With the type of high-end hardware now in our hands that has enabled us to expose such limitations, we still have a lot of investigating to do before we better understand the issues. It is clear that the benchmark for World in Conflict is much harder on the system than actual gameplay, so we will try out some in-game tests to see if we can’t make any more sense of this one. For now, here’s what we see on Skulltrail.

54 Comments


DerekWilson - Wednesday, March 26, 2008

we've looked at using the hp blackbird ... the major reason i want to use skulltrail is to compare crossfire to sli.

there are plenty of reasons i'd rather use another platform, but i'd love it if either AMD or NVIDIA would take a step outside of the box and enable either their platform to support other multiGPU configurations or enable their drivers and cards to run in multiGPU configurations on other platforms.

7Enigma - Tuesday, March 25, 2008

It's a slippery slope. We want to be able to say Nvidia is better than ATI (or vice versa), or that future scaling due to architecture will make A better than B. The truth as you pointed out is that it doesn't happen this way in all cases. You can have an A64 3200+ (like I currently do) and throw a GX2 at it and it probably won't perform better than a 9600GT. But that doesn't mean it's an equal card, just that in a particular situation it performed the same. It's up to the buyer to do their homework and figure out whether it's worth it to drop $650 when their system in current $$$ might not be worth the price of the card....

Hocp tried this tactic with the launch of the C2D's and got a lot of heat (I agreed with the anger). They were trying to show that most games are GPU limited and so the new CPU's showed no benefit. Of course it was only a small selection of games, and they didn't take into consideration the 2 largest cpu-straining genres (RTS and flight sim), and they were running at very high resolutions (which obviously would make things GPU bound).

One of gamespot.com's good features is in their game coverage (pretty much all I use them for). They put out hardware guides that do exactly what you want. They take a specific game and throw a battery of systems at it to see what makes a difference. Those guides will show you just how much improvement you can expect when going from a certain graphics card to a better one on a specific cpu platform. You'll be able to see whether your cpu/mobo combination is at the end of its usable lifespan (ie upgrading other components such as GPU/ram no longer yield a large improvement due to cpu/other bottleneck).

I would say while like you I read these reviews knowing I'll likely never own one of these cards/cpu's, it does give a good picture of who is at the top, and therefore, who has the potential to outlast the other in a system before an upgrade is needed.

With all that said, there is clearly a major problem going on and so all the data generated in this and possibly the previous single GX2 review also benchmarked on the skulltrail platform needs to be taken with a grain of salt, realizing that the numbers could very likely be invalid.

DerekWilson - Tuesday, March 25, 2008

I doubt there is a major problem that would invalidate the data ... and I'm not just saying that because I spent weeks testing and troubleshooting :-)

certainly something is going on, but in spite of the fact that 780i performs a bit higher, performance characteristics are exactly the same -- if there is an issue with my setup it is not platform specific.

I'm still tracking issues though ...

chizow - Tuesday, March 25, 2008

Nice job on the review Derek, looks like you ran into some problems but I'd guess testing these new pieces of hardware makes it worthwhile.

It really looks like Quad SLI scaling is really poor right now, do you think it's a case of drivers needing to mature, CPU bottleneck, or frame buffer limitations? I know Vista should be maxed at 4 frame buffers, but there seems to be very little scaling beyond a single GX2 in everything except Crysis (and COD4). In some games, performance actually decreases with the 2nd GX2.

Also, seeing the massive performance difference between Skulltrail and 780i, is it even worthwhile to continue using Skulltrail as a test platform? I understand it makes it more convenient for you guys to test between different GPU vendors, but a 25% difference in Crysis between an NV SLI solution and Intel's SLI solution is rather drastic, and that's *after* you factor in the 2nd CPU for Skulltrail. Does ATI suffer a similar performance hit when compared against its best performing chipset platform?

I would've liked to have seen Tri-SLI compared in there. Personally I think Tri-SLI with 8800 GTX/Ultra and soon, 9800 GTX will outperform Quad-SLI as it seems the drivers are a bit more mature for Tri-SLI and scaling was better as well. SLI performance with those parts is slightly better already than the GX2 and adding that third card should give Tri-SLI the lead over Quad-SLI.

Lastly, how was your actual gameplay experience with these high-end parts? Micro-stutter is a buzz word that has been gaining steam lately with multi-GPU solutions. Did you notice any in your testing? It looks like frame buffer size really kills all of these 512MB parts at 2560, would you consider games at that resolution unplayable? It seems many who considered 2-GX2 or 2-X2 would have done so to play at 2560. If that resolution is unplayable, you're looking at an even smaller window of consumers that would actually buy and benefit from such an expensive set-up.

seamusmc - Wednesday, March 26, 2008

chizow check out Hard OCP's review in regards to 'micro' stutter. They do a great job of presenting the issue and how it affects gameplay.

They feel the problem is due to the smaller amount of memory/memory bandwidth on the GX2 as opposed to an 8800 GTX/Ultra.

DerekWilson - Wednesday, March 26, 2008

in my gameplay experience, i had no difficulty with micro stutter and the 9800gx2 in quad sli.

i will say that i have run into the problem on crossfirex in oblivion with 4 way at very high res. it wasn't that pronounced or detrimental to the overall experience to me, but i'll make sure to mention it when i run into this problem in the future.

cactusjack - Tuesday, March 25, 2008

Nvidia should go back to making good stable video cards with good IQ instead of flexing their e muscles with crap like this that no one will ever want or need. Nvidia had problems with power issues and vista driver issues on 8 series cards (G92) that they should be working on.

raymondse - Tuesday, March 25, 2008

Crysis this, Crysis that. SLI this, CrossFire that...

After reading almost a dozen reviews of SLI, Tri-SLI, Quad-SLI, and CrossFire running Crysis and a handful of other games, it seems that there is something terribly wrong with all the benchmarks. Test results show that raw multi-GPU horsepower, even when coupled with multi-CPUs, just isn't delivering the kinds of numbers that most of us were expecting. The potential computing power that this kind of hardware can deliver just doesn't show in the numbers. Something is really, really wrong with one of these components that's disrupting the whole point of going for more than one CPU/GPU.

What I'd like to see is some definitive study showing where the problem(s) is and who to blame. Is it the CPU? GPU? Memory? System Bus? PCI-E? Drivers? DirectX? Windows? or the game/application itself?

After all these tests and benchmarks run by really, really smart people, someone out there ought to be able to deduce who messed up in all this business.

Das Capitolin - Wednesday, April 2, 2008

What I dislike about many reviews, is that they test Crysis on "HIGH" settings. There's a major difference between "HIGH" which doesn't use AA, and HIGH with 16x Q AA.

Here's an example of the difference it makes.

http://benchmarkreviews.com/index.php?option=com_c...
