More Single Card Dual GPU Madness: NVIDIA's Flagship 9800 GX2

by Derek Wilson on March 18, 2008 9:00 AM EST - Posted in GPUs

The 9800 GX2 Inside, Out and Quad

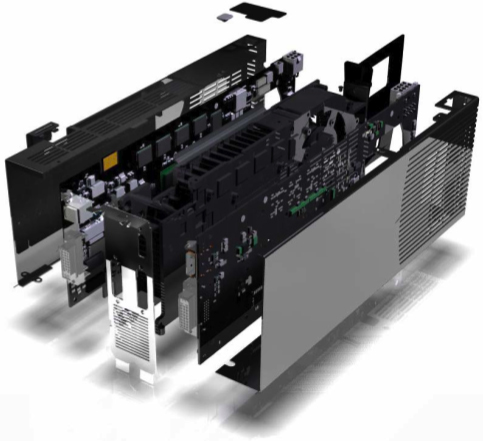

The most noticeable thing about the card is the fact that it looks like a single PCB with a dual slot HSF solution. Appearances are quite deceiving though, as on further inspection, it is clear that there are really two PCBs hidden inside the black box that is the 9800 GX2. This is quite unlike the 3870 X2, which puts two GPUs on the same PCB, but it isn't quite the same as the 7950 GX2 either.

The special sauce on this card is the fact that the cooling solution is sandwiched between the GPUs. Having the GPUs actually face each other is definitely interesting, as it helps make the look of the solution quite a bit more polished than the 7950 GX2 (and let's face it, for $600+ you expect the thing to at least look like it has some value).

NVIDIA also opted not to put both display outputs on one PCB as they did with their previous design. The word on why: it is easier for layout and cooling. This adds an unexpected twist in that the DVI connectors are oriented in opposite directions. Not really a plus or a minus, but it's just a bit different. Moving from ISA to PCI was a bit awkward with everything turned upside down, and now we've got one of each on the same piece of hardware.

On the inside, the GPUs are connected via PCIe 1.0 lanes in spite of the fact that the GPUs support PCIe 2.0. This is likely another case where cost-benefit analysis led the way and upgrading to PCIe 2.0 didn't offer any real benefit.

Because the 9800 GX2 is G9x based, it also features all the PureVideo enhancements contained in the 9600 GT and the 8800 GT. We've already talked about these features, but the short list is the inclusion of some dynamic image enhancement techniques (dynamic contrast and color enhancement), and the ability to hardware accelerate the decode of multiple video streams in order to assist in playing movies with picture in picture features.

We will definitely test these features out, but this card is certainly not aimed at the HTPC user. For now we'll focus on the purpose of this card: gaming performance. It is worth mentioning, though, that the cards coming out at launch are likely to all be reference design based, and thus they will all include an internal SPDIF connector and an HDMI output. Let's hope that next time NVIDIA puts these features on cards that really need them.

Which brings us to Quad SLI. Yes, the beast has reared its ugly head once again. And this time around, under Vista (Windows XP is still limited by a 3 frame render ahead), Quad SLI will be able to implement a 4 frame AFR mode for some blazing fast speed in certain games. Unfortunately, we can't bring you numbers today, but when we can we will absolutely pit it against AMD's CrossFireX. We do expect to see similarities with CrossFireX in that it won't scale quite as well when we move from 3 to 4 GPUs.
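To make the AFR point concrete, here is a minimal sketch of how alternate frame rendering hands out frames and why a render-ahead cap matters. It is a simplified model of our own, not NVIDIA's driver logic: the function names `afr_schedule` and `gpus_kept_busy` are hypothetical, and treating the render-ahead limit as a hard ceiling on the number of GPUs that can work at once is an assumption made for illustration.

```python
def afr_schedule(num_frames, num_gpus):
    """Assign frames to GPUs round-robin, as in alternate frame rendering.

    Returns a list where entry i is the index of the GPU rendering frame i.
    """
    return [frame % num_gpus for frame in range(num_frames)]


def gpus_kept_busy(num_gpus, render_ahead_limit):
    """Simplified model (an assumption, not driver behavior): each GPU works
    on its own frame, so the number of frames allowed in flight caps how
    many GPUs can render at the same time.
    """
    return min(num_gpus, render_ahead_limit)


# Four GPUs under 4-frame AFR: every GPU gets every fourth frame.
print(afr_schedule(8, 4))

# With a 3-frame render-ahead limit, only three GPUs fit in this model.
print(gpus_kept_busy(4, 3))
```

In this model a 3-frame limit leaves the fourth GPU without a frame of its own, which is the reason a 4 frame AFR mode needs the larger queue available under Vista.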

Once again, we are fortunate to have access to an Intel D5400XS board on which we can compare SLI to CrossFire on the same platform. While 4-way solutions are novel, they certainly are not for everyone, especially when the pair of cards costs between $1200 and $1300. But we are certainly interested in discovering just how much worse price / performance gets when you plug two 9800 GX2 cards into the same box.

It is also important to note that these cards come with hefty power requirements, and using a PCIe 2.0 power supply is a must. Unlike the AMD solutions, it is not possible to run the 9800 GX2 with a 6-pin PCIe power connector in the 8-pin PCIe 2.0 socket. NVIDIA recommends a 580W PSU with PCIe 2.0 support for a system with a single 9800 GX2. For Quad, they recommend 850W+ PSUs.

NVIDIA notes that some PSU makers have built their connectors a little out of spec so the fit is tight. They say that some card makers or PSU vendors will be offering adapters but that future power supply revisions should meet the specifications better.
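For a rough sense of why the 8-pin connector matters, the sketch below adds up the power ceilings the PCI Express specifications allow: 75W from the slot, 75W per 6-pin connector and 150W per 8-pin connector. The connector count used in the example (one 6-pin plus one 8-pin) is an assumption on our part and is not stated above, and these are specification limits rather than measured draw. The helper name `board_power_ceiling` is hypothetical.

```python
# Power delivery ceilings defined by the PCI Express specifications (watts).
SLOT_W = 75        # x16 graphics slot
SIX_PIN_W = 75     # 6-pin auxiliary connector
EIGHT_PIN_W = 150  # 8-pin auxiliary connector


def board_power_ceiling(six_pin, eight_pin):
    """Maximum in-spec power available to a card with the given connectors."""
    return SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


# Assumed layout for illustration: one 6-pin and one 8-pin connector.
with_eight_pin = board_power_ceiling(six_pin=1, eight_pin=1)

# Same card if the 8-pin socket were fed by a second 6-pin cable instead.
with_six_pin_only = board_power_ceiling(six_pin=2, eight_pin=0)

print(with_eight_pin, with_six_pin_only)
```

Under these assumptions, swapping the 8-pin cable for a 6-pin one removes 75W of in-spec headroom, which is consistent with a card that refuses to run that way.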

As this is a power hungry beast, NVIDIA is including its HybridPower support for 9800 GX2 when paired with a motherboard that features NVIDIA integrated graphics. This will allow normal usage of the system to run on relatively low power by turning off the 9800 GX2 (or both if you have Quad set up), and should save quite a bit on your power bill. We don't have a platform to test the power savings in our graphics lab right now, but it should be interesting to see just how big an impact this has.

50 Comments


iceveiled - Tuesday, March 18, 2008 - link

Probably the reason why a dual 8800 GT wasn't tested is because the mobo in the setup doesn't support SLi (it's an intel mobo)....

chizow - Tuesday, March 18, 2008 - link

It's Skull Trail, which does support SLI (they actually mention a GX2 SLI, i.e. Quad SLI, review upcoming). More likely NV put an embargo or warning on direct 8800GT/GTS comparisons so the spotlight didn't shift to artificial clock speed and driver discrepancies. After all, they do want to sell these abominations. ;)

madgonad - Tuesday, March 18, 2008 - link

You clearly aren't paying attention to the market. A lot of people who cling to their PC gaming experience would also like to move their PC into the living room so that they can experience the big screen + 5/7.1 surround sound like their console brethren. The new Hybrid power and graphics solutions will allow a HTPC to have one of these Beasts as a partner for the onboard graphics. When watching movies or viewing the internet, this beast will be off and not making heat or noise. But once Crysis comes on, so does the discrete video card and it is off to the races. I have been waiting for the market to mature so that I can build a PC that games well, holds all my movies, and TiVos my shows - all in one box. All that I am waiting for is a Bitstream solution for the HD audio - which are due in Q2.

JarredWalton - Tuesday, March 18, 2008 - link

That's true... but the HybridPower + SLI stuff isn't out yet, right? We need 790g or some such first. I also seem to recall NVIDIA saying that HybridPower would only work with *future* NVIDIA IGPs, not with current stuff. So until we have the necessary chipset, GPU, and drivers I for one would not even think of putting a 9800 GX2 into an HTPC. We also need better HDMI audio solutions.

Anyway, we're not writing off HTPC... we're just saying that for the vast majority of HTPC users this isn't going to be the ideal GPU. As such, we focused on getting the gaming testing done for this article, and we can talk about the video aspects in a future article. Then again, there's not much to say: this card handles H.264 offload as well as the other G92 solutions, which is good enough for most HTPC users. Still need better HDMI audio, though.

casanova99 - Tuesday, March 18, 2008 - link

While this is most likely a G92 variant, this isn't really akin to an SLI 8800GT setup, as the 8800GT has 112 shaders and 56 texture units. This card has 256 (128 * 2) shaders and 128 (64 * 2) texture units.

It seems to match more with a 8800GTS 512MB, but with an underclocked core and shaders, paired with faster memory.

chizow - Tuesday, March 18, 2008 - link

While this is true, it only perpetuates the performance myths Nvidia propagates with its misleading product differentiation. As has been shown time and time again, the differences in shaders/texture units with G92 have much less impact on performance compared to core clock and memory speeds. There's lots of relevant reviews with same-clocked 8800GT vs GTS performing nearly identically (FiringSquad has excellent comparisons), but you really need to look no further than the 9600GT to see how overstated specs like shaders are with current gen GPUs. If you dig enough you'll find the info you're looking for, like an 8800GT vs 8800GTS both at 650/1000 (shocker, 9800GTX is expected to weigh in at 675/1100). Problem is most reviews will take the artificial stock clock speeds of both and compare them, so 600/900 vs 650/1000 and then point to irrelevant core differences as the reason for the performance gap.

hooflung - Tuesday, March 18, 2008 - link

Well, I am really disappointed in this review. It seems almost geared towards being a marketing tool for Nvidia. So it might be geared towards HD resolutions, what about the other resolutions? If AMD is competitive at 1680x1050 and 1900x1200 for ~200+ dollars less would the conclusion have been less favorable and start to nitpick the sheer awkwardness of this card? Also, I find it disturbing that 9600GTs can do nearly what this thing can do, probably at less power (who knows, you didn't review power consumption like every other card reviewer) and cost half as much.

To me, Nvidia is grasping at straws.

Genx87 - Tuesday, March 18, 2008 - link

Eh? Grasping at straws with a solution that at times is clearly faster than the competition? This is a pretty solid single card offering if you ask me. Is it for everybody? Not at all. High end uber cards never are. But it definitely took the crown back from AMD with authority.

hooflung - Tuesday, March 18, 2008 - link

A single slot solution that isn't much better than a SLI 9600GT setup at those highest of high resolutions. Not for everyone is the understatement of the year. Yes I can see it is the single fastest on the block but at what cost? Another drop in the hat of a mislabeled 8 series product that is in a package about as illustrious as a cold sore.

This card is a road bump. The article is written based on a conclusion that Nvidia cannot afford, and we cannot afford, to have a next generation graphics processor right now. To me, it smacks of laziness. Furthermore, gone are the times of 600 dollar graphics cards I am afraid. I guess Nvidia employees get company gas cards so they don't pay 3.39 a gallon for gasoline.

How does this card fare for THE MAJORITY of users on 22" and 24" LCDs? I don't care about gaps at resolutions I need a 30" or HDTV to play on.

Genx87 - Tuesday, March 18, 2008 - link

Sounds like you have a case of sour grapes. Don't get so hung up on AMD's failings. I know you guys wanted the x2 to trounce or remain competitive with this "bump". But you have to remember AMD has to undo years of mismanagement at the hands of ATI's management.

600 dollar cards keep showing up because they sell. Nobody is forcing you to buy one.