ATI Radeon HD 3870 & 3850: A Return to Competition

by Anand Lal Shimpi & Derek Wilson on November 15, 2007 12:00 AM EST, posted in GPUs

New Features, You Say? UVD and DirectX 10.1

As we mentioned, new to RV670 are UVD, PowerPlay, and DX10.1 hardware. We've covered UVD quite a bit before now, and we are happy to learn that UVD is now part of AMD's top-to-bottom product line. To recap, UVD is AMD's video decode engine, which handles decode, deinterlacing, and post-processing for video playback. The key feature of UVD is full decode support for both VC-1 and H.264. MPEG-2 decode is also supported, but the entropy decode step is not performed in hardware for MPEG-2 video. The advantage over NVIDIA hardware is the inclusion of entropy decode support for VC-1 video, but AMD tends to overplay this. VC-1 is lighter weight than H.264, and the entropy decode step for VC-1 doesn't make or break playability even on lower end CPUs.

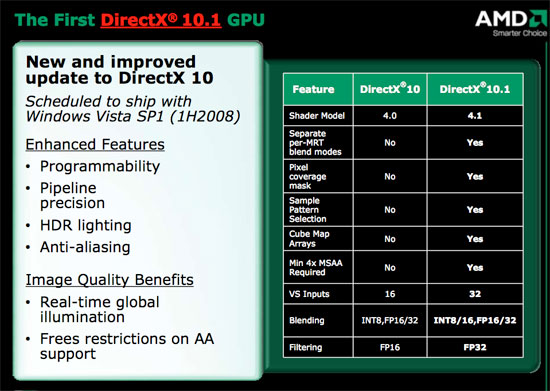

DirectX 10.1 is basically a release of DirectX that clarifies some functionality and adds a few features. Both AMD's and NVIDIA's DX10 hardware support some of the DX10.1 requirements, but since they don't support everything, they can't claim DX10.1 as a feature. And because DX10 did away with capability bits, a game has no way to query which individual DX10.1 features a DX10 part happens to support, so developers can't rely on any of them being available on DX10 hardware.

It's good to see AMD embracing DX10.1 so quickly, as it will eventually be the way of the world. The new capabilities DX10.1 enables are: enhanced developer control over AA sample patterns and pixel coverage, blend modes that can be set per render target rather than shared across all of them, double the number of vertex shader inputs, required fp32 filtering, and support for cube map arrays, which can help make global illumination algorithms faster. These features might not make it into games very quickly, as we're still waiting for games that really push DX10 as it is now. But AMD is absolutely leading NVIDIA in this area.

Better Power Management

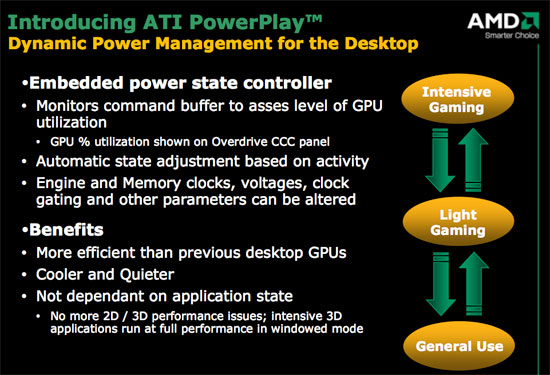

As for PowerPlay, which is usually found in mobile GPUs, AMD has opted to include broader power management support in their desktop GPUs as well. While they aren't able to wholly turn off parts of the chip, they do use clock gating, as well as dynamic adjustment of core and memory clock speeds and voltages. The command buffer is monitored to determine when power saving features need to be applied. This means that when applications need the power of the GPU it will run at full speed, but when less is going on (or even when something is CPU limited) we should see power, noise, and heat characteristics improve.

One of the cool side effects of PowerPlay is that clock speeds are no longer determined by application state. On previous hardware, 3D clock speeds were only enabled when a fullscreen 3D application started. This meant that GPU computing software (like Folding@home) only ran at 2D clock speeds. Since these programs will no doubt fill the command queue, they will now get full performance from the GPU. This also means that games run in a window will perform better, which should be good news for MMO players everywhere.
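To make the difference between the old and new clocking behaviour concrete, here is a minimal Python sketch. It is purely illustrative: AMD has not published PowerPlay's internals, so the clock states, the MHz values, and the occupancy thresholds (`busy`, `light`) are hypothetical, and "command buffer occupancy" stands in for whatever load signal the driver actually monitors.

```python
from dataclasses import dataclass

# Hypothetical clock states as (core MHz, memory MHz).
# These numbers are placeholders for illustration, not RV670 specifications.
CLOCK_STATES = {
    "idle": (300, 500),
    "light": (500, 800),
    "full": (775, 1125),
}


@dataclass
class GpuSample:
    fullscreen_3d: bool      # is a fullscreen 3D application running?
    buffer_occupancy: float  # fraction of the command buffer in use, 0.0 to 1.0


def legacy_policy(sample: GpuSample) -> str:
    """Older behaviour: 3D clocks only while a fullscreen 3D app runs."""
    return "full" if sample.fullscreen_3d else "idle"


def load_based_policy(sample: GpuSample, busy: float = 0.6, light: float = 0.1) -> str:
    """PowerPlay-style behaviour (hypothetical sketch): choose clocks from
    command buffer load, whether the application is fullscreen or windowed."""
    if sample.buffer_occupancy >= busy:
        return "full"
    if sample.buffer_occupancy >= light:
        return "light"
    return "idle"


def clocks(policy, sample: GpuSample) -> tuple:
    """Map the state chosen by a policy to its (core, memory) clocks."""
    return CLOCK_STATES[policy(sample)]
```

With a windowed GPU computing client that keeps the queue full, e.g. `GpuSample(fullscreen_3d=False, buffer_occupancy=0.95)`, `legacy_policy` returns `"idle"` while `load_based_policy` returns `"full"`, which is the behaviour change described above. A real governor would presumably also add hysteresis so clocks don't flap between states; that is left out here for brevity.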

As we said, releasing 55nm parts less than a year after the first 65nm hardware is a fairly aggressive schedule, and the die shrink is one of the major benefits of the 3800 series and an enabler of the kind of performance this hardware is able to deliver. We asked AMD about their experience with the transition from 65nm to 55nm, and their reply was something along the lines of: "we hate to use the word flawless... but we're running on first silicon." It seems moving this fast surprised even AMD, but it's great when things fall into line. This terrific execution has put AMD back on level footing with NVIDIA in terms of release schedule and performance segment. Coming back from the R600 delay to hit the market in time to compete with the 8800 GT is a huge thing, and we can't stress it enough. To spoil the surprise a bit, AMD did not outperform the 8800 GT, but this schedule puts AMD back in the game. At this point, top performance is secondary to solid execution, great pricing, and high availability. Good price/performance and a higher level of competition with NVIDIA than R600 delivered will go a long way toward reestablishing AMD's position in the graphics market.

Keeping in mind that this is an RV GPU, we can expect that AMD has been working on a new R series part in parallel. It remains to be seen whether this part materializes and what it will be, but hopefully it will put AMD back in the fight at the high end.

Right now, all that AMD has confirmed is a single-slot, dual-GPU 3800 series part slated for next year, which makes us a little nervous about the prospect of a solid high end single GPU product. But we'll have to wait and see what's in store for us when we get there.

117 Comments


Locut0s - Thursday, November 15, 2007

1) Only Vista was used, though XP has a lot larger user base.

You answered your own question there. Remember, this card is aimed at the midrange, not the enthusiast, and even more of these consumers are using XP.

2) Limited variety of games.

The games covered though are all the important big names that actually stress these cards and show what they are made of.

3) Limited variation of AF/AA

See Anand's reply above

4) No UVD tests.

You can see previous reviews to see UVD performance. I doubt this has changed at all since the hardware is identical.

NullSubroutine - Thursday, November 15, 2007

I was saying XP should have been benchmarked because it has the largest user base, and most people, especially at this price range, will be using XP.

When you limit the number of games benchmarked, you do not show an accurate picture of a video card's performance; it has been shown that certain games play better on certain cards. Some sites only do reviews with games that are biased towards a certain brand or GPU. I expect that AnandTech is not one of those sites, and I expect a variety of games that show the true performance of the cards.

Locut0s - Thursday, November 15, 2007

Sorry, I misread your question about XP/Vista. Yes, they could test on XP. However, it has been shown that the performance difference between the two is fairly small now and is in XP's favour, meaning that games should run as well as or better than what they show here.

NullSubroutine - Thursday, November 15, 2007

I would have to disagree. There was at least a 20 to 25 percent difference between XP at very high settings and Vista at very high settings in Crysis. If you look at any number of games, there is still a performance deficit between XP and Vista; while the gap is shrinking, it is still very pronounced.

Anand Lal Shimpi - Thursday, November 15, 2007

I think the real solution to the XP/Vista issue is to do a separate article looking at driver performance in XP vs. Vista. Derek was working on such a beast before the 8800 GT launched, and as far as I remember he found that with the latest driver releases there's finally performance parity between the OSes (and between 32-bit/64-bit versions of Vista as well, interestingly enough).

As far as more titles go, we tried to focus on the big game releases that people were more likely upgrading their hardware for. Time is always limited with these things, but do you have any specific requests for games you'd like to see included? As long as they aren't overly CPU limited we can always look at including them.

I'll have to confirm with Derek, but I believe UVD performance hasn't changed since our last look at UVD with these GPUs: http://www.anandtech.com/showdoc.aspx?i=3047

Thanks for the suggestions, I aim to please so keep it coming :)

Take care,

Anand

NullSubroutine - Friday, November 16, 2007

The biggest discrepancy I have seen between all the reviews has been the drivers used (especially if you take into consideration the difference from, say, XP to Vista 64-bit, and new to old drivers).

Many review sites are using the drivers that came on the discs, 8.43 or 8.44, which are supposed to be out Nov 15th for download (I couldn't find them on AMD's site earlier today). These new drivers (which must be beta drivers) seem to give a huge boost in performance for the 3800 series.

What I cannot figure out is why they test the 3800 series with 8.43/8.44 but the 2900s with 7.10. So it's hard to tell whether the newer drivers are good for the HD series in general or more specific to the 3800s.

Has Anand tested the different drivers?

Lonyo - Thursday, November 15, 2007

They can't really test in XP that easily. Either they test in Vista, or they test in Vista AND XP (to be able to run DX10 benchmarks).

I expect it's just easier to do all the tests in one OS, rather than having half run in Windows XP, except for DX10 which they run in Vista.

MGSsancho - Thursday, November 15, 2007

I agree with you on that. I think there will be another UVD article later, looking at nothing but which video cards can offload which parts of video decode and what minimum CPU an HTPC needs to run HD movies. XP would be cool.

But Anand, could you do a 32-bit vs. 64-bit comparison? I know you mentioned it in the article, but could you do 1GB, 2GB, 4GB, and 8GB configs? Maybe current games with a single core (the AMD FX-57, the old king), then a dual core, then a quad core? I bring up 8GB for a reason: nowadays we can get 2GB DIMMs, and some of us use our computers for other tasks, like running a few virtual machines minimized. We minimize our work, game for a 30 minute break, then go back to work. Or maybe we're running Apache for a home website, or many other tasks that simply eat up RAM (leaving FF open for weeks).

I'm not asking for a dual-socket god machine, but with current mobos it's possible to run 8GB of RAM. Thanks for reading this, and take care.

Locut0s - Thursday, November 15, 2007

With all the buzz in the CPU world nowadays being about more cores and not more MHz, it's interesting to see that the latest graphics cards have been all about more MHz and more features. It seems to me that it's in the graphics card world that more cores would make the most sense, given the almost infinite scalability of rendering. Instead of making the next generation of GPUs more and more complex than the previous generation, why not work instead on making these GPUs work together better? Then your next generation card could just be 4 or 5 of the current generation GPUs on the same die or card. Think of it: if they can get the scaling and drivers down pat, then you could churn out blazingly fast cards just by adding more cores to the card. And as long as you are manufacturing the same generation chip, and doing so at HUGE volumes, the cost per chip should go down too.

Think this is something we will start to see soon?

Gholam - Thursday, November 15, 2007

In case you haven't noticed, graphics cards have been packing cores by the dozens from the beginning - and lately, by the hundreds.