Intel "Harpertown" Xeon vs. AMD "Barcelona" Opteron

by Jason Clark & Ross Whitehead on September 18, 2007 5:00 PM EST. Posted in IT Computing

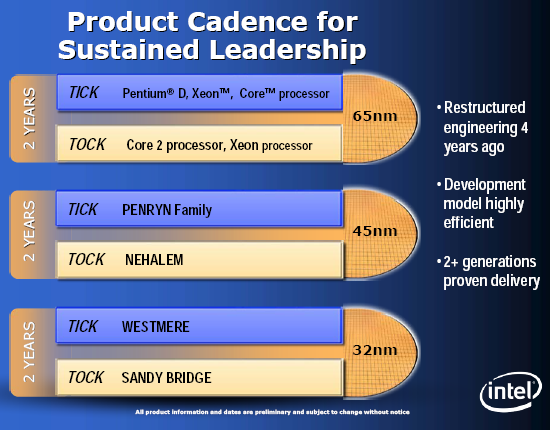

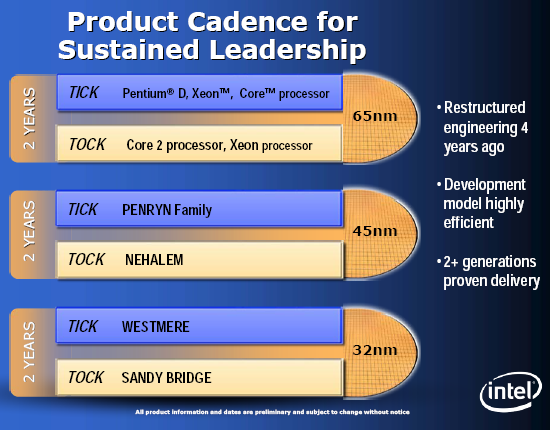

The clock has "ticked" and Intel has released a refresh to the quad-core Xeon line-up, code-named Harpertown. AMD has also finally released their quad-core Opteron, code-named Barcelona. Intel is on what they like to call a tick-tock release cycle of processors. Every "tick" is a refresh of the current architecture, and a "tock" represents a new architecture. AMD doesn't seem to be on any pattern of release cycles, and the Barcelona launch is a bit late and not as well organized as some of their previous product launches.

Harpertown will launch with clock speeds all the way up to 3.16GHz, and will also ship two low voltage parts (2.3GHz and 2.6GHz). The rumor mill speculates that Intel may be able to reach 3.4GHz with the new 45nm process shrink. Barcelona on the other hand is launching at 2.0GHz with speeds down to 1.7GHz. There will be three low voltage Barcelona parts at launch: 1.7GHz, 1.8GHz and 1.9GHz. Frankly, it's more than a bit disappointing that AMD wasn't able to launch at higher clock-speeds; however, they are planning to have higher-clocked parts towards year-end that will only require a few more watts to run.

For quite some time now Intel has been living the high life in the quad-core arena, even though both AMD and the media criticized them for gluing two dual-core processors together to create their quad-core product line. AMD has lost market share to Intel over the past couple of years, mostly due to the success Intel has had with their current Core architecture. One does wonder if AMD might have sat too long on the Opteron before making headway into a new design or moving along a bit quicker to quad-core; yes, there was work happening, including an aborted architecture, but when you're fighting the reigning heavyweight such mistakes can be costly. Obviously, AMD has had a rough year with respect to their finances, but hopefully they are on the mend and Barcelona is the beginning of an upswing.

We've already looked at Barcelona in several previous articles, but Harpertown is the new kid on the block this week. That being the case, we'll start with a closer look at Intel's latest addition to their lineup.

77 Comments

Justin Case - Friday, September 21, 2007 - link

The point is not whether Barcelona is bad or not (I think it's a huge disappointment in terms of performance, mainly due to the low clock speed, and I'm not convinced by the L3), and I expect the Xeons to beat the crap out of it in terms of peak performance. In fact, since I work mainly in effects and image processing, the Xeon is by far the best choice for me (for the workstations, at least; render nodes are a different matter, and cost becomes more relevant).

The point is that the Xeons do have a very big weakness for power-critical server environments: the consumption of FB-DIMMs. And leaving 75% of the Xeon's memory slots empty is just not something that people will do, when running a server under high loads.

If a system can take up to 16 DIMMs, and if you cannot or do not want to test multiple configurations (ex., 4, 8, 16), which would have been useful in an article about performance-per-watt, then the logical choice is to fill half the memory banks. You (or whoever supplied it - we still don't know who that was, AMD?) did that with the Opteron system. It doesn't cover every possible case, but it covers the "average" (and I daresay most common) configuration.

I can certainly understand Intel asking you to test it with fewer FB-DIMMs. I cannot understand you complying with their request, when your main obligation is (or should be) to your readers.

I wonder if you would also accept not running any 3D rendering benchmarks on Opteron systems if AMD asked you to, because they know they're at a disadvantage there?

And if you don't see the problem with having a "site view" that turns your entire website into a giant ad for one manufacturer, I guess I can't explain it to you. It's not labelled as an ad, it looks just like another Anandtech "section", and there are even links to it at the end of some of your articles (again, not labelled as advertising). At the very least change the banner at the top to "Intel", not "Anandtech". Your wish for "more sponsored site views" is, to say the least, worrying about the state of web IT journalism, and Anandtech in particular. But hey, I guess "sponsored world views" work well for Fox News, etc. Why get an objective picture of things when you can just dive straight into the channel that confirms and reinforces your preconceptions, wrapped in an aura of "journalism"?

Maybe the people who wrote this article are just very naive or very distracted, and somehow overlooked the excessive number of fans in the (cooler) Opteron system and the reduced number of (power-hungry) FB-DIMMs in the Xeon system. That's within the realm of possibility. Or maybe they noticed that but thought it wouldn't affect the results of a performance-per-watt comparison (which is stretching it a bit). But when you couple that with other recent articles and the "ad disguised as information" that is the "resource center", your credibility is at stake. Or, as far as I'm concerned, and for some of your writers, it's not even "at stake" anymore.

PS. Where exactly are your Verizon or T-Mobile or Gigabyte "site view" ads...? Last time I checked, when I click on those (clearly identifiable) ads I'm taken to the manufacturers' websites, I don't get a "morphed" version of Anandtech.

PPS. Hans Maulwurf's question below is also an interesting one.

JarredWalton - Friday, September 21, 2007 - link

Re: Hans' question, AS3AP is a complete benchmark suite, right? Just like SYSmark2007 in a sense (although that's sort of stretching it). There are different factors that go into the composite score. Without knowing more about the benchmark, I can't tell you how the overall score relates to the Scalable scores. I would think there's a possibility that the overall score is heavily I/O limited (RAID arrays and such), in which case the scores of Intel and AMD in those tests might be a tie. In that case, cutting out those results to show the actual CPU/RAM scaling is a reasonable decision. Intel is 27% faster in the overall result, and up to 55% faster (something like that) in the scalable tests. If as an example there are three scalable tests and three tests that are I/O bound, the overall score should be about half the scalable score. Again, however, I don't know enough about the benchmark to answer - feel free to email Jason/Ross.
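To make that example concrete, here is a minimal sketch in Python. It assumes the overall AS3AP score behaves like a plain arithmetic mean of per-test Intel/AMD score ratios, which nothing in the article confirms; the three-and-three split and the `composite_lead` helper are hypothetical.

```python
def composite_lead(per_test_ratios):
    """Arithmetic mean of per-test score ratios (Intel score / AMD score).

    Assumption: the composite weights every test equally.
    """
    return sum(per_test_ratios) / len(per_test_ratios)


# Hypothetical split: three CPU/RAM-bound tests with a 55% Intel lead,
# and three I/O-bound tests where both platforms tie.
ratios = [1.55, 1.55, 1.55, 1.00, 1.00, 1.00]

overall = composite_lead(ratios)
print(f"overall lead: {(overall - 1) * 100:.1f}%")
```

Under those assumptions, a 55% lead in half the tests and a tie in the other half works out to an overall lead of roughly 27.5%, in line with the figures quoted above. A different weighting scheme would give a different result.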

--------

Anyone that looks at the Intel Resource Center and doesn't recognize that it's basically a different form of advertising is beyond my help. They - and you - have probably already decided that we're bought out, and I doubt there's anything I can say that will change their mind. The fact is that we ripped on Intel for three years with NetBurst and praised AMD. Now the tables have turned, AMD is having problems and we're pointing them out, and Intel has some great CPUs and we're praising them for their performance; suddenly we're "bought out". (Funny thing: I seem to recall a lot of AMD adverts back when they were on top and not as many from Intel; now the situation has reversed.)

The articles in the Intel section are not written for the intent of marketing, though they can be used that way. They are honest opinions on the state of hardware at the time of the articles - anyone actually saying that Intel isn't the faster CPU on the desktop these days would have to be smoking something pretty potent. Are some of the opinions wrong? Probably to varying degrees, and all opinions are biased in some fashion - I'm biased towards price/performance and overclocking, for example, so I think stuff like Core 2 Extreme and Athlon FX is just silly.

When AMD was on top, we did far fewer Intel mobo reviews - even though the market was still 80%+ Intel boards (though not the enthusiast market). Now Intel is on top, and personally I couldn't care less about what the best AM2 board is, because I don't intend to buy one until AMD can become competitive again. (But then, I overclock most of my systems.)

It sucks for AMD that they are the smaller company *and* they have a lower performing part. It sucks for consumers that if there's no competition, R&D tends to stagnate. I'd love to see a competitive AMD again, and the Barcelona 2.5GHz chips might even make it to that stage. I'm more interested in Phenom X4 vs. Core 2 Quad, though, both running with DDR2 RAM and doing the type of work I'm likely to do. More likely than not, however, my next upgrade will be to Core 2 Quad Q6600 and X38, with some overclocking thrown in to get me up to around 3.3-3.5 GHz.

----------

For what it's worth, I'm writing this from my Opteron 165 setup, which is still my primary computer. The X1900 XT is getting a bit sluggish, so I have to go elsewhere for gaming at times (Core 2 E4300 @ 3.3GHz and an 8800 GTX), but for all non-gaming, non-encoding tasks this system is still excellent. It's also a lot cooler/quieter than some of the high-end setups I have access to, though with winter coming on I might want to bring a quad-core over to my desk for use as a space heater. Oh yeah - and I'm still running XP, which is one more reason to save gaming for another setup... I can try out DX10 without actually having to fubar my work computer. :)

Justin Case - Friday, September 21, 2007 - link

Again, you're trying to turn this into Intel vs. AMD and talk about all sorts of unrelated things while avoiding the issue. The issue isn't who makes the fastest CPUs. The issue is Anandtech's testing methodology and system setup options. If Anandtech had chosen to put 8 FB-DIMMs on the Xeon system and just 4 DIMMs on the Opteron system, and stick 16 fans into the Xeon, we would complain in exactly the same way. The issue isn't who wins. The issue is whether we can trust your methods, results and conclusions.

From your posts here, it seems that Intel supplied you the Xeon system, and decided to install just 4 FB-DIMMs, is that correct?

Who supplied the AMD system, and who decided what its configuration should be? AMD? Intel? Neither?

PS. - I recognize the "Intel resource center" exactly for what it is. I'm sure Microsoft would "sponsor" an Anandtech "site view" in a blink if you wrote a couple of Vista vs. OSX articles based on creatively prepared systems and benchmarks. But of course, then you have to keep being nice and creative, or they might decide not to sponsor you any more (bummer). After all, a banner ad is a banner ad; the manufacturer controls what's in it. But if you want them to pay you to use your articles for marketing (as you said above), obviously you know what the conclusions of those articles must be. I wasn't born yesterday and I'm sure you weren't, either.

JarredWalton - Saturday, September 22, 2007 - link

I'm bringing up all the other issues because you brought them up. This isn't an answer to a single post.

If we were to do what you suggest with the articles, we would lose readership in a large way. I can control what I write, but not so much for other articles. No article is perfect, and since I didn't write this article and I didn't perform the testing, I can't say for sure how flawed the comparisons are. Yes, we used faster Intel CPUs than AMD CPUs, but that's because Intel sent Harpertown and AMD sent Barcelona and with both being new, we basically used what we got. There is an update with 2.5GHz Barcelona coming.

For the fans, you're an IT guy so obviously you know what sort of fans go into a server. The answer is: the fans that the server supplier uses. And no, I don't know who specifically makes these servers... I think it was mentioned in a previous article, perhaps? I also don't know what amperage is on the Intel fans or the AMD fans. Eight lower RPM fans can actually use less power than four higher RPM fans... or they can use more. Ask Jason/Ross for details if you want, but the fact is that's how these servers are configured. I worked in a datacenter for a while, and let me tell you, the thought of removing/disabling fans in any system never crossed my mind. So just like we're stuck with different motherboards, we're stuck with different fan configs based on what the server manufacturer chooses. Considering that the Intel setup *does* use more power in many situations (particularly with more FB-DIMMs), I don't believe that the Intel fans are low RPM models. The real problem may be the internal layout and design decisions of the AMD server - the Intel system seems to have been better engineered in regards to ducting and heat sinks.

Who sent the systems? I don't know. It seems the Intel setup changed from previous articles while the AMD remained the same. Is that because Intel said, "we don't like the configuration you used - here's a better alternative"? I don't know that either. I'm guessing Intel worked with a third party to configure a server that they feel shows them in the best light. AMD probably did the same with the original setup, and AMD is welcome to change chassis/server as well. If they don't it's either because they don't care enough or because it wouldn't make enough of a difference. I'm inclined to think it's the latter: that these tests are still only a look at a small subset of performance, and what they show is enough useful information for people in the know to make decisions.

What *do* these tests show? To me, they show that at lower loads Intel is now a lot closer to AMD thanks to Harpertown, and that AMD per-socket performance has increased thanks to Barcelona. However, at higher loads Intel offers clearly superior performance - even if you stick with Clovertown. Performance/watt is influenced by a lot of things, so I personally take those results with a grain of salt. If a business is really concerned with performance per watt and power density, they'd likely be looking at blade servers instead. The results in this article may or may not apply to blade configurations, so I'm not willing to make that jump.

And as previously discussed, the amount of RAM a company intends to install is a consideration. If a company is going to load all DIMM slots, the Intel servers look like their power requirements will jump close to 50W relative to Barcelona... which means that Harpertown would be more like Clovertown on the graphs. I'd imagine 2.5GHz Barcelona will also require more power, but until I see results I don't know for sure. Companies looking at loading all RAM sockets are probably very concerned with overall performance as well, in which case Intel seems to have the lead... except that in a virtualized server environment, the results here may not show up.

So many factors need to be considered, that I'd be very concerned with anyone looking at this one article and then trying to come to a solid conclusion for all their IT needs. This is just one look at a couple configurations. Then again, a lot of IT departments just go with whatever they've used in the past (Dell, IBM, HP...) and take the advice of the server provider.

Justin Case - Saturday, September 22, 2007 - link

Again, you're replying to "complaints" that no one made.

No one is "complaining" about the fact that the Xeon system is faster than the Opteron. I (and anyone else with a clue) would be extremely surprised if it wasn't. If we were going to "complain" about that to anyone, it would be to AMD.

You say that "companies looking at loading all RAM sockets are probably very concerned with overall performance as well, in which case Intel seems to have the lead". You're 100% right about the "seems". That is indeed the idea one gets from this article (where the Xeons seem to be the best choice both in terms of peak performance and in power efficiency). However, as soon as you load more than 1/4 of the memory banks on the Xeon, the tables are turned in power consumption. And when you load all of them, the difference is huge (10 watts per FB-DIMM adds up to 120 watts above your numbers). So, anyone who needs a lot of RAM but also needs to keep power consumption within certain limits (which is the norm rather than the exception, in dense server environments) cannot really go with the Xeons. And companies that need all the CPU power they can get (ex., 3D render farms) will naturally go with the Xeons and either reduce the amount of RAM or find some way of dealing with the extra power consumption and heat.

In other words, the Xeons only "seem" like the best choice for people planning to fill all RAM banks because your performance per watt calculations were made with 75% of those banks empty. That is what we (or I, at least) are complaining about.
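The FB-DIMM arithmetic in this comment can be sketched as follows. The 10 W per FB-DIMM figure and the 4-of-16 tested configuration come from the comment itself; the function name and the idea that power scales linearly with DIMM count are simplifying assumptions.

```python
def extra_fbdimm_watts(populated, tested=4, slots=16, watts_per_dimm=10.0):
    """Estimated extra power versus the tested configuration.

    Assumption: each additional FB-DIMM adds a fixed number of watts.
    """
    if not tested <= populated <= slots:
        raise ValueError("populated must be between tested and slots")
    return (populated - tested) * watts_per_dimm


# Tested setup (4), half-populated (8), and fully populated (16) boards.
for populated in (4, 8, 16):
    watts = extra_fbdimm_watts(populated)
    print(f"{populated} FB-DIMMs: {watts:.0f} W over the tested setup")
```

With these inputs, filling all 16 slots adds 12 FB-DIMMs at 10 W each, which is the 120 W figure quoted above; filling half the slots adds 40 W.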

Testing a 2 GHz Barcelona is fine. Hell, even testing a 1.6 GHz would be fine, if that was all you had. As long as you're honest about it. But tweaking one system's configuration (using just 25% of the memory slots), to hide its main weakness for server use (which is precisely what this article is supposed to be about) shows either incompetence or bias.

I thought that by posting here I already was "asking Jason / Ross about it". For some reason they don't seem to want to answer (publicly, at least).

Don't you think that the source of the servers (and the people or companies responsible for deciding their configuration, and how much they knew about the benchmark that was going to be used) should be mentioned in the article?

You talk about things that "maybe" and "probably" AMD did, but apparently you don't even know who supplied the AMD system (it seems to be in a desktop tower case), or how much memory the Intel system actually came with (wouldn't it be interesting if Intel had in fact supplied it with 16 FB-DIMMs?). I can also make conjectures about what may have happened and who may have configured the systems. But if you (as a member of Anandtech's staff) are also limited to guessing, maybe you're not the right person to reply.

Hans Maulwurf - Friday, September 21, 2007 - link

Sorry for posting here, but I think you didn't notice my post at the bottom of this page. No problem, I know this article is rather old now ;) An answer here would be nice. Thanks.

"Barcelona is about as fast as Harpertown in AS3AP. OK.

In your article you write:

"The Scalable Hardware benchmark measures relational database systems. This benchmark is a subset of the AS3AP benchmark and tests the following: ..."

Now you choose a subset of this test in which Harpertown is much faster. Obviously AS3AP consists of several subtests and you could as well choose one where Barcelona is much faster. But what's the use of this? You tested all subtests together with your AS3AP test.

It's the same as testing a game and both CPUs having the same score. Then you choose a subtest (e.g. AI only) where Harpertown is faster and conclude it's faster overall.

So what did I miss here? From what I read Barcelona is as fast in AS3AP as Harpi (and should be faster in some subtests and slower in others) while you conclude:

"Intel has made some successful changes to the quad-core Xeon that have helped it achieve as much as a 56% lead in performance over the 2.0GHz Barcelona part."

I don't understand this."

Proteusza - Friday, September 21, 2007 - link

I think these tests are full of holes, and it's a pity. I was genuinely curious to see how both new chips performed.

Instead, we get this sponsored advertising.

In the full AS3AP benchmark, the AMD punches above its weight, while in the subset, it doesn't.

Now, if the results in the scalable CPU benchmarks were a subset of the AS3AP benchmarks (which we are told they are) and a 3 GHz Harpertown was able to lead a 2 GHz Barcelona by 27%, then we can say that the average difference between Harpertown and Barcelona is 27%.

Now, if the chosen subset in question was 59%, that would mean the rest dragged the average down quite a bit. So what are the rest of the numbers?

There is no point in choosing one particular subset if you test the whole thing.
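The question about "the rest of the numbers" can be turned around into a small calculation. The sketch below assumes the overall result is a weighted arithmetic mean of per-subtest Intel/AMD ratios and that the scalable subset carries a known weight; neither is stated in the article, and `implied_rest_ratio` is a hypothetical helper.

```python
def implied_rest_ratio(overall, subset, subset_weight):
    """Solve overall = w * subset + (1 - w) * rest for rest.

    Assumption: the composite is a weighted arithmetic mean of ratios.
    """
    if not 0 < subset_weight < 1:
        raise ValueError("subset_weight must be strictly between 0 and 1")
    return (overall - subset_weight * subset) / (1 - subset_weight)


# Figures quoted in the comment: 27% overall lead, 59% lead in the subset.
# The subset weights tried here are guesses, not known values.
for weight in (0.25, 0.5):
    rest = implied_rest_ratio(1.27, 1.59, weight)
    print(f"subset weight {weight}: remaining tests at {rest:.2f}x")
```

If the subset counted for half of the suite, the remaining tests would have to average slightly below parity (about 0.95x) for the overall lead to come out at 27%. With a smaller weight the remaining tests would still favor Intel, so the answer depends entirely on how the composite is built.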

Justin Case - Thursday, September 20, 2007 - link

Taking it to the extreme, obviously running 16 2GB FB-DIMMs uses a lot more power than 16 2GB DDR2-667 DIMMs. I'm not sure how many businesses actually use that approach, though

Well, the general idea when you buy a motherboard with 16 RAM slots is that you're going to fill it with 16 DIMMs, not leave 75% of the slots empty. Especially in the case of high-load servers, maxing out the RAM is pretty much the norm. But if you decide that's "too much", at least fill half the memory slots with RAM. That means 8 FB-DIMMs and 8 DIMMs, respectively (both boards have 16 slots).

As mentioned by others above, it's hard to believe that people working for one of the biggest hardware review sites in the world would overlook this kind of thing involuntarily. I'd risk saying that Anand or Johan never would.

The purpose of a product review is to make an objective comparison between products, and not to avoid or minimize anything that would expose the weaknesses of one of them.

Reducing the number of FB-DIMMs in the Intel system when testing for power consumption is comparable to limiting the total load on the AMD server when testing for peak performance.

Although the review gives the idea that both systems were configured and assembled by Anandtech, your post above suggests that the Xeon system was in fact configured by Intel ("The Intel setup came with 2GB DIMMs... obviously Intel knows that you pay a power penalty for every FB-DIM"). Yes, Intel knows that... so they're allowed to leave 75% of the RAM slots in your test system empty, to minimize that penalty? And who, exactly, configured the Opteron system?

you seem to want us to intentionally handicap Intel just for your own benefit.

Handicap? By actually using (at least) 50% of the available RAM slots? And... "our own benefit"? Hell, yeah. It's to the consumer's benefit to have objective reviews that point out both the strengths and weaknesses of all products. Or is that such an alien concept? Or maybe you think your readers take advertising money from AMD...? Last time I checked, there was no paid "AMD resource center" in my back yard.

Proteusza - Thursday, September 20, 2007 - link

I agree wholeheartedly, and sadly I don't think we will get a meaningful response from AT.

It appears everyone has their price.

Proteusza - Thursday, September 20, 2007 - link

You don't seem to get it.

8 sticks ram (any size) = lots of power

4 sticks ram (any size) = less power.

7 fans = lots of power

3 fans = less power

You were testing the CPU and the platform in general, not the case or RAM. So using differing amounts of RAM and fans means your power consumption results are meaningless.

Okay, read what you said again slowly. Why didn't you have 4x2GB in the AMD setup? You say yourself any business in need of such a platform won't bother with 8x1GB.

The only thing that I would benefit from is an unbiased test. If you say that switching the Intel to 1 GB sticks would unfairly penalize it, doesn't the same hold true of AMD? How can what is unfair to Intel not be unfair to AMD? I'll tell you, a little thing called marketing.

Read the first post about the RAM: it's not the total amount, it's the configuration. Give each platform 4 sticks of 2 GB each, then we will see.

I don't think it's that difficult to understand: you guys either made an error which makes your results meaningless, or were paid, which still makes them meaningless.