A New SSE Instruction Set: AMD Announces SSE5

by Ryan Smith on August 30, 2007 4:00 PM EST - Posted in CPUs

A couple of weeks ago we had our first look at AMD's Lightweight Profiling Proposal, with the promise of more to come as part of AMD's latest initiative to improve computer performance via additions to the x86 instruction set. Today AMD has lifted the veil on the next part, and as we have been expecting, this part is a more traditional extension to the x86 instruction set with the addition of new high-performance instructions. This marks the first time AMD has made such a move since the last revision of the 3DNow instruction set several years ago.

To that end, AMD has opted to cease development of 3DNow and instead focus on further augmenting Intel's SSE instruction set, which won the instruction set wars some time ago with AMD's adoption of it. The result, confusingly enough, is what AMD is calling SSE5, breaking the tradition of every iteration of SSE being an Intel product. This is part of a larger AMD effort to inject themselves into the process of developing further iterations of SSE; along with the SSE5 specification, they are already telling us they're hard at work getting the discussion going in the computing industry about SSE6 and beyond. Intel's name is noticeably absent from any of today's materials, so we will no doubt be hearing their opinion soon on AMD's encroachment into designing SSE specifications.

As for SSE5, today's announcement is in many ways a fairly routine one for an industry that produces a new iteration of SSE approximately every two years. Citing the slow growth in clock speeds and instructions-per-second rates over the past few years, AMD has been looking at other ways to improve the performance of their processors. One such way has been adding more processor cores to extract additional performance via thread-level parallelism, which has given birth to the modern core wars. Another has been adding more execution units to a single processor core to extract more instruction-level parallelism within a thread, which we have seen with Intel's Conroe design, and which AMD will be doing themselves with the upcoming Barcelona core and its derivatives.

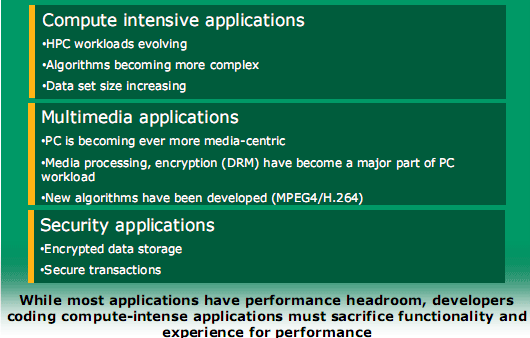

Finally, however, there is improving processor performance at the instruction level, either by making instructions execute and retire faster or by designing new instructions; the latter is the traditional scope of SSE and is where AMD is focusing SSE5 today. As with past iterations of SSE, AMD is calling for new instructions that will improve the performance of computing-intensive tasks. For SSE5, AMD is focusing on improving performance in three specific areas: security applications, traditional computing-intensive applications, and the now obligatory multimedia applications category. As far as the instructions go, few are actually limited to a single field, but these are the areas that AMD believes will benefit the most from the instructions they've selected.

With that said, it's important to note that while AMD is announcing SSE5 on the eve of the Barcelona launch, SSE5 is not going to be shipping with Barcelona or any of its derivatives or immediate successors. AMD will start including SSE5 support in the Bulldozer core, which with an expected launch date of 2009 is still two years off. Today's announcement and publication of their specifications is being done a bit more ahead of time than what we've seen in the past with other variations of SSE so that developers have ample time to learn about it and implement it in time for Bulldozer's launch. Ideally AMD hopes to bypass some of the chicken/egg problems that have occurred with past iterations of SSE.

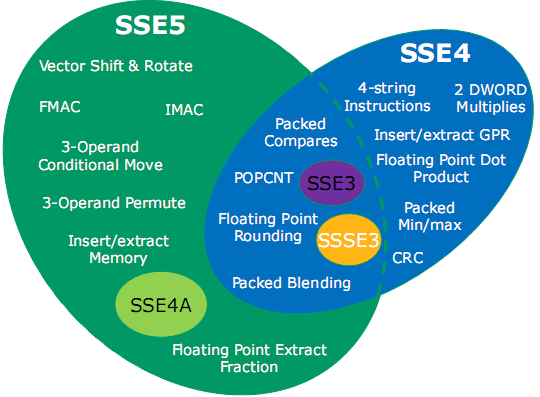

Unfortunately, with AMD's own SSE5 support we feel they have made a major snafu here in not supporting other versions of SSE. Previously, SSE has been entirely backwards compatible; if a processor supported SSE3 for example, you could count on it supporting SSE2, SSE1, and MMX. Intel's own new SSE extension, SSE4, is launching with Penryn later this year, but AMD will only be supporting a fraction of the SSE4 instruction set in Bulldozer, as seen in the graph below.

We're extremely disappointed in AMD going this route. Some of SSE4's instructions are quite potent, while others, we have been told, are not; AMD believes that the subset of SSE4 they are including is the most useful part. But we believe it's still worth including the remaining instructions, and by using the name SSE5 and adding it to Bulldozer, AMD has made a very strong implication about backwards compatibility that isn't all there. While this is a valid addition to the SSE instruction set, AMD should have named it something other than SSE5 to avoid confusion.

Finally, we've been told that full SSE4 support is coming for AMD's chips, but without a date. We know that it won't be in Bulldozer, which means SSE4 support won't be happening until a Bulldozer refresh, which will be no earlier than 2010.
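To make the compatibility concern concrete, here is a minimal Python sketch of why software can no longer infer older SSE levels from the newest one. Everything in it is an assumption for illustration: the feature names are placeholders rather than real CPUID flag names, the `reported` set is a hypothetical processor, and the helpers `implied_by_level` and `can_run` are our own. It does not reflect which SSE4 instructions Bulldozer will actually include.

```python
# Hypothetical feature names, ordered oldest to newest (not real CPUID flags).
LEVEL_ORDER = ["mmx", "sse", "sse2", "sse3", "sse4", "sse5"]


def implied_by_level(highest_level):
    """Old assumption: supporting one level implies every earlier level."""
    idx = LEVEL_ORDER.index(highest_level)
    return set(LEVEL_ORDER[: idx + 1])


def can_run(required, available):
    """Explicit check: every required feature must be in the available set."""
    return set(required) <= set(available)


# Hypothetical processor that reports SSE5 but not the full SSE4 set.
reported = {"mmx", "sse", "sse2", "sse3", "sse5"}

# Hypothetical program that needs SSE2 and the full SSE4 set.
required = {"sse2", "sse4"}

# Inferring support from the highest level wrongly says the program can run.
print(can_run(required, implied_by_level("sse5")))  # True

# Checking each required feature against what is actually reported does not.
print(can_run(required, reported))  # False
```

The design point is simply that each required feature is tested individually rather than derived from a version number, which is what software would need to do if SSE levels stop being strictly cumulative.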

17 Comments

skiboysteve - Friday, August 31, 2007 - link

this is stupid. they are adding SSE5 before SSE4. wow.

tygrus - Friday, August 31, 2007 - link

SSE numbers/description becoming like model numbers. Confusing and virtually meaningless. Need CPU core with microcode to convert non-native SSE? instructions into sequence of native instructions (micro-ops/macro-ops). If that doesn't happen then the compilers may need to re-write the code sequences for target(s) at compile time or execution.

yyrkoon - Thursday, August 30, 2007 - link

Just from what I have seen in the past, whenever AMD does something like this, Intel tries to separate themselves by going a different direction, this is why I think Intel will rename their future instruction sets to something else. If AMD and Intel were to actually work together on this, then maybe Intel would opt in on some of the better portions of the instruction set that enhanced their CPUs, but somehow I do not think this is the case.

I watch the Intel/AMD 'rivalry' from the outside looking in, and I see the Coke/Pepsi 'war' all over again. Little kids going so far as to pull an engine out of a new delivery truck, paint it another color other than blue, because that *is* their rival's color . . . At what cost for your shareholders? Nonsense!

jeromekwok - Thursday, August 30, 2007 - link

I can't think of a good reason we should care about this SSE5A, or should we call it 3dnow technically. AMD may gain back a few benchmark scores, but it is hard to get developers to move from Intel compiler suites. Do you guys feel the same? When the MADD goes thru the OOO, it should be decoded as MUL and ADD micro-ops. There should not be a big difference if we use two instructions MUL and ADD, which should get similar micro-ops. Maybe there is something AMD is weak at.

saratoga - Thursday, August 30, 2007 - link

Since muls have a very high latency compared to adds, and a dependency would exist between the ops, this would not be a good way to do things.

The result is the same (obviously), but it's slower and complicates scheduling for no logical reason. Compared to a multiplier, adders are very cheap.

redpriest_ - Thursday, August 30, 2007 - link

Look at Itanium's fused multiply add.

jiulemoigt - Thursday, August 30, 2007 - link

Well I wrote several versions but what it comes down to is I'm scratching my head at the example, as it looks like it was written by marketing without asking an engineer how to code it. The first can be written in half that many lines of code and more efficiently; it looks like vb code that was automatically translated by a very bad compiler. I've written code for both chips and generally hand coding will give code about four times faster, but it is not practical considering time constraints and the number of people that can write assembler code.

Yet using instructions is supposed to speed up the rate code goes because the computer performs a series of instructions that have predefined procedures, i.e. store data in A, store data in B, ADD A to B, repeat C times, return B, whereas this looks like store data in A, store data in B, Add A,B store in C, return C, repeat with new numbers multiple times, go back and get data returned from C and store in A, compare to data from pass two stored in B, store result in C, return C, get data just returned, compare to data from pass three, store in C, return C, get data just returned etc... with the second one using the location but still using a third location! Instead of ADD a,b with the result in B, return B to location 1, return B to loc2, then store loc 1 in A, loc 2 in B, ADD A,B, return B.

The interesting thing about the number of instructions in the example is that the time it takes for one instruction to complete is far different, as store statements are not equal to compare statements, which are not equal to ADD/MUL statements; the computer can do an ADD statement faster than it can find data in local cache, let alone system memory. One of the reasons graphics cards are so much faster at MADD has to do with the data being right there, which is why graphics DDR is so much more expensive than system memory. And now AMD wants to join the slowest instruction with the fast ones?

This is something people should be really wondering about, since it kills prefetch as it is going to make the system wait for data with every pass, including the ones that should be really fast. That suggests they are going to try and force the scheduler to get longer blocks of data like Intel did with its P4, which was a very bad design since branching logic is only so good, and every miss will cause the CPU to sit idle, covering up misses with longer cycles.

Anyway, for the non-coders, SSE takes low level code and packages chunks of code that can be passed to the CPU as one chunk it knows what to do with. Usually this makes the chunks get processed faster, as the scheduler on the CPU takes the chunks as one piece and it all gets pushed through with no waiting. Only in this case it is forcing the CPU to be an in-order CPU for every instruction so coded, which is bad because normally it can crank through fast instructions and idle through slow ones; this will force it to idle through many slow ones, as opposed to simply burning through the fast short ones. The less idle time, the faster the job gets done, but with everything waiting on store statements there will be an increase in idle time, since it is easier to stack a bunch of small legos in a box than four bowling balls. Just think of store statements as getting the legos or the bowling balls to put in the box: you may have to make more trips to get enough legos to fill the box, but the trips are faster, and if you get too many legos the amount that does not fit will be small, whereas that last bowling ball is a significant amount compared to what is in the box. Rough analogy, but I'm supposed to be relaxing, not thinking about work.

Oh and MMX when it first came out was a PR stunt, and it was only about two years after being added that someone found a use for it, as a kludge to simplify coding for people who were not willing to do it right. 3DNow was just as bad. SSE was the first set that was actually useful, when added to compilers to speed up certain repetitive tasks like encoding and rendering. Though this new set defeats the purpose of having all those new registers to use!

PeteRoy - Thursday, August 30, 2007 - link

Return of the Jedi anyone?

her34 - Thursday, August 30, 2007 - link

next for amd: the geforce 10800xt

peldor - Thursday, August 30, 2007 - link

This strikes me as a way to distract from the lack of a complete SSE4 implementation.