ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST

Posted in: GPUs

AMD CFAA Performance and Image Quality

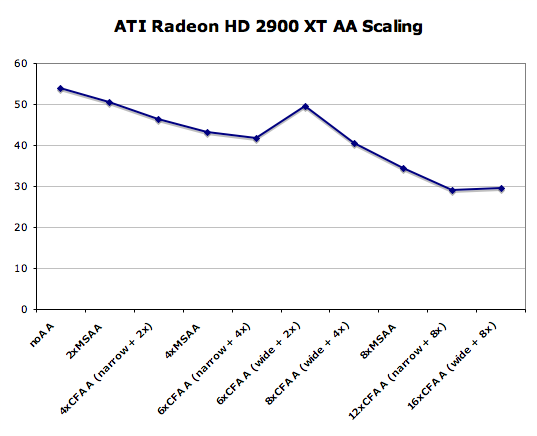

While we've already talked about CFAA, let's take a look at how it compares to other AA methods. We've already seen NVIDIA's CSAA in action, which is able to better determine how subsample colors should be weighted within a pixel. How does it stack up to AMD's tent filters? Let's take a look:

Zip file of uncropped JPG images (1.7MB)

Clearly CFAA does a good job of reducing the impact of high contrast edges. As we mentioned before though, this doesn't come without drawbacks. Antialiasing shouldn't just filter out high frequency image data (which comes in the form of high contrast edges). The problem is that some of these edges are supposed to be there.

Applying a blur to everything isn't the best general-purpose answer. Ideally we want to eliminate the high frequency data we don't want (aliased edges) while preserving the high frequency data we do want (fine-grained detail in either geometry or interior textures). A balance needs to be kept here, and (as we've seen many times in the past) the right answer for the end user is often subjective.
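To make the tradeoff concrete, here is a minimal one-dimensional sketch of the two resolve styles. It is not AMD's actual CFAA kernel: the evenly spaced subsample positions, the `radius` parameter, and the function names `box_resolve` and `tent_resolve` are all assumptions made for illustration. The box resolve averages only a pixel's own subsamples, while the tent resolve also pulls in subsamples from neighboring pixels, with a weight that falls off linearly with distance from the pixel center.

```python
def box_resolve(samples, n):
    """Average each pixel's own n subsamples (plain box-filtered MSAA)."""
    return [sum(samples[i:i + n]) / n for i in range(0, len(samples), n)]


def tent_resolve(samples, n, radius=1.0):
    """Weight subsamples with a tent (triangle) centered on each pixel.

    `radius` is a hypothetical parameter: the distance, in pixels, at which
    the weight reaches zero. Subsamples are assumed to be evenly spaced,
    which real hardware sample patterns are not.
    """
    out = []
    for p in range(len(samples) // n):
        center = p + 0.5
        total = 0.0
        weight_sum = 0.0
        for s, value in enumerate(samples):
            pos = (s + 0.5) / n  # subsample position in pixel units
            w = max(0.0, 1.0 - abs(pos - center) / radius)
            total += w * value
            weight_sum += w
        out.append(total / weight_sum)  # normalize so flat regions stay flat
    return out


# Five pixels, four subsamples each: a one-pixel-wide bright detail.
detail = [0.0] * 8 + [1.0] * 4 + [0.0] * 8
print(box_resolve(detail, 4))   # [0.0, 0.0, 1.0, 0.0, 0.0]
print(tent_resolve(detail, 4))  # [0.0, 0.125, 0.75, 0.125, 0.0]
```

In this toy case the box resolve leaves the one-pixel detail at full intensity, while the tent resolve drops it to 0.75 and bleeds 0.125 into each neighbor. That is the same behavior that softens aliased edges, which is why a wider filter trades detail for smoothness.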

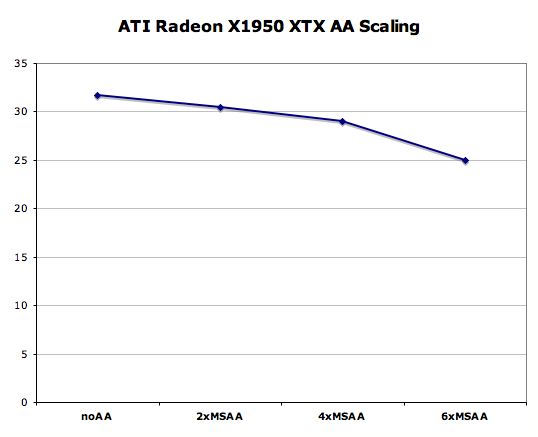

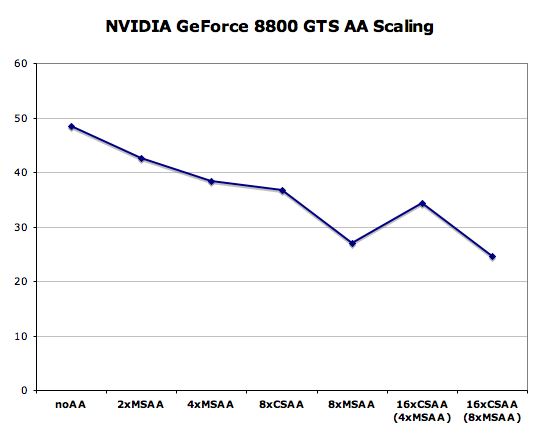

This is certainly an interesting solution, but we will stick with simple 4x box-filtered MSAA for our current and future tests, as it still offers the best balance between image quality and performance, especially at very small pixel sizes. But before we leave the subject completely, let's take a look at how CFAA performs on R600. We'll compare it to all the AA modes on the X1950 XTX and 8800 GTS 640MB that are not transparent-texture aware.

86 Comments


Roy2001 - Tuesday, May 15, 2007 - link

The reason is, you have to pay extra $ for a power supply. No, most probably your old PSU won't have enough milk for this baby. I will stick with nVidia in future. My 2 cents.

Chaser - Tuesday, May 15, 2007 - link

Such a revealing tech article. Thanks for other sources Tom.

archcommus - Tuesday, May 15, 2007 - link

$300 is the exact price point I shoot for when buying a video card, so that pretty much eliminates AMD right off the bat for me right now. I want to spend more than $200 but $400 is too much. I'm sure they'll fill this void eventually, and how that card will stack up against an 8800 GTS 320 MB is what I'm interested in.

H4n53n - Tuesday, May 15, 2007 - link

Interestingly enough, on some other websites it beats the 8800 GTX in most games, especially the newer ones, and comparing the price I would say it's a good deal? I think it's just driver problems. ATI has been known for not having very good drivers compared to Nvidia, but when they fix it then it'll win.

dragonsqrrl - Thursday, August 25, 2011 - link

lol...fail. In retrospect it's really easy to pick out the EPIC ATI fanboys now.

Affectionate-Bed-980 - Tuesday, May 15, 2007 - link

I skimmed this article because I have a final. ATI can't hold a candle to NV at the moment it seems. Now while the 2900XT might have good value, I am correct in saying that ATI has lost the performance crown by a buttload (not even like X1800 vs 7800) but like they're totally slaughtered right?

Now I won't go and comment about how the 2900 stacks up against competition in the same price range, but it seems that GTSes can be acquired for cheap.

Did ATI flop big here?

vailr - Monday, May 14, 2007 - link

I'd rather use a mid-range older card that "only" uses ~100 Watts (or less) than pay ~$400 for a card that requires 300 Watts to run. Doesn't AMD care about "Global Warming"?

Al Gore would be amazed, alarmed, and astounded!!

Deusfaux - Monday, May 14, 2007 - link

No, they don't, and that's why the 2600 and 2400 don't exist.

ochentay4 - Monday, May 14, 2007 - link

Let me start with this: I always had an Nvidia card. ALWAYS.

Faster is NOT ALWAYS better. For the most part this is true; for me, it was. One year ago I bought an MSI 7600GT. It seemed the best bang for the buck. Since I bought it, I have had problems with TV-out detection, wrong TV-out aspect ratios, broken LCD scaling, lots of game problems, nonexistent support (the NV forum is a joke) and UNIFIED DRIVER ARCHITECTURE. What a terrible lie! The latest official driver is 6 months old!!!

I'm really demanding, but I paid enough to demand a 100% working product. Now ATI's latest offering has: AVIVO, FULL VIDEO ACC, MONTHLY DRIVER UPDATES, ALL BUGS I NOTICED WITH THE NVIDIA CARD FIXED, HDMI AND PRICE. I prefer that to a simple product, especially for the money they cost!

I will never buy an Nvidia card again. I'm definitely looking forward to ATI's offering (after the joke that is/was the 8600GT/GTS).

Enough rant.

Am I wrong?

Roy2001 - Tuesday, May 15, 2007 - link

Yeah, you are wrong. Spend $400 on a 2900XT and then $150 on a PSU.