Apple's Mac Pro: A Discussion of Specifications

by Anand Lal Shimpi on August 9, 2006 3:54 PM EST - Posted in Mac

Understanding FB-DIMMs

Since Apple built the Mac Pro out of Intel workstation components, it unfortunately has to use more expensive Intel workstation memory. In other words, cheap unbuffered DDR2 isn't an option; it's time to welcome ECC-enabled Fully Buffered DIMMs (FBD) to your Mac.

Years ago, Intel saw two problems with most mainstream memory technologies: 1) as we pushed for higher speed memory, the number of memory slots per channel went down, and 2) the rest of the world was going serial (USB, SATA and more recently HyperTransport, PCI Express, etc.), yet we were still using fairly antiquated parallel memory buses.

The number of memory slots per channel isn't really an issue on the desktop; currently, with unbuffered DDR2-800, we're limited to two slots per 64-bit channel, giving us a total of four slots on a motherboard with a dual channel memory controller. With four slots, just about any desktop user's needs can be met with the right DRAM density. It's in the high-end workstation and server space that this limitation becomes an issue, as memory capacity can be far more important, often requiring 8, 16, 32 or more memory sockets on a single motherboard. At the same time, memory bandwidth is also important, as these workstations and servers will most likely be built around multi-socket, multi-core architectures with high memory bandwidth demands, so simply limiting memory frequency in order to support more memory isn't an ideal solution. You could always add more channels; however, parallel interfaces by nature require more signaling pins than faster serial buses, and thus adding four or eight channels of DDR2 to get around the DIMMs per channel limitation isn't exactly easy.

Intel's first solution was to totally revamp PC memory technology. Instead of going down the path of DDR and eventually DDR2, Intel wanted to move the market to a serial memory technology: RDRAM. RDRAM offered significantly narrower buses (16 bits per channel vs. 64 bits per channel for DDR), much higher bandwidth per pin (at the time, a 64-bit wide RDRAM memory controller would offer 6.4GB/s of memory bandwidth, compared to a 64-bit DDR266 interface, which could only offer 2.1GB/s) and, of course, the ease of layout benefits that come with a narrow serial bus.

Unfortunately, RDRAM offered no tangible performance increase, as the demands of processors at the time were nowhere near what the high bandwidth RDRAM solutions could deliver. To make matters worse, RDRAM implementations were plagued by higher latency than their SDRAM and DDR SDRAM counterparts; with no use for the added bandwidth and with higher latency, RDRAM systems were no faster, if not slower, than their SDR/DDR counterparts. The final nail in the RDRAM coffin on the PC was the issue of pricing. Your choices at the time were these: either spend $1000 on a 128MB stick of RDRAM, or spend $100 on a stick of equally performing PC133 SDRAM. The market spoke and RDRAM went the way of the dodo.

Intel quietly shied away from attempting to change the natural evolution of memory technologies on the desktop for a while. Intel eventually transitioned away from RDRAM, even after its price dropped significantly, embracing DDR and more recently DDR2 as the memory standards supported by its chipsets. Over the past couple of years however, Intel got back into the game of shaping the memory market of the future with this idea of Fully Buffered DIMMs.

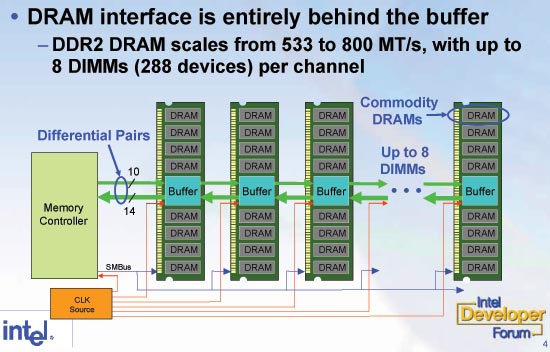

The approach is quite simple in theory: what caused RDRAM to fail was the high cost of using a non-mass-produced memory device, so why not develop a serial memory interface that uses mass-produced commodity DRAMs such as DDR and DDR2? In a nutshell, that's what FB-DIMMs are: regular DDR2 chips on a module with a special chip that communicates over a serial bus with the memory controller.

The memory controller in the system stops having a wide parallel interface to the memory modules; instead, it has a narrow 69-pin interface to a device known as an Advanced Memory Buffer (AMB) on the first FB-DIMM in each channel. The memory controller sends all memory requests to the AMB on the first FB-DIMM on each channel and the AMBs take care of the rest. By fully buffering all requests (data, command and address), the memory controller no longer has a load that significantly increases with each additional DIMM, so the number of memory modules supported per channel goes up significantly. The FB-DIMM spec says that each channel can support up to 8 FB-DIMMs, although current Intel chipsets can only address 4 FB-DIMMs per channel. With a significantly lower pin count, you can cram more channels onto your chipset, which is why the Intel 5000 series of chipsets features four FBD channels.
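To put the slot counts from the last few paragraphs side by side, here is a minimal sketch. The helper name `max_dimm_slots` is our own invention for illustration; the channel and DIMM-per-channel figures are the ones quoted above, and nothing here describes how many slots a particular board actually ships with.

```python
# Hypothetical helper for illustration only; the limits are the figures quoted in the text.
def max_dimm_slots(channels: int, dimms_per_channel: int) -> int:
    """Upper bound on the DIMM slots a memory controller can address."""
    return channels * dimms_per_channel


# Unbuffered DDR2-800 on a dual channel desktop controller: 2 slots per channel.
desktop_ddr2 = max_dimm_slots(channels=2, dimms_per_channel=2)

# Intel 5000 series: four FBD channels, 4 FB-DIMMs addressable per channel.
intel_5000 = max_dimm_slots(channels=4, dimms_per_channel=4)

# Same four channels at the FB-DIMM spec ceiling of 8 modules per channel.
fbd_spec_limit = max_dimm_slots(channels=4, dimms_per_channel=8)

print(desktop_ddr2, intel_5000, fbd_spec_limit)
```

These are ceilings on what the controller can address; a given motherboard or chassis may expose fewer physical slots.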

Bandwidth is a little more difficult to determine with FBD than it is with conventional DDR or DDR2 memory buses. During Steve Jobs' keynote, he put up a slide that listed the Mac Pro as having a 256-bit wide DDR2-667 memory controller with 21.3GB/s of memory bandwidth. Unfortunately, that claim isn't being totally honest with you, as the 256-bit wide interface does not exist between the memory controller and the FB-DIMMs. The memory controller in the Intel 5000X MCH communicates directly with the first AMB it finds on each channel; that interface is actually only 24 bits wide per channel, for a total bus width of 96 bits (24 bits per channel x 4 channels). The bandwidth part of the equation is a bit more complicated, but we'll get to that in a moment.
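For reference, the keynote's 21.3GB/s figure is what you get by treating the memory subsystem as a conventional 256-bit parallel DDR2-667 bus. A rough sketch of that arithmetic follows; the function name is hypothetical, and we assume DDR2-667 runs at a nominal 666.67 million transfers per second and that GB means 10^9 bytes.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, mega_transfers_per_s: float) -> float:
    """Peak bandwidth of a parallel bus: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * mega_transfers_per_s / 1000  # MB/s -> GB/s (decimal)


# The keynote slide: 256 bits of DDR2-667 (assumed nominal rate of 2000/3 MT/s).
keynote_claim = peak_bandwidth_gb_s(256, 2000 / 3)

# What actually sits between the MCH and the first AMBs: 24 bits on each of 4 channels.
fbd_link_width_bits = 24 * 4

print(f"{keynote_claim:.1f} GB/s over a notional 256-bit bus")
print(f"{fbd_link_width_bits} bits of actual MCH-to-AMB interface")
```

The formula only applies to the notional parallel bus. It should not be applied to the 96-bit figure, since the serial links do not run at the DDR2-667 transfer rate.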

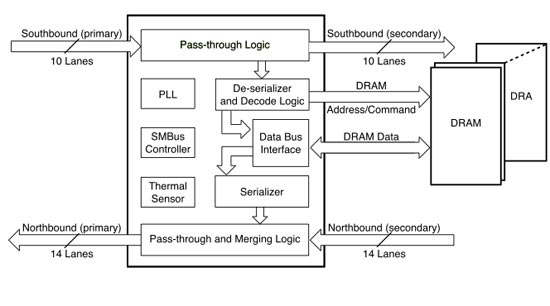

Below we've got the anatomy of an AMB chip:

The AMB has two major roles: to communicate with the chipset's memory controller (or other AMBs) and to communicate with the memory devices on the same module.

When a memory request is made, the first AMB in the chain figures out whether the request is to read or write to its own module or to another module. If it's the former, the AMB parallelizes the request and sends it off to the DDR2 chips on the module. If the request isn't for this specific module, the AMB passes the request on to the next AMB and the process repeats.
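The forwarding behavior described above can be modeled in a few lines. This is a toy sketch, not the FB-DIMM protocol: the `AMB` class, its `handle` method and the `build_channel` helper are all hypothetical names, a plain dictionary stands in for the DDR2 chips, and read data simply returns through the call stack instead of travelling back over the serial link.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AMB:
    """Toy model of one Advanced Memory Buffer in a daisy chain (hypothetical API)."""
    module_id: int
    next_amb: Optional["AMB"] = None
    dram: dict = field(default_factory=dict)  # stands in for the module's DDR2 chips

    def handle(self, target_module: int, op: str, addr: int, data=None):
        if target_module == self.module_id:
            # The request is for this module: hand it to the local DDR2 devices.
            if op == "write":
                self.dram[addr] = data
                return None
            return self.dram.get(addr)
        if self.next_amb is None:
            raise LookupError(f"no module {target_module} on this channel")
        # Not ours: pass the request to the next AMB and let the process repeat.
        return self.next_amb.handle(target_module, op, addr, data)


def build_channel(n_modules: int) -> AMB:
    """Return the first AMB of a chain of n_modules modules, numbered from 0."""
    head = None
    for module_id in reversed(range(n_modules)):
        head = AMB(module_id, next_amb=head)
    return head


# The memory controller only ever talks to the first AMB on the channel.
channel = build_channel(4)
channel.handle(2, "write", 0x10, "payload")
print(channel.handle(2, "read", 0x10))
```

Note that a request for the third module passes through two AMBs before it is serviced, which is the hop-by-hop cost of the daisy chain.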

33 Comments

michael2k - Friday, August 11, 2006 - link

fb dimms, found in Mac Pros, are fast serial ram using DDR chips.

OddTSi - Friday, August 11, 2006 - link

Perhaps you missed the part where I said "non-ad hoc." I know what FB-DIMMs are, but they're more of a band-aid fix or a hack than a ground-up design.

michael2k - Friday, August 11, 2006 - link

Maybe you misused "ad hoc". Ad hoc means unplanned and temporary. Why do you think fb-dimm is a band-aid or a hack? Because the RAM chips themselves are not serial in nature?

I mean, are you asking "Are there any designs or plans for serial memory chips?"

To be cost effective you either have to use existing infrastructure, or create a logical evolution/adaptation of the existing infrastructure.

AdvanS13 - Thursday, August 10, 2006 - link

Does anyone know Apple's market segment share for dual processor workstations?

peternelson - Thursday, August 10, 2006 - link

1) I think a gpu swap will need drivers or firmware updating.

2) To buy a commodity sata drive is good but it MIGHT require the apple carrier in order to fit into the chassis.

3) You compare apple memory with commodity FBDIMM.

In the table you quote Apple's UPGRADE (ie on top of base machine) price against the complete cost of the memory. This makes Apple's pricing appear better than it is. Even then it looks like a ripoff, but also consider they are charging you for the base memory in with the basic system price.

aliasfox - Friday, August 11, 2006 - link

As far as I've read, the Mac Pros come with carriers in all four bays - carriers that don't need cables (ribbon or round). Didn't know the backs of SATA drives were similar enough that they could just be plugged in.

JeffDM - Saturday, August 12, 2006 - link

It's not stated in the Anand article, but all drive carriers are included. Apple's Tech Specs page says it, although it could have been more clearly stated. For what it's worth, I think it is worth downgrading the stock drive to 160GB and spending that difference toward additional drives. Going from 250GB to 160GB saves $75; that price difference would buy you a 250GB SATAII drive.

JAS - Thursday, August 10, 2006 - link

It appears that some people managed to receive their Mac Pro quickly.

http://www.macworld.com/weblogs/macword/2006/08/ma...

IntelUser2000 - Wednesday, August 9, 2006 - link

http://www.tomshardware.co.uk/2006/06/26/xeon_wood...

Check out the memory bandwidth benchmark. Quad channel is needed to match Core 2 systems' memory bandwidth using only dual channel. Dual channel on Xeon 5100 drops to approximately 68% of the quad channel bandwidth. That in numbers is 3.8GB/sec. Not to mention Xeon 5100 series has 25% higher memory FSB. It needs 25% higher FSB and 2x memory channels to achieve the same memory bandwidth numbers the desktop Core 2's can. According to memory latency benchmarks, the latency is also significantly higher on the Woodcrest than Conroe's platform.

The chipset on the Xeon 5100 is worse in performance than the chipset on the Core 2. It will NOT beat Core 2 because of the 25% higher FSB, it will rather be SLOWER. Not to mention FB-DIMM makes it even slower.

SpecFP benchmarks also support this:

Xeon 5160(3GHz/1333MHz FSB/4MB L2/8x1024MB FB-DIMM DDR2-667): 2775

Core 2 Extreme X6800(2.93GHz/1066MHz FSB/4MB L2/2x1024MB DDR2-800 5-5-5-15): 3046

Core 2 Extreme gets almost 10% higher in the memory subsystem portion of the SpecCPU 2K benchmark, even though it has 2.2% less clock speed than the Xeon 5160.

Look here: http://www.anandtech.com/IT/showdoc.aspx?i=2772&am...

"ScienceMark didn't agree completely and reported about 65-70 ns latency on the Opteron system and 70-76 ns (230 cycles) on the Woodcrest system. We have reason to believe that Woodcrest's latency is closer to what LMBench reports: the excellent prefetchers are hiding the true latency numbers from Sciencemark. It must also be said that the measurements for the Opteron on the Opteron are only for the local memory, not the remote memory."

Xeon 5160 got 70-76ns in ScienceMark, what did Core 2 get?? It got 36.75. Xeon 5160's ScienceMark latency is higher than Pentium Extreme Edition 965's latency, and twice the latency of Core 2.

Everest shows the same thing: http://pc.watch.impress.co.jp/docs/2006/0801/graph...

Xeon 5160: 99.1

Opteron 285: 57.7 (seems higher than FX-62 results but this system uses Registered DDR DIMM, you can see in AT's results that AM2 further lowers latency)

Core 2 Extreme: 59.8

http://www.anandtech.com/cpuchipsets/showdoc.aspx?...

dcalfine - Wednesday, August 9, 2006 - link

Overall, I think this is a very well-designed system, and in price comparisons with Dell, the Mac Pro came out over a thousand dollars cheaper for a similar system. I may be a fanboy, but I can admit that Apple still has some work to do here. As good as the Mac Pro is, I think Apple needs to start having better video options. For starters, the 5000X chipset is used, which means that there's only one 16X PCIe slot. Also, Apple should get closer with Nvidia and start working in SLI, as well as FX4500X2 and FX5500. A vanilla FX4500 just doesn't make the cut anymore. Also, the 5000X chipset supports one 133MHz PCI-X slot, which, I think, Apple should have incorporated, since not every expansion card has moved to the PCIe format.

I'd like to see some speed comparisons between the Mac Pro and some PCs. I imagine that in most (if not all) tests the Mac Pro will come out slightly slower than the PC due to the bells and whistles of Mac OS X, but I'd like to see just how much slower it runs, and how it runs in Boot Camp running Windows/Linux.

But, yeah. Good goin', Apple!

And AnandTech, get your hands on one of these ASAP!