DirectX 10

Visual changes aside, there are numerous changes under the hood of Vista, and for much of our audience, DirectX 10 will be the biggest of them. DirectX 10 has enjoyed an odd place recently in what amounts to computer mythology, as it has been in development for several years now while Microsoft has extended DirectX 9 to accommodate new technologies. Previously, Microsoft had been doing pretty well at providing yearly updates to DirectX.

Unlike previous iterations of DirectX, version 10 will be launched in a different manner due to all the changes to the operating system needed to support it. Of greatest importance, DirectX 10 will be a Vista-only feature; Microsoft will not be backporting it to XP. DirectX 10 will not only include support for new hardware features, but also relies on some significant changes Microsoft is making to how Windows treats GPUs and interfaces with them, which requires the clean break. This may pose a problem for users who want to upgrade their hardware without upgrading their OS. It is likely that driver support will allow for DX9 compatibility, while support for new features could easily be added through OpenGL extensions, but the exact steps ATI and NVIDIA will take to keep everyone happy will have to unfold over time.

There seems to be some misunderstanding in the community that DX9 hardware will not run with DirectX 10 installed or with games designed using DirectX 10. It has been a while, but this transition (under Vista) will be no different to the end user than the transition to DirectX 8 and 9, where users with older DirectX 7 hardware could still install and play most DX 8/9 games, only without the pixel or vertex shaders. New games which use DirectX 10 under Vista while running on older DX9 hardware will be able to gracefully fall back to the proper level of support. We've only recently begun to see games come out that refuse to run on DX8 level hardware, and it isn't likely we will see DX10-only games for several more years. Upgrading to Vista and DX10 won't absolutely require a hardware upgrade. The benefit comes in the advanced features made possible.
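As a rough illustration of the fallback described above, here is a minimal sketch of how a game might pick the most capable render path the installed hardware supports. The `HardwareLevel` labels, the `RENDER_PATHS` table, and `pick_render_path` are all hypothetical names made up for this example; none of them are part of DirectX, and real games will make this decision through the graphics API and driver.

```python
from enum import IntEnum


class HardwareLevel(IntEnum):
    """Hypothetical labels for GPU generations; not real API constants."""
    DX7 = 7
    DX8 = 8
    DX9 = 9
    DX10 = 10


# Hypothetical render paths a game might ship, keyed by the minimum
# hardware level each one needs.
RENDER_PATHS = {
    HardwareLevel.DX10: "SM4.0 path with geometry shaders",
    HardwareLevel.DX9: "SM2.0/3.0 path",
    HardwareLevel.DX8: "SM1.x path",
}


def pick_render_path(hardware: HardwareLevel) -> str:
    """Return the most capable shipped path that the hardware can run."""
    # Walk the paths from most to least demanding and take the first fit.
    for required in sorted(RENDER_PATHS, reverse=True):
        if hardware >= required:
            return RENDER_PATHS[required]
    raise RuntimeError("no supported render path for this hardware")
```

In this sketch a DX9 card lands on the SM2.0/3.0 path even though the game also ships a DX10 path, while hardware older than every shipped path is refused outright, which is the "refuse to run" case mentioned above.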

While we'll have more on the new hardware features supported by DirectX 10 later this year, we can talk a bit about what we know now. DirectX 10 will be bringing support for a new type of shader, the geometry shader, which operates on whole primitives such as triangles after vertex shading and can modify, add, or discard them before they are rasterized. Microsoft will also be implementing some technology from the Xbox 360, enabling the practical use of unified shaders like we've seen on ATI's Xenos GPU for the 360. Although DirectX 10 compliance does not require unified hardware shaders, the driver interface will be unified. This should make things easier for software developers, while at the same time allowing hardware designers to approach things in the manner they see best. Pixel and vertex shading will also be receiving some upgrades under the Shader Model 4.0 banner.
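To give a feel for what a geometry shader does, here is a toy CPU-side sketch in Python. Real geometry shaders run on the GPU and are written in a shading language; the `geometry_stage` function and the centroid split it performs are purely illustrative assumptions, chosen only to show one primitive going in and several coming out.

```python
from typing import Iterator, Tuple

Vertex = Tuple[float, float, float]
Triangle = Tuple[Vertex, Vertex, Vertex]


def centroid(tri: Triangle) -> Vertex:
    """Average the three corners of a triangle."""
    a, b, c = tri
    return tuple((a[i] + b[i] + c[i]) / 3.0 for i in range(3))


def geometry_stage(tri: Triangle) -> Iterator[Triangle]:
    """Toy stand-in for a geometry shader: one triangle in, three out.

    The input triangle is split at its centroid. A real shader could just
    as well emit nothing (discarding the primitive) or pass it through.
    """
    a, b, c = tri
    m = centroid(tri)
    yield (a, b, m)
    yield (b, c, m)
    yield (c, a, m)
```

The point of the sketch is the shape of the stage rather than the particular subdivision: it receives a whole primitive, not a single vertex or pixel, and decides how many primitives to hand on to the rest of the pipeline.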

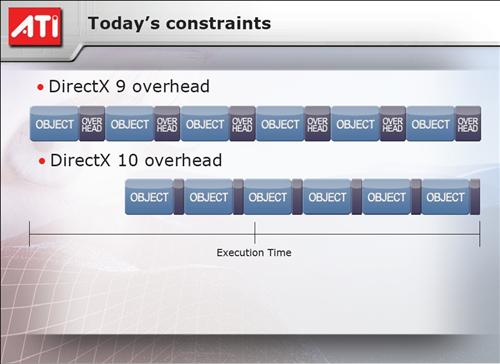

DirectX 10 will also be implementing some significant optimizations to the API itself. The continuous building of DirectX versions upon themselves, along with CPU-intensive pre-rendering techniques such as z-culling and hidden surface removal, has resulted in a fairly large overhead being put on the CPU. Using its developer tools, ATI has estimated that the total CPU utilization spent working directly on graphics rendering (including overhead) can approach 40% in some situations, which has resulted in some games being CPU limited solely due to this overhead. With these API changes, DX10 should remove a good deal of the overhead. A significant amount of CPU time will still be required for rendering (20% in ATI's estimate), but the 20 percentage points saved can ultimately be used to render more frames or more complex ones. Unfortunately, these API changes work in tandem with hardware changes to support them, so these benefits will only be available on DirectX 10 class hardware.
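The arithmetic behind ATI's figures can be worked through in a few lines. This is a back-of-the-envelope sketch that assumes the 40% and 20% estimates apply to the same workload and that everything outside rendering can use the freed CPU time; `cpu_left_for_game` is a helper name invented for this example.

```python
def cpu_left_for_game(render_share: float) -> float:
    """Fraction of CPU time left once rendering work and API overhead are paid."""
    if not 0.0 <= render_share <= 1.0:
        raise ValueError("render_share must be between 0 and 1")
    return 1.0 - render_share


before = cpu_left_for_game(0.40)  # ATI's worst-case estimate for the current API
after = cpu_left_for_game(0.20)   # ATI's estimate with the DX10 changes
relative_gain = after / before - 1.0
print(f"{before:.0%} -> {after:.0%} of CPU time free, a {relative_gain:.0%} relative gain")
```

Under those assumptions, the CPU time left over for everything else grows from 60% to 80%, or by about a third, which is why a game that is CPU limited by this overhead stands to gain the most.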

The bigger story at the moment with DirectX 10, however, is how it also forms the basis of Microsoft's changes to how Windows will treat and interface with GPUs. With current GPU designs and the associated treatment from the operating system, GPUs are treated as single-access devices; one application is effectively given sovereign access and control of the GPU's 3D capabilities at any given moment. To change which application is utilizing these resources, a very expensive context switch must take place that involves swapping out the resources of the first application for those of the second. This can be clearly seen today when Alt+Tabbing out of a resource-intensive game, where it may take several seconds to go in and out of it, and is also part of the reason that some games simply don't allow you to Alt+Tab. Windowed rendering in turn solves some of this problem, but it incurs a very heavy performance hit in some situations, and is otherwise a less than ideal solution.

With Aero now using full 3D acceleration on the desktop, the penalties for these context switches become even more severe, which has driven Microsoft to redesign DirectX and how it interfaces with the GPU. The result is a new group of interface standards that Microsoft is calling the Windows Display Driver Model (WDDM), which will replace the older XP Display Driver Model (XP DDM).

The first iteration of the WDDM, which is what will be shipping with the release version of Vista, marks the start of a multi-year plan by Microsoft to influence hardware design so that Windows can stop treating the GPU as a single-tasking device; as GPUs inevitably evolve towards CPUs, the GPU will become a true multitasking device. As a result, WDDM 1.0 is largely a clean break from the XP DDM. It is based on what current SM2.0+ GPUs can do, with the majority of the change being what the operating system can do to attempt multitasking and task scheduling with modern hardware. For the most part, the changes brought by WDDM 1.0 will go unnoticed by users, but it will be laying the groundwork for WDDM 2.0.

While Microsoft hasn't completely finalized WDDM 2.0 yet, what we do know at this point is that it will require a new generation of hardware, again likely the forthcoming DirectX 10 class hardware, built from the ground up to multitask and handle true task scheduling. The most immediate benefit will be much cheaper context switches, so applications utilizing APIs that work with WDDM 2.0 will be able to switch in and out in much less time. The secondary benefit is that when multiple applications want to use the full 3D features of the GPU at once, such as Aero and an application like Google Earth, their performance will improve due to the faster context switches. At the moment, the cost of context switches means that even with a perfectly split load, neither application gets close to 50% of the GPU time, and thus each falls short of its potential performance in a multitasking environment. Even further in the future will be WDDM 2.1, which will implement "immediate" context switching. A final benefit is that the operating system should be able to make much better use of graphics memory, so it is conceivable that even lower-end GPUs with large amounts of memory will have a place in the world.
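A toy model shows why expensive context switches keep each application well short of half the GPU. The sketch below assumes a simple round-robin schedule in which every hand-off costs a fixed amount of time; the function name and the millisecond figures in the usage note are hypothetical and are not measurements of any real driver model.

```python
def gpu_share_per_app(slice_ms: float, switch_ms: float, apps: int = 2) -> float:
    """Fraction of wall-clock time each app spends doing useful GPU work.

    Toy round-robin model: every app gets one slice of slice_ms, and each
    hand-off to the next app costs switch_ms during which no app makes
    progress.
    """
    if slice_ms <= 0 or switch_ms < 0 or apps < 1:
        raise ValueError("slice_ms must be positive, switch_ms non-negative, apps >= 1")
    cycle_ms = apps * (slice_ms + switch_ms)
    return slice_ms / cycle_ms
```

With two applications on 10 ms slices, a made-up 5 ms switch cost leaves each with `gpu_share_per_app(10, 5)`, or one third of the GPU, while cutting the switch to 0.5 ms raises that to `gpu_share_per_app(10, 0.5)`, roughly 48%. The closer the switch cost gets to zero, the closer each application gets to its ideal 50% split.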

In the meantime, Microsoft's development of WDDM comes at a cost: NVIDIA and ATI are currently busy building and optimizing their drivers for WDDM 1.0. On top of Vista itself being a beta operating system, their beta display drivers are in a very early state, and as we will see, the result is very poor gaming performance at the moment.



rqle - Friday, June 16, 2006

dont really need bad memory module, overclock the memory just a tad bit to give errors while keeping the cpu clock constant or known stable clock.

JarredWalton - Friday, June 16, 2006

That still only works if the memory fails. Plenty of DIMMs can handle moderate overclocks. Anyway, it's not a huge deal I don't think - something that can sometimes prove useful if you're experiencing instabilities and think the RAM is the cause, but even then I've had DIMMs fail MemTest86 when it turned out the be a motherboard issue... or simply bad timings in the BIOS.

PrinceGaz - Saturday, June 17, 2006

Erm, no. Just overclock and/or use tighter-timings on a known good module beyond the point at which it is 100% stable. It might still seem okay in general usage but Memtest86 will spot problems with it. Now see if Vista's memory tester also spots problems with it. Pretty straightforward to test.

xFlankerx - Friday, June 16, 2006

I love how I was browsing the website, and I just refresh the page, and there's a brand new article there...simply amazing.

xFlankerx - Friday, June 16, 2006

Masterful piece of work though. Excellent Job.