The Elder Scrolls IV: Oblivion GPU Performance

by Anand Lal Shimpi on April 26, 2006 1:07 PM EST - Posted in GPUs

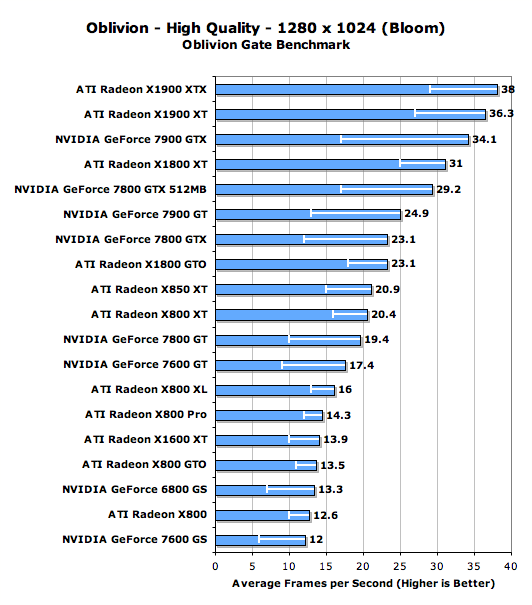

High End GPU Performance w/ Bloom Enabled

The only Shader Model 2.0 cards we have in this comparison are ATI's Radeon X800 series; the rest of the contenders are SM3.0 capable. While the SM2.0 vs. 3.0 distinction doesn't really exist in Oblivion, there is one feature that requires the later spec: lighting. Oblivion's HDR lighting setting requires a Shader Model 3.0 capable card; otherwise you're left with a less precise lighting solution called Bloom, or nothing at all. Bloom naturally runs faster on all GPUs, so we couldn't really throw the X800 numbers in with the rest of the HDR capable cards from above. Instead, we were forced to do a second run of our benchmarks with Bloom enabled on all GPUs to show you X800 owners whether or not it makes sense to upgrade just to get a higher frame rate.

We left the multi-GPU solutions out of these graphs to save time and make them easier to digest; you've already seen how having multiple GPUs improves performance in these tests, so the focus here will be on the single card upgrade paths available to X800 and X850 series owners.

The white lines within the bars indicate minimum frame rate

ATI's X850 and X800 series perform quite well despite their age, with even the X800 GTO outpacing the GeForce 6800 GS. Unfortunately, if you want a good upgrade from your X850/X800 card you're going to have to set your sights (and budget) fairly high. The GeForce 7900 GT and Radeon X1800 GTO are probably your best bets for upgrades, but if you have an X850 XT or X800 XT don't expect the performance difference to be tremendous; instead, you'll have to look towards the Radeon X1900 series.

We continue to see the trend of NVIDIA GPUs posting lower minimum frame rates than ATI GPUs here, which, unfortunately for NVIDIA, leads us to strongly recommend choosing ATI instead.

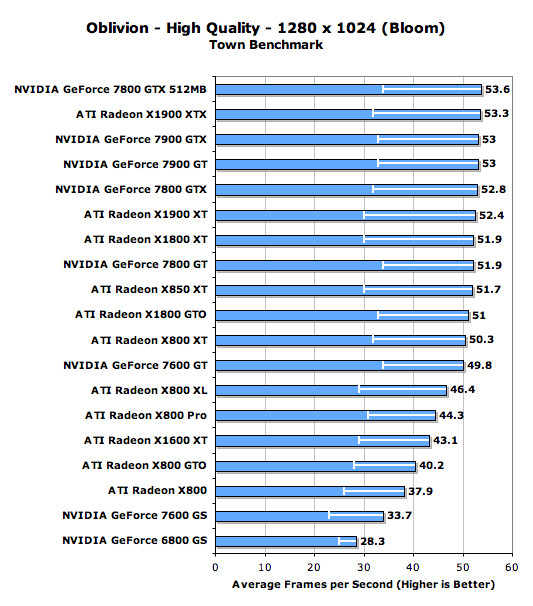

The white lines within the bars indicate minimum frame rate

Performance in our Town benchmarks is pretty much as expected and as we've seen before; the very high end GPUs all hit a performance wall right around 50 fps. The Radeon X800 series starts to bring up the rear but still offers significantly better performance than NVIDIA's GeForce 6800 GS (which is performance-equivalent to the GeForce 6800 GT/Ultra, depending on clock speeds).

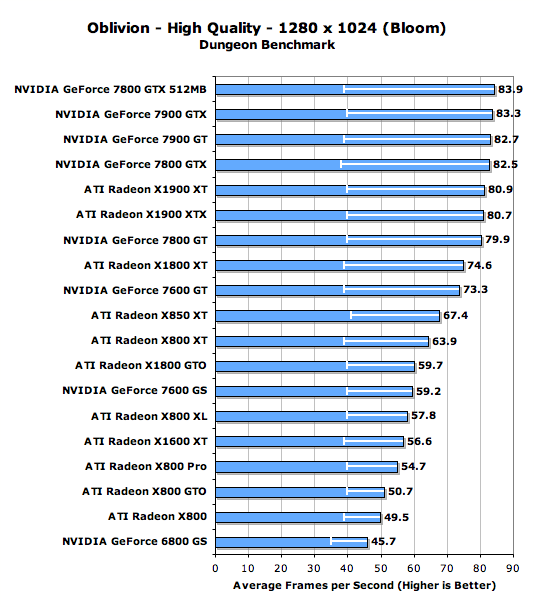

The white lines within the bars indicate minimum frame rate

While all of the GPUs have similar minimum frame rates in our Dungeon test, there is a pretty clear breakdown of performance once we look at cards slower than the GeForce 7800 GT. The standings, however, don't really change from what we've already seen: the X850/X800 cards continue to significantly outperform the GeForce 6800 GS, which makes any upgrade path that yields a reasonable improvement fairly expensive.

100 Comments


smitty3268 - Friday, April 28, 2006 - link

Well, all the tests that had the XT ahead of the XTX were obviously CPU bound, so for all intents and purposes you should have read the performance as being equal.

I would like to know a bit about the drivers though. Were you using Catalyst AI and does it make a difference?

coldpower27 - Thursday, April 27, 2006 - link

Quite a nice post there, well said Jarred.

JarredWalton - Thursday, April 27, 2006 - link

LOL - a Bolivian = Oblivion. Thanks, Dragon! :D (There are probably other typos as well. Sorry.)

alpha88 - Thursday, April 27, 2006 - link

Opteron 165, 7800GTX 256meg

I run at 1920x1200 with every ingame setting set to max, HDR, no AA, (16x AF)

The game runs just fine.

I don't know what the framerates are, but whatever they are, it's very playable.

I have a few graphics mods installed (new textures), and the graphics are good enough that I randomly stop and take screenshots, the view looked so awesome.

z3R0C00L - Thursday, April 27, 2006 - link

The game is a glimpse at the future of gaming. The 7x00 series is old. True, nVIDIA were able to remain competitive with revamped 7800's which they now call 7900's but consumers need to remember that these cards have a smaller die space for a reason... they offer less features, less performance and are not geared towards HDR gaming.

Right now nVIDIA and ATi have a complete role reversal from the x800XT PE vs. 6800 Ultra. The 6800 Ultra performed on par or beat the x800XT PE. The kick was that the 6800 Ultra produced more heat (larger die) was louder (larger cooler) but had more features and was more forward looking. Right now we have the same thing.

ATi's x1900 series has a larger die, produces more heat (larger die means more voltage to operate) and comes with a larger cooler. The upside is that it's a better card. The x1900 series totally dominate the 7900 series. Some will argue about OpenGL others will point to inexistant flaws in ATi drivers... the truth is those who make these comments on both sides are hardware fans. Product wise.. the x1900 series should be the card you buy if you're looking for a highend card... if you're looking more towards the middle of the market the x1800XT is better then the 7900GT.

Remember performance, features and technology.. the x1k series has all of them above the 7x00 series. Larger die space.. more heat. Larger die space.. more features.

Heat/Power for features and performance... hmmm fair tradeoff if you ask me.

aguilpa1 - Thursday, April 27, 2006 - link

inefficient game programming is no excuse to go out and spend 1200 on a graphics system. Games like the old Crytek Cryengine have proven they can provide 100% of the oblivion immersion and eye candy without crippling your graphics system and bring your computer to a crawl, ridicoulous game and test,....nuff said.

dguy6789 - Thursday, April 27, 2006 - link

The article is of a nice quality, very informative. However, what I ponder more than GPU performance in this game is CPU performance. Please do an indepth cpu performance article that includes Celerons, Pentium 4s, Pentium Ds, Semprons, Athlon 64s, and Athlon 64 X2s. Firing squad did an article, however it only contained four AMD cpus that were of relatively the same speed in the first place. I, as well as many others, would greatly appreciate an indepth article speaking of cpu performance, dual core benefits, as well as anything else you can think of.

coldpower27 - Thursday, April 27, 2006 - link

I would really enjoy a CPU scaling article with Intel based processors from the Celeron D's, Pentium 4's, and Pentium D's in this game.

frostyrox - Thursday, April 27, 2006 - link

It's something i already knew but I'm glad Anandtech has brought it into full view. Oblivion is arguably one of the best PC games i've seen in 2006, and could very well turn out to be one of the best we'll see all year. Instead of optimizing the game for the PC, Bethesda (and Microsoft indirectly) bring to the PC a half *ss, amature, embarassing, and insanely bug-ridden 360 Port. I think I have the right to say this because I have a relatively fast PC (a64 3700+, x800 xl, 2gb cosair, sata2 hdds, etc) and I'm roughly 65hrs into Oblivion right now. Next time Bethesda should use the Daikatana game engine - that way gamers with decent PCs might not see framerates of 75 go to 25 everytime an extra character came onto the screen and sneezed. Right now you may be thinking that I'm mad about all this. Not quite. But I will say this much: next time I get the idea of upgrading my pc, I'll have to remember that upgrading the videocard may be pointless if the best games we see this year are 360 ports running at 30 frames. So here's to you Bethesda and Microsoft, for ruining a gaming experience that could've been so much more if you gave a d*mn about pc gamers.

trexpesto - Thursday, April 27, 2006 - link

Maybe Oblivion should be $100?