ATI's New Leader in Graphics Performance: The Radeon X1900 Series

by Derek Wilson & Josh Venning on January 24, 2006 12:00 PM EST - Posted in GPUs

Day of Defeat Performance

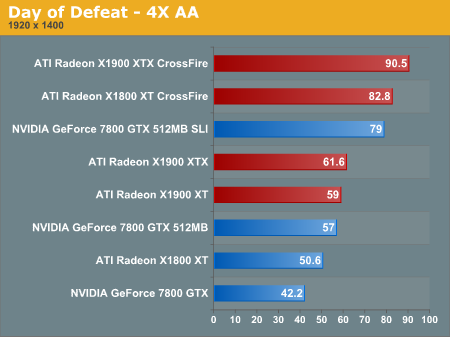

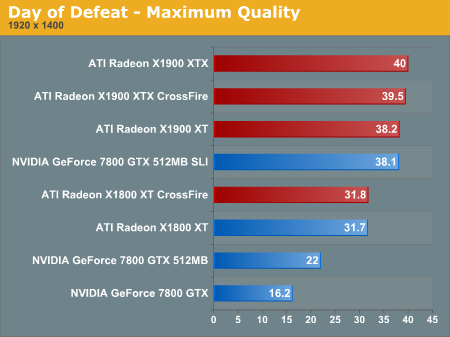

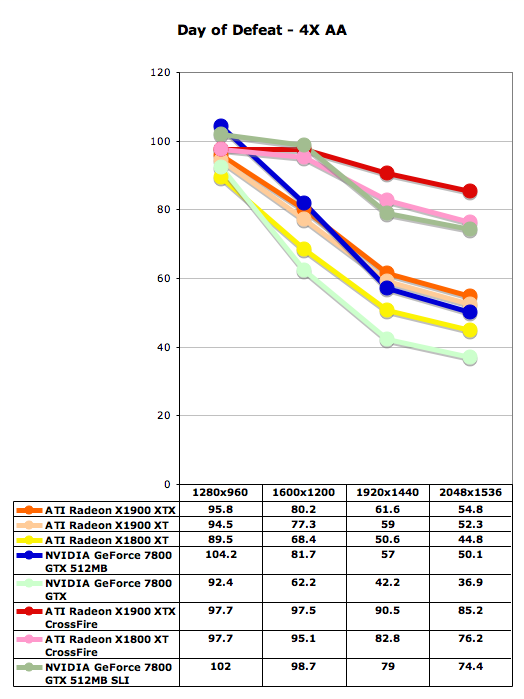

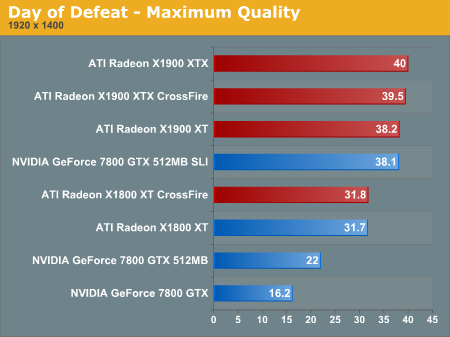

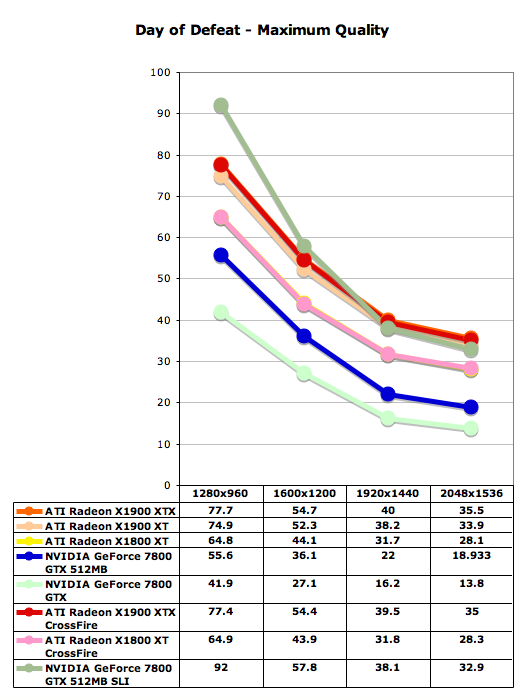

Day of Defeat uses Valve's new HDR technology on the Half-Life 2 engine, which makes it a good performance benchmark. One of the most interesting things to note here is how large a performance hit NVIDIA takes when maximum quality settings are enabled in the control panel: the 7800 GTX 512 delivers roughly half the framerate with the max settings enabled that it does without them.

For this game, we've omitted tests without AA because higher-end cards tend to be CPU-limited without it. With AA enabled, ATI scores only slightly better than NVIDIA, but once maximum quality is enabled in the driver the gap widens considerably and ATI does a much better job across resolutions. ATI delivers playable framerates at the highest resolutions with maximum quality enabled, while NVIDIA, without an SLI setup, can't really manage similar settings (18.9 fps at 2048x1536 and 22 fps at 1920x1440, both with max quality).

120 Comments

tuteja1986 - Tuesday, January 24, 2006

Wait for the FiringSquad review then :) if you want AAx8.

beggerking - Tuesday, January 24, 2006

Did anyone notice? The breakdown graphs don't quite reflect the actual data. The breakdown shows the 1900XTX being much faster than the 7800 512, but in the actual performance graphs the 1900XTX is sometimes outpaced by the 7800 512.

SpaceRanger - Tuesday, January 24, 2006

All the second-to-last section describes is image quality. There was no explanation of power consumption at all. Was this an accidental omission or something else??

Per Hansson - Tuesday, January 24, 2006

Yes, please show us the power consumption ;-)

A few things I would like to see done: put a low-end PCI graphics card in the computer, boot it, and record the power consumption. Leave that card in, then do your normal tests with a single X1900 and then with two, so we get a real reference point for how much power they consume...

Also, please clarify exactly which PSU was used and how the consumption was measured, so we can figure out more accurately how much power the card really draws (when counting in the (in)efficiency of the PSU, that is)...
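A minimal sketch of the arithmetic behind the measurement proposed above, assuming a wall-socket meter and a single, constant PSU efficiency value (real efficiency varies with load, so this is an approximation). The function name and the readings in the usage line are hypothetical illustrations, not measured data. It also assumes the rest of the system draws the same power in both readings; comparing an idle baseline against a game-load reading would also count the CPU's extra draw.

```python
def estimate_card_power(wall_baseline_w: float, wall_with_card_w: float,
                        psu_efficiency: float) -> float:
    """Rough DC power drawn by a graphics card, from two wall-socket readings.

    wall_baseline_w: AC watts at the wall with only the low-end PCI card
    wall_with_card_w: AC watts at the wall with the PCI card still installed
        plus the card under test, same system workload (assumption)
    psu_efficiency: assumed PSU efficiency between 0 and 1, treated as
        constant across both readings (an approximation)
    """
    if not 0.0 < psu_efficiency <= 1.0:
        raise ValueError("psu_efficiency must be in (0, 1]")
    # Extra AC power pulled from the wall once the card is added.
    extra_ac_w = wall_with_card_w - wall_baseline_w
    # The PSU loses part of each extra AC watt as heat, so only
    # efficiency * extra_ac_w reaches the card on the DC side.
    return extra_ac_w * psu_efficiency


if __name__ == "__main__":
    # Hypothetical readings for illustration only.
    print(f"{estimate_card_power(250.0, 370.0, 0.80):.1f} W")
```

Leaving the PCI card installed for both readings, as suggested, means its own draw cancels out in the subtraction.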

peldor - Tuesday, January 24, 2006

That's a good idea for isolating the power of the video card. From the other reviews I've read, the X1900 cards are seriously power hungry, in the neighborhood of 40-50W more than the X1800XT cards. The GTX 512 (and GTX, of course) draw less than the X1800XT, let alone the X1900 cards.

vaystrem - Tuesday, January 24, 2006

Anyone else find this interesting??

Battlefield 2 @ 2048x1536 Max Detail

7800GTX512 33FPS

ATI 1900XTX 32.9FPS

ATI 1900XTX Crossfire 29FPS

-------------------------------------

Day of Defeat

7800GTX512 18.93FPS

ATI 1900XTX 35.5FPS

ATI 1900XTX Crossfire 35FPS

-------------------------------------

Fear

7800GTX512 20FPS

ATI 1900XTX 36FPS

ATI 1900XTX Crossfire 49FPS

-------------------------------------

Quake 4

7800GTX512 43.3FPS

ATI 1900XTX 42FPS

ATI 1900XTX Crossfire 73.3FPS
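A quick way to read the numbers in this comment is as a CrossFire scaling factor: dual-card fps divided by single-card fps for the X1900 XTX. This is a small illustrative sketch; the dictionary and function names are hypothetical, and the values are only the ones quoted above.

```python
# Frame rates quoted in vaystrem's comment (fps), per game:
# (7800 GTX 512, X1900 XTX, X1900 XTX CrossFire)
RESULTS = {
    "Battlefield 2": (33.0, 32.9, 29.0),
    "Day of Defeat": (18.93, 35.5, 35.0),
    "FEAR": (20.0, 36.0, 49.0),
    "Quake 4": (43.3, 42.0, 73.3),
}


def scaling_factor(single_fps: float, dual_fps: float) -> float:
    """Dual-card fps divided by single-card fps; below 1.0 means the pair was slower."""
    if single_fps <= 0:
        raise ValueError("single_fps must be positive")
    return dual_fps / single_fps


if __name__ == "__main__":
    for game, (_gtx512, single, dual) in RESULTS.items():
        print(f"{game}: {scaling_factor(single, dual):.2f}x")
```

On these figures, Battlefield 2 and Day of Defeat come out at about 0.88x and 0.99x, while FEAR and Quake 4 reach roughly 1.36x and 1.75x. DerekWilson's reply notes that the max detail settings enabled SuperAA modes, so these ratios compare different AA workloads, not just one card versus two.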

DerekWilson - Tuesday, January 24, 2006

Be careful here... these max detail settings enabled SuperAA modes, which really killed performance, especially with all the options flipped to quality. We're working on getting some screens up to show the IQ difference, but suffice it to say that the max detail settings are very apples to oranges.

We would have seen performance improvements if we had simply kept using 6xAA...

DerekWilson - Tuesday, January 24, 2006

To further clarify: FEAR didn't play well when we set AA outside the game, so its max quality run ended up using the in-game 4xAA setting. That's why we see a performance improvement there.

For Day of Defeat, forcing AA/AF through the control panel works well, so we were able to crank up the quality.

I'll try to go back and clarify this in the article.

vaystrem - Wednesday, January 25, 2006

I'm not sure how that justifies what happens. Your argument is that these are the VERY highest settings, so it's OK for the 'dual' 1900XTX to perform worse than a single-card alternative? That doesn't seem to make sense, and it speaks poorly of ATI's implementation.

Lonyo - Tuesday, January 24, 2006

The XTX, especially in Crossfire, does seem to give a fair boost over the XT and XT Crossfire in a number of tests.