ATI's X1000 Series: Extended Performance Testing

by Derek Wilson on October 7, 2005 10:15 AM EST - Posted in GPUs

Day of Defeat: Source Performance

Rather than test Half-Life 2 once again, we decided to use a game that pushes the engine further. The reworked Day of Defeat: Source takes four levels from the original game and adds not only the same brush-up given to Counter-Strike: Source, but HDR capabilities as well. The new Source engine supports games with and without HDR; whether a game can use HDR depends on whether the art assets needed to do the job are available.

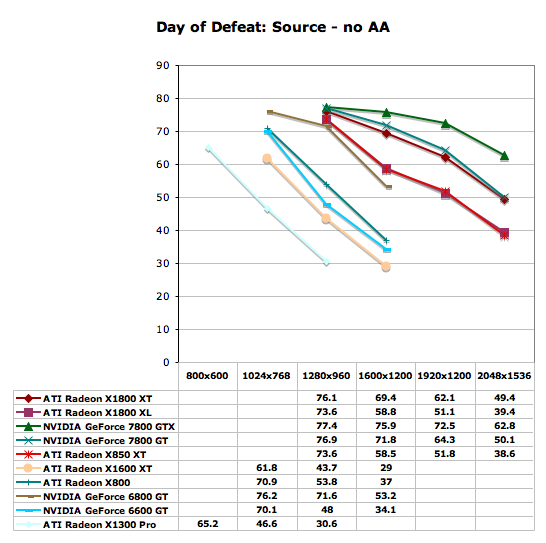

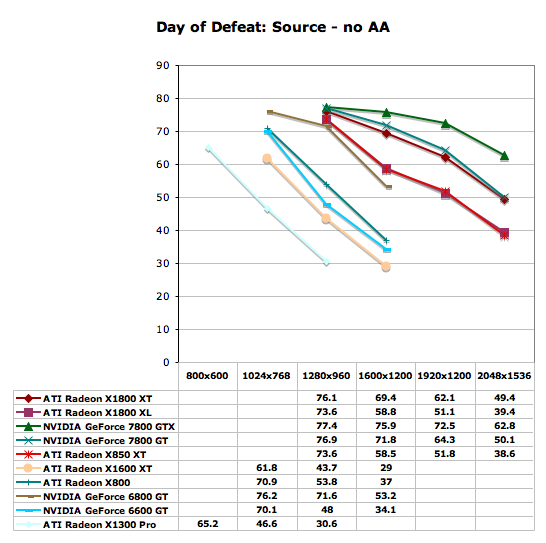

To push this test to the max, we tested at the highest settings possible. This includes enabling the "reflect all" and "full HDR" options. Our first test was run with no antialiasing and trilinear filtering, and our second set of numbers was generated after 4x antialiasing and 8x anisotropic filtering were enabled.

From the looks of our graph, we hit a pretty hard CPU barrier near 80 frames per second. The 7800 GTX sticks the closest to this limit for the longest, but still can't help but fall off when pushing two to three megapixel resolutions. The 7800 GT manages to out-perform the X1800 XT, but more interestingly, the X850 XT and X1800 XL put up nearly identical numbers in this test. The X1300 Pro and the X1600 XT parallel each other as well, with the X1600 XT giving almost the same numbers as the X1300 Pro one resolution higher. The 6600 GT (and thus the 6800 GT as well) out-performs the X1600 XT pretty well.
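To put "two to three megapixel resolutions" in concrete terms, the per-frame pixel count of each resolution named in this article can be worked out directly. The short sketch below is only illustrative arithmetic; the function names `megapixels` and `pixel_table` are made up for this example and have nothing to do with the benchmark itself.

```python
# Resolutions mentioned in this article (width, height).
RESOLUTIONS = [(1024, 768), (1280, 960), (1600, 1200), (1920, 1200), (2048, 1536)]


def megapixels(width, height):
    """Return the number of pixels in one frame, in millions."""
    return width * height / 1_000_000


def pixel_table(resolutions=RESOLUTIONS):
    """Return (label, megapixels rounded to 2 places) for each resolution."""
    return [(f"{w}x{h}", round(megapixels(w, h), 2)) for w, h in resolutions]


for label, mp in pixel_table():
    print(f"{label}: {mp:.2f} MP")
```

Only 1920x1200 (about 2.3 MP) and 2048x1536 (about 3.1 MP) land in the two to three megapixel range, and the latter pushes four times as many pixels per frame as 1024x768.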

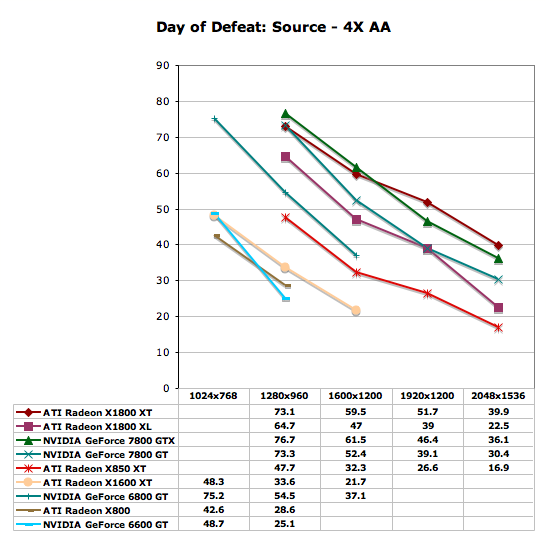

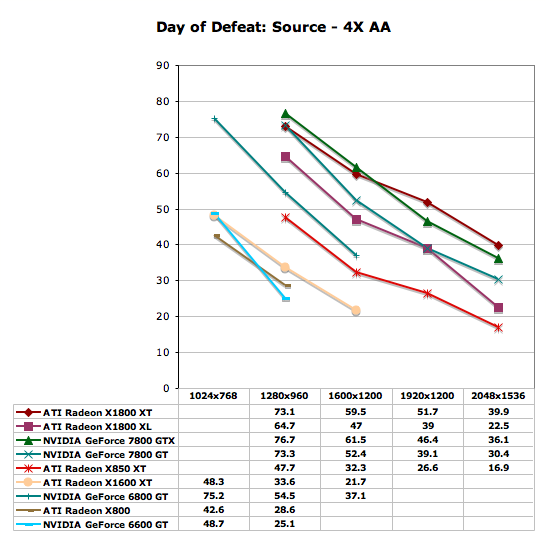

Antialiasing changes the performance trends a bit, but at lower resolutions, we still see our higher end parts reaching up towards that 80 fps CPU limit. The biggest difference that we see here is that the X1000 series parts are able to gain the lead in some cases as resolution increases with AA enabled. The X1800 XT takes the lead from the 7800 GTX at 1920x1200 and at 2048x1536. The 7800 GT manages to hold its lead over the X1800 XL, but neither is playable at the highest resolution with 4xAA/8xAF enabled. The 6600 GT and X1600 XT stop being playable at anything over 1024x768 with AA enabled. On those parts, gamers are better off just increasing the resolution to 1280x960 with no AA.

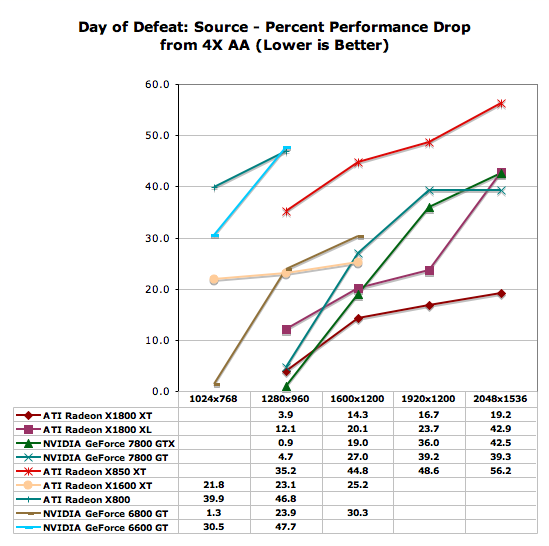

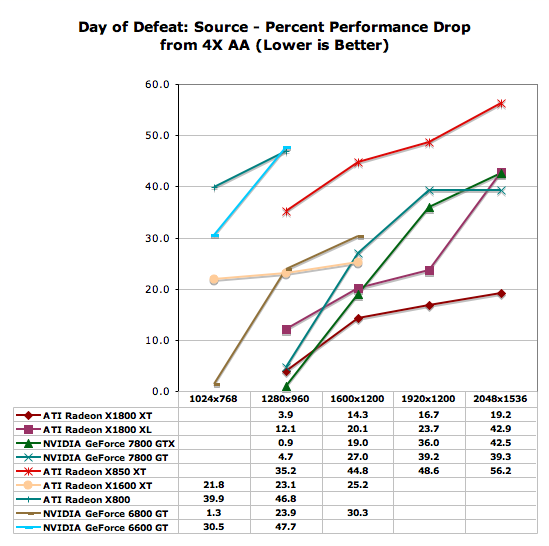

The 7800 series parts try to pretend that they scale as well as the X1800 series at 1600x1200 and below when enabling AA. The X850 XT scales worse than the rest of the high end, but this time, the X1600 XT is able to show that it handles the move to AA better than either of the X8xx parts tested and better than the 6600 GT as well. The 6800 GT takes the hit from antialiasing better than the X1600 XT though.
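For readers who want to reproduce this kind of scaling comparison from their own runs, here is a minimal sketch. It assumes that "the hit from antialiasing" is expressed as the percentage of frame rate lost relative to the no-AA result, which is an assumption about how the scaling graphs are built. The function name `aa_hit_percent` and the frame rates are hypothetical and are not measurements from this article.

```python
def aa_hit_percent(fps_no_aa, fps_with_aa):
    """Percentage of frame rate lost when AA/AF is enabled.

    A smaller value means the card scales better with AA.
    """
    if fps_no_aa <= 0:
        raise ValueError("fps_no_aa must be positive")
    return (fps_no_aa - fps_with_aa) / fps_no_aa * 100.0


# Hypothetical frame rates (no AA, 4xAA/8xAF); NOT data from this review.
example_runs = {
    "card A": (80.0, 60.0),
    "card B": (50.0, 30.0),
}

for card, (no_aa, with_aa) in example_runs.items():
    print(f"{card}: {aa_hit_percent(no_aa, with_aa):.1f}% drop with AA")
```

Comparing the percentage drop rather than the raw frame rates is what allows a slower card to "scale better" than a faster one.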

Doom 3 showed NVIDIA hardware leading at every step of the way in our previous tests. Does anything change when looking at numbers with and without AA enabled?


93 Comments


flexy - Saturday, October 8, 2005 - link

there is an interesting article (in german, sorry) where they compare the old cards' (X850) performance with the new adaptive antialiasing turned on. You can see that some games do pretty well with minor performance loss, but e.g. FarCry gets a HUGE hit by enabling adaptive antialiasing. I also did some tests on my own (X850XT) and the hit is as big as 50% in the FarCry benchmark.

My question would be how the new cards handle this and how big the performance hit would be eg. with a 1800XL/XT in certain engines.

Also, i think the 6xAntiAliasing modes are a bit under-represented - i for my part am used to playing HL2 at 1280x1024 with 6xAA and 16xAF....and i am not that interested in 4xAA 8xAF since i ASSUME that a high-end card like the 1800XT should be pre-destined to run the higher AA/AF modes PLUS adaptive antialiasing. Maybe also please note that a big number (?) of people might not even be able to run monster resolutions like 2048x but MIGHT certainly be interested in resolutions up to 1600x but with max AA/AF/adaptive modes on.

flexy - Saturday, October 8, 2005 - link

here the link, sorry forgot above: http://www.3dcenter.org/artikel/2005/10-04_b.php

cryptonomicon - Friday, October 7, 2005 - link

I want to see ATI release a product that takes back the performance crown.. only then they can sit on the high price premiums for their cards again because they own the highest performance. Until then they can get busy slashing prices...

ElFenix - Friday, October 7, 2005 - link

you guys fail to realize that, at retail prices for nvidia cards, the ati cards slot quite nicely. best buy and compusa still sell 6600GTs for nearly $200, and 6800GTs for nearly $300. so, comparing those prices to the ATi prices reveals that ATi is quite price competitive. of course, no one who reads this site buys at retail (unless it's a hot deal), but there isn't any reason to think that ATi cards can't come down in price as quickly as the nvidia cards.

bob661 - Saturday, October 8, 2005 - link

Ummm yeah. We, the geeks, don't shop at CompUSA or Best Buy. Therefore, ATI's new hardware is NOT price competitive. Also, if Nvidia 6600GT's are $200 and 6800GT's are $300 at said stores, how would the ATI cards magically not get a price gouging too?

ElFenix - Tuesday, October 11, 2005 - link

did you even bother to read my post before shooting off your idiotic post? i said that no one here shops at best buy. and you don't know if ati's hardware is price competitive or not because, at the moment, you can't buy it. once it gets out into the channel maybe newegg and zzf and monarch will stock them at competitive prices as the nvidia parts. maybe not. but you don't know that yet, so making blanket statements like 'ati is not price competitive' is stupid!

i'm not really sure what this 'price gouging' is you're referring to, but because you've already demonstrated your inability to comprehend the english language i'm going to assume its because you think best buy and compusa are selling for more than msrp. they're not. they are selling at msrp. and at best buy and compusa ati cards will sell at msrp. and ati cards at msrp are quite price competitive with nvidia cards at msrp.

shabby - Friday, October 7, 2005 - link

Lets see some hdr+aa benchmarks.

DerekWilson - Saturday, October 8, 2005 - link

There are no games where we can test this feature yet

TinyTeeth - Friday, October 7, 2005 - link

You make up for the flaws of the last review and show that you still are the best hardware site out there. Keep it up!

jojo4u - Friday, October 7, 2005 - link

The graphs give a nice overview, good work. Please consider including in the graphs what AF level was used. This is something all recent reviews here have been lacking.

About the image quality: The shimmering was greatly reduced with the fixed driver (78.03). So it's down to NV40 level now. But 3dCenter.de[1] and Computerbase.de conclude that only enabling "high quality" in the Forceware brings comparable image quality to "A.I. low". Perhaps you find the time to explore this issue in the image quality tests.

[1] http://www.3dcenter.de/artikel/g70_flimmern/index_...

This article is about the unfixed quality. But to judge the G70 today, have a look at the 6800U videos.

http://www.hexus.net/content/item.php?item=1549&am...

This article shows the performance hit of enabling "high quality"