Gigabyte's i-RAM: Affordable Solid State Storage

by Anand Lal Shimpi on July 25, 2005 3:50 PM EST - Posted in Storage

We All Scream for i-RAM

Gigabyte sent us the first production version of their i-RAM card, marked as revision 1.0 on the PCB. There were some obvious changes between the i-RAM that we received and what we saw at Computex.

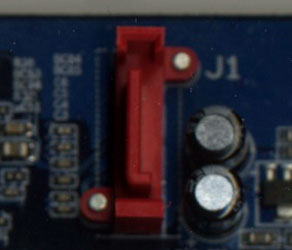

First, the battery pack is now mounted in a rigid holder on the PCB. The contacts are on the battery itself, so there's no external wire to deliver power to the card.

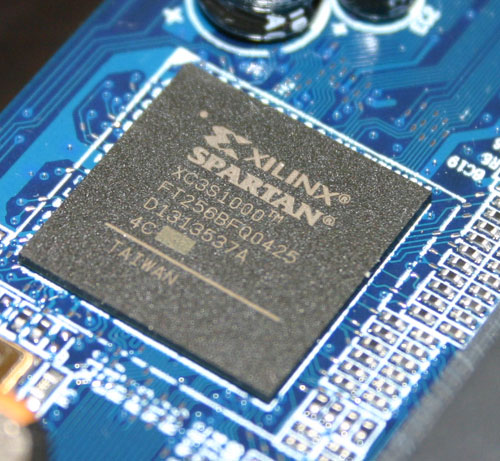

The Xilinx FPGA has three primary functions: it acts as a 64-bit DDR memory controller, a SATA controller and a bridge chip between the memory and SATA controllers. The chip takes requests over the SATA bus, translates them and then sends them off to its DDR controller to write/read the data to/from memory.
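To make the bridging step concrete, here is a minimal sketch of how a block-style request could be translated into a memory access. It is not Gigabyte's or Xilinx's actual logic: the 512-byte sector size, the flat linear address mapping, and the names `lba_to_byte_range` and `RamDisk` are all assumptions made for illustration.

```python
SECTOR_BYTES = 512  # assumed sector size; the article does not state it


def lba_to_byte_range(lba, sector_count, capacity_bytes):
    """Translate a (start sector, sector count) request into a byte range in memory."""
    start = lba * SECTOR_BYTES
    length = sector_count * SECTOR_BYTES
    if lba < 0 or sector_count <= 0 or start + length > capacity_bytes:
        raise ValueError("request falls outside the RAM disk")
    return start, length


class RamDisk:
    """Hypothetical stand-in for the bridge: block requests in, memory accesses out."""

    def __init__(self, capacity_bytes):
        self.memory = bytearray(capacity_bytes)  # plays the role of the DIMMs

    def write(self, lba, data):
        if len(data) % SECTOR_BYTES != 0:
            raise ValueError("data must be a whole number of sectors")
        start, length = lba_to_byte_range(
            lba, len(data) // SECTOR_BYTES, len(self.memory)
        )
        self.memory[start:start + length] = data

    def read(self, lba, sector_count):
        start, length = lba_to_byte_range(lba, sector_count, len(self.memory))
        return bytes(self.memory[start:start + length])
```

The point of the sketch is that there is no seek step anywhere: once the sector number is converted to an address, the read or write is a direct memory access.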

Gigabyte has told us that the initial production run of the i-RAM will be only 1,000 cards, available in August at a street price of around $150. That is a lot higher than the $50 we were told at Computex, although we would expect the price to drop over time.

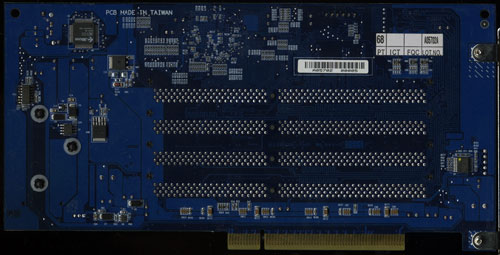

The i-RAM is outfitted with four 184-pin DIMM slots that will accept any DDR DIMM. The memory controller in the Xilinx FPGA operates at 100MHz (DDR200) and can actually support up to 8GB of memory. However, Gigabyte says that the i-RAM card itself only supports 4GB of DDR SDRAM. We didn't have any 2GB unbuffered DIMMs on hand to test the card's true limit, so we can only report Gigabyte's stated 4GB ceiling.
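For a sense of scale, the peak bandwidth of a 64-bit DDR200 interface can be worked out from the figures above. The comparison against a 150MB/s SATA link is an assumption on our part (it is the usual payload rate of first-generation 1.5Gb/s SATA); the helper name `ddr_peak_mb_per_s` is purely illustrative.

```python
def ddr_peak_mb_per_s(clock_mhz, bus_bits):
    """Peak DDR bandwidth in MB/s: two transfers per clock, bus_bits / 8 bytes each."""
    return clock_mhz * 2 * bus_bits // 8


# 100MHz clock and 64-bit bus, as described for the FPGA's memory controller
memory_peak = ddr_peak_mb_per_s(100, 64)

# Assumed link rate: first-generation SATA (1.5Gb/s line rate, 8b/10b encoded)
SATA_MB_PER_S = 150

headroom = memory_peak / SATA_MB_PER_S
print(f"memory peak: {memory_peak} MB/s, roughly {headroom:.1f}x the assumed SATA link")
```

Under these assumptions, even slow DDR200 memory leaves plenty of headroom, so the SATA link rather than the DRAM would be the ceiling on transfer rate.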

The Xilinx FPGA also won't support ECC memory, although we have mentioned to Gigabyte that a number of users have expressed interest in having ECC support in order to ensure greater data reliability.

Although the i-RAM plugs into a conventional 3.3V 32-bit PCI slot, it doesn't use the PCI connector for anything other than power. All data is transferred via the Xilinx chip and over the SATA connector directly to your motherboard's SATA controller, just like any regular SATA hard drive.

With SATA as the only data interface, Gigabyte made the i-RAM far more useful than software-based RAM drives because to the OS and the rest of your system, the i-RAM appears to be no different than a regular hard drive. You can install an OS, applications or games on it, you can boot from it and you can interact with it just like you would any other hard drive. The difference is that it is going to be a lot faster and also a lot smaller than a conventional hard drive.

The size limitations are pretty obvious, but the performance benefits really come from the nature of DRAM as a storage medium vs. magnetic hard disks. We have long known that modern day hard disks can attain fairly high sequential transfer rates of upwards of 60MB/s. However, as soon as the data stops being sequential and is more random in nature, performance can drop to as little as 1MB/s. The reason for the significant drop in performance is the simple fact that repositioning the read/write heads on a hard disk takes time as does searching for the correct location on a platter to position them. The mechanical elements of hard disks are what make them slow, and it is exactly those limitations that are removed with the i-RAM. Access time goes from milliseconds (1 x 10^-3) down to nanoseconds (1 x 10^-9), and transfer rate doesn't vary, so it should be more consistent.
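As a rough illustration of why random access hurts a mechanical disk so much more than DRAM, the sketch below models effective throughput as block size divided by access time plus transfer time. Only the 60MB/s sequential figure comes from the text; the 4KB block size, the 10ms disk access time, the 100ns DRAM access time and the 150MB/s link rate are illustrative assumptions, and the model ignores protocol and controller overhead.

```python
def effective_mb_per_s(block_kb, access_time_s, transfer_mb_per_s):
    """Throughput when every block costs one access delay plus its transfer time."""
    block_mb = block_kb / 1024
    transfer_time_s = block_mb / transfer_mb_per_s
    return block_mb / (access_time_s + transfer_time_s)


BLOCK_KB = 4  # assumed size of each random request

# Hard disk: assumed 10ms to seek and wait for the platter, 60MB/s once positioned
hdd_random = effective_mb_per_s(BLOCK_KB, 10e-3, 60)

# DRAM behind an assumed 150MB/s link: assumed 100ns access time
ram_random = effective_mb_per_s(BLOCK_KB, 100e-9, 150)

print(f"hard disk, random 4KB reads: {hdd_random:.2f} MB/s")
print(f"RAM disk, random 4KB reads:  {ram_random:.2f} MB/s")
```

With these inputs the disk spends almost all of its time repositioning, while the RAM disk stays close to its link rate whether the access pattern is sequential or random.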

Since it acts as a regular hard drive, theoretically, you can also arrange a couple of the i-RAM cards together in RAID if you have a SATA RAID controller. The biggest benefit to a pair of i-RAM cards in RAID 0 isn't necessarily performance, but now you can get 2x the capacity of a single card. We are working on getting another i-RAM card in house to perform some RAID 0 tests. However, Gigabyte has informed us that presently, there are stability issues with running two i-RAM cards in RAID 0, so we wouldn't recommend pursuing that avenue until we know for sure that all bugs are worked out.
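The capacity doubling follows from how RAID 0 stripes data across its members. The sketch below shows the standard striping arithmetic in general terms; the stripe size and the function names are assumptions for illustration, and nothing here reflects how any particular SATA RAID controller is implemented.

```python
def raid0_capacity_gb(member_capacities_gb):
    """Usable RAID 0 capacity: the smallest member times the number of members."""
    return min(member_capacities_gb) * len(member_capacities_gb)


def raid0_locate(logical_block, num_disks, stripe_blocks):
    """Map a logical block to (disk index, block offset on that disk)."""
    stripe_index, offset = divmod(logical_block, stripe_blocks)
    disk = stripe_index % num_disks            # stripes rotate across the disks
    stripe_on_disk = stripe_index // num_disks  # how many stripes precede it on that disk
    return disk, stripe_on_disk * stripe_blocks + offset


# Two 4GB cards, the maximum Gigabyte quotes for a single i-RAM
print(raid0_capacity_gb([4, 4]))

# With an assumed stripe of 4 blocks, consecutive stripes alternate between the cards
for block in (0, 4, 8):
    print(block, raid0_locate(block, num_disks=2, stripe_blocks=4))
```

Because every stripe lives on exactly one member, a fault on either card loses the whole volume, which is worth keeping in mind given the stability issues Gigabyte mentions.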

133 Comments

NStriker - Thursday, July 28, 2005

Anand quotes $90 per GB of RAM here, but I'm wondering if the I-Ram works with the much cheaper high-density junk you see out there all the time. Like 128Mx4 modules. On motherboards, usually only SiS chipsets can handle that type of RAM, but there's no reason the Xilinx FPGA couldn't.

Right now I'm seeing 1GB of that stuff for $63.

jonsin - Thursday, July 28, 2005

Since the Athlon64 north bridge no longer needs a memory controller, why shouldn't the original memory controller be used for the iRam's purpose? By supporting both SDRAM and DDR RAM, people could make use of their old RAM (which is no longer useful nowadays) and turn it into a physical RAM drive.

Spare some space on the motherboard for additional DDR module slots exclusively for iRam, and an additional daughter card could be added for even more slots.

Would it be a cheaper solution for iRam ultimately?

jonsin - Thursday, July 28, 2005

What's more, power could be drawn directly from the ATA power on the motherboard. With an approach similar to the iRam's, an extra battery could power the RAM for a certain number of hours.

Giving the north bridge DDR/SDRAM capability is not new technology; every chipset company has it. They could just add the original memory controller at lower performance (DDR200, so more modules can be supported at lower cost) to the north bridge, and the cost overhead would be relatively small.

I think the extra cost comes from the extra motherboard layout, north bridge die size and chipset packaging cost (more pins). I suppose it could cost as little as $20?

jonsin - Thursday, July 28, 2005

Moreover, the original SATA physical link could be omitted, as the controller in the north bridge could communicate directly with the SATA controller internally (south bridge through HT?). In that case, would performance increase considerably and the overall layout be tidier? (No need for an external cable and cards.)

mindless1 - Friday, July 29, 2005

NO these are all problems. The purpose is to have a universal platform support that is gentle on power consumption. That means a tailored controller and even then we're seeing the main limit is the battery. "Tidy" is an unimportant human desire, particularly less important inside a closed PC case. All they have to do is route bus traces well on the card and be done.

slumbuk - Wednesday, July 27, 2005

HP sell an add on for their DL 380 server for $200 (at discount) that gets you 128MB of disk write cache... makes a good system also fast for disk writes.

This card could be used by linux vendors to enable file-system data and control logging for similar money for GB(s) of write cache... Cheap, reliable, fast general purpose file servers.. that have fast disk write speed without risking data loss.. Speed meaning no disk-head latency, no rotational latency - just transfer time.

It would sell better with ECC memory.. or the ability to use two cards in a mirror.. at least to careful server buyers..

slumbuk - Wednesday, July 27, 2005

You could set up the iRam drive as the journal device for Reiser or Ext-3 logged file systems - and log both control info and data - for fast, safe systems without too much fuss.

I think I want one - but not as much as I want other stuff..

AtaStrumf - Wednesday, July 27, 2005

Interesting but hardly useful for most. Kind of makes sense to only make 1000, but of course that's where the $150 price tag comes from.

rbabiak - Wednesday, July 27, 2005

I guess it would add to the base board cost, but a SATA controller on the PCI card would make it a little nicer, as then you are not taking up one of your SATA channels. I only have 2 and they are currently both used for a RAID 0.

Also, if they made the PCI card a SATA interface and then short-circuited the back end to connect directly to the memory, wouldn't they then be able to get much higher transfer speeds than SATA, and yet all the existing SATA drivers could be used with it, given that they emulate an existing SATA interface?

DerekWilson - Thursday, July 28, 2005

Better to use the onboard ports ...

A 33MHz/32-bit PCI slot only grants a max of 133MB/sec. This would make the PCI bus a limiting factor to the SATA controller.

Step beyond that and remember that the PCI bus is shared among all your PCI cards. Depending on the motherboard some onboard devices can be built onto the PCI bus.

With bandwidth on current southbridge chips already being dedicated to SATA (or SATA-II), it would be a waste in more ways than one to build a SATA controller into the i-RAM.

That's my take on it anyway.

Derek Wilson