The Quest for More Processing Power, Part Two: "Multi-core and multi-threaded gaming"

by Johan De Gelas on March 14, 2005 12:05 AM EST- Posted in

- CPUs

Threads & Performance

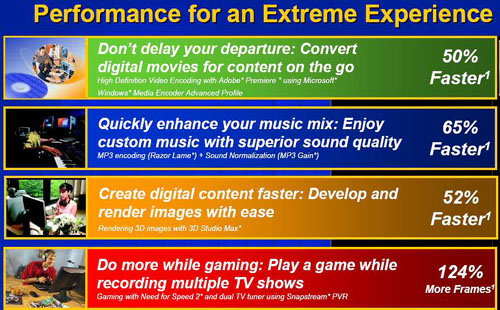

"Threads" is a popular discussed subject. Therefore, we like to give a small introduction to those of you who are not familiar with threads. To understand threads, you first must understand processes. Any decent OS controls the memory allocation to the different programs or processes. A process gets its own private, virtual address space in memory from the OS. Thus, a process cannot communicate/exchange data with other processes without the help of the kernel, the heart of the OS that controls everything. Processes can split up in threads, parallel tasks that share the virtual address space, which can exchange data very quickly without intervention of the OS (global, static, and instance fields, etc.).The thread is the entity to which the modern operating system (Windows NT based, Solaris, Linux) assigns CPU time. While you could split a CPU intensive program in processes (modern OS sees it as 1 process consisting of one thread), threads of the same process have much less overhead and synchronize data much quicker. The operating system assigns CPU time to running threads based on their priority. Performance gains of multi-CPU or multi-core CPU configurations are only high if: You have more than one CPU intensive thread; The threads are balanced - there is not one very intensive and a few others that are hardly CPU intensive; Synchronization between threads (shared data) either happens quickly, thanks to fast interconnects, or little synchronization is necessary; The OS provides well-tuned load-balanced scheduling; The threads are cache friendly (memory latency!) and do not push the memory bandwidth to the limits. In that case, you may typically expect a 70% to 99% performance speed-up, thanks to the second core. Be warned that Intel was already showing performance increases, which are not realistic "up to 124%". [1]

The benchmarks compare a Pentium 4 EE 840, a dual-core Pentium 4 at 3.2 GHz (1 MB L2), to a 3.73 GHz Pentium 4 EE with 2 MB L2. The last benchmark in particular, a game running in the foreground with two PVRs (Personal Video Recorders) and tuners running in the background, gives a very weird result. How can a slower dual-core be more than 100% faster than a single core with a higher clock speed, bigger caches and a faster FSB? When we first asked Intel, they pointed to the platform (newer chipset, etc.), but no new chipset can make up for a 33% slower FSB.

We suspected that different thread priorities (giving the game thread a higher priority) might have been the explanation, but Intel's engineers had another interesting explanation. They pointed out that the Windows scheduler can sometimes be inefficient when running many heavy tasks on a single CPU and might have given the game less CPU time than normal. The Windows scheduler didn't have that problem when two CPUs were present: there was less context switching between threads, and no reason to starve the game of CPU time. Prepare for a load of hard-to-interpret benchmarks on the Internet...

Threads & Programming

Programming with threads brings many advantages, especially on dual-cores. Threads with long-running, CPU intensive processing can no longer give the system a sluggish, unresponsive feeling when you want to do something else at the same time. The OS scheduler should take care of that as long as the CPU is fast enough, but the Intel benchmarks above show you that that is only true in theory. Dual and multi-core can definitely help here. Threads make a system more responsive and offer a very nice performance boost on multi-CPU systems.

But the other side of the coin is complexity. Running separate tasks that do not need to share data in separate threads is the easiest part of making a program more suitable to multi-core CPUs. But that was done a long time ago, and the real challenge is to handle threads that have to share data. The programmer also has to keep in mind that a high number of threads introduces overhead in the form of (unnecessary) context switches, even on dual-core CPUs.

A nasty problem that might pop up is a "deadlock": two threads are each waiting for the other to complete, resulting in neither thread ever completing. A "race" between two threads might sound speedier, but it means that the result of a program's operation depends on which of two or more threads completes first. These problems become exponentially worse as more and more threads are able to run into them. Both the Java and .Net ("Threadpool") platforms provide classes and tools to deal with thread management - programmers are not left on their own. The problem is not creating threads, but debugging multithreaded programs. The result is that multithreading has been used sparingly and with as few threads as possible to keep complexity down. But the right tools are coming, right?
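As a rough illustration of shared data, races and deadlocks, here is a minimal Python sketch. The `Account` class, `transfer` and `run_opposing_transfers` are hypothetical names invented for this example; it shows one common way to avoid a deadlock (always taking locks in the same order), not the only one.

```python
import threading


class Account:
    """Hypothetical piece of shared data, protected by its own lock."""

    def __init__(self, name, balance):
        self.name = name
        self.balance = balance
        self.lock = threading.Lock()


def transfer(src, dst, amount):
    if src is dst:
        return  # nothing to do, and locking the same lock twice would hang

    # Deadlock avoidance: always acquire the two locks in a fixed global
    # order (here: by account name). Two threads transferring in opposite
    # directions can then never each hold one lock while waiting for the other.
    first, second = sorted((src, dst), key=lambda acct: acct.name)
    with first.lock, second.lock:
        # Holding both locks prevents a race on the two balances.
        src.balance -= amount
        dst.balance += amount


def run_opposing_transfers(rounds=1000):
    a = Account("a", 1000)
    b = Account("b", 1000)

    def worker(src, dst):
        for _ in range(rounds):
            transfer(src, dst, 1)

    # One thread moves money a -> b, the other b -> a, at the same time.
    threads = [
        threading.Thread(target=worker, args=(a, b)),
        threading.Thread(target=worker, args=(b, a)),
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return a.balance, b.balance
```

If each thread instead locked its own `src` first and `dst` second, the two threads could end up holding one lock each and waiting forever for the other, which is exactly the deadlock described above.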

Multi-threading toolbox

Intel does provide a few interesting tools for multithreading. OpenMP is the industry standard for "portable" multi-threaded application development, and can do fine grain (loop level) and large grain (function level) threading.
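The difference between fine grain and large grain threading can be sketched as follows. This is not OpenMP: it is a Python analogy using the standard `concurrent.futures` module, and the functions `scale_chunk`, `update_ai` and `mix_sound` are hypothetical stand-ins. In CPython the global interpreter lock also keeps such threads from speeding up pure CPU work, so the sketch only shows the shape of the two decompositions.

```python
from concurrent.futures import ThreadPoolExecutor


def scale_chunk(values, factor):
    return [v * factor for v in values]


def loop_level(values, factor, workers=2):
    """Fine grain: one loop, its iterations split into chunks across workers."""
    chunk = max(1, (len(values) + workers - 1) // workers)
    chunks = [values[i:i + chunk] for i in range(0, len(values), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() keeps the chunk order, so the result order matches the input.
        parts = list(pool.map(scale_chunk, chunks, [factor] * len(chunks)))
    return [v for part in parts for v in part]


def update_ai(state):
    return state + 1  # hypothetical stand-in for an AI step


def mix_sound(samples):
    return sum(samples)  # hypothetical stand-in for a sound mixer


def function_level(state, samples):
    """Large grain: two unrelated functions run as separate tasks."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        ai = pool.submit(update_ai, state)
        sound = pool.submit(mix_sound, samples)
        return ai.result(), sound.result()
```

Loop-level threading leaves the program structure alone and only divides the iterations of one hot loop, while function-level threading requires the programmer to identify whole tasks that can proceed independently.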

The newest Intel compilers are even capable of Auto-Parallelization. That sounds fantastic - could multithreading be as easy as using the right compiler? After all, Intel's compiler is able to vectorize existing FP code too. Just recompile your FP intensive code with the right compiler flags and you get speed-ups of 100% and more, as the Intel compiler is able to replace x87 instructions with faster SSE-2 alternatives.
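Whether a loop can be split across threads at all depends on whether its iterations are independent. The Python sketch below illustrates that idea with two hypothetical loops; it is not a description of how Intel's compiler performs its analysis. `run_in_halves` mimics a naive partitioning by running the same loop separately on each half of the data.

```python
def squares(values):
    # Each iteration reads only its own input element, so the iterations
    # are independent and could run in any order, or in parallel.
    out = [0] * len(values)
    for i in range(len(values)):
        out[i] = values[i] * values[i]
    return out


def running_total(values):
    # Each iteration reads the result of the previous one (a loop-carried
    # dependency), so naively splitting the iterations changes the result.
    out = [0] * len(values)
    for i in range(len(values)):
        out[i] = values[i] + (out[i - 1] if i > 0 else 0)
    return out


def run_in_halves(loop, values):
    """Run `loop` separately on each half, as a naive partitioning would."""
    mid = len(values) // 2
    return loop(values[:mid]) + loop(values[mid:])


data = [1, 2, 3, 4]
safe = run_in_halves(squares, data) == squares(data)
unsafe = run_in_halves(running_total, data) == running_total(data)
```

Here `safe` is true and `unsafe` is false: the first loop survives being cut in two, the second does not. A parallelizing tool has to prove the first situation before it may generate threaded code.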

Let us see what Intel says about auto-parallelization:

"Improve application performance on multiprocessor systems using auto-parallelization for automatic threading of loops. This option detects parallel loops capable of being executed safely in parallel and automatically generates multi-threaded code. Automatic parallelization relieves the user from having to deal with the low-level details of iteration partitioning, data sharing, thread scheduling and synchronizations. It also provides the benefit of the performance available from multiprocessor systems and systems that support Hyper-Threading Technology."So, it is just a matter of using the right tools? A chicken and egg problem? When the hardware is there, the software will follow? Is it just a matter of having the right tools and enough market penetration of multi-core CPUs? We asked Tim Sweeney, founder of Epic and a multi-threaded game engine programming guru.

49 Comments

hzmonte - Tuesday, October 4, 2005 - link

For everyone's convenience, here is Part 3: http://www.anandtech.com/cpuchipsets/showdoc.aspx?...

bmayer - Friday, March 25, 2005 - link

About the automatic parallelization:

It can be fairly easy to do. I work on some Cray X1 and X1es. A little bit about the X1. The processors (called Multi-Streaming Processors (MSP)) are made up of 4 Single Streaming Processors (SSP). They are vector units with a length of 64 or 32 (can't remember, the point is they are good sized vector units). The processors are clocked at 800MHz for the X1 or 1.3GHz if you have an X1e.

Ok so what do we see? These CPUs *suck* if your code is not vectorized and running in parallel.

Guess what the Cray compiler does? Automatically vectorizes, streams (takes advantage of a full MSP instead of a single SSP), and parallelizes.

They lay out very clearly what the conditions are where the compiler can NOT optimize, and give you directives where you can force it to do so. You can also get a listing of why it did not do a given optimization for any given line. Actually it gives you all information by default which combined with grep is nice.

OK so there are different types of parallelism, and the one I have just talked about is different than what they are trying to do. I have been talking about speeding up the execution of some inner loop, which is very different from doing two different things at the same time (AI module and sound module running at the same time). BUT this can still be used to great effect. When the inner loops execute in half the time they take on a single core/CPU machine, we have more time to do other things, and thus see a speed improvement.

I have thought that Sony/IBM should get in touch with Cray to supply compiler tech for the Cell processor. If the Cell is as easy to write parallel code for as the X1 is we will have some very awesome games, and clusters of PS3s.

If you want to see a very nice overview of processor history and some of the crazy things people are proposing to do with the multi-cores check out ftp://ftp.cs.wisc.edu/sohi/talks/2003/pact.pdf

I agree that parallel C++ is just not happening very well. There are languages like UPC which are starting to gain hold in the HPC market, which *could* find some use in the game market. But as the state of the art stands it is Fortran which is really great for automatically generating parallel code. But who could seriously say that someone outside of engineering writes code in Fortran?

Great article, lots to think about!

blckgrffn - Friday, March 18, 2005 - link

Loved it. All of it. Especially the interview with Sweeney - it is always nice to hear where the future *will* lie with regards to at least one major application/game. Now, just get an interview with Carmack, and I will be happy for a long time... :D

Nat

Caleb Jasin - Friday, March 18, 2005 - link

#41

Sorry for the late reply.

And yeah pthreads are not threads really. They are processes. When you call the pthread_create() function you create a new process ;)

ravedave - Wednesday, March 16, 2005 - link

Sorry to double post. You could easily make a benchmark that saw 1000000000% speed increase. Take one application, give it high priority and have it loop for 5 days. It would lock everything else up. Throw in a second processor and you no longer have that problem, hence a huge speedup in the other processes. I don't trust any numbers from any manufacturer.

ravedave - Wednesday, March 16, 2005 - link

Excellent article. Extremely excellent. I like the fact that you mentioned GUI updates, most people forget that almost all applications are multi-threaded as far as GUI/core go. I really think that Microsoft is on the right track with .NET though. I believe .NET 2 or 2.5 will really take multithreading to the next level.

RockHydra11 - Tuesday, March 15, 2005 - link

My fear is that instead of creating new architectures for their processors to increase performance, they will just shove more cores on it and pass it off on people.

Verdant - Tuesday, March 15, 2005 - link

#40

i do see a shift to something like C# but anything that brings a "performance" hit, is likely to scare away developers, especially since on non-windows platforms the hit is pretty huge atm.

you are right that a compiler probably isn't an answer, i was merely stating that if the industry was dedicated to creating a "deserializing" compiler it would be possible, extremely complex, and probably technically more than a "compiler" but still possible...

also you are thinking of UNIX, the linux kernel has supported threads ever since i can remember, take a look at the pthreads and linuxthreads (glibc2) libraries

Caleb Jasin - Tuesday, March 15, 2005 - link

#35

Yeah agreed, from my own experience C# threading is much easier than threading in C. And I would say it is the same for most code. Developing in C# is generally much faster than in C or C++. And the tests I have seen show about a 10% performance hit between optimal C# and C++ code. So I think it is just a question of time before we see games coded primarily in C#. In the end, the time saved could be used to write more optimal code I guess, so maybe the performance hit would be negligible.

However, I don't think that we will see compilers that are smart enough to multithread code any time soon. I wrote a very simple compiler for a very simple language in university and coding compilers is extremely complicated. As Tim says, they aren't threading game logic and that wouldn't make much sense either because there are too many dependencies. And even though threading takes a lot of time, there is a lot of relatively easily parallelizable code in games.

Btw, there is a small error in the article. It says that Linux has thread support. It really doesn't. A thread in Linux is a process. There is no difference at kernel level between starting a new thread and forking a new process.

melgross - Tuesday, March 15, 2005 - link

Don't forget that the idea behind these game engines is the reusability of the code. What I mean is that they will first tackle the problems that Sweeney thought most important, and easier, and then, one by one, the harder problems will be resolved. This might take years, but performance increases are always going to be appreciated. Competing products are always going to put pressure on each other.

Ten years from now the discussion will be about how they accomplished all of this.

While dual-cored GPUs have never been used, since that is just now becoming a viable technology, dual and quad GPUs have been used for many years now on the high end boards. Not the gamer boards that we see for $500 and below.