Gigabyte Dual GPU: nForce4, Intel, and the 3D1 Single Card SLI Tested

by Derek Wilson on January 6, 2005 4:12 PM EST - Posted in GPUs

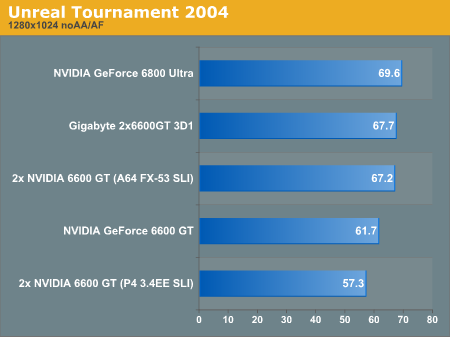

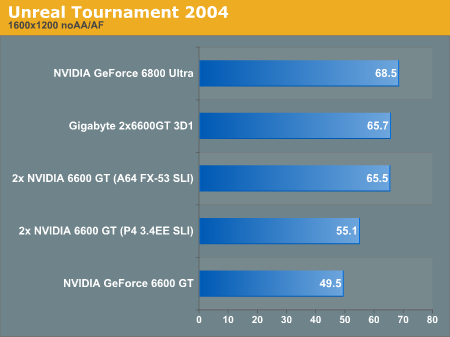

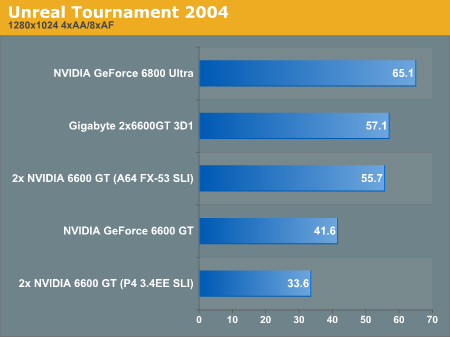

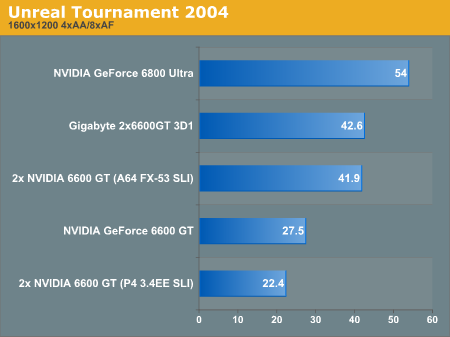

Unreal Tournament 2004 Performance

In a DX8.1 game, the 3D1 and 6600GT SLI solutions still run neck and neck, while the Intel SLI setup comes in slower than a single 6600GT on the AMD platform.

Kicking on AA and AF only pushes the Intel solution's performance lower.

43 Comments


johnsonx - Friday, January 7, 2005

To #19: from page 1:

"....even if the 3D1 didn't require a special motherboard BIOS in order to boot video..."

In other words, the mainboard BIOS has to do something special to deal with a dual-GPU card, or at least the current implementation of the 3D1.

What NVidia should do is:

1. Update their drivers to allow SLI any time two GPUs are found, whether they are on two boards or one.

2. Standardize whatever BIOS support is required for the dual GPU cards to POST properly, and include the code in their reference BIOS for the NForce4.

At least then you could run a dual-GPU card on any NForce4 board. In turn, quad-GPU setups might become possible on an SLI board.

bob661 - Friday, January 7, 2005

#19: I think the article mentioned that a special BIOS is needed to run this card. Right now only Gigabyte has this BIOS.

pio!pio! - Friday, January 7, 2005

#18: use a laptop.

FinalFantasy - Friday, January 7, 2005

Poor Intel :(

jcromano - Friday, January 7, 2005

From the article, which I enjoyed very much: "The only motherboard that can run the 3D1 is the GA-K8NXP-SLI."

Why exactly can't the ASUS SLI board (for example) use the 3D1? Surely not just because Gigabyte says it can't, right?

Cheers,

Jim

phaxmohdem - Friday, January 7, 2005

ATI Rage Fury MAXX. Nuff said... lol. #6, I think you're on to something, though. Modern technology is becoming incredibly power hungry, and I think more steps need to be taken to reduce power consumption and heat production. However, with the current pixel-pushing slugfest we are witnessing, FPS has obviously displaced these two worries for our beloved video card manufacturers. At some point, though, when consumers refuse to buy the latest GeForce or Radeon card with a heatsink taking up four extra PCI slots, I think they will get the hint. I personally consider a dual-slot heatsink solution ludicrous.

Nvidia, ATI, Intel, AMD... STOP RAISING MY ELECTRICITY BILL AND ROOM TEMPERATURE!!!!

KingofCamelot - Friday, January 7, 2005

#16: I'm tired of you people acting like SLI is only doable with an NVIDIA motherboard, which is obviously not the case. SLI only applies to the graphics cards; on motherboards, SLI is just an NVIDIA marketing term. Any board with two 16x PCI-E connectors can pull off SLI with NVIDIA graphics cards. NVIDIA's solution is unique because they were able to split a 16x link and give each connector 8x bandwidth. Other motherboard manufacturers are doing 16x and 4x.

sprockkets - Thursday, January 6, 2005

I'm curious how all those lame Intel configs from Dell and others pulled off SLI long before this motherboard came out.

Regs - Thursday, January 6, 2005

Once again, history repeats itself. Dual-core SLI solutions are still a far reach from reality.

Lifted - Thursday, January 6, 2005

Dual 6800GTs???? hahahahahehhehehehahahahah. Not laughing at you, but those things are so hot you'd need a 50-pound copper heatsink on the beast with 4 x 20,000 RPM fans running full bore just to prevent a China Syndrome.

Somebody say dual core? Maybe with GeForce 2 MX series cores.