Gigabyte Dual GPU: nForce4, Intel, and the 3D1 Single Card SLI Tested

by Derek Wilson on January 6, 2005 4:12 PM EST. Posted in GPUs

Half-Life 2 Performance

Unfortunately, we were unable to test the Intel platform under Half-Life 2. We aren't quite sure how to explain the issue we were seeing, but when we tried to run the game, the screen would flash between each frame, and there were other visual issues as well. Because of these problems, performance was not comparable to our other systems. Short of a hard crash, this was the worst kind of problem we could have seen. It is quite possible that NVIDIA fixed this issue on Intel systems somewhere between the 71.20 and 66.81 drivers, but we are unable to test any other driver at this time.

We are also not including the 6800 Ultra scores, as the numbers that we were using as a reference (from our article on NVIDIA's official SLI launch) were run using the older version 6 of Half-Life 2 as well as older (different) versions of our timedemos.

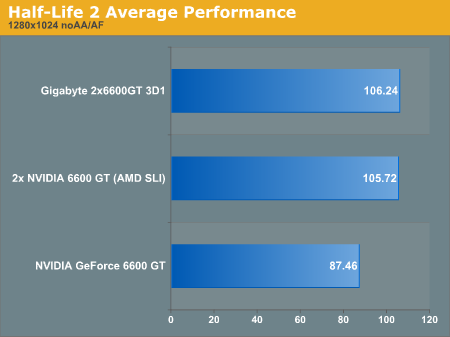

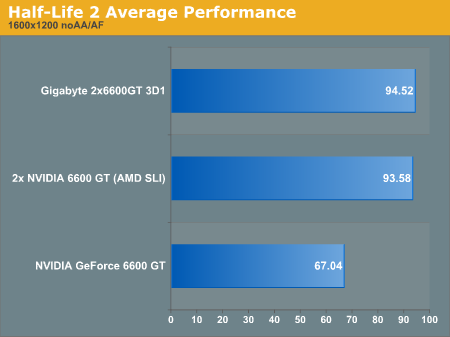

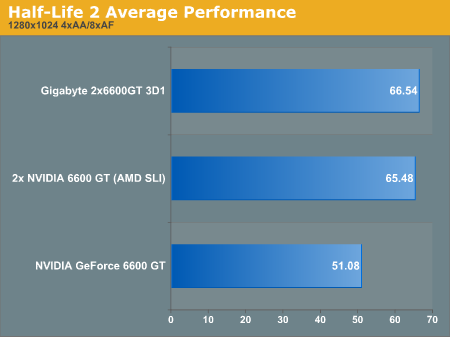

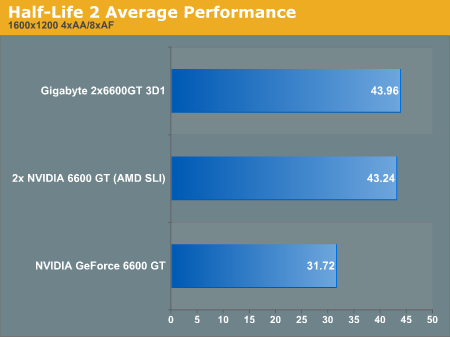

Continuing our trend with Half-Life 2 and graphics card tests, we've benched the game in five different levels at two resolutions, with and without 4xAA/8xAF enabled. We've listed the raw results in these tables for those who are interested. For easier analysis, we've taken the average performance across the five level tests and compared the result in our graphs.

| Half-Life 2 1280x1024 noAA/AF (fps) | at_c17_12 | at_canals_08 | at_coast_05 | at_coast_12 | at_prison_05 |
| --- | --- | --- | --- | --- | --- |
| NVIDIA GeForce 6600 GT | 76.1 | 71.3 | 115 | 94.9 | 80 |
| 2x NVIDIA 6600 GT (AMD SLI) | 77.2 | 98.8 | 118.5 | 117.5 | 116.6 |
| Gigabyte 2x6600GT 3D1 | 77.3 | 99.1 | 118.7 | 117.9 | 118.2 |

| Half-Life 2 1600x1200 noAA/AF (fps) | at_c17_12 | at_canals_08 | at_coast_05 | at_coast_12 | at_prison_05 |
| --- | --- | --- | --- | --- | --- |
| NVIDIA GeForce 6600 GT | 61.1 | 55.7 | 91.5 | 69.3 | 57.6 |
| 2x NVIDIA 6600 GT (AMD SLI) | 73.5 | 85.8 | 110.8 | 104.9 | 92.9 |
| Gigabyte 2x6600GT 3D1 | 73.6 | 87 | 111.4 | 106 | 94.6 |

| Half-Life 2 1280x1024 4xAA/8xAF (fps) | at_c17_12 | at_canals_08 | at_coast_05 | at_coast_12 | at_prison_05 |
| --- | --- | --- | --- | --- | --- |
| NVIDIA GeForce 6600 GT | 40.5 | 40.1 | 74.8 | 54.9 | 45.1 |
| 2x NVIDIA 6600 GT (AMD SLI) | 45.2 | 47.8 | 92.4 | 81 | 61 |
| Gigabyte 2x6600GT 3D1 | 45.7 | 47.8 | 94.1 | 82.8 | 62.3 |

| Half-Life 2 1600x1200 4xAA/8xAF (fps) | at_c17_12 | at_canals_08 | at_coast_05 | at_coast_12 | at_prison_05 |
| --- | --- | --- | --- | --- | --- |
| NVIDIA GeForce 6600 GT | 27.3 | 27.2 | 43.3 | 32.7 | 28.1 |
| 2x NVIDIA 6600 GT (AMD SLI) | 33.8 | 35.3 | 58.1 | 49.2 | 39.8 |
| Gigabyte 2x6600GT 3D1 | 33.8 | 35.3 | 59.3 | 50.8 | 40.6 |

The 3D1 averages about one half to one frame per second higher than two stock-clocked 6600 GTs in SLI mode. At frame rates near 100 fps, that difference is effectively nothing. Even the overclocked RAM doesn't help the 3D1 here.
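For readers who want to check the averaging themselves, here is a minimal sketch that recomputes the five-level averages from the two noAA/AF tables and takes the difference between the 3D1 and the SLI pair. The names `NO_AA_RESULTS`, `average_gap`, and `gaps` are made up for this illustration, and a plain arithmetic mean is assumed.

```python
from statistics import mean

# Frame rates (fps) copied from the two noAA/AF tables above, in level order:
# at_c17_12, at_canals_08, at_coast_05, at_coast_12, at_prison_05
# (dictionary layout and key names are illustrative assumptions)
NO_AA_RESULTS = {
    "1280x1024": {
        "sli": [77.2, 98.8, 118.5, 117.5, 116.6],
        "3d1": [77.3, 99.1, 118.7, 117.9, 118.2],
    },
    "1600x1200": {
        "sli": [73.5, 85.8, 110.8, 104.9, 92.9],
        "3d1": [73.6, 87.0, 111.4, 106.0, 94.6],
    },
}


def average_gap(scores):
    """Return the 3D1's five-level average minus the SLI pair's average, in fps."""
    return mean(scores["3d1"]) - mean(scores["sli"])


# Average lead of the 3D1 over 2x 6600 GT SLI at each resolution.
gaps = {res: average_gap(scores) for res, scores in NO_AA_RESULTS.items()}

for res, gap in gaps.items():
    print(f"{res} noAA/AF: 3D1 leads by {gap:.2f} fps on average")
```

With the table values, the gap works out to roughly 0.5 fps at 1280x1024 and 0.9 fps at 1600x1200.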

We see more of the same when we look at performance with anti-aliasing and anisotropic filtering enabled. The Gigabyte 3D1 performs on par with two 6600 GT cards in SLI. Since the 3D1's RAM is overclocked, this means that the bottleneck under HL2 is somewhere else when running in SLI mode. Under single-GPU conditions, we've seen GPU and RAM speed impact HL2 performance pretty evenly.
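To put "on par" into numbers, the sketch below expresses the 3D1's lead over the SLI pair as a percentage for each level, using the two 4xAA/8xAF tables. The helper `percent_gain` and the variable names are hypothetical and exist only for this example.

```python
# Level order used in every list below.
LEVELS = ["at_c17_12", "at_canals_08", "at_coast_05", "at_coast_12", "at_prison_05"]

# Frame rates (fps) copied from the two 4xAA/8xAF tables above.
# (variable names are illustrative assumptions)
SLI_AA = {
    "1280x1024": [45.2, 47.8, 92.4, 81.0, 61.0],
    "1600x1200": [33.8, 35.3, 58.1, 49.2, 39.8],
}
CARD_3D1_AA = {
    "1280x1024": [45.7, 47.8, 94.1, 82.8, 62.3],
    "1600x1200": [33.8, 35.3, 59.3, 50.8, 40.6],
}


def percent_gain(new, baseline):
    """Return the per-level gain of `new` over `baseline`, in percent."""
    return [(n / b - 1.0) * 100.0 for n, b in zip(new, baseline)]


# Per-level lead of the 3D1 over 2x 6600 GT SLI at each resolution.
gains = {res: percent_gain(CARD_3D1_AA[res], SLI_AA[res]) for res in SLI_AA}

for res, per_level in gains.items():
    for level, gain in zip(LEVELS, per_level):
        print(f"{res} 4xAA/8xAF {level}: 3D1 ahead by {gain:.1f}%")
```

On these numbers the 3D1's lead stays within a few percent on every level, and two levels show no difference at all.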

43 Comments

sprockkets - Friday, January 7, 2005

Thanks for the clarification. But also some were using the Server Intel chipset cause it had 2 16x slots, instead of the desktop chipset to use SLI. Like the article said though, the latest drivers only like the nvidia sli chipset.

ChineseDemocracyGNR - Friday, January 7, 2005

#29, the 6800GT PCI-E is probably going to use a different chip (native PCI-E) than the broken AGP version.

One big problem with nVidia's SLI that I don't see enough people talking about is this:

http://www.pcper.com/article.php?aid=99&type=e...

Jeff7181 - Friday, January 7, 2005

Why is everyone thinking dual core CPUs and dual GPU video cards are so far fetched? Give it 6-12 months and you'll see it.

RocketChild - Friday, January 7, 2005

I seem to recall ATi was frantically working on a solution like this to bypass Nvidia's SLI solution and I am not reading anything about their progress. Since the article points to BIOS hurdles, does it look like we are going to have to wait for ATi to release their first chipset to support a multi-GPU ATi card? Anyone here have any information or speculations?

LoneWolf15 - Friday, January 7, 2005

25, the reason you'd want to buy two 6600GT's instead of one 6800GT is that PureVideo functions work completely on the 6600GT, whereas they are partially broken on the 6800GT. If this solution didn't work in only Gigabyte boards, I'd certainly consider it myself.

skiboysteve - Friday, January 7, 2005

I'm confused as to why anyone would buy this card at all. You're paying the same price as a 6800GT and getting the same performance with all the issues that go with Gigabyte SLI. thats retarded.

ceefka - Friday, January 7, 2005

Are there any cards available for the remaining PCI-E slots?

Ivo - Friday, January 7, 2005

Obviously, the future belongs to the matrix CPU/GPU (IGP?) solutions with optimized performance/power consumption ratios. But there is still a relatively long way (2 years?) to go. The recent NVIDIA's NF4-SLI game is more marketing than technical in nature. They are simply checking the market, concurrence, and … enthusiastic IT society :-) The response is moderate, as the challenge is. But the excitements are predetermined.

Happy New Year 2005!

PrinceGaz - Friday, January 7, 2005

I don't understand why anyone would want to buy a dual-core 6600GT rather than a similarly priced 6800GT.

DerekWilson - Friday, January 7, 2005

I apologize for the omission of pictures from the article on publication. We have updated the article with images of the 3D1 and the K8NXP-SLI for your viewing pleasure.

Thanks,

Derek Wilson