Inno3D

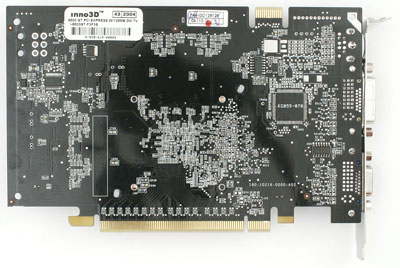

The Inno3D card didn't overclock the highest, has the loudest fan, and isn't the coolest card of the bunch (though it was competitive with other box-shaped HSF solutions). But the one thing that Inno3D did better than everyone else we looked at was the way they attached their heatsink to the board. The HSF doesn't move and makes excellent contact with the GPU.

The sound of the HSF had a higher-pitched, hair-dryer quality to it, though we are still talking about a mid-range card level fan speed. This was the loudest card that we ran, but that speaks well for GeForce 6600 GT cards. On the upside, the heatsink is all copper, as are the ramsinks. They also used 1.6ns GDDR3 on their board, but clocked it at 500MHz. A 1.6ns part is nominally rated for 625MHz, so a 600MHz memory clock speed should be achievable even though we were unable to push it that high.
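As a rough sanity check on the memory headroom, a chip's rated clock is the reciprocal of its cycle time. The following is a minimal Python sketch, assuming the 1.6ns figure is the rated cycle time; the helper name `rated_clock_mhz` is hypothetical and only there for illustration.

```python
def rated_clock_mhz(cycle_time_ns: float) -> float:
    """Convert a rated cycle time in nanoseconds to a clock in MHz.

    f = 1 / t, and 1 / (1 ns) = 1000 MHz.
    """
    return 1000.0 / cycle_time_ns


# Assumption: 1.6 ns is the rated cycle time of the GDDR3 on this board.
rated = rated_clock_mhz(1.6)   # roughly 625 MHz
shipped = 500.0                # memory clock the card ships at, in MHz
target = 600.0                 # overclock that "should be achievable"

print(f"rated clock:      {rated:.0f} MHz")
print(f"headroom (rated): {rated - shipped:.0f} MHz over the shipped clock")
print(f"target in spec:   {target <= rated}")
```

The rating only describes what the memory chips are specified for; the board, the memory controller, and cooling can all limit the clock that is actually stable.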

84 Comments

Pete - Friday, December 10, 2004 - link

Obviously Derek OCed himself to get this article out, and he's beginning to show error. Better bump your (alarm) clocks down 10MHz (an hour) or so, Derek.

pio!pio! - Friday, December 10, 2004 - link

Noticed a typo. At one point you wrote 'clock stock speed' instead of 'stock clock speed'. Easy mistake.

Pete - Friday, December 10, 2004 - link

Another reason to narrow the distance b/w the mic and the noise source is that some of these cards may go into SFFs, or cases that sit on the desk. 12" may well be more indicative of the noise level those users would experience.

Pete - Friday, December 10, 2004 - link

Great article, Derek!

As usual, I keep my praise concise and my constructive criticism elaborate (although I could argue that the fact that I keep coming back is rather elaborate praise :)). I think you made the same mistake I made when discussing dB and perceived noise, confusing power with loudness. From the following two sources, I see that a 3dB increase equates to 2x more power, but is only 1.23x as loud. A 10dB increase corresponds to 10x more power and a doubling of loudness. So apparently the loudest HSFs in this roundup are "merely" twice as loud as the quietest.

http://www.gcaudio.com/resources/howtos/voltagelou...

http://www.silentpcreview.com/article121-page1.htm...
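The dB figures quoted in this comment can be reproduced with a short calculation. This is a minimal Python sketch: the power ratio follows from the definition of the decibel, while the loudness ratio uses the common rule of thumb that perceived loudness doubles for every 10dB, which is an approximation rather than an exact law. The function names are hypothetical.

```python
def power_ratio(delta_db: float) -> float:
    """Sound power ratio for a level difference in dB (definition of the dB)."""
    return 10 ** (delta_db / 10)


def loudness_ratio(delta_db: float) -> float:
    """Approximate perceived loudness ratio.

    Assumption: the rule of thumb that +10 dB sounds about twice as loud.
    """
    return 2 ** (delta_db / 10)


for delta in (3, 10):
    print(
        f"+{delta} dB: {power_ratio(delta):.2f}x power, "
        f"about {loudness_ratio(delta):.2f}x as loud"
    )
```

For a 3dB step this gives roughly 2x the power but only about 1.23x the perceived loudness, in line with the numbers above.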

Speaking of measurements, do you think 1m is a bit too far away, perhaps affording less precision than, say, 12"?

You might also consider changing the test system to a fanless PSU (Antec and others make them), with a Zalman Reserator cooling the CPU and placed at as great a distance from the mic as possible. I'd also suggest simply laying the test system out on a piece of (sound-dampening) foam, rather than fitting it in a case (with potential heat trapping and resonance). The HD should also be as quiet as possible (2.5"?).

I still think you should buy these cards yourselves, a la Consumer Reports, if you want true samples (and independence). Surely AT can afford it, and you could always resell them in FS/FT for not much of a loss.

Anyway, again, cheers for an interesting article.

redavnI - Thursday, December 9, 2004 - link

Very nice article, but is there any chance we could get a part 2 with any replacement cards the manufacturers send? I'd also like to see the Pine card reviewed. It's being advertised as the Anandtech Deal at the top of this article and has dual DVI like the XFX card. Kind of odd that one of the only cards not reviewed gets a big fat buy me link.

To me it seems that with the 6600GT/6800 series Nvidia has their best offering since the GeForce4 Ti's...I'm sure I'm not the only one still hanging on to my Ti4600.

Filibuster - Thursday, December 9, 2004 - link

Something I've just realized: The Gigabyte NX66T256D is not a GT yet supports SLI. Are they using a GT that can't run at the faster speeds and selling it as a 6600 standard? It has 256MB.

We ordered two from a vendor who said it definitely does SLI.

http://www.giga-byte.com/VGA/Products/Products_GV-...

Can you guys find out for sure?

TrogdorJW - Thursday, December 9, 2004 - link

Derek, the "enlarged images" all seem to be missing, or else the links are somehow broken. I tested with Firefox and IE6 and neither one would resolve the image links.

Other than that, *wow* - who knew HSFs could be such an issue? I'm quite surprised that they are only secured at two corners. Would it really have been that difficult to use four mount points? The long-term prospects for these cards are not looking too good.

CrystalBay - Thursday, December 9, 2004 - link

Great job on the quality control inspections of these cards, D.W. Hopefully IHVs take notice and resolve these potentially damaging problems.

LoneWolf15 - Thursday, December 9, 2004 - link

I didn't see a single card in this review that didn't have a really cheesy looking fan...the type that might last a couple years if you're really lucky, but might last six months on some cards if you're not. The GeForce 6600GT is a decent card; for $175-250 (depending on PCIe or AGP) you'd think vendors would put on a fan deserving of the price. My PNY 6800NU came with a squirrel-cage fan and super heavy heatsink that I know will last. Hopefully, Arctic Cooling will come out with an NV Silencer soon for the 6600 family; I wouldn't trust any of the fans I saw here to last.

Filibuster - Thursday, December 9, 2004 - link

What quality settings were used in the games? I am assuming that Doom 3 is in medium since these are 128MB cards.

I've read that there are some 6600GT 256MB cards coming out (Gigabyte GV-NX66T256D and MSI 6600GT-256E, maybe more). Please show us some tests with the 256MB models once they hit the streets (or if you know they are definitely not coming, please tell us that too).

Even though the cards only have a 128-bit bus, wouldn't the extra RAM help out in places like Doom 3 where texture quality is a matter of RAM quantity? The local video RAM still has to be faster than fetching it from system RAM.