Doom3 Linux and Windows Battlegrounds

by Kristopher Kubicki on October 13, 2004 12:50 AM EST - Posted in Linux

Full Screen Anti Aliasing

An interesting and helpful quality of the AnandTech FrameGetter is that it always records the first frame of a timedemo, so long as the timedemo takes more than 2 seconds to load. This makes sense, since the screen before the timedemo is almost always a static screen that just says "loading"; nothing new is output from the frame buffer. In any case, this provides us with an excellent opportunity to do some very neat IQ testing.

Curiously, our NVIDIA drivers have a slider for 16X AA. This is generally unsupported outside of the Quadro cards for NVIDIA on Windows. In fact, we can enable 2X Bilinear, 2X Quincunx, 4X Bilinear, 4X 9-tap Gaussian, 8X or 16X AA. Attempting to run Doom3 on 16X AA resulted in less than 10FPS during the demo1 timedemo. What follows is a simple analysis of the image below under various AA settings.

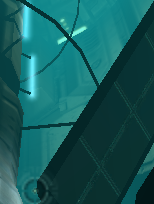

The screenshot image that we are using for analysis can be seen below in 16X AA. Feel free to download our AA raw data files here.

Now, we look at a smaller piece of the puzzle for each image.

| AA Setting | Image (mouse-over for No AA) | Difference Map (click to enlarge) |
| --- | --- | --- |
| No AA |  |  |
| 2X AA Bilinear |  |  |
| 2X AA Quincunx |  |  |
| 4X AA Bilinear |  |  |
| 4X AA 9-tap Gaussian |  |  |
| 8X AA |  |  |
| 16X AA |  |  |

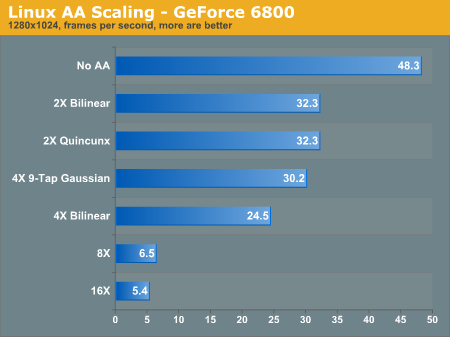

16X is clearly working on our Linux machine, to the advantage of our Linux users over our Windows users. Performance is abysmal, but it's not something that we are totally concerned about right now, since abysmal performance and Doom3 tend to go hand in hand a lot. The graph below demonstrates how AA affected performance in our demo1 timedemo on the 6800 for 1280x1024.

It should be noted that when setting AA higher than 4X on our GeForce 6800 cards, the screen would occasionally corrupt into a static/snowy image, and then freeze our entire machine.

It seems that our sweet spot for FSAA on Doom3 is right in the 4X 9-Tap Gaussian mode. 16X AA looks amazing; there is a clear visual difference. However, the performance gap is extremely noticeable. Below, we have provided a difference map of 4X 9-Tap and 16X. The shadow seems considerably more sampled; it looks less artificial now.

Although the difference between the two screenshots is definitely visible, it seems that 4X Gaussian does a fairly good job of cleaning up the jagged edges around the sides of the machine. The real difference seems to occur right in the center, where the 16X image really blends the hard edges into a more fluid-looking object.
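For readers who want to build a similar difference map from the raw data files, the idea can be sketched in a few lines of Python. This is only a minimal illustration, not the tool used for this article: the function names `difference_map` and `changed_fraction` are hypothetical, and the images are assumed to be already decoded into rows of `(r, g, b)` tuples of equal size.

```python
def difference_map(img_a, img_b):
    """Return per-pixel absolute differences between two equal-sized RGB images.

    Each image is a list of rows; each row is a list of (r, g, b) tuples.
    (Hypothetical helper, assumed layout; not the article's actual tool.)
    """
    same_height = len(img_a) == len(img_b)
    same_widths = all(len(ra) == len(rb) for ra, rb in zip(img_a, img_b))
    if not (same_height and same_widths):
        raise ValueError("images must have the same dimensions")
    return [
        [tuple(abs(ca - cb) for ca, cb in zip(pa, pb))
         for pa, pb in zip(row_a, row_b)]
        for row_a, row_b in zip(img_a, img_b)
    ]


def changed_fraction(diff):
    """Fraction of pixels that differ in at least one color channel."""
    pixels = [p for row in diff for p in row]
    if not pixels:
        return 0.0
    return sum(1 for p in pixels if any(p)) / len(pixels)


# Tiny made-up 2x2 "screenshots": only the top-right pixel differs.
no_aa = [[(0, 0, 0), (255, 255, 255)], [(0, 0, 0), (0, 0, 0)]]
with_aa = [[(0, 0, 0), (128, 128, 128)], [(0, 0, 0), (0, 0, 0)]]

diff = difference_map(no_aa, with_aa)
print(diff[0][1])              # (127, 127, 127)
print(changed_fraction(diff))  # 0.25
```

A map that is zero everywhere means the two screenshots are pixel-identical; brighter values mark the edges where the two AA modes disagree.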

36 Comments


Guspaz - Thursday, October 14, 2004

I'm sorry, I snapped. The tone of my post was uncalled for.

I still believe, however, that further investigation is in order.

LittleKing - Thursday, October 14, 2004

I've said it before and I'll say it again, the rollover images don't work with FireFox. It's a shame Anand won't support it I guess.

LK

KristopherKubicki - Wednesday, October 13, 2004

Guspaz: We get different frame rates with 8X and 16X. Even if they produce the same image, I suspect they use different algorithms. I don't know why that would seem unprofessional?

Kristopher

walmartshopper - Wednesday, October 13, 2004

#20... If it's not doing 16x, how do you explain the performance drop? Just because the images are the exact same does not mean it's not doing the work. There's a limit to antialiasing, meaning there's a limit to how smooth an edge can be. I think in many cases, 8x hits that limit, and 16x is just overkill. But just because there's no difference in this particular screenshot doesn't mean there's not ever a difference. I'm sure it makes some kind of difference in most cases, even if only a few pixels. Just imagine if 32xAA existed... compare 16x to 32x and I doubt you would ever be able to find any difference. But that doesn't mean the card isn't doing extra work. With 8x and 16x you are starting to get into the same territory where anything above 8x makes little or no difference.

Sorry, but that post gave me a good laugh.

sprockkets - Wednesday, October 13, 2004

Keep in mind that SuSE uses xfree86 in 9.1 and will go to x.org in the next release, or so it seems by the way the ftp is hinting it will.

walmartshopper - Wednesday, October 13, 2004

I'm getting noticeably better performance on Linux over xp. I run 1600x1200 high quality with 4xAA on a 6800gt.

Linux:

Slackware 10

kernel: custom 2.6.8.1

X: xorg 6.8

NV driver: 6106

Desktop: KDE 3.3 (4 desktops, 4 webcam monitors, amsn, gaim, desktop newsfeeds, and a kicker loaded with apps all running while i play)

xp:

fresh copy with nothing more than a few games installed

NV driver: 66.72

At the same settings, the game feels noticeably smoother on linux. Thanks to ReiserFS, the loading time is also much faster. Sorry for no benchmarks, but I got rid of the windows install after my first time playing on Linux. I had problems with the 6111 driver crashing, but 6106 works flawlessly. (Although I can switch drivers in less than a minute without even rebooting.) I can't wait until nvclock supports overclocking on the 6800 series.

I'm a little disappointed that all the testing was done on SuSE. The beauty of Linux is being able to customize and optimize just about anything. I realize that SuSE is a distro that average joe is likely to use, but I think you should also include some scores from a simple, fast, optimized distro/kernel such as Slack or Gentoo to show what Linux is really capable of.

3500+ @ 2.44ghz

1gb ddr @ 444mhz 2.5-3-3-7

k8n neo2 platinum

Guspaz - Wednesday, October 13, 2004

I did a difference on the 8x and 16x AA images.

You're wrong. Your review cards are NOT doing 16xAA. They're doing 8xAA.

Considering how the 8x and 16x images look identical, that should have been your first hint; once you run a diff and find they ARE identical, I'd expect you to know better.

Seriously, make a correction, this looks unprofessional.

Saist - Wednesday, October 13, 2004

#15 - My bad to make such generalizations..but the fact that you can't even use an ATI card to play D3 doesn't bode well for Linux gaming...

****

Keep in mind, ATi is several months, if not a year or more behind Nvidia in supporting Linux. Give ATi time, and things like this will probably become as obsolete as Win95.

Olias - Wednesday, October 13, 2004

At 800x600-high quality, I get 32fps in XP and 45 in Linux. Linux is 13fps (40%) faster.

AMD Athlon XP 3200+

nForce2 chipset

5900XT Video Card

Memory: 2x512MB PC-3200 CL2.5 (400MHz)

Distro: Gentoo Linux

Kernel: 2.6.7-r14

X: xorg-x11-6.7.0-r2

ath50 - Wednesday, October 13, 2004

You list on the first page "Timothee Besset, Linuxgamers.com" as the source of the quote, but it's actually Linuxgames.com; linuxgamers is one of those search pages with popups that tries to reset your homepage.

Just a little thing...I went off to linuxgamers.com first hehe.