NVIDIA's GeForce 6200 & 6600 non-GT: Affordable Gaming

by Anand Lal Shimpi on October 11, 2004 9:00 AM EST. Posted in: GPUs

Although they're seeing very slow adoption among end users, PCI Express platforms are getting out there, and the two graphics giants are wasting no time in shifting the competition for king of the hill over to the PCI Express realm.

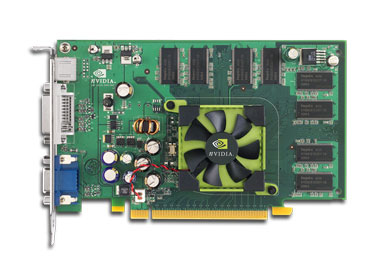

ATI and NVIDIA have both traded shots in the mid-range with the release of the Radeon X700 and GeForce 6600. Today, the battle continues in the entry-level space with NVIDIA's latest launch - the GeForce 6200.

The GeForce 6 series is now composed of 3 GPUs: the high end 6800, the mid-range 6600 and now the entry-level 6200. True to NVIDIA's promise of one common feature set, all three of the aforementioned GPUs boast full DirectX 9 compliance, and thus, can all run the same games, just at different speeds.

What has NVIDIA done to make the 6200 slower than the 6600 and 6800?

For starters, the 6200 features half the pixel pipes of the 6600, and 1/4 that of the 6800. Next, the 6200 will be available in two versions: one with a 128-bit memory bus like the 6600 and one with a 64-bit memory bus, effectively cutting memory bandwidth in half. Finally, NVIDIA cut the core clock on the 6200 down to 300MHz as the final guarantee that it would not cannibalize sales of their more expensive cards.

The 6200 is an NV43 derivative, meaning it is built on the same 0.11-micron (110nm) process as the 6600. In fact, the two chips are virtually identical, with the 6200 having only 4 active pixel pipelines on its die. There is one other architectural difference between the 6200 and the rest of the GeForce 6 family: the memory controller lacks any color or Z-compression support. Color and Z-compression are wonderful ways of reducing the memory bandwidth overhead of enabling features such as anti-aliasing, so without that compression, we can expect the 6200 to take a bigger hit when turning on AA and anisotropic filtering. The mitigating factor is that the 6200 doesn't have the fill rate or the memory bandwidth to run most games at higher resolutions in the first place. Therefore, those who buy the 6200 won't be playing at resolutions where the lack of color and Z-compression would really matter with AA enabled. We'll investigate this a bit more in our performance tests.
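To put a rough number on the fill rate mentioned above, here is a minimal Python sketch. It assumes the common rule of thumb that each pixel pipeline can output one pixel per core clock, which is a theoretical ceiling and not a measured result; the function name is purely illustrative.

```python
def peak_pixel_fill_rate_mpixels(pixel_pipelines: int, core_clock_mhz: float) -> float:
    """Theoretical peak fill rate in megapixels per second.

    Assumption: one pixel per pipeline per core clock (a rule of thumb,
    not a figure taken from NVIDIA's specifications).
    """
    return pixel_pipelines * core_clock_mhz


# GeForce 6200 figures from the article: 4 pixel pipelines at 300MHz.
geforce_6200 = peak_pixel_fill_rate_mpixels(pixel_pipelines=4, core_clock_mhz=300)
print(f"GeForce 6200 theoretical peak fill rate: {geforce_6200:.0f} Mpixels/s")
```

Under that assumption, the 6200 tops out at 1200 Mpixels/s. Real-world throughput will be lower once memory bandwidth and shader work enter the picture.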

Here's a quick table summarizing what the 6200 is and how it compares to the rest of the GeForce 6 family:

| GPU | Manufacturing Process | Vertex Engines | Pixel Pipelines | Memory Bus Width |
| --- | --- | --- | --- | --- |
| GeForce 6200 | 0.11-micron | 3 | 4 | 64/128-bit |
| GeForce 6600 | 0.11-micron | 3 | 8 | 128-bit |
| GeForce 6800 | 0.13-micron | 6 | 16 | 256-bit |

The first thing to notice here is that the 6200 supports either a 64-bit or 128-bit memory bus, and NVIDIA itself will not be distinguishing between cards built with the two memory configurations. While NVIDIA insists that it cannot force its vendor partners to label the two configurations differently, we're more inclined to believe that NVIDIA would simply like all 6200-based cards to be known as a GeForce 6200, regardless of whether or not they have half the memory bandwidth. NVIDIA makes a "suggestion" to its card partners that they add the 64-bit or 128-bit designation somewhere on their boxes, model numbers or website, but it goes no further than being a suggestion.

The next source of variability is clock speed. NVIDIA has "put a stake in the ground" at 300MHz as the desired core clock for 6200 GPUs regardless of configuration, and add-in board vendors would seem to have no reason to clock their 6200s any differently, since they are all paying for a 300MHz part. The variability really comes when you start talking about memory speeds. The 6200 only supports DDR1 memory, which is spec'd to run at 275MHz (effectively 550MHz). However, as we've seen in the past, this is only a suggestion; it is up to the manufacturers whether or not they will use cheaper memory.
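As a back-of-the-envelope illustration of what the bus width means at the spec'd memory clock, the Python sketch below computes theoretical peak memory bandwidth. It assumes two transfers per clock for DDR memory (which is where the "effectively 550MHz" figure comes from) and uses 1GB = 10^9 bytes; the function name is illustrative, and a card shipped with different memory would yield different numbers.

```python
def peak_memory_bandwidth_gbs(bus_width_bits: int,
                              memory_clock_mhz: float,
                              transfers_per_clock: int = 2) -> float:
    """Theoretical peak memory bandwidth in GB/s (1GB = 10**9 bytes).

    Assumption: DDR memory moves data twice per clock, so a 275MHz
    clock behaves like an effective 550MHz data rate.
    """
    bytes_per_transfer = bus_width_bits / 8
    transfers_per_second = memory_clock_mhz * 1e6 * transfers_per_clock
    return bytes_per_transfer * transfers_per_second / 1e9


# Both 6200 configurations at the spec'd 275MHz memory clock.
for bus_width in (64, 128):
    bandwidth = peak_memory_bandwidth_gbs(bus_width, memory_clock_mhz=275)
    print(f"{bus_width}-bit bus: {bandwidth:.1f} GB/s")
```

At the spec'd clock, this works out to roughly 4.4GB/s for the 64-bit card and 8.8GB/s for the 128-bit card, which is why the unlabeled bus width matters to buyers.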

NVIDIA is also only releasing the 6200 as a PCI Express product - there will be no AGP variant at this point in time. The problem is that the 6200 is a much improved architecture compared to the current entry-level NVIDIA card in the market (the FX 5200), yet the 5200 is still selling quite well as it is not really purchased as a hardcore gaming card. In order to avoid cannibalizing AGP FX 5200 sales, the 6200 is kept out of competition by being a strictly PCI Express product. While there is a PCI Express version of the FX 5200, its hold on the market is not nearly as strong as the AGP version, so losing some sales to the 6200 isn't as big of a deal.

In talking about AGP versions of recently released cards, NVIDIA has given us an update on the status of the AGP version of the highly anticipated GeForce 6600GT. We should have samples by the end of this month and NVIDIA is looking to have them available for purchase before the end of November. There are currently no plans for retail availability of the PCI Express GeForce 6800 Ultras - those are mostly going to tier 1 OEMs.

The 6200 will be shipping in November and what's interesting is that some of the very first 6200 cards to hit the street will most likely be bundled with PCI Express motherboards. It seems like ATI and NVIDIA are doing a better job of selling 925X motherboards than Intel these days.

The expected street price of the GeForce 6200 is between $129 and $149 for the 128-bit 128MB version. This price range is just under that of the vanilla ATI X700 and the regular GeForce 6600 (non-GT), both of which are included in our performance comparison - so in order for the 6200 to truly remain competitive, its street price will have to be closer to the $99 mark.

The direct competition to the 6200 from ATI is the PCI Express X300 and X300SE (128-bit and 64-bit versions, respectively). ATI has a bit of a disadvantage here because the X300 and X300SE are still based on the old Radeon 9600 architecture and are not derivatives of the X800 and X700. ATI is undoubtedly working on a 4-pipe version of the X800, but for this review, the advantage is definitely in NVIDIA's court.

44 Comments

Sunbird - Monday, October 11, 2004 - link

bpt8056 - Monday, October 11, 2004 - link

Anand, thanks so much for updating us on the PVP feature in the NV40. I think it's high-time somebody held nVidia accountable for a "broken" feature. Do you know if the PVP is working in the PCI-Express version (NV45)? Any information you can get would be great. Thanks Anand!

mczak - Monday, October 11, 2004 - link

That's an odd conclusion... "In most cases, the GeForce 6200 does significantly outperform the X300 and X600 Pro, its target competitors from ATI."

But looking at the results, the X600Pro is _faster_ in 5 of 8 benchmarks (sometimes significantly), 2 are a draw, and only slower in 1 (DoomIII, by a significant margin). Not to disregard DoomIII, but if you base your conclusion entirely on that game alone why do you even bother with the other titles?

I just can't see why that alone justifies "...overall, the 6200 takes the crown".

There are some other odd comments as well, for instance at the Star Wars Battlefront performance: "The X300SE is basically too slow to play this game. There's nothing more to it. The X300 doesn't make it much better either." Compared to the 6200 which gets "An OK performer;..." but is actually (very slightly) slower than the X300?

gordon151 - Monday, October 11, 2004 - link

"In most cases, the GeForce 6200 does significantly outperform the X300 and X600 Pro, its target competitors from ATI."

Eh, am I missing something or wasn't it the X600 Pro the card that significantly outperformed the 6200 in almost all areas with the exception of Doom3?

dragonic - Monday, October 11, 2004 - link

#6 Why would they drop it because the multiplayer framerate is locked? They benchmark using the single player, not the multiplayer

DAPUNISHER - Monday, October 11, 2004 - link

Thanks Anand! I've been on about the PVP problems with nV40 for months now, and have become increasingly frustrated with the lack of information and/or progress by nV. Now that a major site is pursuing this with vigor I can at least take comfort in the knowledge that answers will be forthcoming one way or another!

Again, thanks for making this issue a priority and emphatically stating you will get more information for us. It's nV vs Anand so "Rumble young man! Rumble!" :-)

AlphaFox - Monday, October 11, 2004 - link

if you ask me, all these low end cards are stupid if you have a PCIe motherboard.. who the heck would get one of these crappy cards if they spent all the money for a brand new PCIe computer??? these cards would be perfect for AGP as they are now going to start to be lower end..

ROcHE - Monday, October 11, 2004 - link

How would a 9800 Pro do against these cards?

ViRGE - Monday, October 11, 2004 - link

Unless LucasArts changes something Anand, you may want to drop the Battlefront test. With multiplayer, the framerate is locked to the tick rate (usually 20FPS), so its performance is nearly irrelevant.

PS #1, he's talking about the full load graph, not the idle graph

teng029 - Monday, October 11, 2004 - link

"For example, the GeForce 6600 is supposed to have a street price of $149, but currently, it's selling for closer to $170. So, as the pricing changes, so does our recommendation."

i have yet to see the 6600 anywhere. pricewatch only lists two aopen cards (both well over 200.00) it and newegg doesn't carry it. i'm curious as to where he got the 170.00 street price.