Intel's Architecture Day 2018: The Future of Core, Intel GPUs, 10nm, and Hybrid x86

by Dr. Ian Cutress on December 12, 2018 9:00 AM EST - Posted in

- CPUs

- Memory

- Intel

- GPUs

- DRAM

- Architecture

- Microarchitecture

- Xe

Going Beyond Gen11: Announcing the XE Discrete Graphics Brand

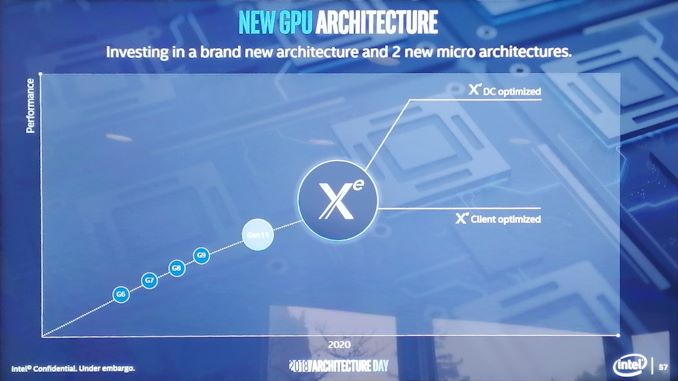

Not content with merely talking about what 2019 will bring, we were given a glimpse into how Intel is going to approach its graphics business in 2020 as well. It was at this point that Raja announced the new product branding for Intel’s discrete graphics business:

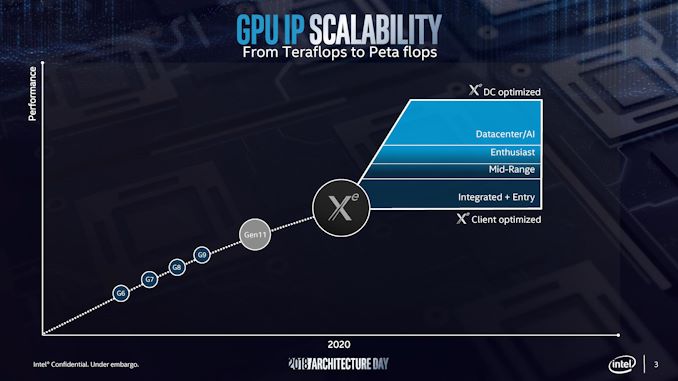

Intel will use the Xe branding for its range of graphics that were unofficially called ‘Gen12’ in previous discussions. Xe will start from 2020 onwards, and cover the range from client graphics all the way to datacenter graphics solutions.

Intel actually divides this market up, showing that Xe also covers future integrated graphics solutions. If this slide is anything to go by, it would appear that Intel wants Xe to stretch from entry-level to mid-range to enthusiast and up to AI, competing with the best the competition has to offer.

Intel stated that Xe will start on Intel’s 10nm technology and that it will fall under Intel’s single stack software philosophy: Intel wants software developers to be able to take advantage of CPU, GPU, FPGA, and AI, all with one set of APIs. This Xe design will form the foundation of several generations of graphics, and shows that Intel is now ready to rally around a brand name moving forward.

There was some confusion with one of the slides, as it would appear that Intel might be using the new brand name to also refer to some of its FPGA and AI solutions. We're going to see if we can get an answer on that in due course.

148 Comments


Spunjji - Thursday, December 13, 2018 - link

They committed to Adaptive Sync back with Skylake, but it's taken this long to see it because they haven't released a new GPU design since then. It would have been a *very* weird move to suddenly release their own tech.

gamerk2 - Thursday, December 13, 2018 - link

I think it's more likely NVIDIA just waits for HDMI 2.1, which supports VRR as part of the specification. I also suspect HDMI 2.1 will eventually kill off DisplayPort entirely; now that HDMI offers more bandwidth, and given DisplayPort is a non-factor in the consumer (TV) market, there really isn't a compelling reason for it to continue to exist alongside HDMI. We *really* don't need competing digital video connector standards, and HDMI isn't going anywhere.

edzieba - Thursday, December 13, 2018 - link

HDMI is fantastic for AV, but has NO PLACE WHATSOEVER for desktop monitors. It causes a multitude of problems due to abusing a standard intended for very specific combinations of resolutions and refresh rates (and completely different colour range and colour space standards), and offers zero benefits. Get it the hell off the back of my GPU where it wastes space that could be occupied by a far more useful DP++ connector.

Icehawk - Thursday, December 13, 2018 - link

Setting all else aside - DP is "better" because the plugs lock IMO. HDMI and mini-DP both have no retention system and that makes it something I do my best to avoid both personally and professionally, love the "my monitor doesn't work" calls when it's just you moved your dock and it wiggled the mini-DP connector.

jcc5169 - Wednesday, December 12, 2018 - link

Intel will be at a perpetual disadvantage because by the time they bring out a 7nm product, AMD will have been delivering for 2 whole years.

shabby - Wednesday, December 12, 2018 - link

You believe TSMC's 7nm is equal to Intel's 7nm?

silverblue - Wednesday, December 12, 2018 - link

7nm != 7nm in this case; in fact, Intel's 10nm process looks to be just as dense as TSMC's 7nm. I think the question is more about how quickly TSMC/GF/Samsung can offer a 5nm process, because I wouldn't expect a manufacturing lead anytime soon (assuming 10nm processors come out on time).

YoloPascual - Wednesday, December 12, 2018 - link

10nm iNTeL iS bEttER tHAn 7nm TSMC???

ajc9988 - Wednesday, December 12, 2018 - link

The nodes are marketing jargon. Intel's 10nm = TSMC 7nm for all intents and purposes. Intel's 7nm = TSMC 5nm/3nm, approximately. TSMC is doing volume 5nm EUV next year, IIRC, for Apple during H2, while working on 7nm EUV for AMD (or something like that) with 5nm being offered in 2020 products alongside 7nm EUV. Intel's current info shows 7nm for 2021 with EUV, but that is about the time that TSMC is going to get 3nm, alongside Samsung, which is keeping up on process roughly alongside TSMC. Intel will never again have a lead like they had. They bet on EUV and partners couldn't deliver, then they just kept doing Skylake refreshes instead of porting designs back to 14nm like the one engineer said he told them to do, and Intel didn't listen.

I see nothing groundbreaking from Intel unless they can solve the cobalt issues, as due to the resistances at the size of the connections at the smaller nodes, cobalt is a necessity. TSMC is waiting to deal with cobalt, same with Samsung, while Intel uses that and ruthenium. Meanwhile, Intel waited so long on EUV to be ready, they gave up waiting and instead are waiting for that to mature while TSMC and Samsung are pushing ahead with it, even with the known mask issues and pellicles not being ready. The race is fierce, but unless someone falters or TSMC and Samsung can't figure out cobalt or other III-V materials when Intel cracks the code, no one will have a clear lead by years moving forward.

And use of an active interposer doesn't guarantee a clear lead, as others have the tech (including AMD) but have chosen not to use it on a cost basis to date. Intel had to push chipsets back onto 22nm plants that were going to be shut down. Now that they cannot be shut down, keeping them full to justify the expense is key, and 22nm active interposers on processes that have been around the better part of the last decade (high yield, low costs due to maturity) are a good way to achieve that goal.

In fact, AMD's cost analysis shows that an active interposer produced at 32nm and below brings the price to the same as doing a monolithic die. That means, since Intel never got a taste of chiplets giving better margins with an MCM, Intel won't feel a hit by going straight for the active interposer, as the cost is going to be roughly what their monolithic dies cost.

porcupineLTD - Thursday, December 13, 2018 - link

TSMC will start risk production of 5nm in late 2019 at the earliest; the next Apple SoC will be 7nm+ (EUV) and so will Zen 3.