The Intel Core i3-7350K (60W) Review: Almost a Core i7-2600K

by Ian Cutress on February 3, 2017 8:00 AM EST

Power Consumption

As with all the major processor launches in the past few years, performance is nothing without good efficiency to go with it. Doing more work for less power is a design mantra across all semiconductor firms, and teaching silicon designers to build for power has been a tough job (they all want performance first, naturally). Of course there might be other tradeoffs, such as design complexity or die area, but no-one ever said designing a CPU through to silicon was easy. Most semiconductor companies that ship processors do so with a Thermal Design Power, which has caused some arguments recently based on presentations broadcast about upcoming hardware.

Yes, technically the TDP rating is not the power draw. It’s a number given by the manufacturer to the OEM/system designer to ensure that the appropriate thermal cooling mechanism is employed: if you have a 65W TDP piece of silicon, the thermal solution must support at least 65W without going into heat soak. Both Intel and AMD also have different ways of rating TDP, either as a function of peak output running all the instructions at once, or as an indication of a ‘real-world peak’ rather than a power virus. This is a contentious issue, especially when I’m going to say that while TDP isn’t power, it’s still a pretty good metric of what you should expect to see in terms of power draw in prosumer style scenarios.

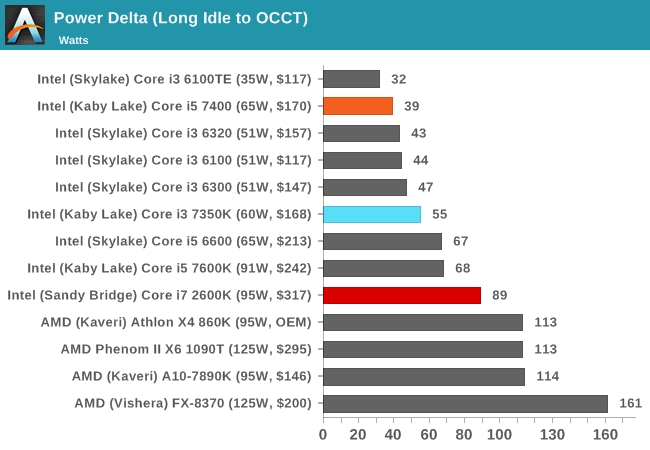

So for our power analysis, we do the following: in a system using one reasonably sized memory stick per channel at JEDEC specifications, a good cooler with a single fan, and a GTX 770 installed, we look at the long idle in-Windows power draw, and a mixed AVX power draw given by OCCT (a tool used for stability testing). The difference between the two, measured with a good power supply that is nice and efficient in the intended range (85%+ from 50W and up), gives us a good qualitative comparison between processors. I say qualitative as these numbers aren’t absolute: they are at-wall VA numbers based on the power you are charged for, rather than consumption. I am working with our PSU reviewer, E.Fylladikatis, in order to find the best way to do the latter, especially when working at scale.

Nonetheless, here are our recent results for Kaby Lake at stock frequencies:

The Core i3-7350K, by virtue of its higher frequency, seems to require a good voltage to get up to speed. This is more than enough to push it above and beyond the Core i5, which despite having more cores, sits in the nicer part (efficiency wise) of the voltage/frequency curve. As is perhaps to be expected, the Core i7-2600K uses more power, having four cores with hyperthreading and a much higher TDP.
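As a rough illustration of the idle-to-load comparison described in the methodology above, the arithmetic can be sketched in a few lines of Python. The readings used here are hypothetical placeholders, not measurements from this review, and the flat 85% efficiency figure is an assumption borrowed from the lower bound quoted for the power supply; real efficiency varies with load, which is part of why the result is only qualitative.

```python
def load_delta_watts(idle_wall_w: float, load_wall_w: float) -> float:
    """Return the idle-to-load difference measured at the wall, in watts."""
    if load_wall_w < idle_wall_w:
        raise ValueError("load reading should not be below the idle reading")
    return load_wall_w - idle_wall_w


def estimated_dc_delta_watts(delta_wall_w: float, psu_efficiency: float = 0.85) -> float:
    """Roughly estimate the DC-side delta from the at-wall delta.

    Assumes a single flat PSU efficiency (an assumption for illustration only).
    """
    if not 0.0 < psu_efficiency <= 1.0:
        raise ValueError("psu_efficiency must be in the range (0, 1]")
    return delta_wall_w * psu_efficiency


if __name__ == "__main__":
    # Hypothetical at-wall readings, chosen only to show the calculation.
    idle_w, load_w = 45.0, 105.0
    delta = load_delta_watts(idle_w, load_w)
    print(f"At-wall delta: {delta:.1f} W")
    print(f"Estimated DC-side delta: {estimated_dc_delta_watts(delta):.1f} W")
```

Because the idle figure is subtracted out, most of the fixed system overhead (memory, storage, an idling GPU) cancels, leaving a number that mostly tracks the processor under load.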

Overclocking

At this point I’ll assume that as an AnandTech reader, you are au fait with the core concepts of overclocking, the reason why people do it, and potentially how to do it yourself. The core enthusiast community always loves something for nothing, so Intel has put its high-end SKUs up as unlocked for people to play with. As a result, we still see a lot of users running a Sandy Bridge i7-2600K heavily overclocked for a daily system, as the performance they get from it is still highly competitive.

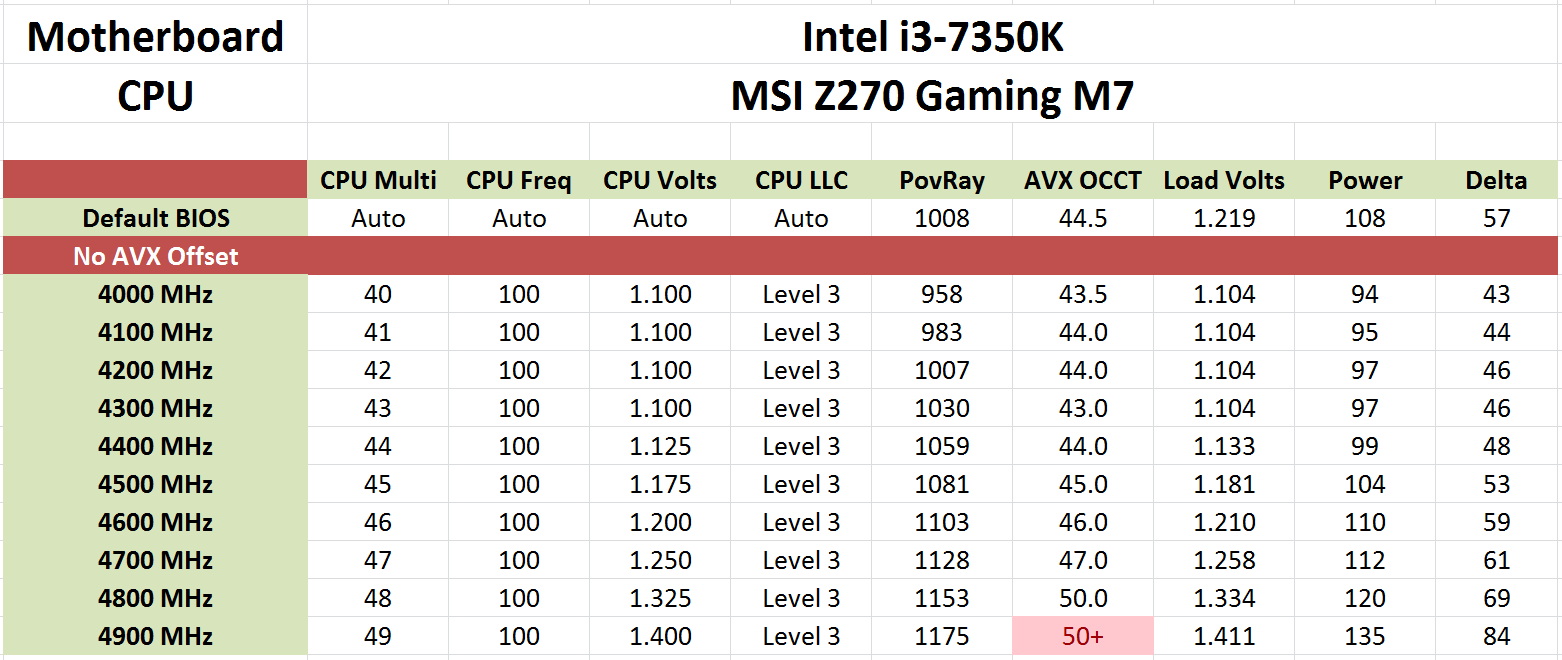

There’s also a new feature worth mentioning before we get into the meat: AVX Offset. We go into this more in our bigger overclocking piece, but the crux is that AVX instructions are power hungry and hurt stability when overclocked. The new Kaby Lake processors come with BIOS options to implement an offset for these instructions in the form of a negative multiplier. As a result, a user can stick on a high main overclock with a reduced AVX frequency for when the odd instruction comes along that would have previously caused the system to crash.
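To make the negative multiplier concrete, here is a minimal sketch of how an AVX offset would translate into clock speeds. It assumes a 100 MHz base clock and uses a hypothetical 48x multiplier with an offset of 2; the function name and the values are illustrative only and do not correspond to any particular BIOS setting names.

```python
def effective_frequencies_mhz(core_multiplier: int, avx_offset: int,
                              bclk_mhz: float = 100.0) -> tuple[float, float]:
    """Return (non-AVX frequency, AVX frequency) in MHz.

    The AVX offset is treated as a number of multiplier steps subtracted
    while AVX instructions are executing. bclk_mhz defaults to 100 MHz,
    which is an assumption for this sketch.
    """
    if avx_offset < 0 or avx_offset >= core_multiplier:
        raise ValueError("avx_offset must be between 0 and core_multiplier - 1")
    core_mhz = core_multiplier * bclk_mhz
    avx_mhz = (core_multiplier - avx_offset) * bclk_mhz
    return core_mhz, avx_mhz


if __name__ == "__main__":
    # Hypothetical example: 48x main overclock with an AVX offset of 2.
    core, avx = effective_frequencies_mhz(48, 2)
    print(f"Non-AVX: {core:.0f} MHz, AVX: {avx:.0f} MHz")
```

With these example values the chip would run at 4.8 GHz for ordinary code and drop to 4.6 GHz while AVX instructions are in flight.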

For our testing, we overclock all cores under all conditions:

The overclocking experience with the Core i3-7350K matched that from our other overclockable processors - around 4.8-5.0 GHz. The stock voltage was particularly high, given that we saw 1.100 volts being fine at 4.2 GHz. But at the higher frequencies, depending on the quality of the CPU, it becomes a lot tougher to maintain a stable system. With the Core i3, temperature wasn't really a factor here with our cooler, and even hitting 4.8 GHz was not much of a strain on the power consumption either - only +12W over stock. The critical thing here is voltage and stability, and it would seem that these chips would rather hit the voltage limit first (and our 1.4 V limit is really a bit much for a 24/7 daily system anyway).

A quick browse online shows a wide array of Core i3-7350K results, from 4.7 GHz to 5.1 GHz. Kaby Lake, much like previous generations, is all about the luck of the draw - if you want to push it to the absolute limit.

186 Comments

Michael Bay - Saturday, February 4, 2017 - link

>competition>AMD

Ranger1065 - Sunday, February 5, 2017 - link

You are such a twat.

Meteor2 - Sunday, February 5, 2017 - link

Ignore him. Don't feed trolls.

jeremynsl - Friday, February 3, 2017 - link

Please consider abandoning the extreme focus on average framerates. It's old-school and doesn't really reflect the performance differences between CPUs anymore. Frame-time variance and minimum framerates are what is needed for these CPU reviews.

Danvelopment - Friday, February 3, 2017 - link

Would be a good choice for a new build if the user needs the latest tech, but I upgraded my 2500K to a 3770 for <$100USD.

I run an 850 for boot, a 950 for high speed storage on an adapter (thought it was a good idea at the time but it's not noticeable vs the 850) and an RX480.

I don't feel like I'm missing anything.

Barilla - Friday, February 3, 2017 - link

"if we have GPUs at 250-300W, why not CPUs?"

I'm very eager to read a full piece discussing this.

fanofanand - Sunday, February 5, 2017 - link

Those CPUs exist but don't make sense for home usage. Have you noticed how hard it is to cool 150 watts? Imagine double that. There are some extremely high powered server chips but what would you do with 32 cores?

abufrejoval - Friday, February 3, 2017 - link

I read the part wasn't going to be available until later, did a search to confirm and found two offers: One slightly more expensive had "shipping date unknown", another slightly cheaper read "ready to ship", so that's what I got mid-January, together with a Z170 based board offering DDR3 sockets, because it was to replace an A10-7850K APU based system and I wanted to recycle 32GB of DDR3 RAM.

Of course it wouldn't boot, because 3 out of 3 mainboards didn't have Kaby Lake support in the BIOS. Got myself a Skylake Pentium part to update the BIOS and returned that: Inexcusable hassle that, for me, the dealer and hopefully for the manufacturers, which had advertised "Kaby Lake" compatibility for months but shipped outdated BIOS versions.

After that this chip runs at 4.2 GHz out of the box and overclocks to 4.5 without playing with voltage. Stays cool and sucks modest Watts (never reaching 50W according to the onboard sensors, which you can't really trust, I gather).

Use case is a 24/7 home-lab server running quite a mix of physical and virtual workloads on Win 2008R2 and VMware workstation, mostly idle but with some serious remote desktop power, Plex video recoding ummp if required and even a game now and then at 1080p.

I want it to rev high on sprints, because I tend to be impatient, but there is a 12/24 core Xeon E5 at 3 GHz and a 4/8 Xeon E3 at 4GHz sitting next to it, when I need heavy lifting and torque: Those beasts are suspended when not in use.

Sure enough, it is noticeably snappier than the big Xeon 12 core on desktop things and still much quieter than the Quad, while of course any synthetic multi-core benchmark or server load leaves this chip in the dust.

I run it with an Nvidia GTX 1050ti, which ensures a seamless experience with the Windows 7 generation Server 2008R2 on all operating systems, including CentOS 7 virtual or physical which is starting to grey a little on the temples, yet adds close to zero power on idle.

At 4.2 GHz the Intel i3-7350K HT dual is about twice as fast as the A10-7850K integer quad at the same clock speed (it typically turbos to 4.2 GHz without any BIOS OC pressure) for all synthetic workloads I could throw at it, which I consider rather sad (been running AMD and Intel side by side for decades).

I overclocked mine easily to 4.8 GHz and even to 5 GHz with about 1.4V and leaving the uncore at 3.8 GHz. It was Prime95 stable, but my simple slow and quiet Noctua NH-L9x65 couldn't keep temperatures at safe levels so I stopped a little early and went back to an easy and cool 4.6 GHz at 1.24V for "production".

I'm most impressed running x265 video recodes on terabytes of video material at 800-1200FPS on this i3-7350K/GTX 1050ti combo, which seems to leave both CPU and GPU oddly bored and able to run desktop and even gaming workloads in parallel with very little heat and noise.

The Xeon monsters with their respective GTX 1070 and GTX 980ti GPUs would actually do that same job slower while burning more heat, and yet video recoding has been such a big sales argument for the big Intel chips.

Actually Handbrake x265 software encodes struggle to reach double digits on 24 threads on the "big" machine: Simply can't beat ASIC power with general purpose compute.

I guess the Pentium HT variants are better value, but so is a 500cc scooter vs. a Turbo-Hayabusa. And here the difference is less than a set of home delivered pizzas for the family, while this chip will last me a couple of years and the pizza is gone in minutes.

Meteor2 - Sunday, February 5, 2017 - link

Interesting that x265 doesn't scale well with cores. The developers claim to be experts in that area!

abufrejoval - Sunday, February 12, 2017 - link

Sure the Handbrake x265 code will scale with CPU cores, but the video processing unit (VPU) within the GTX 10x series provides several orders of magnitude better performance at much lower energy budgets. You'd probably need downright silly numbers of CPU cores (hundreds) with Handbrake to draw even in performance and by then you'd be using several orders of magnitude more energy to get it done.

AFAIK the VPU is all the same on all (consumer?) Pascal GPUs and not related to GPU cores, so a 1080 or even a Titan-X may not be any faster than a 1050.

When I play around with benchmarks I typically have HWinfo running on a separate monitor and it reports the utilization and power budget from all the distinct function blocks in today's CPUs and GPUs.

Not only does the GTX 1050ti on this system deliver 800-1200FPS when transcoding 1080p material from x264 to x265, but it also leaves CPU and GPU cores rather idle so I actually felt it had relatively little impact on my ability to game or do production work, while it is transcoding at this incredible speed.

Intel CPUs at least since Sandy Bridge have also sported VPUs and I have tried to use them similarly for the MPEG to x264 transitions, but there, from my experience, compression factor, compression quality and speed have fallen short of Handbrake, so I didn't use them. AFAIK x265 encoding support is still missing on Kaby Lake.

It just highlights the "identity" crisis of general purpose compute, where even the beefiest CPUs suck on any specific job compared to a fully optimized hardware solution.

Any specific compute problem shared by a sufficiently high number of users tends to be moved into hardware. That's how GPUs and DSPs came to be and that's how VPUs are now making CPU and GPU based video transcoding obsolete via dedicated function blocks.

And that explains why my smallest system really feels fastest with just 2 cores.

The only type of workload where I can still see a significant benefit for the big Xeon cores are things like a full Linux kernel compile. But if the software eco-system there wasn't as bad as it is, incremental compiles would do the job and any CPU since my first 1MHz 8-Bit Z80 has been able to compile faster than I was able to write code (especially with Turbo Pascal).