Ten Year Anniversary of Core 2 Duo and Conroe: Moore’s Law is Dead, Long Live Moore’s Law

by Ian Cutress on July 27, 2016 10:30 AM EST

Posted in: CPUs, Intel, Core 2 Duo, Conroe, ITRS, Nostalgia, Time To Upgrade

Looking To The Future: 450mm Wafers in 2021, and Down to ‘2nm’

Most users following the industry will know that the name of the lithographic node, such as ‘14nm’, ‘16nm’ or ‘28nm’ is often an imperfect characterization of what is actually happening on the silicon. For example, Intel’s 14nm node is widely touted as the best in the industry, and the equivalent 14nm/16nm nodes are said to not be even close, due to Intel’s focus on several generations of FinFET compared to the other main semiconductor manufacturers. This also means that while ‘14nm’ or ‘16nm’ is mentioned, many other parts of the silicon could be manufactured on other nodes, depending on the utility.

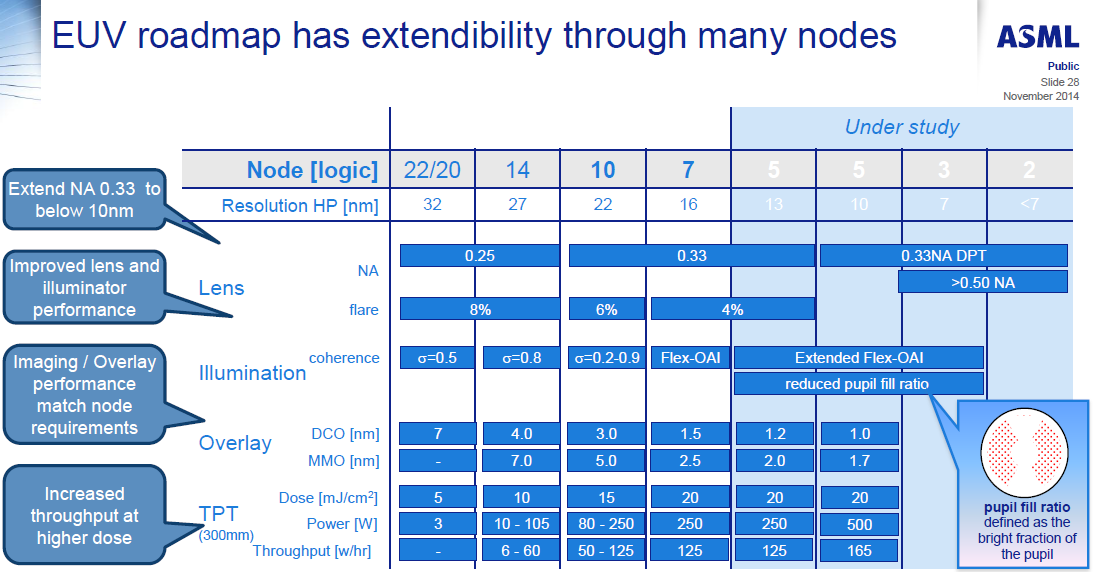

We’ve spoken in the past about equipment roadmaps to deal with the problem of shrinking lithography node sizes. This includes new lasers, droplet generation, collectors, masks, defect detection, lenses, and the technology to drive high throughput. For example, here are ASML’s EUV requirements for the next few manufacturing nodes, including requirements for 250W light sources at a 5nm node:

The ITRS report makes a couple of predictions regarding node sizes and wafers. On the latter, it predicts that 450mm wafers should be in play around 2021 for DRAM.

This corresponds to a DRAM half-pitch of 15nm, or a DRAM cell size of 780nm², compared to 3480nm² today. The move to 450mm wafers has been expected for some time, with early predictions targeting the 2008-2011 timeframe; however, development seems to have stalled for a variety of reasons.
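As a rough sanity check on what these figures imply, the sketch below combines the quoted cell sizes with the change in wafer diameter. It assumes today's wafers are 300mm (a figure not stated in the ITRS excerpt above) and ignores edge exclusion, yield, and peripheral circuitry, so the result is an idealized upper bound rather than a forecast. All names are illustrative.

```python
import math


def wafer_area_mm2(diameter_mm: float) -> float:
    """Area of a circular wafer, ignoring edge exclusion and the notch."""
    return math.pi * (diameter_mm / 2) ** 2


# Cell sizes quoted in the article (nm^2).
CELL_AREA_TODAY_NM2 = 3480.0
CELL_AREA_2021_NM2 = 780.0

# Assumption: current production wafers are 300mm (not stated in the text above).
WAFER_TODAY_MM = 300.0
WAFER_2021_MM = 450.0

# How many more cells fit in the same silicon area.
cell_density_gain = CELL_AREA_TODAY_NM2 / CELL_AREA_2021_NM2
# How much more silicon area each wafer provides.
wafer_area_gain = wafer_area_mm2(WAFER_2021_MM) / wafer_area_mm2(WAFER_TODAY_MM)
# Idealized combined gain in DRAM cells per wafer.
cells_per_wafer_gain = cell_density_gain * wafer_area_gain

print(f"Cell density gain: {cell_density_gain:.2f}x")
print(f"Wafer area gain: {wafer_area_gain:.2f}x")
print(f"Idealized cells-per-wafer gain: {cells_per_wafer_gain:.1f}x")
```

Under these assumptions the smaller cell contributes roughly 4.5x and the larger wafer 2.25x, for about 10x more cells per wafer in the ideal case.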

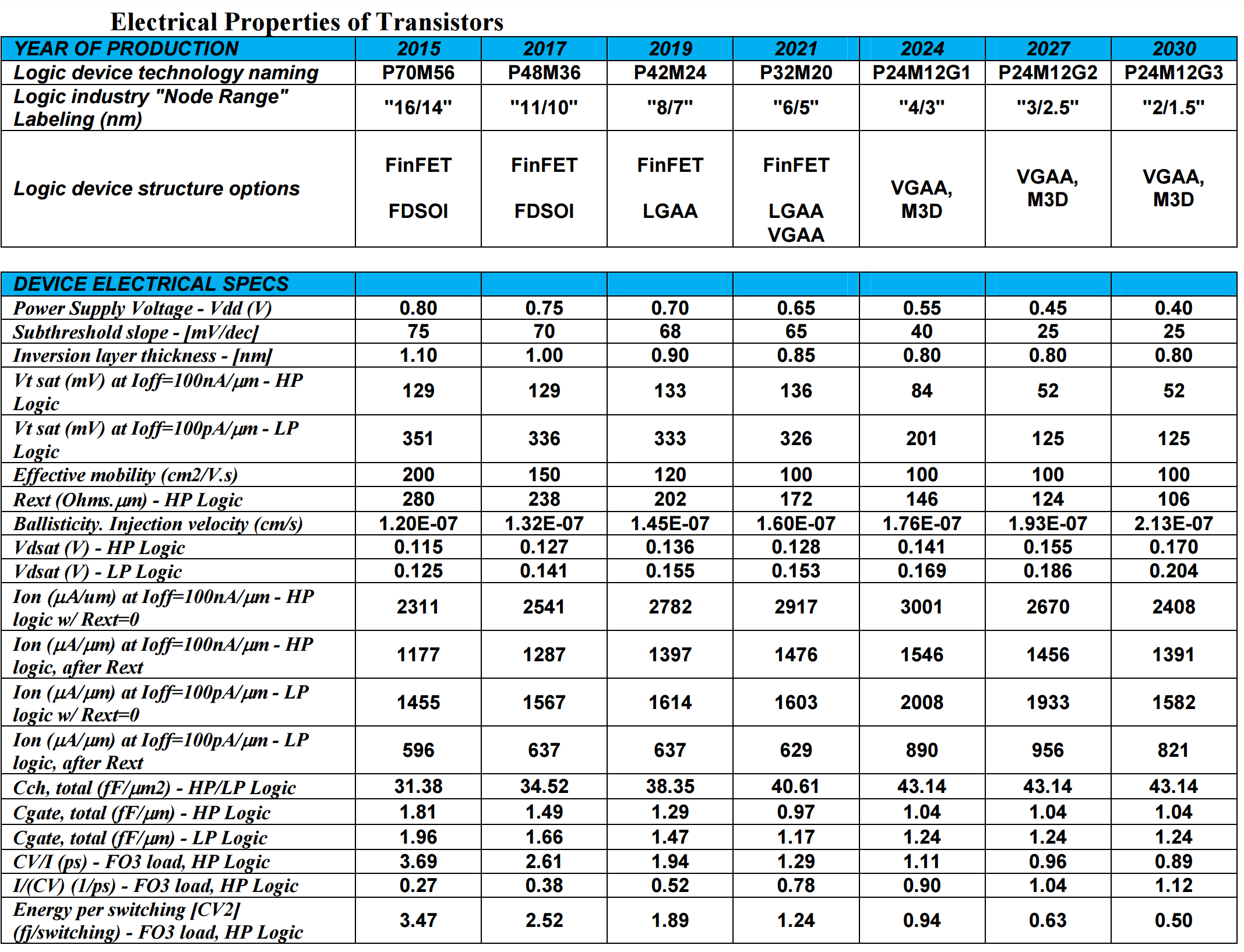

When it comes to transistors, given the node naming scheme caveats listed above, the ITRS report predicts that FinFET will make way around 2021 in favor of 3D stacking such as ‘vertical gate all-around’ (VGAA). As the node and the transistor size shrink, the power supply voltage will drop from 800mV today to 400/450mV by 2027.
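To give a feel for why the supply voltage matters, the sketch below uses the standard first-order approximation that dynamic switching power scales as C·V²·f. This relation is not taken from the ITRS report; it is a textbook assumption, and the calculation holds capacitance and frequency constant and ignores leakage, so treat the output as illustrative only.

```python
def relative_dynamic_power(v_new_mv: float, v_old_mv: float) -> float:
    """Ratio of dynamic power at two supply voltages.

    Assumes P ~ C * V^2 * f with capacitance C and frequency f unchanged,
    so only the squared voltage ratio remains.
    """
    return (v_new_mv / v_old_mv) ** 2


V_TODAY_MV = 800.0  # supply voltage quoted for today

# The two 2027 targets quoted in the article.
for v_2027_mv in (450.0, 400.0):
    ratio = relative_dynamic_power(v_2027_mv, V_TODAY_MV)
    print(f"{V_TODAY_MV:.0f}mV -> {v_2027_mv:.0f}mV: "
          f"{ratio:.0%} of today's dynamic power")
```

With everything else held equal, halving the voltage from 800mV to 400mV would cut dynamic power to a quarter, which is part of why voltage scaling matters so much for low-power designs.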

Moving into a world of IoT will require even lower power transistors, and moving to a ‘smaller node’ and vertical chip integration will play a large part in getting there.

The ITRS report goes into a lot of detail regarding different stacking technologies, such as the 2.5D integration involving a silicon interposer that we see on products like AMD’s Fiji GPU, as well as 3D integration and the different ways to implement both microchannel cooling in such chips and 3D TSV applications.

158 Comments

Akrovah - Wednesday, July 27, 2016 - link

My old E6700 is still alive and kicking. I only just replaced it as my primary system when Devil's Canyon came along. Still use it for my four year old's "first computer."

djayjp - Wednesday, July 27, 2016 - link

Not a particle physicist, nor electrical engineer, so just some pie in the sky wondering here, but wouldn't it be possible to build transistors using carbon nanotubes, or light itself (using nano sized mirrors/interferometers, like DLP) or even basing the transistor gates off of protons/sub atomic particles?

michael2k - Wednesday, July 27, 2016 - link

I think a more interesting question is using glass as a substrate. Imagine printing NAND, CPU, GPU, and RAM along the bezels of a smartphone. That reduces a phone to six components: a display, a transducer for sound, a mic, a battery, a radio, and a chassis, which would have all the antennas.

joex4444 - Wednesday, July 27, 2016 - link

Particle physicist here. Light has the tricky property that it travels at the speed of light so I can't imagine it working but perhaps I'm envisioning your concept differently than you are. For carbon nanotubes, you'll need a materials engineer or a condensed matter physicist.

3DoubleD - Wednesday, July 27, 2016 - link

Materials/Semiconductor Physics Engineer here. The problem is not what we CAN do, the problem is what is economically possible at scale. For example, FinFETs were demonstrated at the turn of the century, but took all of those years to become (1) necessary - planar transistors were getting too leaky, and (2) possible to fabricate economically at large scale. Researchers created smaller, faster transistors years ago, but it takes a lot of time and effort to develop the EUV or quadruple patterning technologies that enable these devices to be reliably and affordably manufactured.

So I think the problem in moving "beyond silicon" is not that we don't have alternatives, it is that we have many alternatives, we just don't know which will scale. It becomes less of a purely engineering problem and more of a manufacturing business problem. When new technologies relied purely on the established silicon industry alone, you could reasonably extrapolate how much each new technology would cost as the nodes were scaled down. When we talk about using III-V FinFETs/All Around Gates or graphene and carbon nanotubes, we don't really know how those things will scale with the existing processes as we move them from the laboratory to the manufacturing line.

I've been looking forward to this transition for years. People moan that it is the end of Moore's Law, but that could be a good thing. Silicon is a great material for forming logic circuits for many reasons, but it also has many downsides. While silicon never reached 10 GHz (as Intel once predicted), other materials easily blow past 100 GHz transistor switching speeds. When the massive engines that work tirelessly to reduce our lithography nodes nm by nm are aimed at "the next big thing", we might be pleasantly surprised by a whole new paradigm of performance.

So what competes with modern day Si CMOS on speed, power usage, and cost? Nothing... yet!

djayjp - Thursday, July 28, 2016 - link

Yes, it's fascinating stuff. Thanks for reminding me about that. I recall now that I think it was graphene that enabled those insanely high switching speeds, due to its incredible conductivity/efficiency. Hopefully it can be made economically feasible at some point! Imagine the next GPU that is 10x smaller and operates at 100x the clock speed. A GTX 1080Ti x 1000! Finally we can do real time true global illumination ha....

jeffry - Monday, August 1, 2016 - link

That's a good point. Like, answering a question "are you willing to pay $800 for a new CPU to double the computer's speed?" Most consumers say no. It all comes down to the mass market price.

wumpus - Thursday, August 4, 2016 - link

From the birth of the Univac until 10 years ago, consumers consistently said YES! and plunked down their money. Doubling the (per thread) speed of a Core 2 Duo is going to cost more than $800. Also the cost of the RAM on servers is *WAY* more than $800, so you can expect if Intel could double the power of each core, they could crank prices up by at least $800 per core on Xeons. They can't, and neither can IBM or AMD.

Jaybus - Thursday, July 28, 2016 - link

Sure, but that speed is dependent on the medium. There are some proposed optical transistors using electromagnetically induced transparency. Long way off. However, silicon photonics could change some things. Capacitance is the killer for electronic interconnects, whether chip-to-chip or on-chip bus. An optical interconnect could greatly increase bandwidth without increasing the chip's power dissipation. I think an electronic-photonic hybrid is more likely, since silicon photonics components can be made on a CMOS process. We are already beginning to see optical PCI Express being deployed. I could definitely see a 3D approach where 2D electronic layers are connected through an optical rather than electronic bus.

djayjp - Thursday, July 28, 2016 - link

Yes, transparency, like polarized windows that either become transparent or opaque when a current is applied (to the liquid crystals?). I wonder how small they could be made. It would be incredibly power efficient I would think.