PCIe SSD Faceoff: Samsung XP941 (128GB & 256GB) and OCZ RevoDrive 350 (480GB) Tested

by Kristian Vättö on September 5, 2014 3:00 PM EST

Performance Consistency

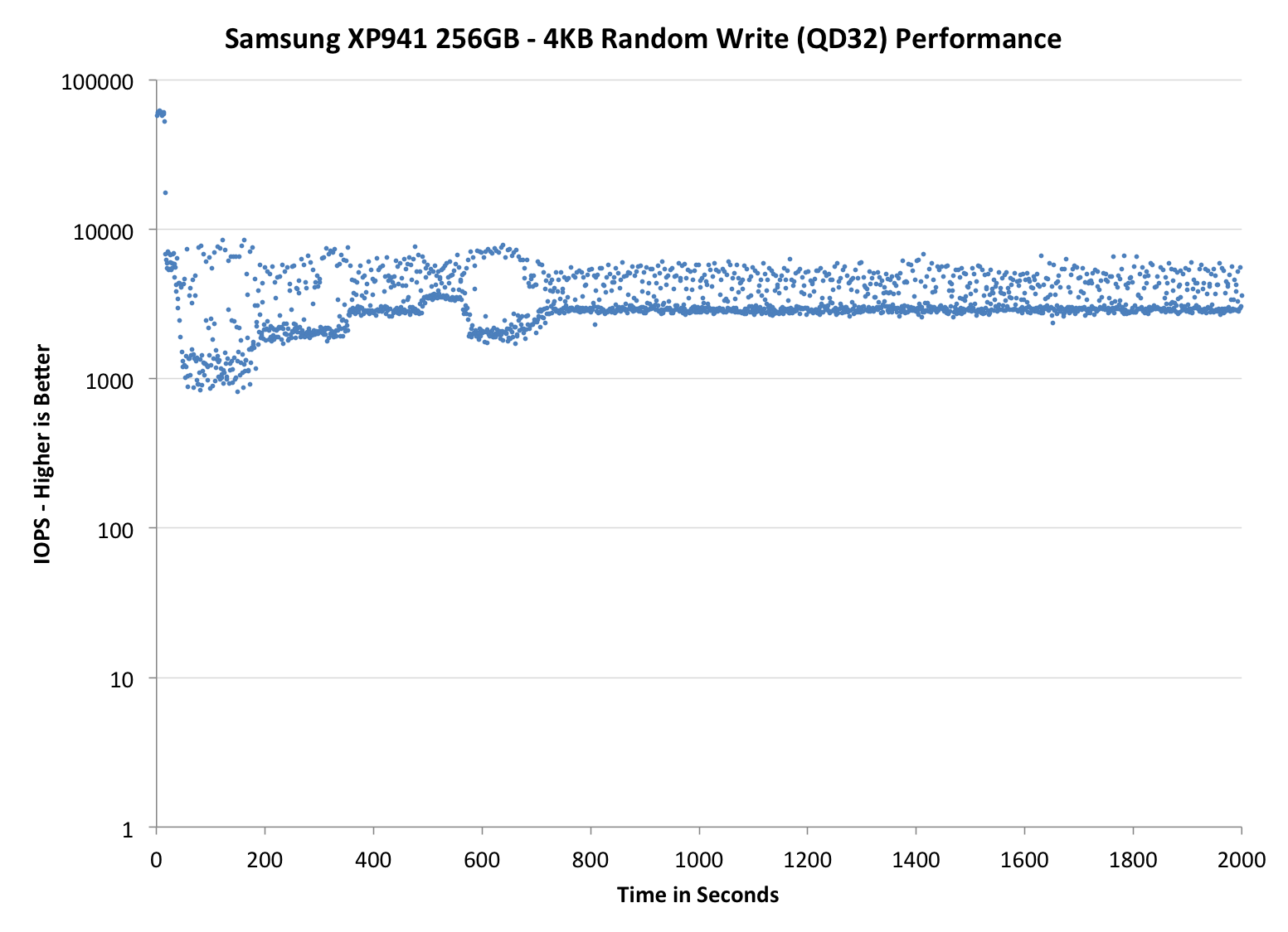

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. The reason we do not have consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly impacts the user experience, as inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure-erased SSD with sequential data to ensure that all user-accessible LBAs have data associated with them. Next, we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test is run for just over half an hour and we record instantaneous IOPS every second.

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
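As a rough illustration of what limiting the LBA range means in practice, the sketch below computes how many LBAs a workload would be allowed to touch. It is a minimal sketch under stated assumptions: the function name is hypothetical, the "25%" is interpreted here as the share of user-accessible LBAs left unwritten (the exact definition is not spelled out above), and the 512-byte sector size and nominal 256GB capacity are illustrative values.

```python
def limited_lba_range(total_lbas: int, extra_op_fraction: float) -> int:
    """Return how many LBAs (counted from LBA 0) the workload may touch.

    Assumption: extra_op_fraction is the share of user-accessible LBAs
    that is left unwritten to act as additional spare area.
    """
    if not 0.0 <= extra_op_fraction < 1.0:
        raise ValueError("extra_op_fraction must be in [0, 1)")
    return int(total_lbas * (1.0 - extra_op_fraction))


SECTOR_BYTES = 512                           # assumed logical sector size
total_lbas = 256 * 10**9 // SECTOR_BYTES     # nominal 256GB drive (illustrative)
usable_lbas = limited_lba_range(total_lbas, 0.25)

print(f"total LBAs:  {total_lbas}")
print(f"usable LBAs: {usable_lbas}")
```

The random write workload would then be confined to LBAs 0 through `usable_lbas - 1`, leaving the remainder untouched for the controller to use as spare area.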

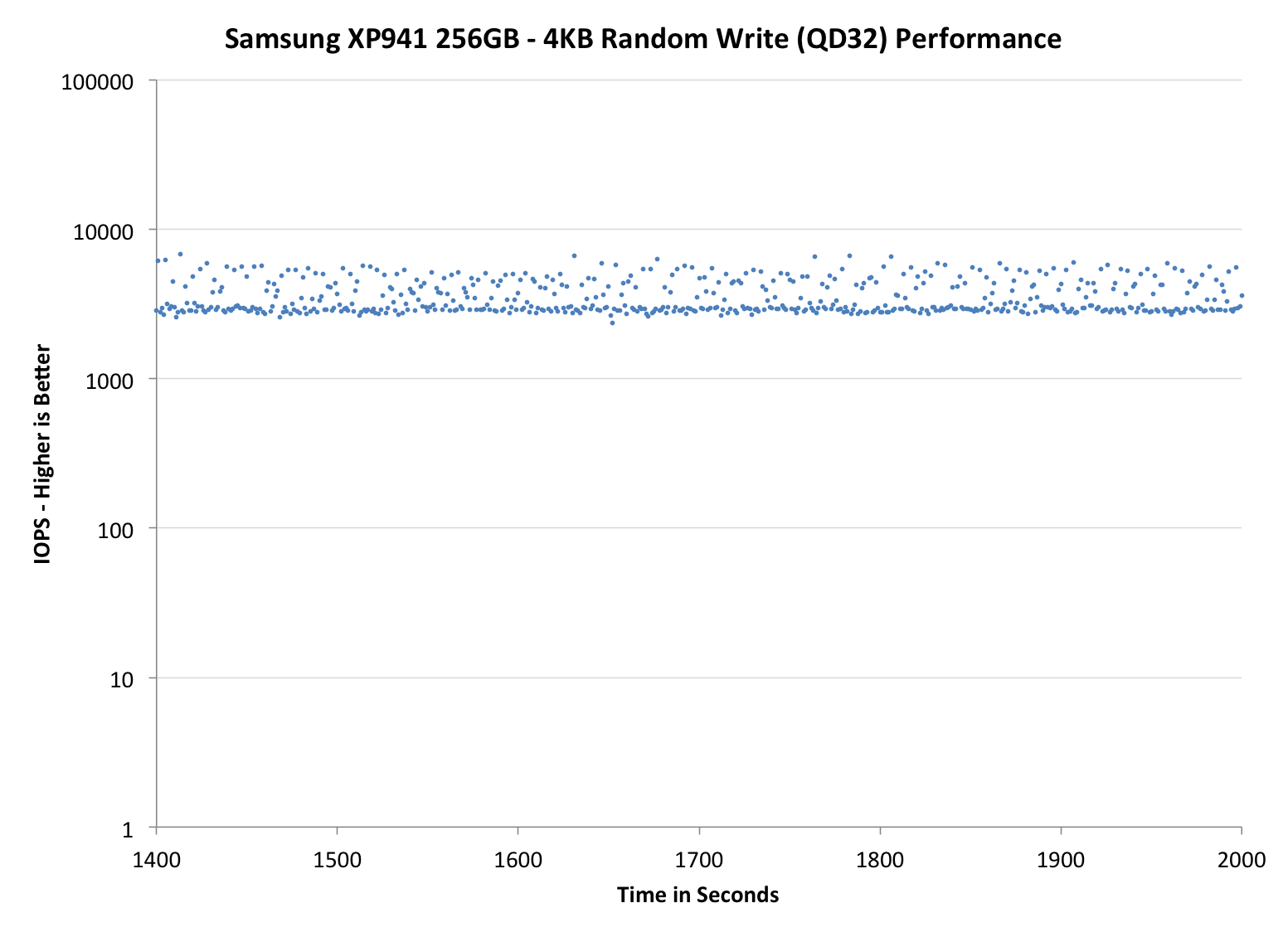

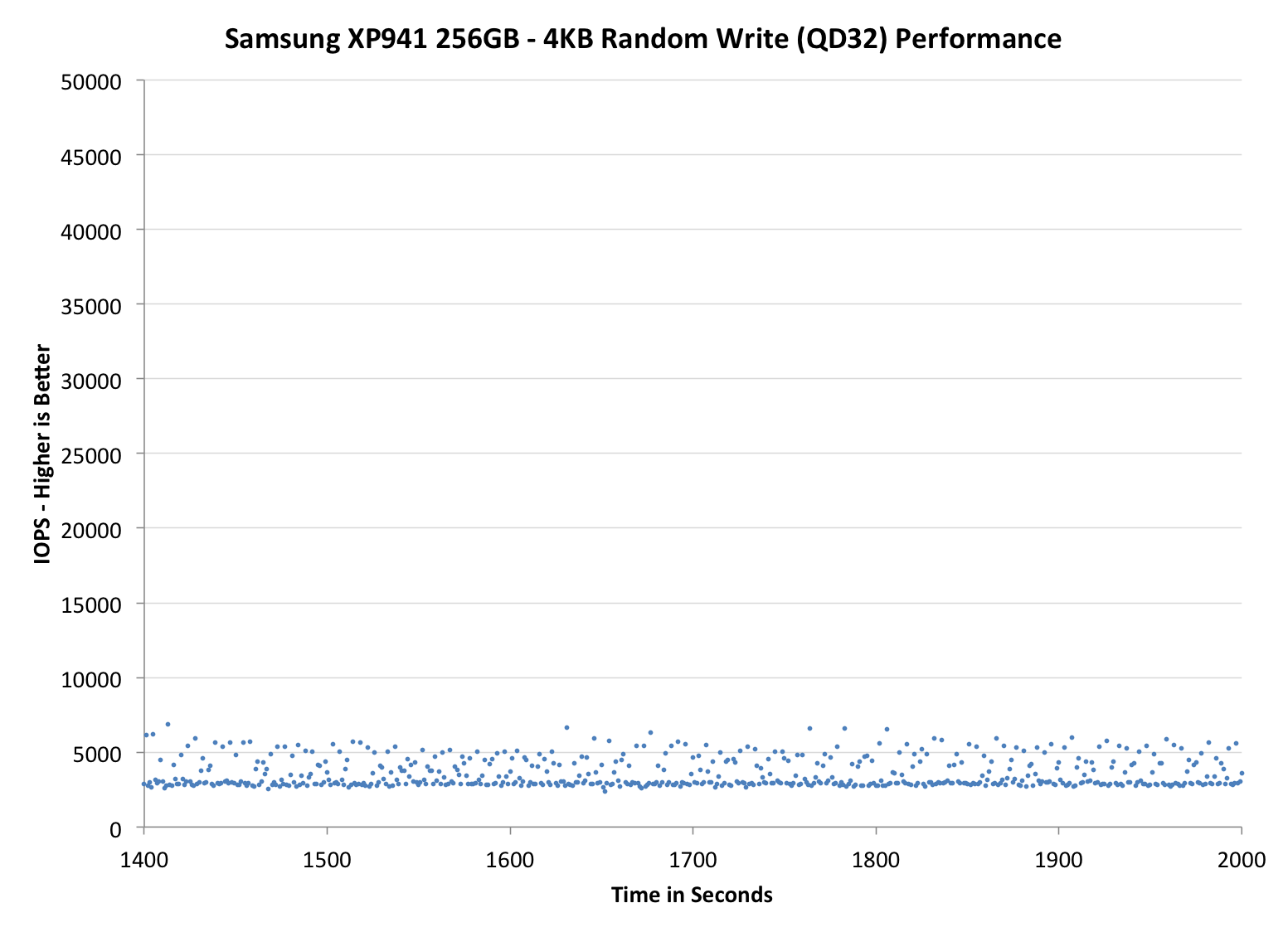

Each of the three graphs has its own purpose. The first one shows the whole duration of the test in log scale. The second and third zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second uses log scale for easy comparison, whereas the third uses linear scale for better visualization of differences between drives.
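To make "steady-state" a little more concrete, a per-second IOPS log like the one this test records could be summarized as follows. This is a minimal sketch, not the tooling used for the review: the function name, the synthetic log and the use of the coefficient of variation as a consistency figure are all assumptions. Only the one-sample-per-second log and the t=1400s starting point come from the text.

```python
from statistics import mean, pstdev


def steady_state_summary(iops_per_second, start_s=1400):
    """Summarize the part of a per-second IOPS log from start_s onwards."""
    window = list(iops_per_second[start_s:])
    if not window:
        raise ValueError("log is shorter than start_s seconds")
    avg = mean(window)
    return {
        "mean": avg,
        "min": min(window),
        "max": max(window),
        # Coefficient of variation: lower means more consistent performance.
        "cv": pstdev(window) / avg if avg else float("inf"),
    }


# Synthetic log (made-up numbers): a fast initial burst, then steady-state.
log = [90_000] * 1400 + [3_000, 3_200, 2_800, 3_000] * 100
summary = steady_state_summary(log)
print(summary)
```

A drive whose steady-state samples are scattered across a wide range would show a much higher coefficient of variation than one that settles into a narrow band, even if the two share a similar mean.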

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.


The 256GB XP941 shows similar behavior to the 512GB model but with slightly lower performance. While the 512GB XP941 manages about 5,000 IOPS in steady-state, the 256GB model drops to ~3,000 IOPS. It is worth noting that the 256GB 850 Pro does over twice that, and while some of that can be firmware/controller related, most of the difference comes from V-NAND and its lower program and erase latencies. The low-ish performance can be fixed by adding over-provisioning, though: with 25% over-provisioning the 256GB XP941 goes above 20,000 IOPS (although the 512GB model is still quite a bit faster).
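For a sense of scale, the IOPS figures above can be converted into throughput. The sketch below is only back-of-the-envelope arithmetic: the helper name is hypothetical, and it assumes 4KB means 4,096 bytes and that 1MB is 10^6 bytes.

```python
def iops_to_mb_per_s(iops: float, block_bytes: int = 4096) -> float:
    """Convert an IOPS figure at a fixed transfer size into MB/s (1MB = 10^6 bytes)."""
    return iops * block_bytes / 1_000_000


# Approximate steady-state figures quoted in the text.
figures = [
    ("256GB XP941, default", 3_000),
    ("512GB XP941, default", 5_000),
    ("256GB XP941, 25% over-provisioning", 20_000),
]

for label, iops in figures:
    print(f"{label}: ~{iops_to_mb_per_s(iops):.1f} MB/s of 4KB random writes")
```

Under these assumptions, 3,000 IOPS works out to roughly 12MB/s and 20,000 IOPS to roughly 82MB/s.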

The RevoDrive, on the other hand, is a rather unique case. Since it has multiple controllers in parallel, the performance exceeds 100,000 IOPS at first, which is why I had to increase the scale of its graphs. Even after the initial burst the RevoDrive continues at nearly 100,000 IOPS, although at about 1,200 seconds that comes to an end. Taking that long to reach steady-state is rather common for SandForce based drives, but when the controller hits the wall, it hits it hard.

After 1,200 seconds, the RevoDrive's IOs are scattered all over the place. There are still plenty happening at close to 100,000 IOPS and most stay above 10,000, but with such high variation I would not call the RevoDrive very consistent. It is better than a single drive for sure, but I think OCZ's RAID firmware is causing some inconsistency and with the potential of four controllers, I would like to see higher consistency in performance. The fact that increasing over-provisioning does not help with the consistency supports the theory that the RAID firmware is the cause.


TRIM Validation

I already tested TRIM with the 512GB XP941 and found it to be functional under Windows 8.1 (but not with Windows 7), so it is safe to assume that this applies to the rest of the XP941 lineup as well.

As for the RevoDrive, I turned to our regular TRIM test suite for SandForce drives. First I filled the drive with incompressible sequential data, which was followed by 30 minutes of incompressible 4KB random writes (QD32). To measure performance before and after TRIM, I ran a one-minute incompressible 128KB sequential write pass.

| Iometer Incompressible 128KB Sequential Write | Clean | Dirty | After TRIM |
| --- | --- | --- | --- |
| OCZ RevoDrive 350 480GB | 447.1MB/s | 157.1MB/s | 306.4MB/s |

As is typical of SandForce SSDs, TRIM recovers some of the performance but does not return the drive to its clean state. The good news here is that the drive does receive the TRIM command, which is something that not all PCIe and RAID solutions currently support.
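To put numbers on "some of the performance", the three results from the table can be expressed relative to the clean state. This is a small sketch using only the figures above; the helper name and the "share of lost performance recovered" metric are illustrative choices, not part of the test suite.

```python
# Results from the Iometer 128KB sequential write table, in MB/s.
clean, dirty, after_trim = 447.1, 157.1, 306.4


def percent_of(value: float, reference: float) -> float:
    """Express value as a percentage of reference."""
    return 100.0 * value / reference


dirty_pct = percent_of(dirty, clean)
trim_pct = percent_of(after_trim, clean)
# Share of the performance lost in the dirty state that TRIM brings back.
recovered_pct = percent_of(after_trim - dirty, clean - dirty)

print(f"Dirty:      {dirty_pct:.1f}% of clean")
print(f"After TRIM: {trim_pct:.1f}% of clean")
print(f"Recovered:  {recovered_pct:.1f}% of the lost performance")
```

In other words, the drive falls to about 35% of its clean speed when dirty, and TRIM brings it back to about 69%, recovering roughly half of what was lost.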

47 Comments


Intervenator - Friday, September 5, 2014 - link

Need MOAR insight into the PCIe SSD market! Wheres it going? Any noticeable performance increased to the end user? Who are the players in the market? Non performance advantages?

If AnandTech has already written an article regarding any of this, could someone please point me towards it?

Intervenator - Friday, September 5, 2014 - link

Oh, and great review.

Kristian Vättö - Friday, September 5, 2014 - link

We haven't done an article that collects all bits of info into one, but you may find the following articles interesting:

http://www.anandtech.com/show/8006/samsung-ssd-xp9...

http://www.anandtech.com/show/7843/testing-sata-ex...

http://www.anandtech.com/show/7520/lsi-announces-s...

http://www.anandtech.com/show/8147/the-intel-ssd-d...

http://www.anandtech.com/show/8104/intel-ssd-dc-p3...

frenchy_2001 - Friday, September 5, 2014 - link

>Where is it going?

M.2 form factor using PCIe gen3 x4 for portable and consumer, PCIe cards, up to Gen3 x8 for Datacenter/server. Both running NVMe protocol.

I don't see much future for SATAexpress, as the 2 other competing standards cover all the use cases already (and better too).

> Any noticeable performance increased to the end user?

Not unless you do a LOT of parallel IOs. For consumers, there are diminishing returns past the original boost from switching to SSD (mostly from lower latencies). HDD to SATA SSD gave orders of magnitude improvements. SATA3 -> PCIe is only incremental compared to that. Unless you need this for a server, video processing or another big IO-limited task, there will be little difference.

>Who are the players in the market?

Still the same: Intel/Micron/Samsung/Sandisk for professionals/servers using mostly in-house designs (intel P3700 for example).

Marvell and SandForce have announced PCIe NVMe controllers. No products for those yet.

>Non performance advantages?

M.2 will be more compact. Power draw should be more optimized. NVMe should have lower processor overhead.

Impulses - Friday, September 5, 2014 - link

Thx for that.

iwod - Saturday, September 6, 2014 - link

Speed is a relative thing. The HDD to SSD leap was amazing. But that doesn't mean you can't feel the difference between an SSD at SATA 3Gbps and an SSD at 6Gbps. And with the recent controller improvements in random/sequential R/W, I can also feel the difference between SATA and NVMe PCIe SSDs.

But with increasing memory capacity, the time to fetch things from the SSD will also be lower since they are likely cached. So throughput may become less of a concern after PCIe SSDs, and average response time will play a role. Luckily this is already being worked on for server SSD usage, so it will likely filter down once PCIe SSDs become mainstream.

For the future I would like to see even lower active power consumption. I wonder if they could get it under 2W.

Friendly0Fire - Friday, September 5, 2014 - link

This makes me think that the SM951 with X99 will be one hell of a beast... I want one. Heck, the XP941 would already be amazing.

GrigioR - Friday, September 5, 2014 - link

Is it possible to boot from those PCIe drives using a 775 motherboard (P35 chipset)? Getting rid of the SATA II bottleneck would be awesome on my modded Xeon system.

Kristian Vättö - Friday, September 5, 2014 - link

The SM951 likely won't be bootable since it is an OEM drive, but there will be retail PCIe SSDs that are bootable even in older systems (the Plextor M6e should be, for instance).

GrigioR - Sunday, September 7, 2014 - link

Nice. Too pricey at $1/GB on the 256GB model... Since my motherboard is PCIe 1.0/1.1 it would run at 500MB/s max... That should not be that noticeable. My Samsung 840 120/128GB runs at around 250-260MB/s (read)... At least if it were a PCIe x4 it would do 1000MB/s (bus speed). I'll leave this the way it is for now. Thank you very much for your input. Now I know it is possible.