Western Digital My Book Duo DAS Review

by Ganesh T S on July 12, 2014 6:00 PM EST - Posted in Storage, Western Digital, DAS

Introduction

Even as the consumer NAS market continues to grow rapidly, consumers cannot get really fast access to their data when the storage is bottlenecked by the speed of the network link. Single hard disks, by themselves, can hardly saturate today's high-speed direct-attached storage (DAS) interfaces such as eSATA, USB 3.0 and Thunderbolt. Users who need fast transfer rates while retaining the cost-effective high capacities that hard disks provide need to turn to RAID solutions. These tend to perform well for certain common workloads such as multimedia handling.

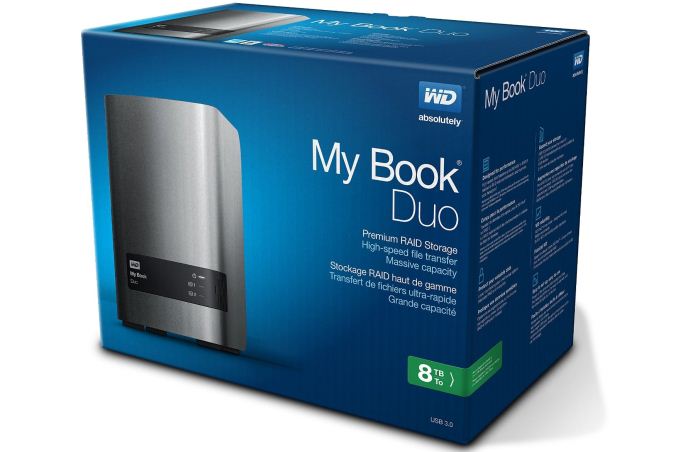

Earlier this week, we took a look at LaCie's high-end 2-bay RAID DAS, the 2big Thunderbolt 2. It integrated both USB 3.0 and Thunderbolt 2 as connectivity options. At $800 for an 8 TB version, the pricing carries a premium for the Thunderbolt connectivity. USB 3.0 is, in a way, the poor man's Thunderbolt. With a focus on the average consumer, Western Digital launched the My Book Duo USB 3.0 DAS with hardware RAID capabilities a few weeks back. We got the 8 TB version in for review. The detailed specifications of the unit are provided below.

| Western Digital My Book Duo WDBLWE0080JCH | |
| --- | --- |
| Internal Storage Media | 2x 4 TB 3.5" WD40EFRX Red Hard Drives |
| Interface | 1x USB 3.0 + 2x USB 3.0 (Downstream Hub) |
| RAID Modes | RAID 0 / RAID 1 / JBOD |
| Cooling | Fan behind the front face at the base of the unit |
| Power Supply | 100-240V AC Switching Adapter (12V @ 3A DC) |
| Dimensions | 165 x 157 x 99 mm (6.5 x 6.2 x 3.9 in.) |
| Weight | 2.24 kg (5.0 lbs.) |
| Included Software | |
| Product Page | Western Digital My Book Duo |
| Price | $450 |
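As a quick sanity check on what the listed RAID modes mean for the 8 TB review unit, the sketch below computes the usable capacity in each mode. The function name is hypothetical, and treating JBOD as two independent volumes whose capacities simply add up is an illustrative assumption rather than something taken from Western Digital's documentation.

```python
def usable_capacity_tb(mode: str, drive_tb: float = 4.0, drives: int = 2) -> float:
    """Usable capacity in TB for a simple array of identical drives.

    Defaults match the review unit (2x 4 TB); formatting overhead is ignored.
    """
    key = mode.upper().replace(" ", "")
    if key == "RAID0":
        # Striping: data is spread across all drives, no redundancy.
        return drive_tb * drives
    if key == "RAID1":
        # Mirroring: every drive holds a full copy, so one drive's worth is usable.
        return drive_tb
    if key == "JBOD":
        # Assumption: drives are exposed independently, so the capacities add up.
        return drive_tb * drives
    raise ValueError(f"unsupported mode: {mode}")


if __name__ == "__main__":
    for mode in ("RAID 0", "RAID 1", "JBOD"):
        print(mode, usable_capacity_tb(mode), "TB")
```

Under these assumptions, only RAID 1 gives up half of the raw capacity, in exchange for surviving the failure of one drive.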

Testbed Setup and Testing Methodology

Evaluation of DAS units on Windows is done with the testbed outlined in the table below. For devices with USB 3.0 connections (such as the My Book Duo that we are considering today), we use a USB 3.0 port that hangs directly off the PCH.

| AnandTech DAS Testbed Configuration | |
| --- | --- |
| Motherboard | Asus Z97-PRO Wi-Fi ac ATX |
| CPU | Intel Core i7-4790 |
| Memory | Corsair Vengeance Pro CMY32GX3M4A2133C11 32 GB (4x 8GB) DDR3-2133 @ 11-11-11-27 |
| OS Drive | Seagate 600 Pro 400 GB |
| Optical Drive | Asus BW-16D1HT 16x Blu-ray Write (w/ M-Disc Support) |
| Add-on Card | Asus Thunderbolt EX II |
| Chassis | Corsair Air 540 |
| PSU | Corsair AX760i 760 W |
| OS | Windows 8.1 Pro |
| Thanks to Asus and Corsair for the build components | |

Full details of the reasons behind choosing the various components in the above build, as well as the details of our DAS test suite, can be found here.

26 Comments

voicequal - Saturday, July 12, 2014 - link

Why isn't the RAID 1 read performance closer to the RAID 0 read? Can't data be read from both drives in RAID 1?

PEJUman - Saturday, July 12, 2014 - link

While in general I agree with your sentiment, I thought about this question before, and one possible answer I came up with was to save the wear and tear on the 2nd drive, i.e. it only uses the 2nd drive when the 1st one has too much ECC.

This approach matches well with the RAID 1 goal of ultimate redundancy.

Ultimately, I wish more controllers would expose the finer details of RAID tuning, such as this option.

madmilk - Sunday, July 13, 2014 - link

Not for sequential reads, because RAID 1 isn't striped. On RAID 0 you can read alternating stripes from each drive sequentially, but with RAID 1 you'd be reading the data twice.

The random read scores are much closer between the two.
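To make the striping argument above concrete, here is a minimal sketch of how a logical offset might map onto the drives in each mode. The 128 KB stripe size and all function names are assumptions chosen for illustration; the review does not state the My Book Duo's actual stripe size or on-disk layout.

```python
from typing import List, Tuple

STRIPE = 128 * 1024  # assumed stripe size in bytes (hypothetical)
DRIVES = 2


def raid0_locate(logical_offset: int) -> Tuple[int, int]:
    """Return (drive index, offset on that drive) for a striped array."""
    stripe_index, within = divmod(logical_offset, STRIPE)
    drive = stripe_index % DRIVES                      # stripes alternate between drives
    physical = (stripe_index // DRIVES) * STRIPE + within
    return drive, physical


def raid1_locate(logical_offset: int) -> List[Tuple[int, int]]:
    """In a mirror, every drive holds the same byte at the same offset."""
    return [(drive, logical_offset) for drive in range(DRIVES)]


def raid0_bytes_per_drive(length: int) -> List[int]:
    """Bytes each drive supplies for a sequential read starting at offset 0."""
    counts = [0] * DRIVES
    offset = 0
    while offset < length:
        # Read up to the end of the current stripe or the end of the request.
        chunk = min(STRIPE - offset % STRIPE, length - offset)
        drive, _ = raid0_locate(offset)
        counts[drive] += chunk
        offset += chunk
    return counts


if __name__ == "__main__":
    print(raid0_locate(0), raid0_locate(STRIPE), raid0_locate(2 * STRIPE))
    print(raid1_locate(STRIPE))
    print(raid0_bytes_per_drive(4 * STRIPE))
```

In this model, consecutive RAID 0 stripes on the same drive sit back to back, so each drive reads one contiguous region that is half the size of the request. In the RAID 1 mapping each drive holds the whole file, so splitting a sequential read between the mirrors leaves gaps that each drive has to pass over.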

voicequal - Sunday, July 13, 2014 - link

I see your point that the reads won't be 100% sequential as seen by the drive heads, but if drive 1 starts reading at X and drive 2 at X+128KB, you can effectively get twice the read throughput over 256KB. Then you have to move the drive heads +128KB which does incur a performance cost.

Still with a sufficiently large read block size, I would think there could be a substantial performance improvement reading from both drives in RAID 1. Does anyone know a RAID1 HW or SW controller that can do this?

DanNeely - Sunday, July 13, 2014 - link

The time spent skipping ahead is equal to the time spent reading the area being skipped in a non-fragmented file. To double read speeds in a "mirrored" drive you'd need to have either the array controller or the driver in a software array store the file sectors as 02481357... on the first drive and 13570248... on the second, so that when reading the file the two drives are reading sequential sectors on the drive and alternating chunks of data in the file.

Cerb - Sunday, July 13, 2014 - link

No, you wouldn't. You'd just need to alternate drives for reads, keeping them balanced, so that a total QD of say, 6 would be QD=2-4 on one drive, and QD=2-4 on the other. Where the file data actually gets stored shouldn't matter, only how the RAID implementation decides to read it. If the reads are sufficiently sequential, both drives should be able to stay quite busy, and get read performance around that of RAID 0.

Most likely is that they didn't bother even trying that, as RAID 1 is not generally used for performance anyway.
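The disagreement in this sub-thread largely comes down to how expensive it is for a drive to pass over the chunks that its mirror partner is reading. The toy model below is only a sketch under stated assumptions: `skip_cost` is a hypothetical parameter (1.0 means skipping a byte takes as long as reading it, 0.0 means skipping is free), the 150 MB/s drive rate is an illustrative number rather than a measurement from the review, and seek latency, caching and queue depth are ignored.

```python
def sequential_read_seconds(total_bytes: float, drive_rate: float,
                            drives_used: int, skip_cost: float) -> float:
    """Estimated time for a sequential read split evenly across mirrored drives.

    Each drive reads its share and must pass over the rest of the file;
    skip_cost scales how expensive the passed-over bytes are (hypothetical).
    """
    if drives_used < 1:
        raise ValueError("drives_used must be at least 1")
    read_per_drive = total_bytes / drives_used
    skipped_per_drive = total_bytes - read_per_drive
    return (read_per_drive + skip_cost * skipped_per_drive) / drive_rate


if __name__ == "__main__":
    size = 1e9      # 1 GB file
    rate = 150e6    # assumed 150 MB/s per drive (illustrative only)
    print("one drive:            ", sequential_read_seconds(size, rate, 1, 1.0))
    print("two drives, skip=1.0: ", sequential_read_seconds(size, rate, 2, 1.0))
    print("two drives, skip=0.0: ", sequential_read_seconds(size, rate, 2, 0.0))
```

With `skip_cost` at 1.0 the model gives no benefit from using the second drive, which matches the argument that skipping costs as much as reading. With `skip_cost` at 0.0 it gives the RAID 0-like doubling, which is what an interleaved on-disk layout or sufficiently large read blocks would be aiming for. Real drives presumably fall somewhere in between.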

voicequal - Sunday, July 13, 2014 - link

Your approach would make sequential reads quite fast, but at the expense of sequential writes which would be split across different areas of the drive.

xfortis - Sunday, July 13, 2014 - link

This is a good question. I assume that most drives are set up in their controllers to present data sequentially from the beginning. I don't think it's very common that any type of program would ask a storage device for the second-half of a given file (at least not without having read the first half); I would think that the drive wouldn't have the capability within itself to address data beginning at an arbitrary point in a sequence of data - it always has to start at the beginning of the data(?).

I think to implement this you would need to segment your data at the storage/RAID controller level, like striping but each drive has all the stripes in a RAID 1. Then at the controller level the controller would be able to take a request for data and, assuming the requested data spans at least two segments, it can produce two or more starting-addresses for the drives to read. But then your segment-size would have to be tuned to the kind of data you have (like allocation units) and also then there would be an additional level of addressing abstraction/complexity that would make any kind of data-recovery very difficult.

Everything I just said may be wrong. I'm just making assumptions and inferences because it's fun. Let's get a volunteer who has more knowledge or feels like trawling wikipedia for a while!

voicequal - Sunday, July 13, 2014 - link

Yes, I'm thinking this would be best done at the controller level. I've seen operating systems apply their own striping of sorts at the filesystem (i.e. NTFS) level. Try writing two large files simultaneously to the same hard drive. On an OS like Windows 8, the throughput is surprisingly good. This can only be achieved if the OS is smart enough to use a reasonably large "chunk" size for writing the file fragments to the disk. In this way the disk sees mostly sequential write activity despite the two concurrent write operations, while the number of file fragments tracked by the filesystem is minimized.

TerdFerguson - Saturday, July 12, 2014 - link

If it can't connect directly to a router and it can't host a Plex server, I'm not interested.