AMD Kaveri Review: A8-7600 and A10-7850K Tested

by Ian Cutress & Rahul Garg on January 14, 2014 8:00 AM EST

TrueAudio

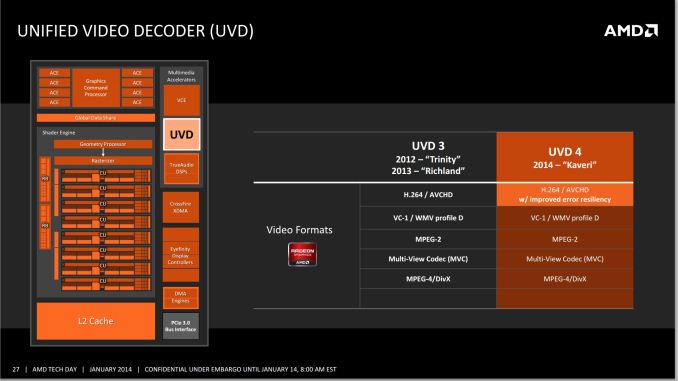

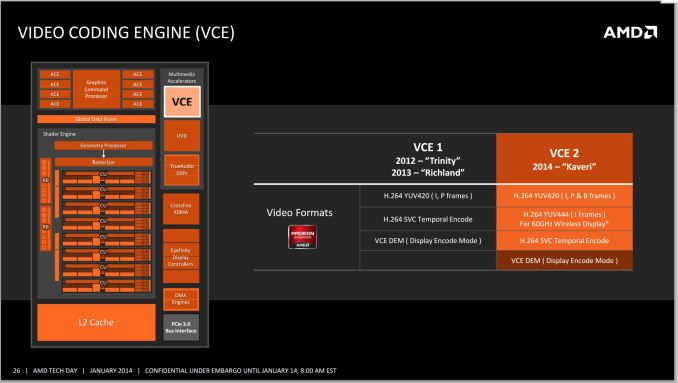

As part of the Kaveri package, AMD is also focusing on adding and updating their fixed function units / accelerators. Due to the jump on the GPU side to GCN we now have the TrueAudio DSP to allow developers to increase the audio capabilities in game, and both the Video Codec Engine (VCE) and Unified Video Decoder (UVD) have been updated.

All the major GPU manufacturers on the desktop side (AMD, NVIDIA, Intel) are pushing new technologies to help improve the experience of owning one of their products. There are clearly many ways to approach this – gaming, compute, content consumption, low power, high performance and so on. This is why we have seen features like FreeSync, G-Sync, QuickSync and OpenCL adoption become part of the fold for these graphics solutions.

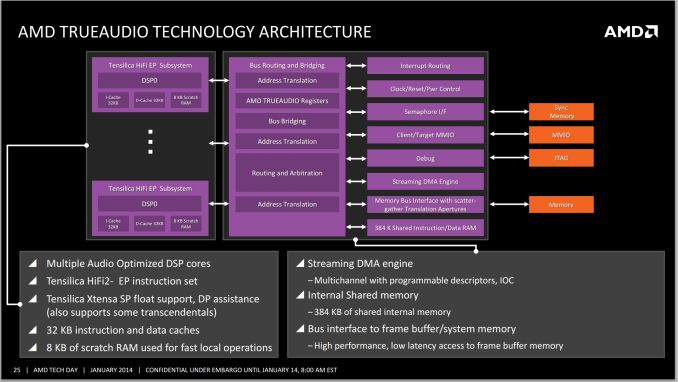

AMD’s new feature is TrueAudio - a fully programmable dedicated hardware element to offload audio tasks to.

The main problem with developing new tools is deciding whether they should be implemented in a general fashion or with a dedicated element. In practice this is the distinction between having a CPU or an ASIC do the work – if the type of work is specific and never changes, then an ASIC makes sense due to its small size, low power overhead and high throughput. A CPU wins out when the work is not clearly defined and might change, trading performance per watt for flexibility.

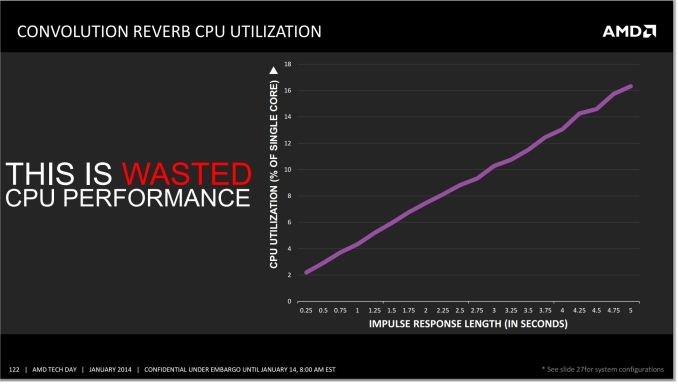

CPUs are now sufficiently powerful that a range of audio techniques are available to them, and the algorithms are well optimized. The only limitation in this regard is the imagination of the developer or audio artist, which actually becomes part of the problem. When applying an audio filter on the fly in a video game, processing on the CPU can be overly taxing, especially when the effect persists for a long time. The example AMD gave in their press slide deck is adding reverb to an audio sample: the longer the reverb, the bigger the draw on CPU resources.

AMD cites this CPU usage as the effect of one filter on one audio sample. Imagine being in a firefight situation in a video game, whereby there are many people running around with multiple gunshots, splatter audio and explosions occurring. Implementing effects on all, and then transposing audio location to the position of the character is actually computationally expensive, all for the sake of realism. This is where the TrueAudio unit comes into play – the purpose is to offload all of this onto a dedicated bit of silicon that has the pathways built in for quicker calculations.
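To make the reverb scaling concrete, here is a minimal sketch that assumes the reverb is a convolution reverb computed by direct time-domain convolution. The article does not say which algorithm AMD measured, so the 48 kHz sample rate, the toy impulse response and all function names below are illustrative assumptions, not AMD's method.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate in Hz; not taken from AMD's slides


def make_impulse_response(reverb_seconds, sample_rate=SAMPLE_RATE):
    """Toy exponentially decaying impulse response for a hypothetical room."""
    n = round(reverb_seconds * sample_rate)
    return [math.exp(-6.0 * i / n) for i in range(n)]


def convolve(signal, impulse):
    """Direct convolution; returns the wet signal and the multiply-add count."""
    out = [0.0] * (len(signal) + len(impulse) - 1)
    macs = 0
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse):
            out[i + j] += s * h
            macs += 1
    return out, macs


def macs_per_second(reverb_seconds, sample_rate=SAMPLE_RATE):
    """Multiply-adds per second of audio for one voice with direct convolution."""
    return sample_rate * round(reverb_seconds * sample_rate)


if __name__ == "__main__":
    for seconds in (0.5, 1.0, 2.0):
        print(f"{seconds:.1f} s tail: {macs_per_second(seconds):,} multiply-adds/s")
```

Under these assumptions the cost per voice grows linearly with the length of the reverb tail, and it multiplies again by the number of simultaneous sound sources. Production audio engines typically use FFT-based convolution or algorithmic reverbs, which are far cheaper than this naive loop, but the trend of longer tails costing more still holds.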

TrueAudio is also implemented on AMD's latest-generation R7 260 and R9 290 video cards – basically anything at least GCN 1.1 and up. Meanwhile we also know that the PS4’s audio DSP is based on TrueAudio, though given the insular nature of console development it's not clear whether the APIs are also the same on both platforms. AMD for their part is working with major audio middleware plugins (Wwise, Bink) in order to help develop the TrueAudio ecosystem, so even in the case where the APIs are dissimilar, middleware developers can abstract that and focus on the similarities in the hardware underneath.

As is usually the case for these additional hardware features, games will need to specifically be coded to use TrueAudio, and as such the benefits of TrueAudio will be game specific. At the same time there are not any games currently on the market that can take advantage of the feature, so the hardware is arriving before there is software ready to use it. The first three games on AMD's list that will support TrueAudio are Murdered: Soul Suspect, Thief, and Lichdom. Much like FreeSync, I expect the proof is in the pudding and we will have to wait to see how it can affect the immersion factor of these titles.

Unified Video Decoder and Video Codec Engine

I wanted to include some talk about the UVD and VCE with Kaveri as both are updated – we get UVD 4, an update to error resiliency for H.264, and VCE 2, as shown below:

Of the two blocks, the improved VCE has the more interesting improvements to discuss. With the addition of support for B frames in H.264 encoding, the resulting ability to do backwards frame prediction should help improve the image quality from VCE and/or reduce the required bitrate for any given quality level. Meanwhile the addition of support for the higher quality YUV444 color space in the H.264 encoder should help with the compression of line art and text, which in turn is important for the clarity of wireless displays.
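As a rough illustration of why YUV444 matters for text, the sketch below compares chroma sample counts for the standard 4:4:4, 4:2:2 and 4:2:0 formats and shows what halving chroma resolution does to a one-pixel-wide colored stroke. The function names, the 1080p frame size and the simple box-filter averaging are assumptions made for illustration; real encoders and displays use other filters, and nothing here reflects how VCE itself is implemented.

```python
# Horizontal and vertical chroma divisors for the standard subsampling formats.
CHROMA_DIVISORS = {"4:4:4": (1, 1), "4:2:2": (2, 1), "4:2:0": (2, 2)}


def samples_per_frame(width, height, chroma_format):
    """Return (luma, chroma) sample counts for one frame."""
    h_div, v_div = CHROMA_DIVISORS[chroma_format]
    luma = width * height
    chroma = 2 * (width // h_div) * (height // v_div)  # Cb plane plus Cr plane
    return luma, chroma


def halve_and_restore(chroma_row):
    """Average neighbouring pairs, then replicate each average back to two pixels.

    A simplified box filter standing in for horizontal chroma subsampling.
    """
    restored = []
    for i in range(0, len(chroma_row) - 1, 2):
        avg = (chroma_row[i] + chroma_row[i + 1]) / 2
        restored.extend([avg, avg])
    return restored


if __name__ == "__main__":
    for fmt in CHROMA_DIVISORS:
        luma, chroma = samples_per_frame(1920, 1080, fmt)
        print(fmt, "luma:", luma, "chroma:", chroma)
    # A one-pixel-wide colored stroke in an otherwise neutral row of chroma values.
    print(halve_and_restore([0, 0, 255, 0]))
```

In this toy row the single full-strength chroma value comes back at half strength spread over two pixels, which is the kind of color fringing that makes thin text look soft at 4:2:0 and that 4:4:4 encoding avoids.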

380 Comments


geniekid - Tuesday, January 14, 2014 - link

Would've been nice to see a discrete GPU thrown in the mix - especially with all that talk about Dual Graphics.

Ryan Smith - Tuesday, January 14, 2014 - link

Dual graphics is not yet up and running (and it would require a different card than the 6750 Ian had on hand).

Nenad - Wednesday, January 15, 2014 - link

I wonder if Dual Graphics can work with HSA, although I doubt due to cache coherence if nothing else.

While on HSA, I must say that it looks very promising. I do not have experience with AMD specific GPU programming, or with OpenCL, but I do with CUDA (and some AMP) - and ability to avoid CPU/GPU copy would be great advantage in certain cases.

Interesting thing is that AMD now have HW that support HSA, but does not yet have software tools (drivers, compilers...), while NVidia does not have HW, but does have software: in new CUDA, you can use unified memory, even if driver simulate copy for you (but that supposedly means when NVidia deliver HW, your unaltered app from last year will work and use advantage of HSA)

Also, while HSA is great step ahead, I wonder if we will ever see one much more important thing if GPGPU is ever to become mainstream: PREEMPTIVE MULTITASKING. As it is now, still programmer/app needs to spend time to figure out how to split work in small chunks for GPU, in order to not take too much time of GPU at once. It increase complexity of GPU code, and rely on good behavior of other GPU apps. Hopefully, next AMD 'unification' after HSA would be 'preemptive multitasking' ;p

tcube - Thursday, January 16, 2014 - link

Preemption, dynamic context switching is said to come with excavator core / Carrizo apu. And they do have the toolset for hsa/hsail, just look it up on amd's site, bolt i think it's called it is a c library.

Furthermore project sumatra will make java execute on the gpu. At first via a opencl wrapper then via hsa and in the end the jvm itself will do it for you via hsa. Oracle is pretty committed to this.

kazriko - Thursday, January 30, 2014 - link

I think where multiple GPU and Dual Graphics stuff will really shine is when we start getting more Mantle applications. With that, each GPU in the system can be controlled independently, and the developers could put GPGPU processes that work better with low latency to the CPU on the APU's built in GPU, and processes for graphics rendering that don't need as low of latency to the discrete graphics card.

Preemptive would be interesting, but I'm not sure how game-changing it would be once you get into HSA's juggling of tasks back and forth between different processors. Right now, they do have multitasking they could do by having several queues going into the GPU, and you could have several tasks running from each queue across the different CUs on the chip. Not preemptive, but definitely multi-threaded.

MaRao - Thursday, January 16, 2014 - link

Instead AMD should create new chipsets with dual APU sockets. Two A8-7600 APUs can give tremendous CPU and GPU performance, yet maintaining 90-100W power usage.

PatHeist - Thursday, February 13, 2014 - link

Making dual socket boards scale well is tremendously complex. You also need to increase things like the CPU cache by a lot. Not to mention that performance would tend to scale very badly with the additional CPU cores for things like gaming.

kzac - Monday, February 16, 2015 - link

Having 2 or more APUs on a logic board would defeat the purpose of having an APU in the first place, which was to eliminate processing being handled by the logic board controller. With dual APU sockets, there would need to be some controller interjected to direct work to the APUs which could create a bottleneck in processing time (clock cycles). This is the very reason for the existence of multi core APUs and CPUs of today.

It's my expectation that we will start to observe much more memory being added to the APU at some point, to increase throughput speeds. Essentially think of future APUs becoming a mini computer within, the only limitations currently to this issue are heat extraction and power consumption.

5thaccount - Tuesday, January 21, 2014 - link

I'm not so interested in dual graphics... I am really curious to see how it performs as a standard old-fashioned CPU. You could even bench it with an nVidia card. No one seems to be reviewing it as a processor. All reviews review it as an APU. Funny thing is, several people I work with use these, but they all have discrete graphics.

geniekid - Tuesday, January 14, 2014 - link

Nvm. Too early!