Futuremark Begins Delisting "Cheating" Mobile Devices from 3DMark Database

by Anand Lal Shimpi on November 26, 2013 9:37 PM EST. Posted in Smartphones, Mobile, Tablets

Over the summer, following a tip from @AndreiF7, we documented an interesting behavior on the Exynos 5 Octa versions of Samsung's Galaxy S 4. Upon detecting certain benchmarks the device would plug in all cores and increase/remove thermal limits, the latter enabling it to reach higher GPU frequencies than would otherwise be available in normal games. In our investigation we pointed out that other devices appeared to be doing something similar on the CPU front, while avoiding increasing thermal limits. Since then we've been updating a table in our reviews that keeps track of device behavior in various benchmarks.

It turns out there's a core group of benchmarks that seems to always trigger this special performance mode. Among them are AnTuTu and, interestingly enough, Vellamo. Other tests like 3DMark or GFXBench appear to be targeted as well, but far less frequently. As Brian discovered in his review of HTC's One max, the list of optimization/cheating targets seems to grow with subsequent software updates.

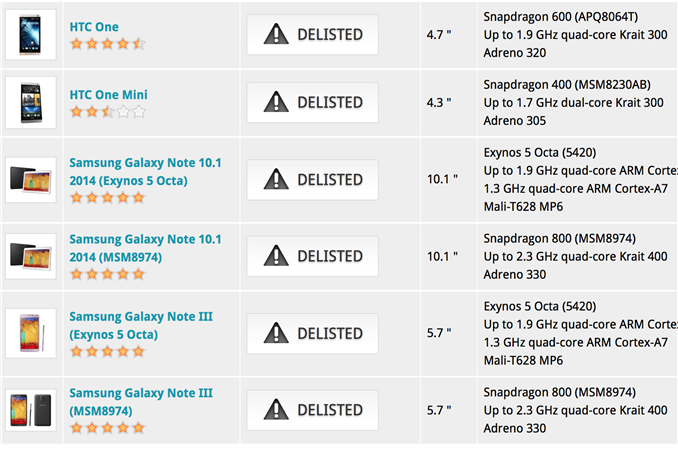

In response to OEMs effectively gaming benchmarks, we're finally seeing benchmark vendors take a public stand on all of this. Futuremark is the first to do something about it. Futuremark now flags and delists devices caught cheating from its online benchmark comparison tool. The only devices that are delisted at this point are the HTC One mini, HTC One (One max remains in the score list), Samsung Galaxy Note 3 and Samsung Galaxy Note 10.1 (2014 Edition). In the case of the Samsung devices, both Exynos 5 Octa and Qualcomm-based versions are delisted.

Obviously this does nothing to stop users from running the benchmark, but it does publicly reprimand those guilty of gaming 3DMark scores. Delisted devices are sent to the bottom of the 3DMark Device Channel and the Best Mobile Devices list. I'm personally very pleased to see Futuremark's decision on this and I hope other benchmark vendors follow suit. Honestly I think the best approach would be for the benchmark vendors to toss up a warning splash screen on devices that auto-detect the app and adjust behavior accordingly. That's going to be one of the best routes to end-user education of what's going on.
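The delisting rule described above, where flagged devices drop to the bottom of the list regardless of score, can be pictured as a two-part sort key. This is only a minimal sketch and not Futuremark's actual implementation: the `Entry` type, the device names and the scores are made-up placeholders, and keeping delisted devices ordered by score among themselves is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    device: str     # placeholder name, not a real device
    score: int      # placeholder score, not a measured result
    delisted: bool  # True if the device was flagged for benchmark detection


def rank(entries):
    """Sort by score, highest first, with every delisted device placed last."""
    # False sorts before True, so listed devices come first;
    # negating the score puts higher scores ahead within each group.
    return sorted(entries, key=lambda e: (e.delisted, -e.score))


entries = [
    Entry("device-a", 9000, delisted=True),
    Entry("device-b", 7000, delisted=False),
    Entry("device-c", 8000, delisted=False),
]

ranked = [e.device for e in rank(entries)]
print(ranked)  # ['device-c', 'device-b', 'device-a']
```

Here the hypothetical device-a has the highest score but still lands last, which is the point of the reprimand: the result is not hidden from the user who ran it, it just no longer counts in the comparison.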

Ultimately, I'd love to see the device OEMs simply drop the silliness and treat benchmarks like any other application (alternatively, exposing a global toggle for their benchmark/performance mode would be an interesting compromise). We're continuing to put pressure on device makers, but the benchmark vendors doing the same will surely help.

Source: Futuremark

46 Comments


jkhoward - Tuesday, November 26, 2013

Way to go Futuremark!

ddriver - Wednesday, November 27, 2013

So, delisting devices which toggle their CPU to run at top frequency during the test, while it is completely OK to have Intel processors overclock themselves by hundreds of MHz? That doesn't seem objective and sharply contrasts with the alleged "plea for objectivity" the delisting is supposed to stand for...

I think someone should check recent financial transactions from Apple to Futuremark, since this is obviously yet another PR stunt from Apple to damage its main competitors.

Rogess - Wednesday, November 27, 2013

I don't get the first part of your comment. Do you mean the turbo boost clock speed of Intel-based CPUs? That wouldn't be cheating if the CPU behaves similarly in all applications, as the CPU is just using the thermal headroom available.

And do you have any references for linking Apple to the delisting of devices by Futuremark? I would be careful to claim something like that even though I'd support any company that tries to level the playing field.

ddriver - Wednesday, November 27, 2013

The reason Samsung lock the CPU governor at max clocks for the duration of the tests is because UNLIKE real world scenarios, those benchmarks are really really short. As AT's own article showed, they are only a few milliseconds each, which is way too short a time for the governor to ramp up the clocks, so the benchmarks actually run at a lower clock speed. That is not what an actual real life application would do when faced with a computationally intensive load, which is sustained 99% of the time.

By locking the CPU to maximum clocks, Samsung ensure that the benchmark will measure the actual performance of the chip when faced with a heavy workload instead of its performance at an idling lower clock state. The reality of the situation is exactly the opposite of your claim, as any real life computationally intensive application will keep the CPU on its toes, running at maximum frequency, just like Samsung have provisioned for the benchmark tests.

My reference is obviousness, just look at the very image AT has deemed most fit to put on top of this article, it contains all the devices that sell the most and take the most $$$ away from Apple. Naturally, since Futuremark is a Finnish company, looking at Nokia might be more logical, and Nokia also have stuff to gain from tarnishing the name of their competitors, but nowhere nearly as much as Apple.

And this is most certainly not an act of leveling the field, it is a cheap act that only aims to make more money for greedy and exploitative corporations.

But hey, if anything, it just shows how desperate Apple is, for having to resort to such lowly stunts under fear of a healthy competition.

thunng8 - Wednesday, November 27, 2013

3dmark is not short

Black Obsidian - Wednesday, November 27, 2013

It's unfortunate that you can't understand the difference between "boosting CPU speed for benchmarks while providing ABSOLUTELY NO BENEFIT to actual user programs" and "boosting CPU speed for all programs equally."

Rather than try to come up with an Intel-like solution that actually benefits users, Samsung has decided to be lazy and just cheat on specific benchmarks. I can't tell if you just don't understand what Samsung is actually doing, or if you're trying to lay some sweet, sweet astroturf. Either way, good luck with that.

lukarak - Wednesday, November 27, 2013

Actual real life applications also have short jumps in CPU demand, you are not doing 3 hour 100% utilization renderings on your phone, but rather various R2S scenarios.

By cheating, they are giving a false impression of the performance of their devices, as the measured performance is unavailable to the user. Unlike Intel's Turbo Boost.

steven75 - Wednesday, November 27, 2013

"And this is most certainly not an act of leveling the field, it is a cheap act that only aims to make more money for greedy and exploitative corporations like Samsung.

But hey, if anything, it just shows how desperate Samsung is, for having to resort to such lowly stunts as cheating on benchmarks under fear of a healthy competition."

T;FTFY

Solandri - Wednesday, November 27, 2013

I agree this type of cheating on benchmarks is harmful (because benchmarks are supposed to be an objective measure of performance comparable between products). But this behavior is hardly a "lowly stunt." It's actually commonplace and the accepted norm pretty much everywhere else. I know because I usually don't abide by that norm.

- I didn't study for the SAT or GRE, I took them cold - they're supposed to measure what I know and how I think, not what I studied for.

- For the most part I didn't cram for tests at school. Either I knew the material or I didn't. If I knew I didn't know the material well enough, I would study it regardless of if there was a test.

- Unless my manager overrides me, I don't specially prepare my work projects for a dog and pony show. What you see is exactly what I have, nothing prepared to hide the warts and flaws. I'll do a couple run-throughs to make sure it can actually perform the behavior we want to demonstrate, but I'm not gonna hide anything that doesn't work.

- I usually don't clean my house when expecting company. Any cleaning I do is to make entertaining the guests easier, not to impress them with the cleanliness of my abode.

- I'm not on my best behavior to try to impress a girl on a date. I act like I normally do.

"Put your best foot forward" seems to be a common rule of human social behavior, so it's hardly surprising that some would try to mis-apply it to benchmarks.

KoolAidMan1 - Friday, November 29, 2013

I'm blown away at the level of false equivocation here, wow.