The NVIDIA GeForce GTX 780 Ti Review

by Ryan Smith on November 7, 2013 9:01 AM EST

Hands On With NVIDIA's Shadowplay

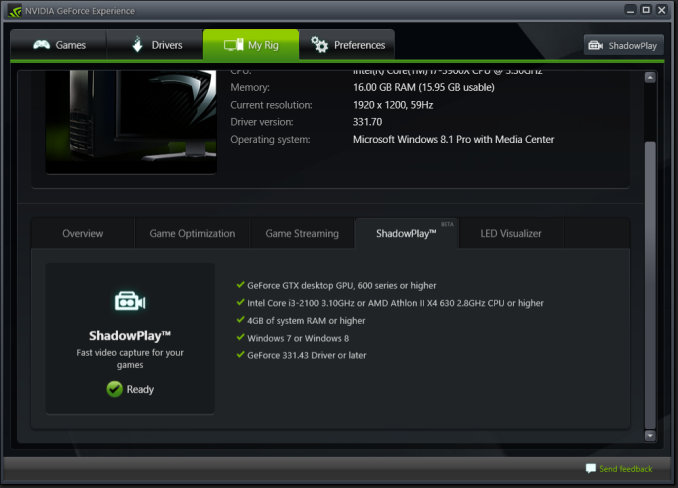

Though it’s technically not part of the GeForce GTX 780 Ti launch, before diving into our typical collection of benchmarks we wanted to spend a bit of time looking at NVIDIA’s recently released Shadowplay utility.

Shadowplay was, coincidentally enough, first announced back at the launch of the GTX 780. Its designed purpose was to offer advanced game recording capabilities beyond what traditional tools like FRAPS could offer by leveraging NVIDIA’s image capture and video encode hardware. In doing so, Shadowplay would be able to offer similar capabilities with much less overhead, all the while using the NVENC hardware encoder to produce space efficient H.264 rather than the bulky uncompressed formats traditional tools offer.

With Shadowplay and NVIDIA’s SHIELD streaming capabilities sharing so much of the underlying technology, the original plan was to launch Shadowplay in beta form shortly after SHIELD launched. However Shadowplay ended up being delayed, ultimately not getting its beta release until last week (October 28th). NVIDIA has never offered a full accounting for the delay, but one of the most significant reasons was that they were unsatisfied with their original video container choice, M2TS. M2TS containers, though industry standard and well suited for this use, have limited compatibility, with Windows Media Player in particular being a thorn in NVIDIA’s side. As such NVIDIA held back Shadowplay in order to convert it over to using MP4 containers, which have a very high compatibility rate at the cost of requiring some additional work on NVIDIA’s part.

In any case, with the container issue resolved Shadowplay is finally out in beta, giving us our first chance to try out NVIDIA’s game recording utility. To that end, while it is clearly still a beta and in need of further polishing and some feature refinements, at its most basic level we’ve come away impressed with Shadowplay, with NVIDIA having delivered on all of their earlier core promises for the utility.

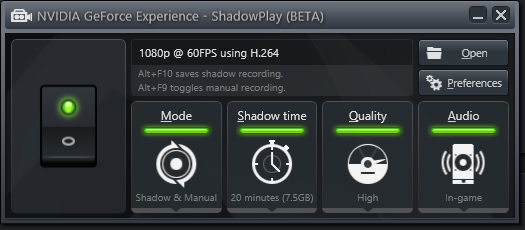

In terms of functionality, all of Shadowplay’s basic features are in. The utility offers two recording modes: a manual mode and a shadow mode. The former is self-explanatory, while the latter is an always-active rolling buffer of up to 20 minutes that allows saving the buffer after the fact in a DVR-like fashion. Saving the shadow buffer causes the entirety of the buffer to be saved and a new buffer started, while manual mode can be started and stopped as desired.
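To make the shadow mode behavior concrete, here is a minimal sketch of a rolling buffer with save-and-restart semantics. It is only an illustration of the idea described above, not NVIDIA's implementation: the `ShadowBuffer` class, its method names, and the use of integers as stand-ins for encoded frames are all hypothetical.

```python
from collections import deque


class ShadowBuffer:
    """Toy rolling buffer that keeps only the most recent `max_seconds` of frames.

    Hypothetical illustration; frames are plain integers here, not encoded video.
    """

    def __init__(self, max_seconds, fps=60):
        # A deque with maxlen drops its oldest entry once it is full,
        # which gives the "always-active rolling window" behavior.
        self.frames = deque(maxlen=max_seconds * fps)

    def push(self, frame):
        self.frames.append(frame)

    def save(self):
        """Return everything currently buffered and start a fresh buffer."""
        saved = list(self.frames)
        self.frames.clear()
        return saved


buf = ShadowBuffer(max_seconds=2, fps=60)  # 120-frame window
for i in range(300):                       # "play" for 5 seconds at 60fps
    buf.push(i)
clip = buf.save()                          # only the last 2 seconds survive
print(len(clip), clip[0], clip[-1])
```

In this toy version a save returns the last two seconds of "gameplay" and leaves an empty buffer behind, mirroring how saving the shadow buffer writes out the whole buffer and starts a new one.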

| Shadowplay Average Bitrates | |
| --- | --- |
| High Quality | 52Mbps |
| Medium Quality | 23Mbps |
| Low Quality | 16Mbps |

Next to being able to control the size of the shadow buffer, Shadowplay’s other piece of significant flexibility comes through the ability to set the quality (and therefore file size) of the recordings Shadowplay generates. Since Shadowplay uses lossy H.264 the recording bitrates will scale with the quality, with Shadowplay offering 3 quality levels: high (52Mbps), medium (23Mbps), and low (16Mbps). Choosing between the quality levels will depend on the quality needed and what the recording is intended for, due to the large difference in quality and size. High quality is as close as Shadowplay gets to transparent compression, and with its large file sizes is best suited for further processing/transcoding. Otherwise Medium and Low are low enough bitrates that they’re reasonably suitable for distribution as-is, however there is a distinct quality tradeoff in using these modes.
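For a rough sense of what these bitrates mean on disk, the arithmetic can be sketched as follows. This assumes the quoted figures are average video bitrates with 1Mbps taken as 10^6 bits per second, and it ignores audio and container overhead, so the results are ballpark estimates rather than measured file sizes. The function name is our own.

```python
def recording_size_gb(bitrate_mbps, minutes):
    """Approximate size in gigabytes (10^9 bytes) of a recording
    at a given average bitrate. Assumes 1 Mbps = 10^6 bits/s."""
    bits = bitrate_mbps * 1_000_000 * minutes * 60
    return bits / 8 / 1_000_000_000


# Average bitrates quoted in the article for each quality level.
BITRATES_MBPS = {"high": 52, "medium": 23, "low": 16}

for name, mbps in BITRATES_MBPS.items():
    size = recording_size_gb(mbps, minutes=20)
    print(f"{name}: about {size:.2f} GB for a full 20 minute shadow buffer")
```

Under those assumptions a full 20 minute buffer comes to roughly 7.8GB at high quality, 3.45GB at medium, and 2.4GB at low.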

Moving on, while Shadowplay offers a range of quality settings for recording, at this moment it only offers a single resolution and framerate: 1080p at 60fps. Neither the frame rate nor the resolution is currently adjustable, so regardless of the resolution you record from, the output will be resized to 1920x1080 and recorded at 60fps. This unfortunately is an aspect-ratio unaware resize too, so even non-16:9 resolutions such as 1920x1200 or 2560x1600 will be stretched to fit 1080p. At this time this is really the only weak point for Shadowplay; while the NVENC encoder undoubtedly presents some limitations, the inability to record at a lower resolution or in an aspect ratio compliant manner is something we’d like to see NVIDIA address in the final version of the utility.
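As an illustration of what an aspect ratio compliant resize would involve, the sketch below computes the scaled size and padding needed to fit a source resolution inside a 1920x1080 frame without distortion. This is a generic letterbox/pillarbox calculation, not something Shadowplay currently does, and the `fit_within` helper is a hypothetical name.

```python
from fractions import Fraction


def fit_within(src_w, src_h, dst_w=1920, dst_h=1080):
    """Scale a source frame to fit inside the destination while keeping
    its aspect ratio. Returns (width, height, pad_x, pad_y), where the
    padding is the border added on each side."""
    # Use the smaller of the two scale factors so nothing is cropped.
    scale = min(Fraction(dst_w, src_w), Fraction(dst_h, src_h))
    w = int(src_w * scale)
    h = int(src_h * scale)
    return w, h, (dst_w - w) // 2, (dst_h - h) // 2


for res in [(1920, 1200), (2560, 1600), (1920, 1080)]:
    print(res, "->", fit_within(*res))
```

For both 16:10 examples from the text, the picture would occupy 1728x1080 with 96 pixel bars on the left and right. A plain stretch to 1920x1080 instead widens the image by a factor of 10/9 relative to its height.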

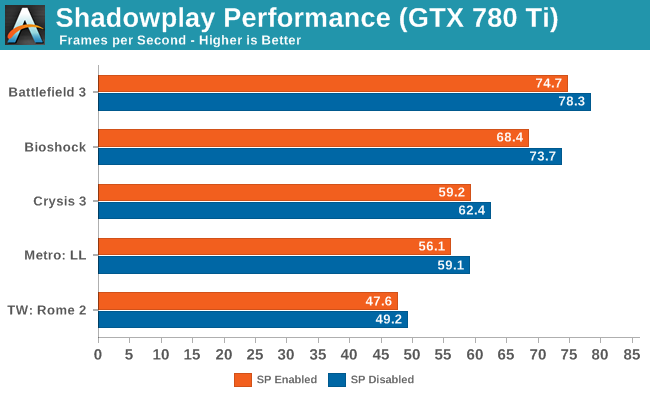

Finally, let’s talk about performance. One of Shadowplay’s promises was that the overhead from recording would be very low – after all, it needs to be low enough to make always-on shadow mode viable – and this is another area where the product lives up to NVIDIA’s claims. To be sure there’s still some performance degradation from enabling Shadowplay, about 5% by our numbers, but this is small enough that it should be tolerable. Furthermore Shadowplay doesn’t require capping the framerate like FRAPS does, so it’s possible to use Shadowplay and still maintain framerates over 60fps. Though as is to be expected, this will introduce some frame skipping in the captured video, since Shadowplay will have to skip some frames to keep within its 60fps recording limit.
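The frame skipping can be illustrated with a small model. The sketch assumes an idealized game rendering at a perfectly steady rate and a recorder that grabs the most recently finished frame at each 60fps tick; how Shadowplay actually selects frames is not documented here, so treat `captured_frames` as a hypothetical stand-in.

```python
def captured_frames(render_fps, capture_fps, seconds):
    """Indices of rendered frames that a fixed-rate capture would pick up,
    assuming evenly spaced frames and "latest finished frame" selection."""
    picked = []
    for tick in range(capture_fps * seconds):
        # Integer math avoids floating point drift between the two clocks.
        picked.append(tick * render_fps // capture_fps)
    return picked


frames = captured_frames(render_fps=90, capture_fps=60, seconds=1)
skipped = 90 - len(set(frames))
print(frames[:6], "skipped:", skipped)
```

In this model a game running at 90fps has every third rendered frame dropped from the recording, while the game itself still displays all 90.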

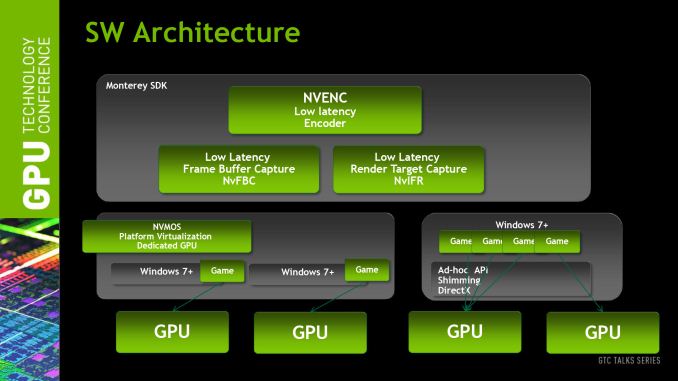

On a related note, we did some digging for a technical answer for why Shadowplay performs as well as it does, and found our answer in an excellent summary of Shadowplay by Alexey Nicolaychuk, the author of RivaTuner and its derivatives (MSI Afterburner and EVGA Precision). As it turns out, although the NVENC video encoder plays a part in that – compressing the resulting video and making the resulting stream much easier to send back to the host and store – that’s only part of the story. The rest of Shadowplay’s low overhead comes from the fact that NVIDIA also has specific hardware and API support for the fast capture of frames built into Kepler GPUs. This functionality was originally intended to facilitate GRID and game streaming, but it can just as well be utilized for game recording (after all, what is game recording but game streaming to a file instead of another client?).

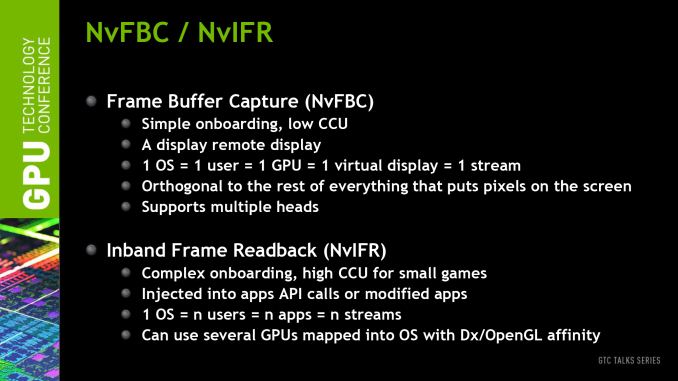

This functionality is exposed as Frame Buffer Capture (NVFBC) and Inband Frame Readback (NVIFR). NVFBC allows Shadowplay to pull finished frames straight out of the frame buffer at a low level, as opposed to having to traverse the graphics APIs at a high level. Meanwhile NVIFR does have to operate at a slightly higher level in order to inject itself into the graphics API, but in doing so it gains the flexibility to capture images from render targets as opposed to just frame buffers. Based on what we’re seeing we believe that NVIDIA is using NVFBC for Shadowplay, which would be the lowest overhead option while also explaining why Shadowplay can only capture full screen games and not windowed mode games, as frame buffer capturing is only viable when a game has exclusive control over the frame buffer.

Wrapping things up, it’s clear that NVIDIA still has some polishing they can apply to Shadowplay, and while they aren’t talking about the final release this soon, as a point of reference it took about 4 months for NVIDIA’s SHIELD game streaming component to go from beta to a formal, finished release. In the interim however it’s already in a very usable state, and it should be worth keeping an eye on in the future to see what else NVIDIA does to further improve the utility.

The Test

The press drivers for the launch of the GTX 780 Ti are release 331.70, which aside from formally adding support for the new card are identical to the standing 331.65 drivers.

Meanwhile on a housekeeping note, we want to quickly point out that we’ll be deviating a bit from our normal protocol and including the 290X results for both normal (quiet) and uber modes. Typically we’d only include results from the default mode in articles such as these, but since we need to cover SLI/Crossfire performance and since we didn’t have 290X CF quiet mode results for our initial 290X review, we’re throwing in both so that we can compare the GTX 780 Ti to the 290X CF without being inconsistent by suddenly switching to the lower performance quiet mode numbers. Though with that said, for the purposes of our evaluation we will be focusing almost entirely on the quiet mode numbers, given the vast difference in both performance and noise that comes from using it.

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 290X, AMD Radeon R9 290, XFX Radeon R9 280X Double Dissipation, AMD Radeon HD 7990, AMD Radeon HD 7970, NVIDIA GeForce GTX Titan, NVIDIA GeForce GTX 780 Ti, NVIDIA GeForce GTX 780, NVIDIA GeForce GTX 770 |
| Video Drivers: | NVIDIA Release 331.58 WHQL, NVIDIA Release 331.70 Beta, AMD Catalyst 13.11 Beta v1, AMD Catalyst 13.11 Beta v5, AMD Catalyst 13.11 Beta v8 |
| OS: | Windows 8.1 Pro |

302 Comments


Wreckage - Thursday, November 7, 2013

The 290X = Bulldozer. Hot, loud, power hungry and unable to compete with an older architecture.

Kepler is still king even after being out for over a year.

trolledboat - Thursday, November 7, 2013

Hey look, it's a comment from a permanently banned user at this website for trolling, done before someone could have even read the first page.

Back in reality, very nice card, but sorely overpriced for such a meagre gain over 780. It also is slower than the cheaper 290x in some cases.

Nvidia needs more price cuts right now. 780 and 780ti are both badly overpriced in the face of 290 and 290x

neils58 - Thursday, November 7, 2013

I think Nvidia probably have the right strategy, G-Sync is around the corner and it's a game changer that justifies the premium for their brand - AMD's only answer to it at this time is going crossfire to try and ensure >60FPS at all times for V-Sync. Nvidia are basically offering a single card solution that even with the brand premium and G-sync monitors comes out less expensive than crossfire. 780Ti for 1440p gamers, 780 for 1920p gamers.

Kamus - Thursday, November 7, 2013

I agree that G-Sync is a gamechanger, but just what do you mean AMD's only answer is crossfire? Mantle is right up there with g-sync in terms of importance. And from the looks of it, a good deal of AAA developers will be supporting Mantle.

As a user, it kind of sucks, because I'd love to take advantage of both.

That said, we still don't know just how much performance we'll get by using mantle, and it's only limited to games that support it, as opposed to G-Sync, which will work with every game right out of the box.

But on the flip side, you need a new monitor for G-Sync, and at least at first, we know it will only be implemented on 120hz TN panels. And not everybody is willing to trade their beautiful looking IPS monitor for a TN monitor, specially since they will retail at $400+ for 23" 1080p.

Wreckage - Thursday, November 7, 2013

Gsync will work with every game past and present. So far Mantle is only confirmed in one game. That's a huge difference.

Basstrip - Thursday, November 7, 2013

TLDR: When considering Gsync as a competitive advantage, add the cost of a new monitor. When considering Mantle support, think multiplatform and think next-gen consoles having AMD GPUs. Another plus side for NVidia is shadowplay and SHIELD though (but again, added costs if you consider SHIELD).

Gsync is not such a game changer as you have yet to see both a monitor with Gsync AND its pricing. The fact that I would have to upgrade my monitor and that the Gsync branding will add another few $$$ on the price tag is something you guys have to consider.

So to consider Gsync as a competitive advantage when considering a card, add the cost of a monitor to that. Perfect for those that are going to upgrade soon but for those that won't, Gsync is moot.

Mantle on its plus side will be used on consoles and pc (as both PS4 and Xbox One have AMD processors, developers of games will most probably be using it). You might not care about consoles but they are part of the gaming ecosystem and sadly, we pc users tend to get shafted by developers because of consoles. I remember Frankieonpc mentioning he used to play tons of COD back in the COD4 days and said that development tends to have shifted towards consoles so the tuning was a bit more off for pc (paraphrasing slightly).

I'm in the market for both a new monitor and maybe a new card so I'm a bit on the fence...

Wreckage - Thursday, November 7, 2013

Mantle will not be used on consoles. AMD already confirmed this.

althaz - Thursday, November 7, 2013

Mantle is not used on consoles...because the consoles already have something very similar.

Kamus - Thursday, November 7, 2013

You are right, consoles use their own API for GCN, guess what mantle is used for?

*spoiler alert* GCN

EJS1980 - Thursday, November 7, 2013

Mantle is irrefutably NOT coming to consoles, so do your due diligence before trying to make a point. :)