The Xeon Phi at work at TACC

by Johan De Gelas on November 14, 2012 1:44 PM EST - Posted in Cloud Computing, CPUs, IT Computing, Intel, Larrabee, Xeon, Xeon Phi

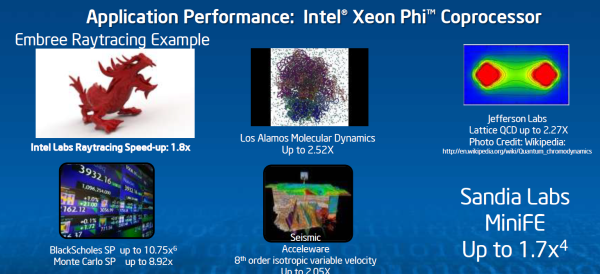

One of the big selling points of the Xeon Phi is that you can simply run multi-threaded Xeon code on it. If you want to get decent performance out of the Xeon Phi, that code should be compiled with the Intel C or Fortran compiler and linked against the Intel MKL math libraries. In that case, Intel claims many "typical applications" get about 2 to 2.5 times higher performance with the Xeon Phi. A few exceptions get more.

That is an impressive performance boost, but not earth-shattering. These numbers are much more realistic than the typical 100x benchmarks that are thrown around by the GPU folks. Those benchmarks typically compare single-threaded, non-SIMD binaries running on a CPU to a fully threaded, carefully tuned application running on a GPU.
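To make the baseline problem concrete, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that the CPU binary could have scaled perfectly across every core and every SIMD lane; real code rarely does, so the result is a lower bound on the fair speedup rather than a measurement. The function name and the example inputs (8 cores, 8 single-precision lanes per 256-bit AVX register) are assumptions and do not come from any published benchmark.

```python
def tuned_baseline_speedup(headline_speedup, cpu_cores, simd_lanes):
    """Rescale a speedup that was measured against a single-threaded,
    non-SIMD CPU binary.

    Assumption: the CPU code would scale perfectly across cores and SIMD
    lanes. That is optimistic, so the value returned is a lower bound on
    the speedup against a properly tuned CPU baseline.
    """
    if cpu_cores < 1 or simd_lanes < 1:
        raise ValueError("cpu_cores and simd_lanes must be at least 1")
    ideal_cpu_gain = cpu_cores * simd_lanes  # threading gain times SIMD gain
    return headline_speedup / ideal_cpu_gain


# Hypothetical inputs: a "100x" claim against an 8-core CPU with 8-wide SIMD.
print(tuned_baseline_speedup(100.0, cpu_cores=8, simd_lanes=8))  # 1.5625
```

Under these idealized assumptions a headline 100x shrinks to roughly 1.6x, which is in the same range as the 2 to 2.5x that Intel quotes for the Xeon Phi.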

The question remains in which applications a cheaper quad-CPU solution is more effective. Before the Xeon E5 (Sandy Bridge EP) came out, AMD was quite successful with its less expensive quad-CPU platforms in the HPC world. It will be interesting to compare the performance per dollar and performance per watt of such quad-CPU platforms with a CPU + Phi solution. There are certainly applications where the CPU + Phi wins hands down, but we are willing to bet that there are lots of HPC applications where it is a close call (e.g. highly threaded, but harder to vectorize code).

The point is of course that the time investment to get there is a lot lower than is the case with CUDA on NVIDIA's Tesla K20. We have heard from several companies that debugging CUDA code is still a pretty daunting experience. One good example can be found here. The maturity of the Intel compilers and high performance software is a big plus for the Xeon Phi. The numerous papers and OpenMP-to-CUDA frameworks and translators clearly indicate that porting OpenMP applications to CUDA is not necessarily straightforward. That is in contrast with the Xeon Phi, where existing OpenMP applications run faster on the Xeon Phi without a recompile. OpenMP is simply the ecosystem where the Xeon Phi thrives, and Intel has an excellent track record when it comes to supporting OpenMP in its compilers.

The Xeon Phi might also prove to be a bit more flexible and forgiving. At a high level, the Xeon Phi architecture still resembles a general purpose Xeon core. We're talking about 60 in-order x86 cores with wider SIMD units, each with a 512KB L2 feeding 4 threads.
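The totals implied by those per-core figures are easy to work out. The sketch below uses only the numbers quoted above (60 cores, 4 threads per core, 512KB of L2 per core); the function name is made up for illustration, and the even split of L2 between threads is a simplifying assumption, since the hardware threads of a core share the cache dynamically.

```python
CORES = 60              # in-order x86 cores, as quoted in the article
THREADS_PER_CORE = 4    # hardware threads fed by each core's L2
L2_PER_CORE_KB = 512    # L2 cache per core, in KB


def phi_totals(cores=CORES, threads_per_core=THREADS_PER_CORE,
               l2_per_core_kb=L2_PER_CORE_KB):
    """Derive chip-wide totals from the per-core figures."""
    return {
        "hardware_threads": cores * threads_per_core,
        "total_l2_mb": cores * l2_per_core_kb / 1024,
        # Assumption: L2 divided evenly between a core's threads.
        "l2_per_thread_kb": l2_per_core_kb / threads_per_core,
    }


print(phi_totals())
# {'hardware_threads': 240, 'total_l2_mb': 30.0, 'l2_per_thread_kb': 128.0}
```

So a threaded application needs on the order of 240 runnable threads to fill the card, and each of those threads has roughly 128KB of L2 to work with.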

GPUs on the other hand are built for more "extreme" parallelism: hundreds of stream processors, with small shared L1-caches and one relatively small L2-cache.

We'll have to hold final judgement until we get a Xeon Phi-equipped system in house, but our first impression is that the Xeon Phi looks like a more cost-effective, potentially easier-to-use alternative to high-end GPUs for HPC.

46 Comments


tipoo - Wednesday, November 14, 2012 - link

I wonder if we'll ever have more numerous smaller cores like these working in conjunction with larger traditional cores. A bit like the PPE and SPEs in the Cell processor, with the more general core offloading what it can to the smaller ones.

A5 - Wednesday, November 14, 2012 - link

That's called heterogeneous computing. It's definitely where things are going in the future and you can argue that it's already here with Trinity.

nevertell - Wednesday, November 14, 2012 - link

The great thing about the Cell was that both the PPE and the SPEs had access to the same memory. Trinity doesn't, and while that may be because there isn't an OS that would take advantage of that, hardware is only as capable as the software written for that exact hardware is efficient.

There is no need for major parallelism in the consumer space, since nobody is willing to rewrite their programs to run on something faster whilst the general public is already served well enough by a Core i3 or i5.

name99 - Friday, November 16, 2012 - link

"The great thing about the Cell was that both the PPE and the SPEs had access to the same memory."

Hmm. This is not a useful statement.

Cell had a ludicrous addressing model that was clearly irrelevant to the real world. It's misleading to say that the cores had access to "the same memory". The way it actually worked was that each core had a local address space (I think 12 bits wide, but I may be wrong, maybe 14 bits wide) and almost every instruction operated in that local address space. There were a few special purpose instructions that moved data between that local address space and the global address space. Think of it as like programming with 8086 segments, only you have only one data segment (no ES, no SS), you can't easily swap DS to access another segment, and the segment size is substantially smaller than 64K.

Much as I dislike many things about Intel, more than anyone else they seem to get that hardware that can't be programmed is not especially useful. And so we see them utilizing ideas that are not exactly new (this design, or transactional memory) but shipping them in a form that's a whole lot more useful than what went before.

This will get the haters on all sides riled up, but the fact is --- this is very similar to what Apple does in their space.

dcollins - Wednesday, November 14, 2012 - link

That's exactly how this supercomputer, and all supercomputers offering accelerated compute, work. Xeon or Opteron CPUs handle complex branching tasks like networking and work distribution while the accelerators handle the parallelizable problem solving work.

Merging them onto a single die is simply a matter of having enough die space to fit everything while making sure that economics of a single chip is better than separate products.

tipoo - Wednesday, November 14, 2012 - link

*in consumer computing I mean.

Gigaplex - Wednesday, November 14, 2012 - link

Both AMD Fusion and Intel Ivy Bridge support this right now. The software just needs to catch up.

tipoo - Wednesday, November 14, 2012 - link

Sort of, I suppose, but I think something like this would be easier to use for most compute tasks for the reasons the article states: these are still closer to general processor cores than GPU cores are.

frostyfiredude - Wednesday, November 14, 2012 - link

Something like ARM's big.LITTLE in a sense seems like a good idea to me. I'm not sure how feasible it is, but having one or two small Atom-like cores paired to larger and more complex Core processing cores all sharing the same L3 sounds like a decent idea for mobile CPUs to cut idle power use. My guess is the two types of cores would need to share the same instructions, so the differences would be things like OoO vs in-order, execution width, and being designed for low clock speed vs high clock speed. The Atom SoCs can hit power use around that of ARM SoCs, so if Intel can get that kind of super low power use at low loads and ULV i7 performance out of the same chip when stressed, that'd be super killer.

CharonPDX - Thursday, November 15, 2012 - link

One rumor I had heard upon Larrabee getting cancelled and turned into Knights Ferry was that this technology might be released as a coprocessor that used the same socket as the "main" Xeon, so that you could mix and match them in one system. If you wanted maximum conventional performance, you put in 8 conventional Xeons. If you wanted maximum stream performance, you'd put in one "boot" conventional Xeon, and 7 of these. (At the time, there were also rumors that Itanium was going to be same-socket-and-platform, which now looks like it will come true.)