AMD A10-5800K & A8-5600K Review: Trinity on the Desktop, Part 1

by Anand Lal Shimpi on September 27, 2012 12:00 AM EST

Compute & Synthetics

One of the major promises of AMD's APUs is the ability to harness the incredible on-die graphics power for general purpose compute. While we're still waiting for the holy grail of heterogeneous computing applications to show up, we can still evaluate just how strong Trinity's GPU is at non-rendering workloads.

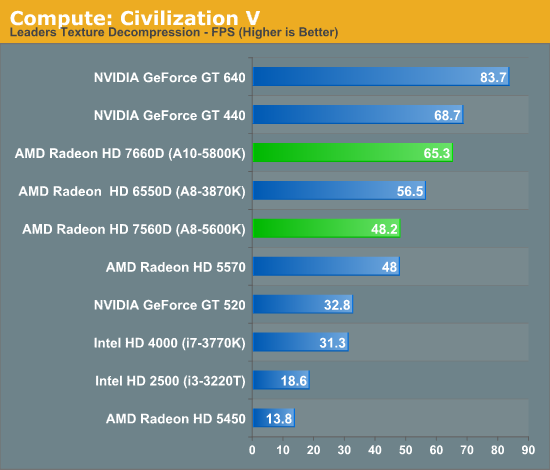

Our first compute benchmark comes from Civilization V, which uses DirectCompute 5 to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of its texture decompression algorithm by repeatedly decompressing the textures required for one of the game's leader scenes. Games that use GPU compute for texture decompression are still rare, but the technique is becoming more common because it lets developers pack textures in whatever format best suits shipping rather than being limited to DX texture compression.

Similar to what we've already seen, Trinity offers a 15% increase in performance here compared to Llano. The compute advantage here over Intel's HD 4000 is solid as well.

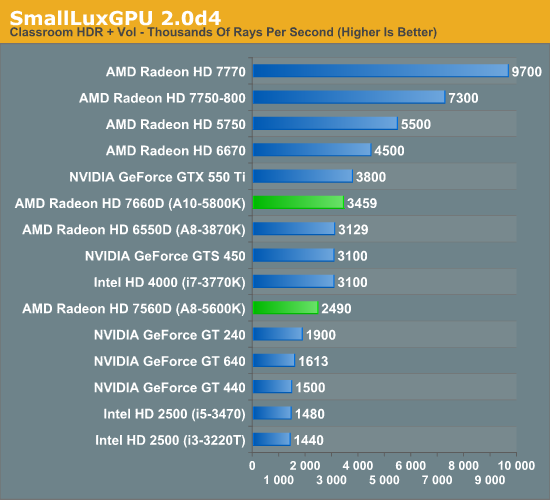

Our next benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. We're now using a development build from the version 2.0 branch, and we've moved on to a more complex scene that hopefully will provide a greater challenge to our GPUs.

Intel significantly shrinks the gap between itself and Trinity in this test, and AMD doesn't really move performance forward that much compared to Llano either.

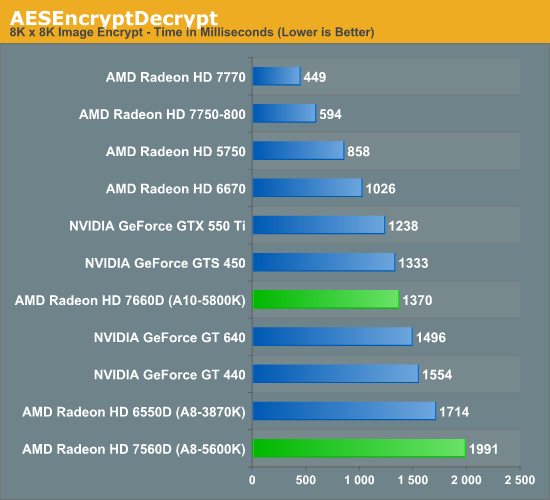

For our next benchmark we're looking at AESEncryptDecrypt, an OpenCL routine that AES encrypts and decrypts an 8K x 8K pixel square image file. The result of this benchmark is the average time to encrypt the image over a number of iterations of the AES cipher. Note that this test fails on all Intel processor graphics, so the results below only include AMD APUs and discrete GPUs.
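As a rough illustration of how an "average time over a number of iterations" figure is produced, here is a minimal Python timing harness. It is only a sketch: `average_seconds` and `stand_in_workload` are hypothetical names, and the workload is a trivial XOR pass rather than AES or the OpenCL kernel the benchmark actually runs.

```python
import time


def average_seconds(workload, iterations=10):
    """Run `workload` `iterations` times and return the mean wall time in seconds."""
    total = 0.0
    for _ in range(iterations):
        start = time.perf_counter()
        workload()
        total += time.perf_counter() - start
    return total / iterations


def stand_in_workload():
    # Hypothetical placeholder: XORs a small buffer with a fixed byte.
    # This is NOT AES; it only gives the harness something to time.
    data = bytes(range(256)) * 64
    return bytes(b ^ 0x5A for b in data)


print(f"average: {average_seconds(stand_in_workload, iterations=5):.6f} s")
```

Averaging over several runs smooths out one-off stalls, which is why a lower number in the chart means a faster GPU.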

We see a pretty hefty increase in performance over Llano in our AES benchmark. The on-die Radeon HD 7660D even manages to outperform NVIDIA's GeForce GT 640, a $100+ discrete GPU.

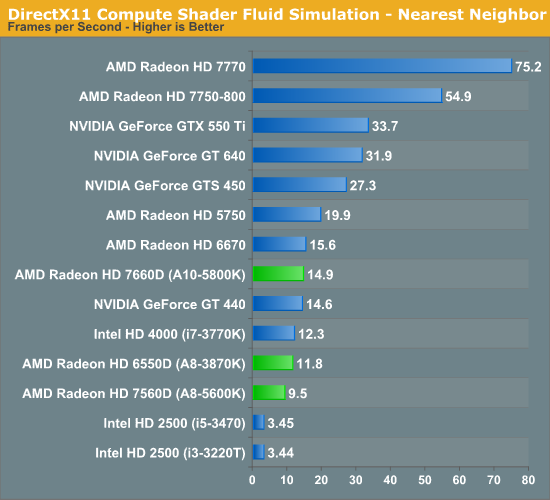

Our fourth benchmark is once again looking at compute shader performance, this time through the Fluid simulation sample in the DirectX SDK. This program simulates the motion and interactions of a 16k particle fluid using a compute shader, with a choice of several different algorithms. In this case we're using an O(n^2) nearest neighbor method that is optimized by using shared memory to cache data.
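To make the idea concrete, here is a minimal Python sketch of an O(n^2) neighbor pass that walks the particles in tiles, loosely mirroring how a compute shader stages a block of positions in shared memory and reuses it for every thread in the group. The function name, the tile size, the radius, and the 2D positions are all illustrative assumptions; none of this is taken from the DirectX SDK sample.

```python
def neighbor_counts(positions, radius, tile_size=4):
    """Count neighbors within `radius` of every particle, O(n^2) overall.

    Particles are visited tile by tile to mimic a compute shader that loads
    one block of positions into shared memory and reuses it for all threads.
    All names and parameters here are hypothetical.
    """
    n = len(positions)
    r2 = radius * radius
    counts = [0] * n
    for tile_start in range(0, n, tile_size):
        # "Shared memory": one tile of positions, loaded once per pass.
        tile = positions[tile_start:tile_start + tile_size]
        for i, (xi, yi) in enumerate(positions):
            for offset, (xj, yj) in enumerate(tile):
                j = tile_start + offset
                if i == j:
                    continue  # a particle is not its own neighbor
                dx, dy = xi - xj, yi - yj
                if dx * dx + dy * dy <= r2:
                    counts[i] += 1
    return counts


particles = [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5), (3.0, 3.0), (3.2, 3.0)]
print(neighbor_counts(particles, radius=1.0))  # prints [2, 2, 2, 1, 1]
```

The tile size does not change the result, only how often each position has to be fetched, which is the point of the shared memory optimization.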

For our last compute test, Trinity does a reasonable job improving performance over Llano. If you're in need of a lot of GPU computing horsepower you're going to be best served by a discrete GPU, but it's good to see the processor-based GPUs inch their way up the charts.

Synthetic Performance

Moving on, we'll take a few moments to look at synthetic performance. Synthetic performance is a poor tool to rank GPUs—what really matters is the games—but by breaking down workloads into discrete tasks it can sometimes tell us things that we don't see in games.

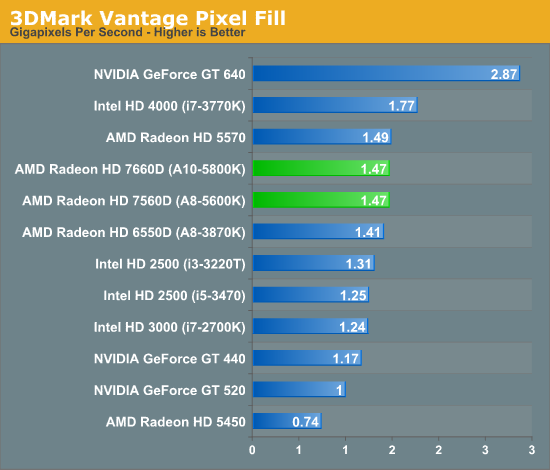

Our first synthetic test is 3DMark Vantage's pixel fill test. Typically this test is memory bandwidth bound as the nature of the test has the ROPs pushing as many pixels as possible with as little overhead as possible, which in turn shifts the bottleneck to memory bandwidth so long as there's enough ROP throughput in the first place.

Since our Llano and Trinity numbers were both run at DDR3-1866, there's no real performance improvement here. Ivy Bridge, or at least the HD 4000, actually does quite well in this test.
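The shared ceiling is easy to estimate. The sketch below computes theoretical peak memory bandwidth from the transfer rate, assuming a dual-channel configuration with a 64-bit bus per channel; the channel count and bus width are assumptions for illustration, and the function name is hypothetical.

```python
def peak_bandwidth_gb_s(transfers_mt_s, bus_width_bits=64, channels=2):
    """Theoretical peak DRAM bandwidth in GB/s (decimal gigabytes).

    Assumes every transfer moves a full bus width on every channel, which
    real workloads never quite achieve.
    """
    bytes_per_transfer = bus_width_bits // 8
    return transfers_mt_s * 1e6 * bytes_per_transfer * channels / 1e9


# DDR3-1866, assumed dual-channel with 64-bit channels.
print(f"DDR3-1866: {peak_bandwidth_gb_s(1866):.1f} GB/s")  # prints DDR3-1866: 29.9 GB/s
```

Both APUs sit under the same theoretical limit under these assumptions, so a test that is bound by memory bandwidth has little room to show a generational gain.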

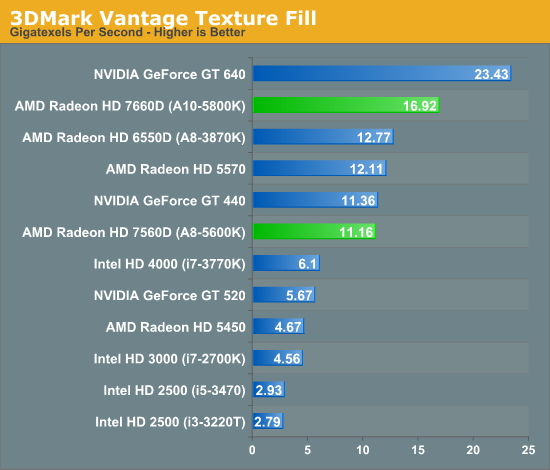

Moving on, our second synthetic test is 3DMark Vantage's texture fill test, which provides a simple FP16 texture throughput test. FP16 textures are still fairly rare, but it's a good look at worst case scenario texturing performance.

Trinity is able to outperform Llano here by over 30%, although NVIDIA's GeForce GT 640 shows you what a $100+ discrete GPU can offer beyond processor graphics.

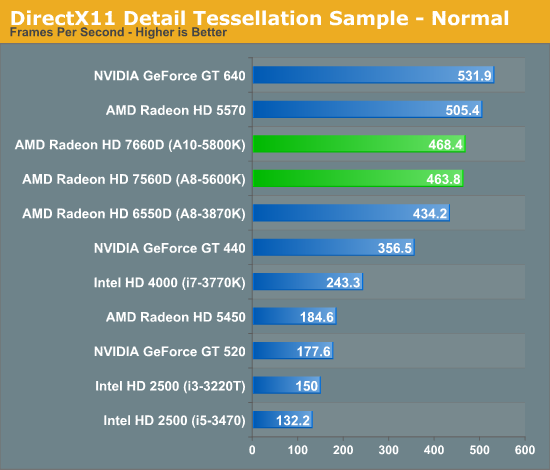

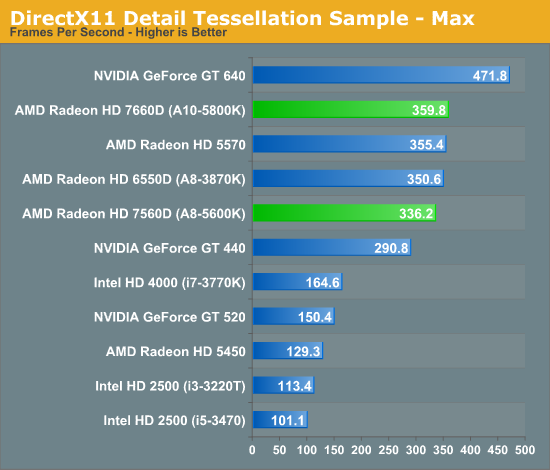

Our final synthetic test is the set of settings we use with Microsoft's Detail Tessellation sample program out of the DX11 SDK. Since IVB is the first Intel iGPU with tessellation capabilities, it will be interesting to see how well IVB does here, as IVB is going to be the de facto baseline for DX11+ games in the future. Ideally we want to have enough tessellation performance here so that tessellation can be used on a global level, allowing developers to efficiently simulate their worlds with fewer polygons while still using many polygons on the final render.

The tessellation results here were a bit surprising given the 8th gen tessellator in Trinity's GPU. AMD tells us it sees much larger gains internally (up to 2x), but using different test parameters. Trinity should be significantly faster than Llano when it comes to tessellation performance, depending on the workload.

139 Comments


deontologist - Thursday, September 27, 2012 - link

Anand - always 3 months late to the party.

Devo2007 - Thursday, September 27, 2012 - link

What are you talking about? AMD is just now lifting the NDA on the Trinity A10-5800K & A8-5600K desktop CPUs (and even then, sites can only talk about GPU performance).

If any site had reviewed a Trinity APU several months ago, it was the mobile version (A10-4600M). Anandtech even reviewed it here:

http://www.anandtech.com/show/5831/amd-trinity-rev...

karasaj - Thursday, September 27, 2012 - link

I believe he was referring to this: http://www.tomshardware.com/reviews/a10-5800k-a8-5...

Samus - Thursday, September 27, 2012 - link

None of those numbers compare Trinity to the competition. They're mostly worthless.

Samus - Thursday, September 27, 2012 - link

Engadget has word the A10 is aiming at i3 prices and i5 performance on the CPU side. We've already seen A8 and A10 cream the i3 and i5 in GPU. I'm excited. I haven't built an AMD system in years, and the A8 65w might be a perfect HTPC CPU.

jwcalla - Thursday, September 27, 2012 - link

Tom's has benchmarks against a Core i3-2100 if you'd like to see how it stacks up.

Samus - Thursday, September 27, 2012 - link

I can't find any of Tom's benchmarks showing a comparison of THESE chips against any Intel chips. They all compare the A10 and A8 to each other.

GazP172 - Thursday, September 27, 2012 - link

If it's anything like Llano, the top end 65W parts will basically only be released to the OEMs. Which to me are the only ones worth having.

Taft12 - Thursday, September 27, 2012 - link

That was because of AMD's lousy yields and contracts which prioritized access of the supply to the likes of HP and Acer over the retail channel.

OEMs still have first dibs, but yield issues are apparently better now. I have high hopes for the 65W parts (which includes actually being able to buy them on Newegg!) The A10-5700 could be the best of all worlds.

mikato - Monday, October 1, 2012 - link

Agree! I want the A10-5700, probably. No brainer.