ASUS Eee Pad Transformer Prime & NVIDIA Tegra 3 Review

by Anand Lal Shimpi on December 1, 2011 1:00 AM EST

Battery Life

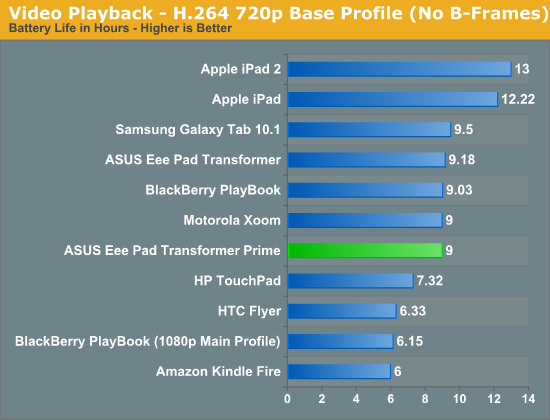

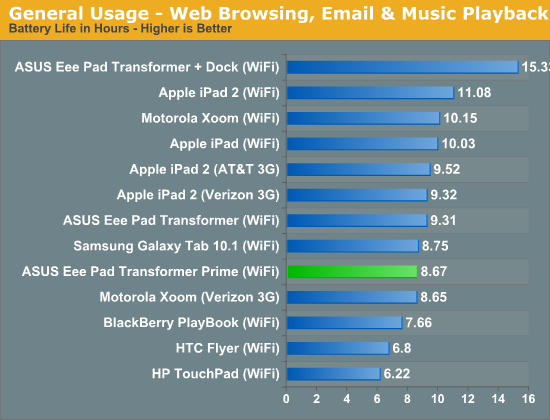

With only 39 hours to test, I was pretty limited in what I could do when it came to battery life testing. I was able to run two tests (one run apiece), and only in one configuration each. I wanted to see how Tegra 3 and the Prime fared in the worst-case scenario, so I picked the Normal power profile. Over the coming days I'll look at battery life in the other two profiles as well, not to mention run more iterations of our test suite.

My bigger concern has to do with the malfunctioning WiFi in my review unit. For our video playback battery life test, WiFi was on but not actively being used, so those numbers should be OK. It's our general use test, which loads web pages and downloads emails over WiFi, where I believe things could've suffered a bit.

In both cases I saw around 9 hours of continuous battery life out of the Transformer Prime, without its dock. These numbers are a bit lower than the original Transformer's, but it's unclear to me how much of this is due to the additional cores/frequency and how much to the misbehaving WiFi. The fact that we're within striking range of the original Transformer with the Prime running in Normal mode tells me that it's possible to actually exceed the Transformer's battery life with the Balanced or Power Saver profiles. That's very impressive for an SoC built on the same manufacturing process as its predecessor but with twice the CPU cores and a beefier GPU.

What I'm not seeing, however, are the impressive gains in battery life NVIDIA promised its companion core would deliver. I'm not saying that the companion core doesn't deliver a tangible improvement in battery life; I'm just saying that I need more time to know for sure.

That the Transformer Prime can deliver roughly the same battery life as its predecessor without any power profile tweaking may be good enough for many users. Both ASUS and NVIDIA shared their own numbers, which peg the Prime's battery life in the 10 to 13 hour range. As I mentioned before, I'll have more data in the coming days.

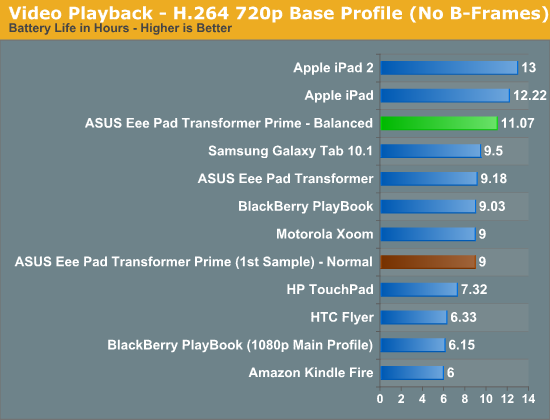

Update - With a replacement Transformer Prime in house, battery life is looking a lot better already:

Update 2: Even more battery life results in our follow-up.

204 Comments


abcgum091 - Thursday, December 1, 2011

After seeing the performance benchmarks, it's safe to say that the iPad 2 is an efficiency marvel. I don't believe I will be buying a tablet until Windows 8 is out.

ltcommanderdata - Thursday, December 1, 2011

I'm guessing the browser and most other apps are not well optimized for quad cores. The question is, will developers actually bother focusing on quad cores? Samsung is going with a fast dual-core A15 in its next Exynos. The upcoming TI OMAP 4470 is a high-clock-speed dual-core A9, and OMAP5 seems to be a high-clock-speed dual-core A15. If everyone else standardizes on fast dual cores, Tegra 3 and its quad cores may well be a checkbox feature that doesn't see much use, putting it at a disadvantage.

Wiggy McShades - Thursday, December 1, 2011

If the developer is writing something in Java (and most likely native code applications too), it would be more work to ensure the application uses at most 2 threads than to just create as many threads as it needs. The number of threads a Java application can create and use is not limited to the number of cores on the CPU. If you create 4 threads and there are 2 cores, the 4 threads will be split between the two cores. The 2 threads on each core will take turns executing, with the higher-priority thread getting more execution time than the other. All non-real-time operating systems are constantly pausing threads to let others run; that's how multitasking worked before we had dual-core CPUs. The easiest way to write an application that takes advantage of multiple threads is to split it into pieces that can run independently of each other, with the number of pieces depending on the type of application. Essentially, if a developer writes a threaded application, the number of threads will be determined by what the application is meant to do rather than by the cores he believes will be available. The question to ask is what kind of application could realistically use more than 2 threads, and whether that application can be used on a tablet.

Operaa - Monday, January 16, 2012

Making a responsive UI today most certainly requires you to use threads, so that shouldn't be a big problem. I'd say 2 threads per application is the absolute minimum. For example, when browsing the web, I would imagine it's useful to handle the UI in one thread, page loading in a second, picture loading in a third and Flash in a fourth (or more), etc.

UpSpin - Thursday, December 1, 2011

ARM introduced big.LITTLE which only makes sense in Quad or more core systems. NVIDIA is the only company with a quad core right now because they integrated this big.LITTLE idea already. Without such a companion core, a quad core consumes too much power.

So I think Samsung released an A15 dual core because it's easier and they are able to release an A15 SoC earlier. They'll work on a quad, six or eight core, but then they have to use the big.LITTLE idea, which probably takes a few more months of testing.

And as we all know, time is money.

metafor - Thursday, December 1, 2011

/boggle

big.LITTLE can work with any configuration and works just as well. Even in a quad core, individual cores can be turned off. The companion core is there because even at its lowest throttled level, a full core still produces a lot of leakage current. A core made with lower-leakage (but slower) transistors can solve this.

Also, big.LITTLE involves using different CPU architectures, for example an A15 paired with an A7.

nVidia's solution is the first step, but it uses A9s for all of the cores.
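metafor's leakage argument can be illustrated with a toy model. This is a hypothetical sketch, not NVIDIA's actual switching policy: the leakage and capacity constants are invented arbitrary units, and the names `CompanionCoreModel`, `choose` and `leakage` are made up for the example. It counts only static leakage of powered-on cores, assumes unused fast cores are fully power gated, and ignores dynamic power.

```java
// Toy model only: every constant is a hypothetical arbitrary unit, not Tegra 3 data.
class CompanionCoreModel {
    enum Cluster { COMPANION, FAST }

    // Hypothetical static leakage of one powered-on core of each type.
    static final double COMPANION_LEAKAGE = 0.1;
    static final double FAST_LEAKAGE = 0.5;

    // Hypothetical work (arbitrary units per second) one core of each type can sustain.
    static final double COMPANION_CAPACITY = 1.0;
    static final double FAST_CAPACITY = 2.0;

    // Tegra 3 has four main cores alongside its companion core.
    static final int MAX_FAST_CORES = 4;

    // Hypothetical policy: stay on the low-leakage companion core while it can keep up.
    static Cluster choose(double load) {
        return load <= COMPANION_CAPACITY ? Cluster.COMPANION : Cluster.FAST;
    }

    // Static leakage of the configuration chosen for this load. Unused fast cores
    // are assumed to be fully power gated; dynamic power is ignored.
    static double leakage(double load) {
        if (choose(load) == Cluster.COMPANION) {
            return COMPANION_LEAKAGE;
        }
        int fastCores = (int) Math.ceil(load / FAST_CAPACITY);
        return Math.min(fastCores, MAX_FAST_CORES) * FAST_LEAKAGE;
    }

    public static void main(String[] args) {
        for (double load : new double[] {0.3, 1.5, 5.0}) {
            System.out.printf("load %.1f -> %s, leakage %.2f%n", load, choose(load), leakage(load));
        }
    }
}
```

In this model, once the load exceeds the companion core's (hypothetical) capacity, the chip switches to the fast cores, and even a single throttled fast core then leaks more than the companion core did. That is the light-load cost the companion core is meant to avoid.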

UpSpin - Friday, December 2, 2011

I haven't said anything different. I just added that Samsung wants to be one of the first to release an A15 SoC. To speed things up they released only a dual core, because there the advantage of a companion core isn't that big and the leakage current is 'ok'. A companion core would just make the dual core more expensive (additional transistors needed, without a huge advantage). But if you want to build a quad core, you must, just as Nvidia did, add such a companion core, or else the leakage current is too high. Integrating the big.LITTLE idea probably takes additional time, though, so they wouldn't be the first to produce an A15-based SoC.

So to be one of the first, they chose the easiest design, a dual-core A15. After a few months of additional R&D they will release a quad core with big.LITTLE, and probably dual-, six- and eight-core versions with big.LITTLE, too.

hob196 - Friday, December 2, 2011

You said: "ARM introduced big.LITTLE which only makes sense in Quad or more core systems"

big.LITTLE would apply to single core systems if the A7 and A15 pairing was considered one core.

UpSpin - Friday, December 2, 2011

Power-consumption-wise, it already makes sense to pair an A7 with a single or dual core. Cost-wise, it doesn't really make sense.

I really doubt that we will see a single-core A15 SoC with a companion core. A dual core, maybe, but not at first.

GnillGnoll - Friday, December 2, 2011

It doesn't matter how many "big" cores there are; big.LITTLE is for those situations where turning on even a single "big" core is a relatively large power draw. A quad core with three cores power gated has no more leakage than a single core chip.
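GnillGnoll's last point is simple arithmetic under idealized assumptions: identical cores, perfect power gating (a gated core leaks nothing) and no shared uncore leakage. Writing P for the set of powered-on cores, V_DD for the supply voltage and I_leak for one core's leakage current, a minimal worked version is:

```latex
P_{\text{leak}} = V_{DD} \sum_{i \in \mathcal{P}} I_{\text{leak},i}
\qquad\Longrightarrow\qquad
P_{\text{leak}}^{\text{quad, 3 gated}} = V_{DD}\, I_{\text{leak}} = P_{\text{leak}}^{\text{single core}}
```

In practice gating is not perfect, so this is a best case; it also shows why the remaining single "big" core's leakage is exactly what a lower-leakage companion core is meant to undercut.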