Sandy Bridge Memory Scaling: Choosing the Best DDR3

by Jared Bell on July 25, 2011 1:55 AM EST

7-Zip

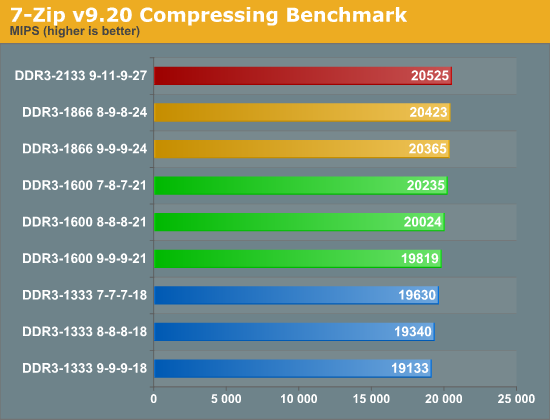

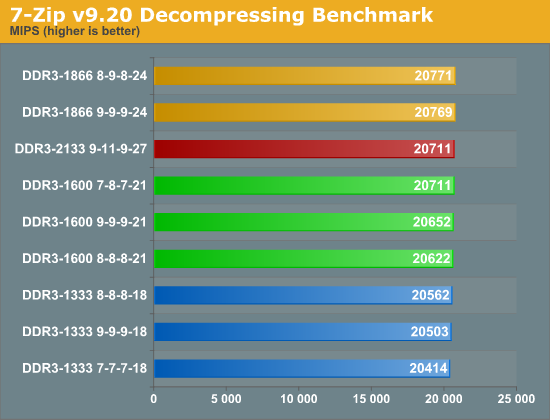

Many people are moving over to 7-Zip for their compression/decompression needs. 7-Zip is not only free and open-source, but it also has a built-in benchmark for measuring system performance using the LZMA compression/decompression method. Keep in mind that these tests are run in memory and bypass any potential disk bottlenecks. The compression routines in particular can put a heavy load on the memory subsystem, as many megabytes of data are scanned for patterns that allow the compression to take place. In a sense, data compression is one of the best real-world tests for memory performance.
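To get a feel for what an in-memory LZMA test looks like, here is a minimal Python sketch using the standard library's `lzma` module. It is not 7-Zip's built-in benchmark and its timings are not comparable to the charts above; the buffer size, the synthetic data, and the helper names `make_buffer` and `time_lzma` are all assumptions chosen for illustration.

```python
import lzma
import random
import time


def make_buffer(size_bytes: int, seed: int = 0) -> bytes:
    """Build a compressible byte buffer that lives entirely in RAM (hypothetical test data)."""
    rng = random.Random(seed)
    # A small vocabulary of random lowercase "words" gives the compressor patterns to find.
    words = [
        bytes(rng.randrange(97, 123) for _ in range(rng.randrange(3, 9)))
        for _ in range(512)
    ]
    chunks = []
    total = 0
    while total < size_bytes:
        word = rng.choice(words)
        chunks.append(word)
        total += len(word)
    return b"".join(chunks)[:size_bytes]


def time_lzma(data: bytes, preset: int = 6) -> dict:
    """Time one in-memory compress/decompress round trip; no disk I/O is involved."""
    start = time.perf_counter()
    packed = lzma.compress(data, preset=preset)
    compress_s = time.perf_counter() - start

    start = time.perf_counter()
    unpacked = lzma.decompress(packed)
    decompress_s = time.perf_counter() - start

    if unpacked != data:
        raise ValueError("round trip did not reproduce the input")

    return {
        "compress_s": compress_s,
        "decompress_s": decompress_s,
        "ratio": len(packed) / len(data),
    }


if __name__ == "__main__":
    result = time_lzma(make_buffer(1 << 20))  # 1 MiB, an arbitrary size
    print(result)
```

Running it a few times and comparing `compress_s` with `decompress_s` gives a rough sense of how much more work the pattern search on the compression side involves. For numbers that line up with the review, use 7-Zip's own benchmark instead.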

The compression test shows a linear performance increase, with a difference of roughly 7% between the fastest and slowest memory. If you do a fair amount of compressing, you could potentially save some time in the long run by using faster memory. This, of course, assumes you're not bottlenecked elsewhere, such as by I/O or CPU performance. The decompression test isn’t affected by faster memory in the same way, as there’s no pattern recognition going on; it’s simply expanding the already found patterns into the original files. With less than 2% separating the range, faster memory is unlikely to make much of a difference if you’re primarily decompressing files.
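The arithmetic behind a percentage spread, and what it might mean for a long-running job, can be sketched as follows. The function names and the example figures (scores of 107 and 100, a one-hour job) are hypothetical and are not taken from the charts; the time estimate also assumes the whole job scales with the benchmark score, which real workloads rarely do.

```python
def spread_percent(fastest: float, slowest: float) -> float:
    """Percent by which the fastest score exceeds the slowest (higher score is better)."""
    if slowest <= 0:
        raise ValueError("slowest score must be positive")
    return (fastest - slowest) / slowest * 100.0


def time_saved_seconds(job_seconds: float, speedup_percent: float) -> float:
    """Seconds saved on a job if throughput rises by speedup_percent."""
    return job_seconds - job_seconds / (1.0 + speedup_percent / 100.0)


if __name__ == "__main__":
    # Hypothetical scores, chosen only to give a spread of about 7%.
    spread = spread_percent(fastest=107.0, slowest=100.0)
    saved = time_saved_seconds(job_seconds=3600.0, speedup_percent=spread)
    print(f"spread: {spread:.1f}%  saved on a one-hour job: {saved:.0f} s")
```

Under those assumptions a 7% spread trims only a few minutes from an hour of compression, which is why the benefit only adds up if you compress a lot.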

x264 HD Benchmark

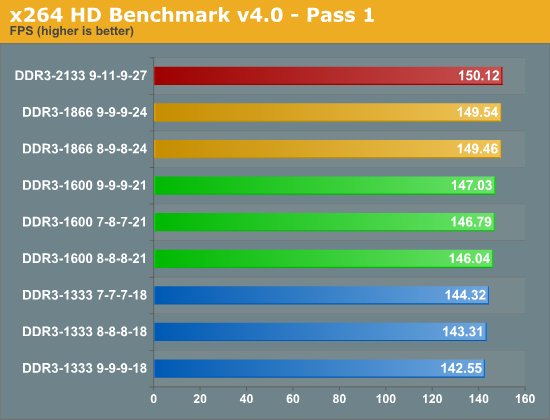

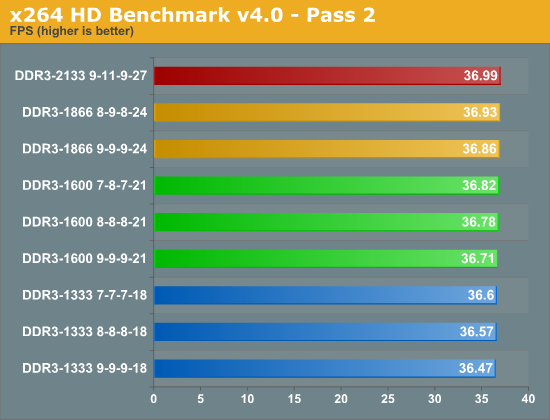

The x264 HD Benchmark measures how fast your system can encode a short HD-quality video clip into a high quality H.264 video file. There are two separate passes performed and compared. Multiple passes are generally used to ensure the highest quality video output, and the first pass tends to be more I/O bound while the second pass is typically constrained by CPU performance.

While not a huge spread, we do see a difference of 5% from the fastest to the slowest in the first pass. The second pass, however, shows a gain of less than 2%. If encoding is one of your system's primary tasks, it's possible that having faster memory could pay off over time, but a faster CPU will be far more beneficial.

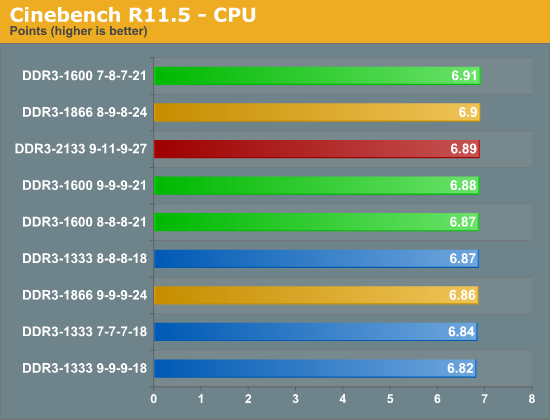

Cinebench 11.5

The Cinebench CPU test scenario uses all of your system's processing power to render a photorealistic 3D scene containing approximately 2,000 objects and nearly 300,000 polygons. This scene makes use of various algorithms to stress all available processor cores, but how does memory speed come into play?

Apparently not much in this benchmark. We're looking at a less than 2% difference from the fastest to the slowest. It's possible that CAS latency is more important for this type of load, but due to the extremely small variance, I don't believe that statement is conclusive. Overall, even a single CPU bin would be enough to close the gap between the fastest and slowest memory we tested.

76 Comments

Rick83 - Monday, July 25, 2011

Do they take into account that we should be using 1.5V DIMMs for Sandy Bridge? The addition of that requirement usually limits choice quite a bit.

compudaze - Monday, July 25, 2011

The SNB datasheet does suggest that the max memory voltage is 1.575V; however, many motherboard and memory manufacturers state that they haven't had any problems with memory running at 1.65V on SNB.

compudaze - Monday, July 25, 2011

Also, if you stick to the spec sheet, you shouldn't be running faster than DDR3-1333 memory.

Taft12 - Monday, July 25, 2011

You should be using 1.5V DIMMs anyway - if a memory OEM needs 1.65V to achieve the same speed and timings another vendor does at 1.5V, it's inferior memory.

jdogi - Monday, July 25, 2011

Just as your daily driver vehicle is likely inferior to a Mercedes or Ferrari. You should get a new car. You should not make any attempt to balance cost with the value. Just get the best. It's the only way to go. What's best for Taft is best for all. ;-)

Iketh - Tuesday, July 26, 2011

you didn't understand the logic

MrSpadge - Wednesday, July 27, 2011

I'm sure he did. What Taft failed to mention was that "at the same price, you should be using the memory spec'ed for less voltage". However, if some memory needs a little more voltage, but is way cheaper - balance cost and value.

MrS

Rick83 - Wednesday, July 27, 2011

Actually, the higher voltage is out of spec for the CPU memory controller and may well impact longevity. So it's like buying the Ferrari, and running it on Biofuel with too much Ethanol that eats right through the tubing, but is marginally cheaper.

jfelano - Tuesday, July 26, 2011

Not inferior, just older. All 1600mhz memory was 1.65v when it debuted. Then they came out with 1.5v, now even 1.35v.

cervantesmx - Thursday, July 28, 2011

That is correct indeed. Just purchased 8GB at 1600mhz running on 1.25v. $59.99. Free shipping.