Fusion E-350 Review: ASUS E35M1-I Deluxe, ECS HDC-I and Zotac FUSION350-A-E

by Ian Cutress on July 14, 2011 11:00 AM EST

I decided to dedicate an extra page to two features of these Fusion boards that are, in my eyes, quite interesting to discuss.

On the one hand, we are dealing with low-power CPUs with limited processing throughput, so if you overclock them, that overclock also has a significant impact on any integrated GPU gaming.

On the other, we have access to a PCIe x16 slot, capable of taking a full-length, high-end GPU (should you want to). This PCIe slot actually runs at 4x, which in certain circumstances would cripple the discrete GPU. Pair that with a not-so-great CPU and we're not expecting gaming capability to take off, so I've examined this as well.

Overclocking and Gaming Performance

By default, we have a 1600 MHz, dual core Fusion CPU, combined with an 80 SP iGPU at 500 MHz, designated the HD 6310. In terms of pure CPU throughput, we saw on all boards that a percentage increase in clock speed gave a direct increase in benchmark result for the 3D Particle Movement benchmark.

In terms of gaming, we need to analyze what this overclock does. Apart from the CPU speed increase itself, we're getting a direct GPU clock speed increase as well. The DDR3 memory speed also goes up, so the memory bandwidth available to the iGPU increases too. For gaming, then, any overclock pays off in two major areas beyond the CPU: GPU clock speed and memory bandwidth.

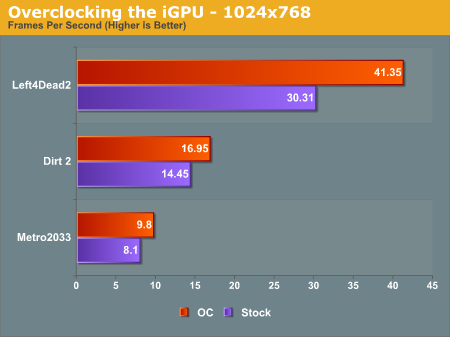

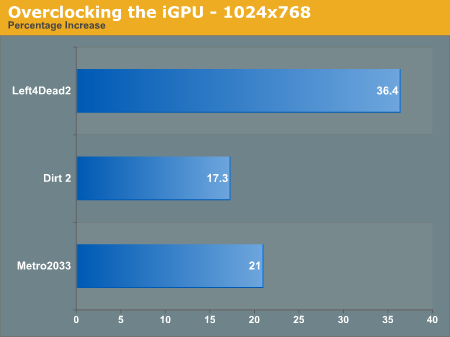

I'll take the ASUS E35M1-I Deluxe for this explanation, which allowed a 10% overclock from 1600 MHz to 1760 MHz. From the gaming perspective on the iGPU, we have a large increase in scores:

Our largest increase was in the DirectX 9 game, Left4Dead2: a staggering 36.4% increase in frame rate from 30.3 fps to 41.4 fps, making the game more playable at the 1024x768 resolution. Even Metro2033 had a 21.0% increase, and Dirt2 a 17.3% increase. Is the iGPU itself capable of playing the major games? Probably not, but at least those older ones can feel smoother.
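As a rough check on the numbers above, the short Python sketch below recomputes the percentage gains from the rounded figures quoted in the text. The helper names `pct_gain` and `scaled_clock` are hypothetical, and the resulting iGPU clock assumes the GPU rises by the same percentage as the CPU, which the text implies but does not state as a number.

```python
def pct_gain(before, after):
    """Percentage increase going from `before` to `after`."""
    return (after - before) / before * 100.0


def scaled_clock(base_mhz, overclock_pct):
    """Clock after a percentage overclock (assumes linear scaling)."""
    return base_mhz * (1.0 + overclock_pct / 100.0)


# Figures quoted in the article for the ASUS E35M1-I Deluxe.
cpu_gain = pct_gain(1600, 1760)          # CPU clock, MHz
l4d2_gain = pct_gain(30.3, 41.4)         # Left4Dead2 frame rate, fps

# Assumption: the 500 MHz iGPU scales by the same percentage as the CPU.
igpu_mhz = scaled_clock(500, cpu_gain)

print(f"CPU overclock: {cpu_gain:.1f}%")
print(f"iGPU clock if it scales with the CPU: {igpu_mhz:.0f} MHz")
print(f"Left4Dead2 frame rate gain: {l4d2_gain:.1f}%")
```

Working from the rounded frame rates gives a figure a fraction of a percentage point away from the one quoted, which may simply reflect rounding in the published numbers. Either way, the frame rate gain is well above the CPU clock increase, consistent with the CPU, GPU and memory all speeding up together.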

The PCIe slot running at 4x - Is it worth using a beefy GPU, like a GTX 580?

The short answer is no, probably not. Normally we see a full length PCIe slot run at 4x only when it's the second or third PCIe slot on the board, and usually to the detriment of SATA or USB ports that have to be switched off as a result. Here, we have two main questions: will the CPU be fast enough to move data across the PCIe bus to and from the discrete graphics, or will the 4x speed of the bus be the crippling factor?
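To put the 4x link in perspective, here is a minimal sketch of the theoretical per-direction bandwidth of a 4x link against a full 16x link. It assumes a PCIe 2.0 link (5 GT/s per lane with 8b/10b encoding); the review does not state the slot's PCIe generation, and the function name `pcie_bandwidth_mb_s` is hypothetical. Real-world throughput will be lower than these theoretical figures.

```python
def pcie_bandwidth_mb_s(lanes, gt_per_s=5.0, encoding_efficiency=8 / 10):
    """Theoretical one-direction bandwidth of a PCIe link in MB/s.

    Defaults assume PCIe 2.0: 5 GT/s per lane with 8b/10b encoding,
    so only 8 of every 10 transferred bits carry payload.
    """
    payload_gbit_s = lanes * gt_per_s * encoding_efficiency
    return payload_gbit_s / 8 * 1000  # Gbit/s -> GB/s -> MB/s


x4 = pcie_bandwidth_mb_s(4)
x16 = pcie_bandwidth_mb_s(16)

print(f"x4 link:  {x4:.0f} MB/s per direction")
print(f"x16 link: {x16:.0f} MB/s per direction")
print(f"x4 offers {x4 / x16:.0%} of the x16 bandwidth")
```

Under that assumption, the 4x link offers a quarter of the bandwidth a 16x link would, which is the bus-side limit the tests below try to separate from the CPU-side limit.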

(Note: I understand getting a GTX580 isn't realistic with a Fusion, but it's the most powerful GPU I have to hand and most apt for this test as GPU power should not be an issue.)

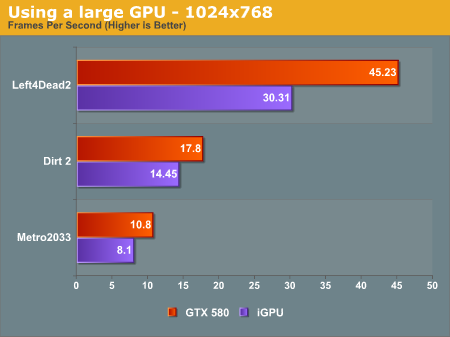

For this test, I ran the GTX 580 at the same settings as the iGPU tests, and then at the 1920x1080 resolutions and settings that we normally do for the high end motherboards (8xMSAA, 16xAF). First, at the iGPU resolutions on the ASUS E35M1-I Deluxe:

Despite using a $500 GPU, our biggest increase in frame rate, at 1024x768 resolution, is only 50%. In Left4Dead2 on Sandy Bridge, at 1680x1050, we see over 200 fps. We know L4D2 can be fairly CPU limited, so the fact that we only see 45 fps here is a clear sign of how much the Fusion CPU holds it back.

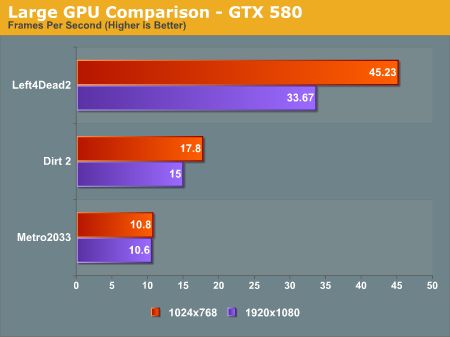

Now, at the full 1920x1080 resolution:

In Metro 2033, we didn't see any real decline from 1024x768 to 1920x1080, but there was a significant drop in Left4Dead2. These results are also due to the CPU holding the GPU back, meaning that even with a GTX 580 on Fusion, only the old games will be playable, but this time at a higher resolution.

67 Comments

sprockkets - Thursday, July 14, 2011 - link

I have the AsRock board. I get 18w idle and 24w under load via a killawatt device. Granted it uses an 80w power supply, but I'm kinda wondering how you got 59w for something that is practically the same setup in each board.

IanCutress - Thursday, July 14, 2011 - link

I was using a less than ideal power supply for the power draw tests which was very inefficient in this range (<20% of maximum power), and unfortunately I don't have anything more appropriate at hand to test with. The comparisons (I believe) between the boards are more than relevant though. I will hopefully rectify this in future reviews of lower powered systems.

Ian

formulav8 - Thursday, July 14, 2011 - link

Why didn't you wait to do power consumption tests then?

bah12 - Thursday, July 14, 2011 - link

While not ideal, I'd say the whole point of this article was to illustrate the differences in the boards. Thus as long as they all suffered from the same inefficient PS, the information is not useless in that you can still draw a conclusion based on the differences at the board level. All and all, not ideal but useful.

BushLin - Friday, July 15, 2011 - link

I once tried to reason with the fanboys at AMDZone on Anands behalf, defending that the reviews here were objective... I think I'm starting to believe that their might be some truth in their beliefs that the odds are stacked against AMD when their products are reviewed on here.

At best, this review is a misguided. It focuses far too heavily on areas these systems are not aimed at, misinforms (or fails to inform) on areas that it's market are interested in and answers stupid questions that no-one is asking. Testing a GTX 580 with an E-350 at 4x PCI-E... really? Why not test out how well these work as a HTPC compared to something like ION and the latest Atom?

At worst, this review could almost be seen as a deliberate undermining of a technology that's potentially superior to it's Intel's offering and how often could you honestly say that since Core2?. Most of the tests are irrelevant (or become irrelevant when comparing to much higher TDP chips), the one test you did manage to do which is very relevant (power consumption) was so high that it prompted me to look at other reviews and take the time to write this comment!

This review has idle power consumption as at least 36w, Xbit have it at 7.3w even with a 880w PSU. One of these reviews has it very wrong, I know which one I'm more inclined to believe.

http://www.xbitlabs.com/articles/cpu/display/amd-e...

IKeelU - Friday, July 15, 2011 - link

I have to agree with your assessment of the review.

- These boards are aimed at HTPC market, but the review was focused...elsewhere (frankly, I can't tell what the focus was).

- How is the audio quality? I was very interested in the ASUS board until I noticed it doesn't have 6-channel direct out. This is important!

- Another, less important, point: The features/specs for each board should come first. Double points for a feature comparison table.

AnandThenMan - Friday, July 15, 2011 - link

It is extremely unfortunate that Anandtech has sacrificed their integrity when it comes to reviewing some of AMD's products. I really hope that more and more people are made aware of what is going on, these reviews are downright dishonest.

The most important question people need to ask is, why is this happening? What is the incentive for Anandtech.com to publish these misleading reviews?

ET - Saturday, July 16, 2011 - link

Can you explain what is dishonest or misleading about this review? I agree that it could be better, but I don't see anything to indicate that anything was falsified here.

medi01 - Sunday, July 17, 2011 - link

Seriously?

Cough "This review has idle power consumption as at least 36w, Xbit have it at 7.3w even with a 880w PSU. ", cough?

Oh, it's irrelevant, because we're comparing motherboards of the same platform? Orly? What if I read this, say "OMG it consumes so much energy" and go buy Atom?

Tell me how to get that 36w idle thing, what kind of PSU should be used, to justify 7.3w (with bloody 880w PSU!!!!) vs 36w please?

What are 5850 580gtx doing in this review?

Shameless...

Finraziel - Thursday, September 1, 2011 - link

Monstrously late reply... but I just can't not leave this comment... Did any of you actually read the xbit article? Those power draw measurements are measured between the PSU and the components, only measuring what the components are actually using, completely ignoring the efficiency of the PSU (the way xbitlabs has been testing for years I might add). So the fact that they were using an 880W PSU has absolutely zero bearing on their readings.

Granted, it's still a shame that these boards couldn't have been tested with something like a pico psu, and I do agree the article could have been better (for instance, how much noise does that tiny fan on the ECS board actually make? apart from an easily missed remark in the conclusion nothing is said about it), but it's not as bad as you people are making it out to be.