Memory: The Compatriot of the GPU

While GTC was a show built around NVIDIA’s GPUs, it takes more than just a GPU to make up a video card. Both Samsung and Hynix had a presence at the show, the two of them being the principal suppliers of GDDR5 at the moment (while there are other manufacturers, every single card we have uses Samsung or Hynix memory). Both companies recently completed transitions to new manufacturing nodes (40nm), and are now bringing up denser GDDR5 memory that they were at the show to promote.

First off was Samsung, who held a green-themed session about their low power GDDR5 and LPDDR2 products, primarily geared towards ODMs and OEMs responsible for designing and building finished products. The adoption rate on both of these product lines has been slow so far.

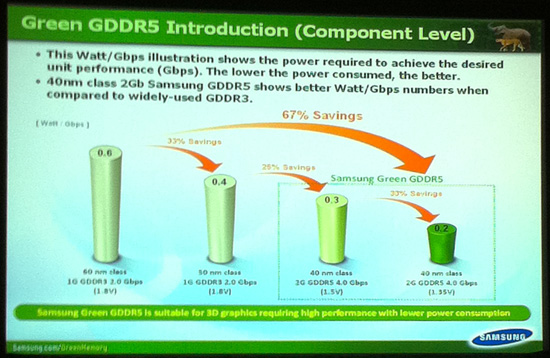

Starting with GDDR5: the specification normally calls for a 1.5v operating voltage, but Samsung also offers a line of “Green Power” GDDR5 which operates at 1.35v. Going by Samsung’s own numbers, dropping from 1.5v to 1.35v reduces GDDR5 power consumption by 33%. The catch of course is that green power GDDR5 isn’t capable of running at the same speeds as full power GDDR5, with speeds topping out at around 4Gbps. This makes green power GDDR5 unsuitable for cards such as the Radeon 5800 and 5700 series which use 5Gbps GDDR5, but it would actually make it a good fit for AMD’s lower end cards and all of NVIDIA’s cards, the latter of which never exceed 4Gbps. Of course there are tradeoffs to consider; we don’t know what Samsung is doing with respect to bank grouping on the green parts to hit 4Gbps, and bank grouping is the enemy of low latencies. And of course there’s price, as Samsung charges a premium for what we believe are basically binned GDDR5 dies.
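As a rough sanity check on the voltage figures, here is a back-of-the-envelope sketch. It assumes the textbook approximation that dynamic power scales with the square of the voltage times the frequency; that model and the function name `relative_dynamic_power` are our own assumptions for illustration, not Samsung's methodology.

```python
def relative_dynamic_power(v_new, v_old, f_new=1.0, f_old=1.0):
    """Power of the new operating point relative to the old one.

    Assumes the common approximation P ~ C * V^2 * f, with the same
    capacitance C at both operating points (an assumption, not a
    vendor-supplied model).
    """
    return (v_new / v_old) ** 2 * (f_new / f_old)


# Voltage drop only, same clock speed: 1.5v -> 1.35v
saving = 1.0 - relative_dynamic_power(1.35, 1.5)
print(f"Saving from voltage alone: {saving:.0%}")
```

Under that simple model the voltage drop alone accounts for a saving of roughly 19%, so Samsung's 33% figure presumably reflects more than voltage scaling by itself.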

The other product Samsung briefly discussed was LPDDR2. We won’t dwell on this too much, but Samsung is very interested in moving the industry away from LPDDR1 (and even worse, DDR3L) as LPDDR2 consumes less power than either. Samsung believes the sweet spot for memory pricing and performance will shift to LPDDR2 next year.

Finally, we had a chance to talk to Samsung about the supply of their recently announced 2Gbit GDDR5. 2Gbit GDDR5 will allow cards using a traditional 8 memory chip configuration to move from 1GB to 2GB of memory, or allow existing cards such as the Radeon 5870 2GB to move from 16 chips to 8 chips and save a few dozen watts in the process. The big question right now with regard to 2Gbit GDDR5 is the supply, as tight supplies of 1Gbit GDDR5 were fingered as one of the causes of the limited supply and higher prices of Radeon 5800 series cards last year. Samsung tells us that their 2Gbit GDDR5 is in mass production and shipping, however the supply is constrained through the end of the year.
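The capacity arithmetic behind those configurations is simple enough to sketch. The helper name `card_capacity_gb` is ours, purely for illustration; the chip counts and densities are the ones discussed above.

```python
def card_capacity_gb(chips, density_gbit):
    """Total card memory in GB for a given chip count and per-chip density."""
    return chips * density_gbit / 8  # 8 bits per byte


# Traditional 8-chip card with 1Gbit chips
print(card_capacity_gb(8, 1))   # 1.0
# The same 8-chip layout with 2Gbit chips
print(card_capacity_gb(8, 2))   # 2.0
# A 2GB card built from 1Gbit chips needs 16 of them
print(card_capacity_gb(16, 1))  # 2.0
```

The last two lines are the interesting pair: the same 2GB can be reached with half as many chips, which is where the power savings come from.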

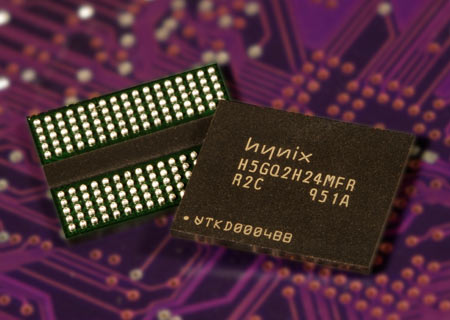

Moving on, as we stated earlier, Hynix was also at GTC. Unlike Samsung, they weren’t giving a presentation, but they did have a small staffed booth in the exhibition hall touting their products. Like Samsung’s, their 2Gbit GDDR5 is in full production and is officially available right now. Currently they’re offering 2Gbit and 1Gbit GDDR5 at up to 6Gbps (albeit at 1.6v, 0.1v over spec), which should give you an idea of where future video cards may go. As with Samsung, it sounds like they have as much demand as they can handle at the moment for their 2Gbit parts, so supply may be tight for high speed 2Gbit parts for the rest of the year throughout the industry.
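To put those per-pin data rates in perspective, here is a small sketch of how they translate into peak memory bandwidth. The 256-bit bus width is an assumption chosen for illustration, not a figure from Hynix, and `peak_bandwidth_gbs` is a hypothetical helper name.

```python
def peak_bandwidth_gbs(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/sec.

    data_rate_gbps: per-pin data rate in Gbps
    bus_width_bits: total memory bus width in bits (assumed, see above)
    """
    return data_rate_gbps * bus_width_bits / 8  # bits -> bytes


BUS_WIDTH = 256  # assumed bus width for illustration

for rate in (4.0, 5.0, 6.0):
    print(f"{rate:.0f}Gbps on a {BUS_WIDTH}-bit bus: "
          f"{peak_bandwidth_gbs(rate, BUS_WIDTH):.0f} GB/sec")
```

On that assumed bus, moving from 4Gbps to 6Gbps memory lifts peak bandwidth from 128 GB/sec to 192 GB/sec without touching the bus itself.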

There was one other thing on the brochure they were handing out that we’d like to touch on: things reaching End of Life. In spite of the fact that the AMD 5xxx series and NVIDIA 4xx series cards all use GDDR5 or plain DDR3, GDDR3 is still alive and well at Hynix. While they’re discontinuing their 4th gen GDDR3 products this month, their 5th gen products live on to fill video game consoles and any remaining production of last-generation video cards. What is being EOL’d however is plain DDR2, which meets its fate this month. DDR3 prices have already dropped below DDR2 prices, and it looks like DDR2 is now entering the next phase of its life where prices will continue to creep up as it’s consumed as upgrades for older systems.

Scaleform on Making a UI for 3D Vision

One of the sessions we were particularly interested in seeing ahead of time was a session by Scaleform, a middleware provider that specializes in UIs. Their session was about what they’ve learned in making UIs for 3D games and applications, a particularly interesting subject given the recent push by NVIDIA and the consumer electronics industry for 3D. Producing 3D material is still more of a dark art than a science, and for the many of you that have used 3D Vision with a game it’s clear to see that there are some kinks left to work out.

The problem right now is that traditional design choices for UIs are built around 2D, which leads to designers making the implicit assumption that the user can see the UI just as well as the game/application, since everything is on the same focal plane. 3D and the creation of multiple focal planes usually result in the UI being on one focal plane, and often quite far away from the action at that, which is why so many games (even those labeled as 3D Vision Ready) are cumbersome to play in 3D today. As a result a good 3D UI needs to take this into account, which means breaking design rules and making new ones.

Scaleform’s presentation focused both on 3D UIs for applications and for gaming. For applications many of their suggestions were straightforward, but were elements that required a conscious effort on the part of the developer, such as not putting pop-out elements at the edge of the screen where they can get cut off, and rendering the cursor at the same depth as the item it’s hovering over. They also highlighted other pitfalls that don’t have an immediate solution right now, such as being able to maintain the high quality of fonts when scaling them in a 3D environment.

As for gaming, their suggestions were often those we’ve heard from NVIDIA in the past. The biggest suggestions (and the biggest nuisance in gaming right now) had to do with where to put the HUD: painting a HUD at screen depth doesn’t work; it needs to be rendered at depth with the objects the user is looking at. Barring that, it should be tilted inwards to lead the eye rather than being an abrupt change. They cited Crysis 2 as an example of this, as it has implemented its UI in this manner. Unfortunately for 2D gamers, it also looks completely ridiculous on a 2D screen, so just as 2D UIs aren’t great for 3D, 3D UIs aren’t great for 2D.

Crysis 2's UI as an example of a good 3D UI

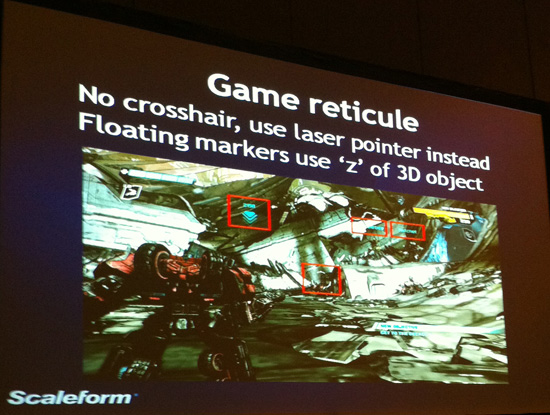
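To see why a HUD painted at screen depth clashes with the scene, it helps to look at how much the left and right eye images differ at a given depth. The sketch below uses a commonly cited stereo model in which parallax is separation × (1 − convergence / depth); the parameter values and function names are assumptions for illustration, not anything Scaleform presented.

```python
def screen_parallax(depth, convergence, separation):
    """Horizontal offset between the left and right eye images.

    depth:       distance of the object from the camera (must be > 0)
    convergence: depth at which objects appear exactly at screen depth
    separation:  maximum parallax, as a fraction of screen width
    """
    if depth <= 0:
        raise ValueError("depth must be positive")
    return separation * (1.0 - convergence / depth)


CONVERGENCE = 2.0   # assumed value
SEPARATION = 0.05   # assumed value

# A HUD painted at screen depth has no parallax at all
hud = screen_parallax(CONVERGENCE, CONVERGENCE, SEPARATION)
# A distant target the player is actually looking at
target = screen_parallax(20.0, CONVERGENCE, SEPARATION)

print(f"HUD parallax:    {hud:.3f}")
print(f"Target parallax: {target:.3f}")
```

The gap between the two numbers is what the eyes have to bridge every time the player glances from the target to the HUD; drawing the HUD with the target's parallax removes that gap.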

Their other gaming suggestions focused on how the user needs to interact with the world. The crosshair is a dead concept since it’s 2D and rendered at screen depth. Instead, taking a page from the real world, laser sights (i.e. the red dot) should be used. Or for something that isn’t first-person like an RTS, selection marquees need to map to 3D objects – whether items should be selected should be based upon how the user would see it rather than absolute position.
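The marquee idea can be sketched as projecting each object to where the user actually sees it and testing that position against the selection rectangle. This is a minimal sketch under our own assumptions: a single pinhole camera looking down the z axis (a real stereo renderer has one projection per eye), and hypothetical names such as `Unit` and `select_in_marquee`.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    name: str
    x: float
    y: float
    z: float  # distance from the camera along the view axis


def project(unit, focal_length=1.0):
    """Perspective-project a unit's position onto the screen plane."""
    return (focal_length * unit.x / unit.z,
            focal_length * unit.y / unit.z)


def select_in_marquee(units, x_min, y_min, x_max, y_max, focal_length=1.0):
    """Return names of units whose on-screen position is inside the marquee."""
    selected = []
    for unit in units:
        if unit.z <= 0:
            continue  # behind the camera, never visible
        sx, sy = project(unit, focal_length)
        if x_min <= sx <= x_max and y_min <= sy <= y_max:
            selected.append(unit.name)
    return selected


units = [
    Unit("tank", 1.0, 0.0, 2.0),    # close to the camera
    Unit("jeep", 1.0, 0.0, 10.0),   # same world x as the tank, far away
    Unit("scout", 0.2, 0.0, 10.0),
]

print(select_in_marquee(units, 0.0, -0.1, 0.15, 0.1))
```

The tank and the jeep share the same world x coordinate, yet only the jeep falls inside the marquee because distance pulls it toward the center of the screen; selection follows what the user sees rather than absolute position.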

19 Comments


adonn78 - Sunday, October 10, 2010 - link

This is pretty boring stuff. I mean the projectors ont eh curved screens were cool but what about gaming? anything about Nvidia's next gen? they are really falling far behind and are not really competing when it comes to price. I for one cannot wait for the debut of AMD's 6000 series. CUDA and PhysX are stupid proprietary BS.

iwodo - Sunday, October 10, 2010 - link

What? This is GTC, it is all about the Workstation and HPC side of things. Gaming is not the focus of this conference.

bumble12 - Sunday, October 10, 2010 - link

Sounds like you don't understand what CUDA is, by a long mile.

B3an - Sunday, October 10, 2010 - link

"teh pr0ject0rz are kool but i dun understand anyting else lolz"

Stupid kid.

iwodo - Sunday, October 10, 2010 - link

I was about the post Rendering on Server is fundamentally, but the more i think about it the more it makes sense. However defining a codec takes months, actually refining and implementing a codec takes YEARS.

I wonder what the client would consist of, Do we need a CPU to do any work at all? Or would EVERYTHING be done on server other then booting up an acquiring an IP.

If that is the case may be an ARM A9 SoC would be enough to do the job.

iwodo - Sunday, October 10, 2010 - link

Just started digging around. LG has a Network Monitor that allows you to RemoteFX with just an Ethernet Cable! http://networkmonitor.lge.com/us/index.jsp

And x264 can already encode at sub 10ms latency!. I can imagine IT management would be like trillion times easier with centrally managed VM like RemoteFX. No longer upgrade every clients computer. Stuff a few HDSL Revo Drive and let everyone enjoy the benefit of SSD.

I have question of how it will scale, with over 500 machines you have effectively used up all your bandwidth...

Per Hansson - Sunday, October 10, 2010 - link

I've been looking forward to this technology since I heard about it some time ago. Will be interesting to test how well it works with the CAD/CAM software I use, most of which is proprietary machine builder specific software...

There was no mention of OpenGL in this article but from what I've read that is what it is supposed to support (OpenGL rendering offload)

Atleast that's what like 100% of the CAD/CAM software out there use so it better be if MS wants it to be successful :)

Ryan Smith - Sunday, October 10, 2010 - link

Someone asked about OpenGL during the presentation and I'm kicking myself for not writing down the answer, but I seem to recall that OpenGL would not be supported. Don't hold me to that, though.

Per Hansson - Monday, October 11, 2010 - link

Well I hope OpenGL will be supported, otherwise this is pretty much a dead tech as far as enterprise industries are concerned.

This article has a reply by the author Brian Madden in the comments regarding support for OpenGL; http://www.brianmadden.com/blogs/brianmadden/archi...

"For support for apps that require OpenGL, they're supporting apps that use OpenGL v1.4 and below to work in the VM, but they don't expect that apps that use a higher version of OpenGL will work (unless of course they have a DirectX or CPU fallback mode)."

Sebec - Sunday, October 10, 2010 - link

Page 5 - "... and the two companies are current the titans of GPU computing in consumer applications."

Current the titans?

"Tom believes that ultimately the company will ultimately end up using..."