Everything You Always Wanted to Know About SDRAM (Memory): But Were Afraid to Ask

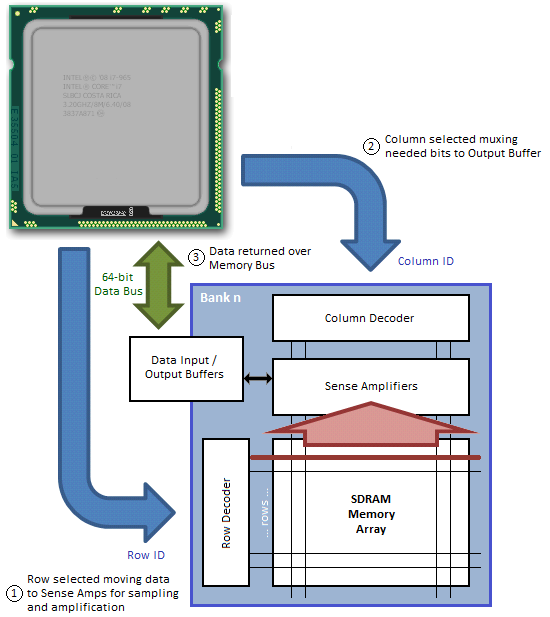

by Rajinder Gill on August 15, 2010 10:59 PM ESTThe process of moving data in and out of the Memory Array and over the Memory Bus is not overly complicated, although the massive parallelization of the actual effort can make it somewhat difficult to fully envision what's really happening without some pretty concise visual aids. We'll try our best to help you out in this regard.

Both read and write access to DDR[3] SDRAM is burst oriented; access starts at a selected location and continues in a pre-programmed sequence for a Burst Length (BL) of 8-bits, or 1 byte, per bank. This begins with the registration of an ACT command and is followed by one or more READ or WRI commands.

Chip Select (S0#, S1#), one for each rank, either enables (LOW) or disables (HIGH) the command decoder which works like a mask to ensure commands are acted upon by the desired rank only.

The length of the each Read Burst (tBurst) is always 4 clocks (4T) as DDR memory transmits data at twice the host clock rate (4 clocks x 2 transactions/clock = 8 transactions or 8 bits per bank).

The address bits registered coincident with the ACT command are used to select the bank and page (row) to be accessed. For our hypothetical 2GB DIMM described on Page 2 of this article, Bank Selects BA0-BA2 indicate the bank and Address Input Selects A0-A13 indicate the page. Three bits are needed to uniquely address all eight banks; likewise 14 bits are needed to address all 16,384 (214) pages.

The address bits registered coincident with the READ or WRI command are used to select the targeted starting column for the burst. A0-A09 select the column starting address (210 = 1,024). A12 is also sampled during this operation to determine if a Burst Chop (BC) of 4-bits has been commanded (A12 HIGH). Even though a Burst Chop delivers only half that data of a regular Read Burst, the time period to complete the transfer is still the same: 4T. The SDRAM core simply masks the outgoing data clock strobe for the second half of the full read cycle.

During a Precharge command A10 is sampled to determine whether the precharge is intended for one bank (A10 LOW; BA selects) or all banks (A10 HIGH).

Data Input/Output pins DQ0-DQ63 provide the 64-bit wide data interface between the memory controller embedded in the CPU and each DIMM. Those with a triple-channel capable CPU, like the Intel Core i7-series processor, will come to understand why the memory bus width is reported as 192-bit – three independently operated channels each with a 64-bit interface makes 192. Those of you running a Core 2 or a Core i3/i5 will have to make do with just two channels for a total bus width of 128 bits.

Each channel can be populated with up to two DIMMs. This means there could be a maximum of four ranks per channel, assuming we install a matched pair of dual-rank modules. Installing more than one DIMM per channel does not double the Memory Bus bandwidth, as modules co-located in the same channel must compete for access to a shared 64-bit sub-bus; however, adding more modules does have the added benefit of doubling the number of pages that may be open concurrently (twice the ranks for twice the fun!).

Figure 3 attempts to provide a top-down look at the minimum cycle needed to first open a page in memory, and then read data from the activated page; Figure 4 shows the same, only from a much more fundamental perspective; and Figure 5 provides a detailed accounting of the timing involved.

In this example we assume the bank has no open page, thus is already in the proper precharged state to support a new page access command. Step 1 selects the bank; Step 2 selects the column; and Step 3 bursts the data out over the Memory Bus. A 1-bit row address and a 2-bit column address are all we need to read any data stored in our 2 x 4-bit x 1 (bank) Memory Array.

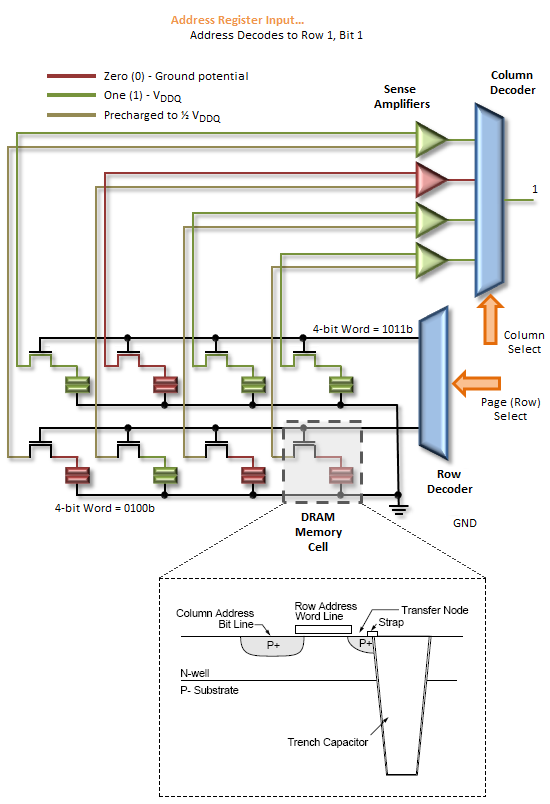

An Activate command prompts the routing of the specified page address to be accessed to the Row Decoder where it triggers the chosen Word Line to appear at the input of the Sense Amps. As previously stated, this takes a finite time - Row-Column (or Command) Delay (tRCD) is used to program the minimum wait time the memory controller allows for this to occur before it issues the next command in the sequence. Attempting to set too low a timing can lead to inconclusive operation, often resulting in data corruption and other data access issues that ultimately lead to system crashes and other application errors.

Next, the column address provided with the Read command selects the right Bit Line, beginning the process of disregarding those bits that were not addressed. The wait associated with these events is the CAS Latency (CL or tCAS).

The Sense Amps work by sensing the direction of the voltage swing induced on the sense line when the Word Line is activated. Activating the page gates-on the switching element holding back the accumulated charge in a trench filled with dielectric material used to create the capacitive storage element of the memory cell. When this happens the sense line, starting from VRefDQ (½ VDDQ), either swings positive or negative, depending on the potential of the sampled memory cell. An increase in voltage encodes a 1, while a decrease means 0.

The Sense Amps are not comparators. Rather, each Sense Amp interfaces with a pair of memory cells, reducing the total number of amplifiers needed to otherwise sense the entire array by a factor of two.

Following the read, any charge stored in the memory cells is obliterated. This is what is meant by a destructive read: not only do the Sense Amps cache the page for access, they now hold the only known copy of that page of memory! Precharging the bank will force the Sense Amps to "write" the page back to the array and will prepare the sense lines for the next page access by "precharging" them to ½ VDDQ. This accomplishes two thing: (1) It returns all sense rails to a known, consistent potential, and (2) it sets the pre-sense line voltage at exactly half the full-scale value of VDDQ, ensuring whatever the potential stored in the cell, there will be a swing in voltage when the proper Word Line is activated.

46 Comments

View All Comments

Dwebtron - Monday, August 16, 2010 - link

How did you know I was afraid to ask!!0ldman79 - Tuesday, May 28, 2019 - link

It's because we're all from the future.neslog - Monday, August 16, 2010 - link

Thank you for a great article on memory and you are right, I was afraid to ask.landerf - Monday, August 16, 2010 - link

I've found for the i7 platform the perfect ram setup is 1200 Mhz + cas5 or 6 timings, a 3:1 uncore ratio, and a B2B of 4. Not only does this perform well even in synthetics, it provides the "smoothest" intel experience. Something people who use amd and intel have been complaining about intel lacking. Check this chart and see how well that setup performs compared to all the conventional 2:1 setups. https://spreadsheets.google.com/ccc?key=0AsaXlcTga...Servando Silva - Monday, August 16, 2010 - link

Thanks for a great article. It will take me a while to read it carefully and fully understand it.Kris + Raju = Killer combo.

neslog - Monday, August 16, 2010 - link

On page 8 you may want to change the wording in the last paragraph " Once you've had...to cordially invite[d] (you) to do some..."

Thanks again for the article. I appreciate all the work that went into putting it together

elforeign - Monday, August 16, 2010 - link

It's a site willing to go the extra mile like this to report and educate the masses that are truly worth the time to peruse and read the posted articles. I check this site daily because there is always something interesting to read. Thank you to all the staff who do a great job here!chizow - Monday, August 16, 2010 - link

Just kidding....Or am I? :D

JarredWalton - Monday, August 16, 2010 - link

There's obviously benefits to either direction. Reducing latency is definitely a priority, but something not mentioned in the text that bears repeating is that latency is a factor of clock speed as well as the various timings. While CAS 6 will always be better than CAS 7 at the same base clock (and likewise for the other timings), if you have a faster memory speed CAS 7 could end up being better.So here's the scoop:

DDR3-1066 = 266MHz base clock, or 3.75ns per cycle.

DDR3-1333 = 333MHz base clock, or 3.00ns per cycle.

DDR3-1600 = 400MHz base clock, or 2.50ns per cycle.

DDR3-2000 = 500MHz base clock, or 2.00ns per cycle.

That gives this table in order of increasing latency, with rough pricing for 2x2GB. Based on pricing and latency, I've starred the best buys on Newegg:

CAS 6 DDR3-2000 = 12.0ns. ($180)

CAS 7 DDR3-2000 = 14.0ns. ($140)

CAS 6 DDR3-1600 = 15.0ns. ($115) ***

CAS 8 DDR3-2000 = 16.0ns. ($150)

CAS 7 DDR3-1600 = 17.5ns. ($101) ***

CAS 9 DDR3-2000 = 18.0ns. ($100) ***

CAS 6 DDR3-1333 = 18.0ns. ($100) ***

CAS 10 DDR3-2000 = 20.0ns. ($118)

CAS 8 DDR3-1600 = 20.0ns. ($85) ***

CAS 7 DDR3-1333 = 21.0ns. ($90)

CAS 9 DDR3-1600 = 22.5ns. ($92)

CAS 8 DDR3-1333 = 24.0ns. ($92)

CAS 7 DDR3-1066 = 26.3ns. ($80)

CAS 9 DDR3-1333 = 27.0ns. ($85)

CAS 8 DDR3-1066 = 30.0ns. ($93)

Notice how the total latency often comes in groups. The DDR3-1333 CL6, DDR3-1600 CL7, and DDR3-2000 CL9 are all priced around $100. If you buy any of these modules, there's a good chance (though YMMV) that you can tweak timings to run at whichever value makes you happiest. I'd probably err on the side of buying the higher speed rated modules, though, or at least grab the 1600MHz set.

Rick83 - Monday, August 16, 2010 - link

Your pricing comparison is sadly missing one important factor:Operating voltage.

I was at first surprised by the high cost of 1333/9, but I expect the voltage of that kit to be around 1.5, where most 1333/7 kits already clock in at 1.65.

The 2000/9 kit probably also runs higher V's than the identically priced 1333/6?

Lower voltages are usually preferred, as they give you a) more headroom and b) less heat at stock - with on-die controllers even less cpu heat.